Organizations need seamless access to their structured data repositories to power intelligent AI agents. However, when these resources span multiple AWS accounts, integration challenges can arise. This post explores a practical solution for connecting Amazon Bedrock agents to knowledge bases in Amazon Redshift clusters residing in different AWS accounts.

The challenge

Organizations that build AI agents using Amazon Bedrock can maintain their structured data in Amazon Redshift clusters. When these data repositories exist in separate AWS accounts from their AI agents, they face a significant limitation: Amazon Bedrock Knowledge Bases doesn’t natively support cross-account Redshift integration.

This creates a challenge for enterprises with multi-account architectures who want to:

- Leverage existing structured data in Redshift for their AI agents.

- Maintain separation of concerns across different AWS accounts.

- Avoid duplicating data across accounts.

- Ensure proper security and access controls.

Solution overview

Our solution enables cross-account knowledge base integration through a serverless architecture that maintains secure access controls while allowing AI agents to query structured data. The approach uses AWS Lambda as an intermediary to facilitate secure cross-account data access.

The action flow, as shown above, is as follows:

- Users enter their natural language question in Amazon Bedrock Agents, which is configured in the agent account.

- Amazon Bedrock Agents invokes a Lambda function through action groups, which provides access to the Amazon Bedrock knowledge base configured in the agent-kb account.

- The action group Lambda function running in the agent account assumes an IAM role created in the agent-kb account to connect to the knowledge base in the agent-kb account.

- The Amazon Bedrock knowledge base in the agent-kb account uses an IAM role created in the same account to access the Amazon Redshift data warehouse and query data in it.
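The flow above can be sketched as a hypothetical action group Lambda handler in Python. This is a minimal sketch, not the function shipped in the sample repository: the environment variable names (TARGET_ROLE_ARN, KNOWLEDGE_BASE_ID, KB_MODEL_ARN, KB_REGION) and the `question` parameter name are assumptions made for illustration, and it assumes the knowledge base is queried through the RetrieveAndGenerate API.

```python
import json
import os


def build_kb_request(question, knowledge_base_id, model_arn):
    """Build the keyword arguments for a RetrieveAndGenerate call."""
    return {
        "input": {"text": question},
        "retrieveAndGenerateConfiguration": {
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": knowledge_base_id,
                "modelArn": model_arn,
            },
        },
    }


def extract_question(event):
    """Read the user's question from the action group event.

    Assumes a parameter named 'question' (hypothetical); falls back to the
    raw text the user typed into the agent.
    """
    for param in event.get("parameters", []):
        if param.get("name") == "question":
            return param.get("value", "")
    return event.get("inputText", "")


def build_agent_response(event, body, status_code=200):
    """Wrap a result in the response shape an OpenAPI-based action group expects."""
    return {
        "messageVersion": "1.0",
        "response": {
            "actionGroup": event.get("actionGroup"),
            "apiPath": event.get("apiPath"),
            "httpMethod": event.get("httpMethod"),
            "httpStatusCode": status_code,
            "responseBody": {"application/json": {"body": json.dumps(body)}},
        },
    }


def lambda_handler(event, context):
    # Imported here so the helpers above can be exercised without the AWS SDK.
    import boto3

    # Step 1: assume the role that lives in the agent-kb account.
    creds = boto3.client("sts").assume_role(
        RoleArn=os.environ["TARGET_ROLE_ARN"],  # assumed variable name
        RoleSessionName="bedrock-kb-cross-account",
    )["Credentials"]

    # Step 2: call the knowledge base with the temporary credentials.
    kb_client = boto3.client(
        "bedrock-agent-runtime",
        region_name=os.environ.get("KB_REGION", "us-west-2"),
        aws_access_key_id=creds["AccessKeyId"],
        aws_secret_access_key=creds["SecretAccessKey"],
        aws_session_token=creds["SessionToken"],
    )
    request = build_kb_request(
        extract_question(event),
        os.environ["KNOWLEDGE_BASE_ID"],  # assumed variable name
        os.environ["KB_MODEL_ARN"],  # e.g. a foundation-model ARN in us-west-2
    )
    result = kb_client.retrieve_and_generate(**request)

    # Step 3: hand the answer back to the agent.
    return build_agent_response(event, {"answer": result["output"]["text"]})
```

Keeping the request and response builders as plain functions makes the cross-account part (the `assume_role` call) the only piece that needs real AWS credentials to exercise.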

The solution consists of these key components:

- Amazon Bedrock agent in the agent account that handles user interactions.

- Amazon Redshift Serverless workgroup in a VPC and private subnet in the agent-kb account containing structured data.

- Amazon Bedrock knowledge base using the Amazon Redshift Serverless workgroup as its structured data source.

- Lambda function in the agent account.

- Action group configuration to connect the agent in the agent account to the Lambda function.

- IAM roles and policies that enable secure cross-account access.
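The cross-account piece of these components rests on two IAM documents: a trust policy on the role in the agent-kb account, and an identity policy on the Lambda role in the agent account. The following Python sketch builds both as plain dictionaries. The role names and example account numbers are the ones used later in this post; the exact policies created by the setup script may contain more statements (for example, the Bedrock permissions attached to the knowledge base access role, which are not shown here).

```python
import json


def role_arn(account_id, role_name):
    """Return the IAM role ARN for a 12-digit account ID and a role name."""
    if not (account_id.isdigit() and len(account_id) == 12):
        raise ValueError(f"expected a 12-digit AWS account ID, got {account_id!r}")
    return f"arn:aws:iam::{account_id}:role/{role_name}"


def kb_role_trust_policy(agent_account, lambda_role_name):
    """Trust policy for the role in the agent-kb account.

    It lets the Lambda execution role in the agent account assume this role.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": role_arn(agent_account, lambda_role_name)},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def lambda_assume_role_policy(agent_kb_account, kb_role_name):
    """Identity policy for the Lambda role in the agent account.

    It permits assuming the knowledge base access role in the agent-kb account.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "sts:AssumeRole",
                "Resource": role_arn(agent_kb_account, kb_role_name),
            }
        ],
    }


if __name__ == "__main__":
    # Example account numbers from this post; replace with your own.
    trust = kb_role_trust_policy("111122223333", "lambda_bedrock_kb_query_role")
    allow = lambda_assume_role_policy("999999999999", "bedrock_kb_access_role")
    print(json.dumps(trust, indent=2))
    print(json.dumps(allow, indent=2))
```

Both halves are needed: the trust policy alone does not let the Lambda role call sts:AssumeRole, and the identity policy alone does not make the target role trust the caller.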

Prerequisites

This solution requires you to have the following:

- Two AWS accounts. Create an AWS account if you don’t have one. The specific permissions required for both accounts will be set up in subsequent steps.

- Install the AWS CLI (version 2.24.22 at the time of writing).

- Set up authentication using IAM user credentials for the AWS CLI for each account.

- Make sure you have jq installed. jq is a lightweight command-line JSON processor. For example, on Mac you can use the command brew install jq (jq-1.7.1-apple at the time of writing) to install it.

- Navigate to the Amazon Bedrock console and make sure you enable access to the meta.llama3-1-70b-instruct-v1:0 model for the agent-kb account and to the us.amazon.nova-pro-v1:0 model in the agent account, in the US West (Oregon) us-west-2 AWS Region.

Assumption

Let’s call the AWS account profile that has the Amazon Bedrock agent the agent profile. Similarly, the AWS account profile that has the Amazon Bedrock knowledge base with Amazon Redshift Serverless and the structured data source is called agent-kb. We will use the US West (Oregon) us-west-2 AWS Region, but feel free to choose another AWS Region as necessary (the prerequisites will be applicable to the AWS Region you choose to deploy this solution in). We will use the meta.llama3-1-70b-instruct-v1:0 model for the agent-kb account. This is an available on-demand model in us-west-2. You are free to choose other models with cross-Region inference, but that would mean changing the roles and policies accordingly and enabling model access in all Regions they are available in. With the model choice used in this post, the AWS Region must be us-west-2. For the agent we will be using an Amazon Bedrock agent optimized model such as us.amazon.nova-pro-v1:0.

Implementation walkthrough

The following is a step-by-step implementation guide. Make sure to perform all steps in the same AWS Region in both accounts.

These steps deploy and test an end-to-end solution from scratch. If you are already running some of these components, you may skip the corresponding steps.

- Make a note of the AWS account numbers of the agent and agent-kb accounts. The implementation steps refer to them as follows:

| Profile | AWS account | Description |
| --- | --- | --- |
| agent | 111122223333 | Account for the Bedrock agent |
| agent-kb | 999999999999 | Account for the Bedrock knowledge base |

Note: These steps use example profile names and account numbers. Replace them with your actual values before running.

- Create the Amazon Redshift Serverless workgroup in the agent-kb account:

- Log on to the agent-kb account.

- Follow the workshop link to create the Amazon Redshift Serverless workgroup in a private subnet.

- Make a note of the namespace, workgroup, and other details, and follow the rest of the hands-on workshop instructions.

- Set up your data warehouse in the agent-kb account.

- Create your AI knowledge base in the agent-kb account. Make a note of the knowledge base ID.

- Train your AI Assistant in the agent-kb account.

- Test natural language queries in the agent-kb account. You can find the code in the aws-samples Git repository: sample-for-amazon-bedrock-agent-connect-cross-account-kb.

- Create the necessary roles and policies in both accounts. Run the script create_bedrock_agent_kb_roles_policies.sh with the following input parameters.

| Input parameter | Value | Description |
| --- | --- | --- |
| --agent-kb-profile | agent-kb | The agent knowledge base profile that you set up with the AWS CLI with aws_access_key_id and aws_secret_access_key, as mentioned in the prerequisites. |
| --lambda-role | lambda_bedrock_kb_query_role | The IAM role in the agent account that the Bedrock agent action group Lambda function uses to connect to Redshift across accounts. |
| --kb-access-role | bedrock_kb_access_role | The IAM role in the agent-kb account that lambda_bedrock_kb_query_role in the agent account assumes to connect to Redshift across accounts. |
| --kb-access-policy | bedrock_kb_access_policy | IAM policy attached to the IAM role bedrock_kb_access_role. |
| --lambda-policy | lambda_bedrock_kb_query_policy | IAM policy attached to the IAM role lambda_bedrock_kb_query_role. |
| --knowledge-base-id | XXXXXXXXXX | Replace with the actual knowledge base ID you noted when creating the knowledge base (Step 4). |
| --agent-account | 111122223333 | Replace with the 12-digit AWS account number where the Bedrock agent is running (agent account). |
| --agent-kb-account | 999999999999 | Replace with the 12-digit AWS account number where the Bedrock knowledge base is running (agent-kb account). |

- Download the script (create_bedrock_agent_kb_roles_policies.sh) from the aws-samples GitHub repository.

- Open Terminal on Mac or a similar bash shell on other platforms.

- Locate and change to the download directory, and give the script executable permissions:

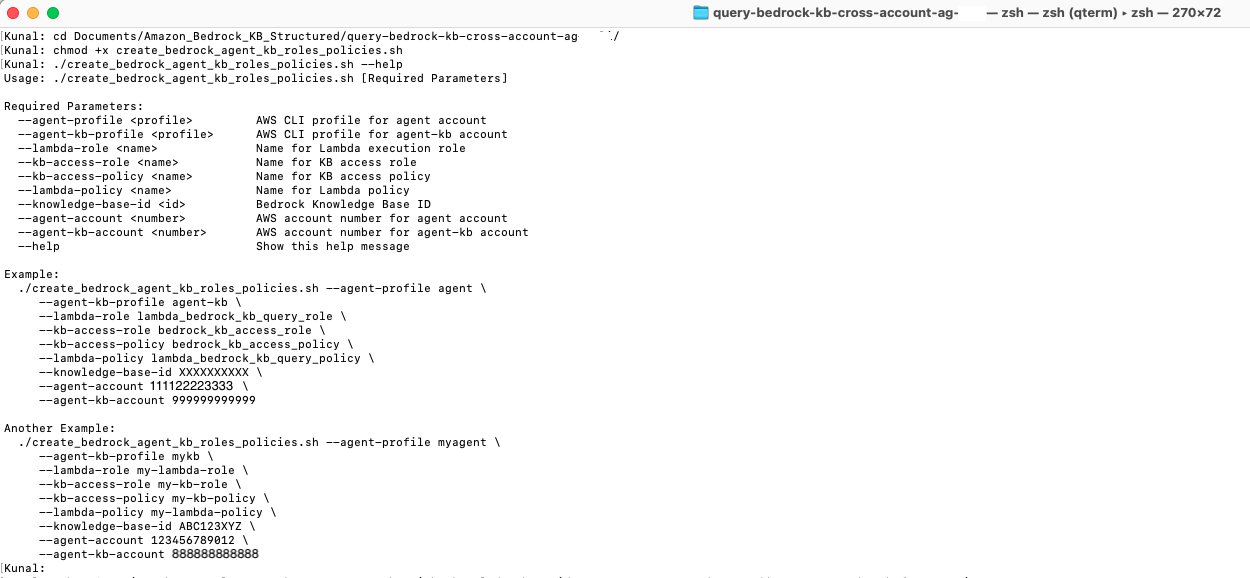

- If you are still not clear on the script usage or inputs, run the script with the --help option and it will display the usage:

./create_bedrock_agent_kb_roles_policies.sh --help

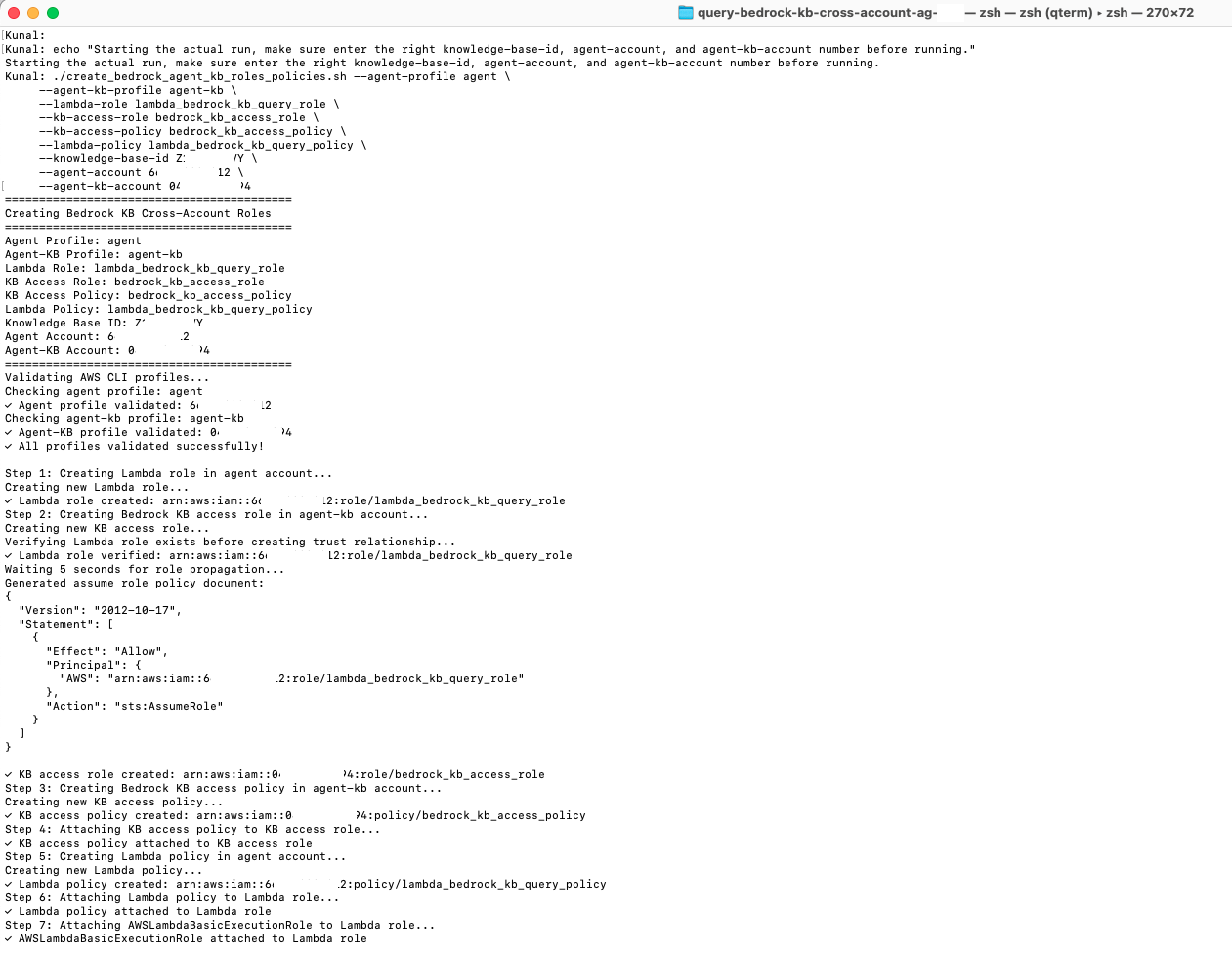

- Run the script with the correct input parameters as described in the previous table.

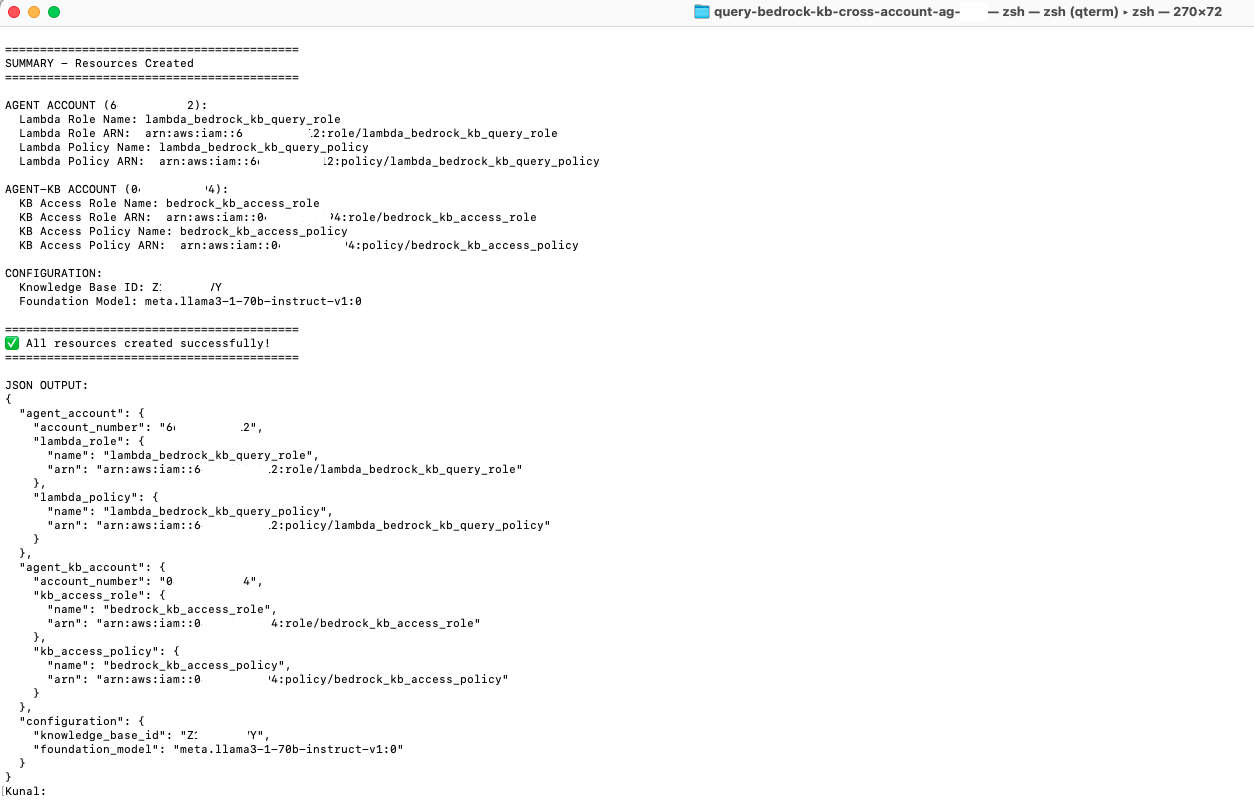

- On successful execution, the script shows a summary of the IAM roles and policies created in both accounts.

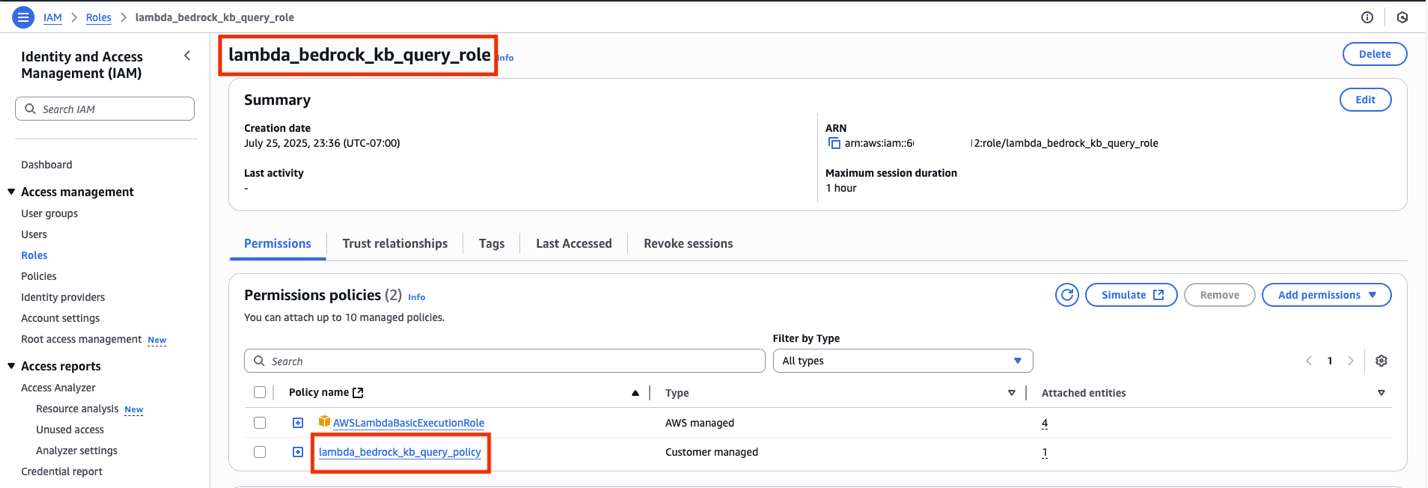

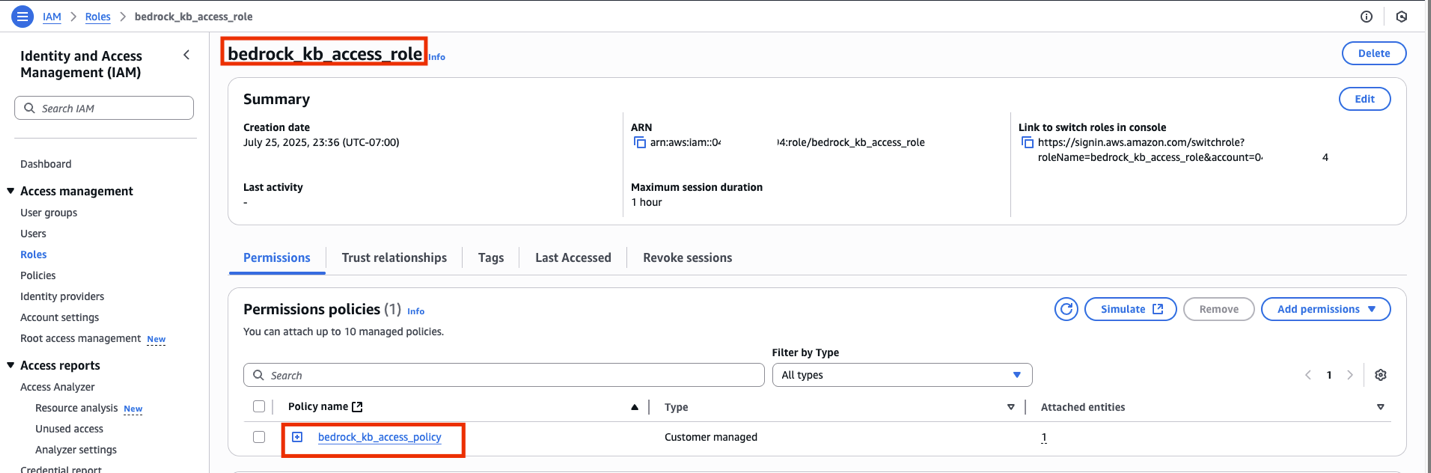

- Log on to both the agent and agent-kb accounts to verify that the IAM roles and policies were created.

- For the agent account: Make a note of the ARN of the lambda_bedrock_kb_query_role, as that will be the value of the CloudFormation stack parameter AgentLambdaExecutionRoleArn in the next step.

- For the agent-kb account: Make a note of the ARN of the bedrock_kb_access_role, as that will be the value of the CloudFormation stack parameter TargetRoleArn in the next step.

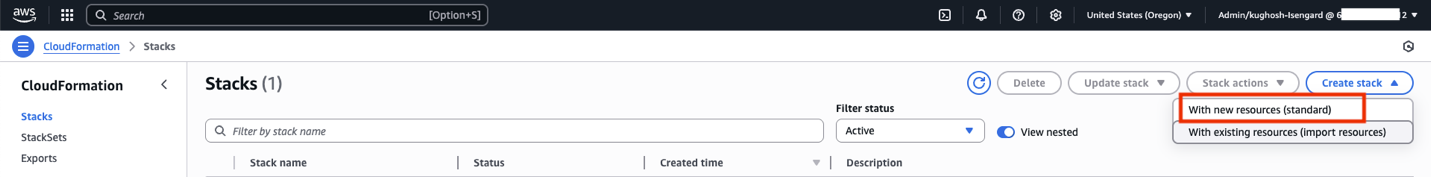

- Run the AWS CloudFormation script to create a Bedrock agent:

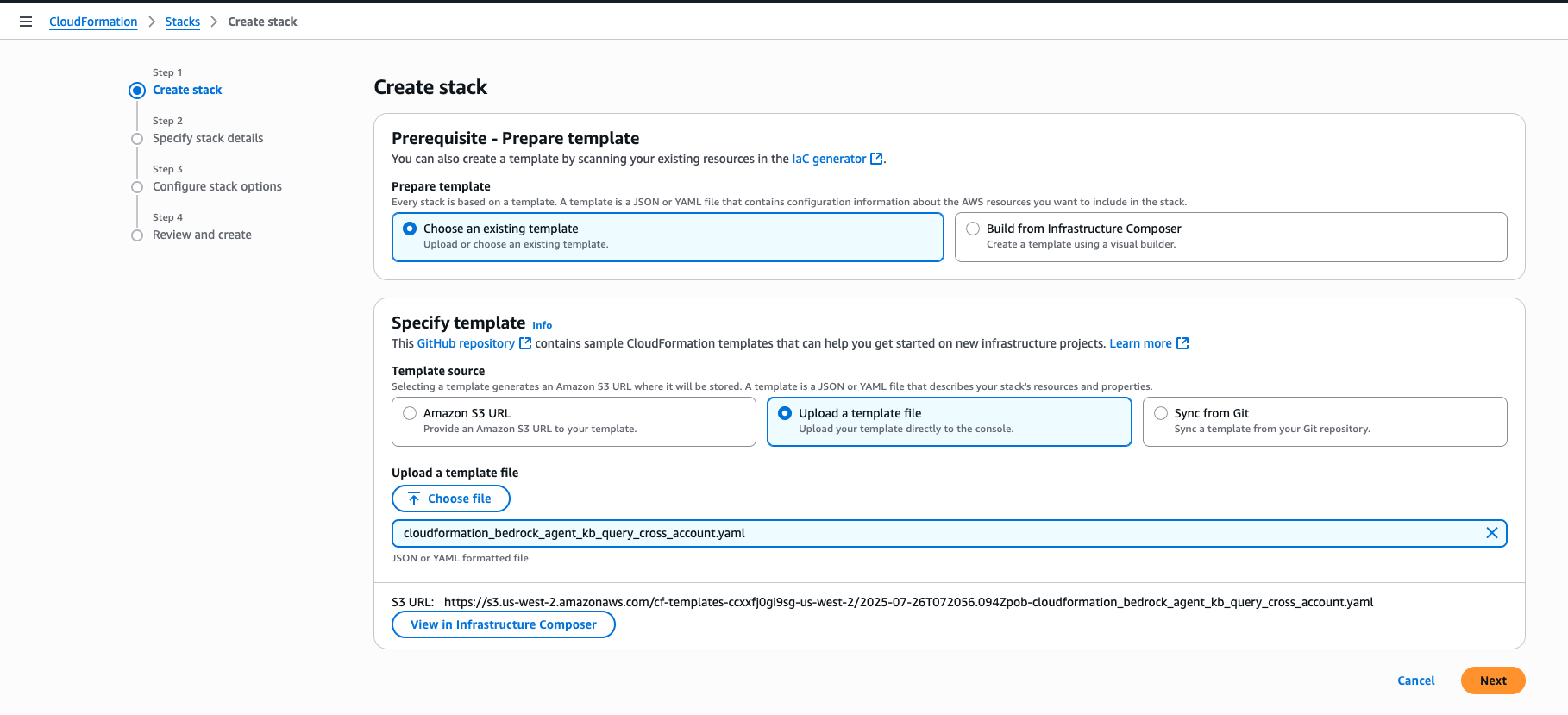

- Download the CloudFormation script cloudformation_bedrock_agent_kb_query_cross_account.yaml from the aws-samples GitHub repository.

- Log on to the agent account, navigate to the CloudFormation console, and verify you are in the us-west-2 (Oregon) Region. Choose Create stack and choose With new resources (standard).

- In the Specify template section, choose Upload a template file, then Choose file, and select the template file you downloaded earlier. Then, choose Next.

- Enter the following stack details and choose Next.

| Parameter | Value | Description |
| --- | --- | --- |
| Stack name | bedrock-agent-connect-kb-cross-account-agent | You can choose any name |
| AgentFoundationModelId | us.amazon.nova-pro-v1:0 | Do not change |
| AgentLambdaExecutionRoleArn | arn:aws:iam::111122223333:role/lambda_bedrock_kb_query_role | Replace with your agent account number |
| BedrockAgentDescription | Agent to query inventory data from Redshift Serverless database | Keep this as default |
| BedrockAgentInstructions | You are an assistant that helps users query inventory data from our Redshift Serverless database using the action group. | Do not change |
| BedrockAgentName | bedrock_kb_query_cross_account | Keep this as default |
| KBFoundationModelId | meta.llama3-1-70b-instruct-v1:0 | Do not change |
| KnowledgeBaseId | XXXXXXXXXX | Knowledge base ID from Step 4 |
| TargetRoleArn | arn:aws:iam::999999999999:role/bedrock_kb_access_role | Replace with your agent-kb account number |

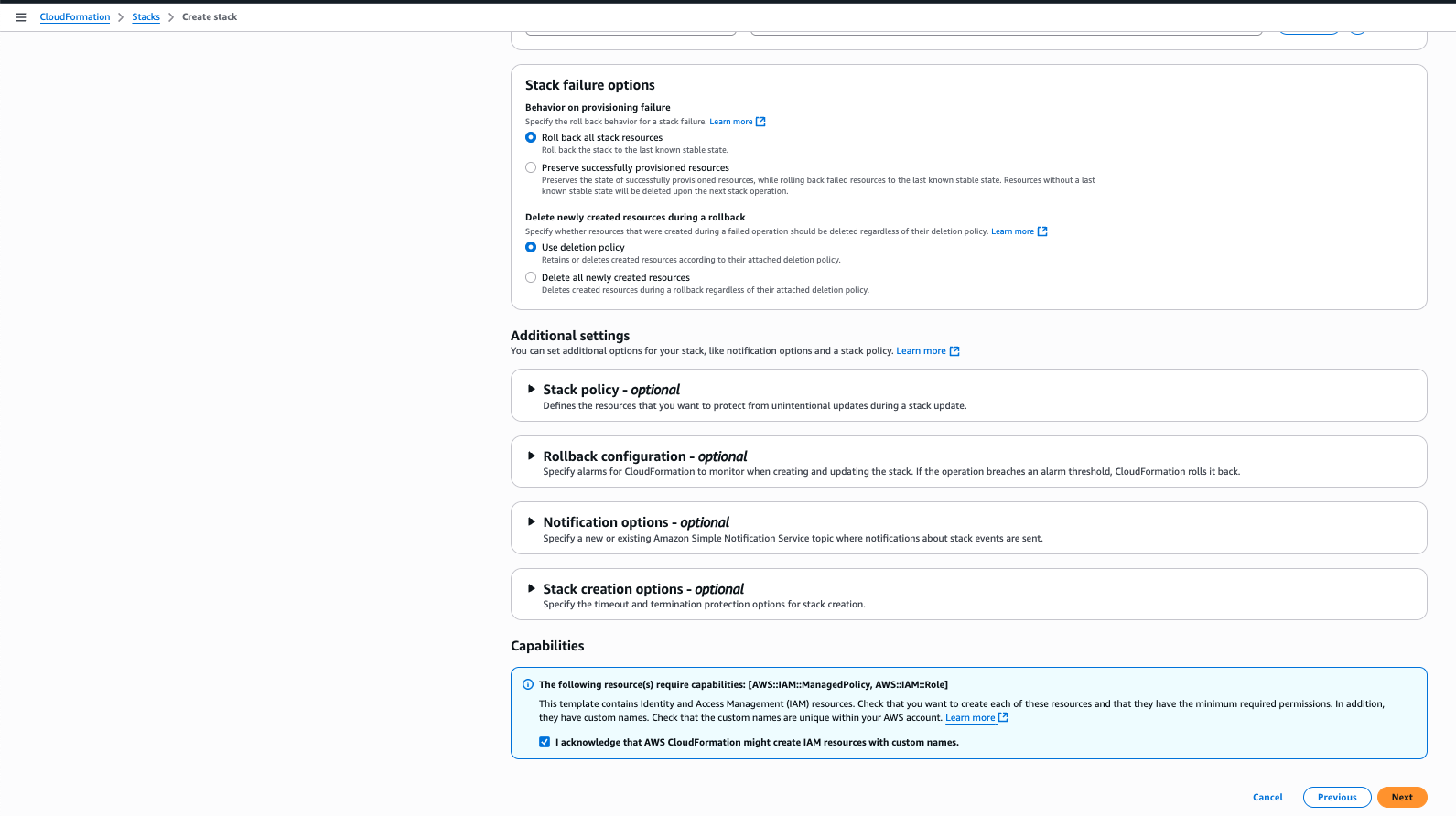

- Complete the acknowledgement and choose Next.

- Scroll down through the page and choose Submit.

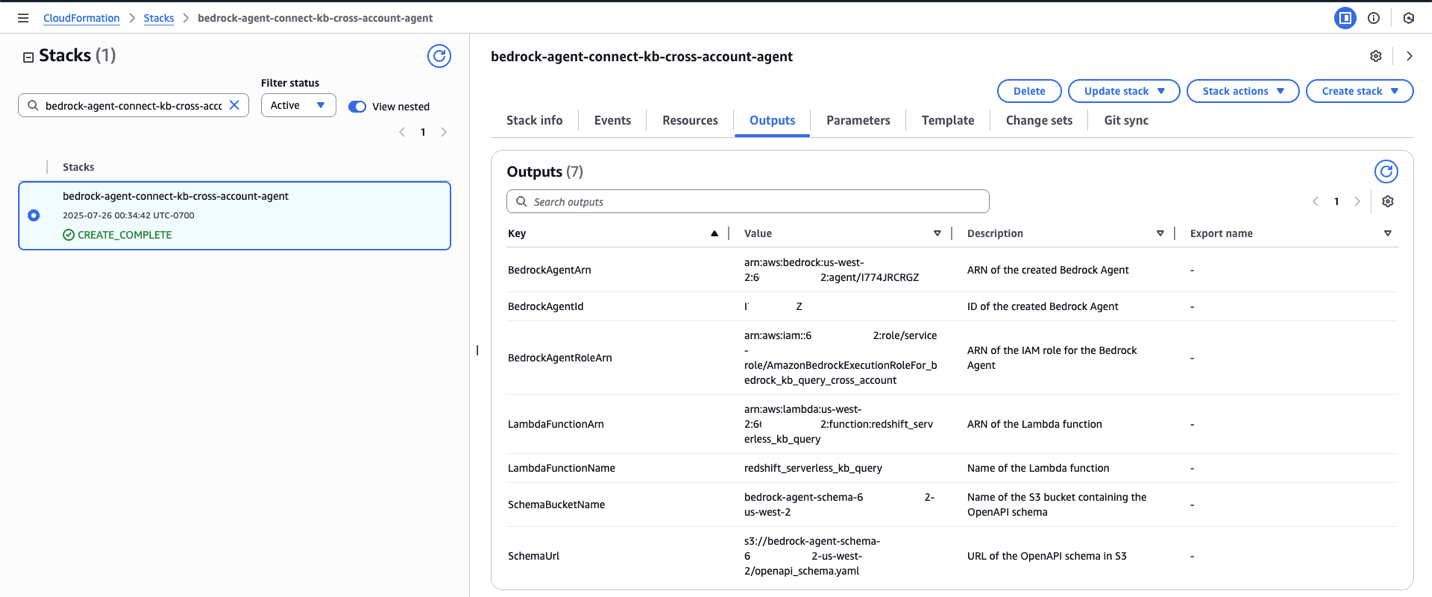

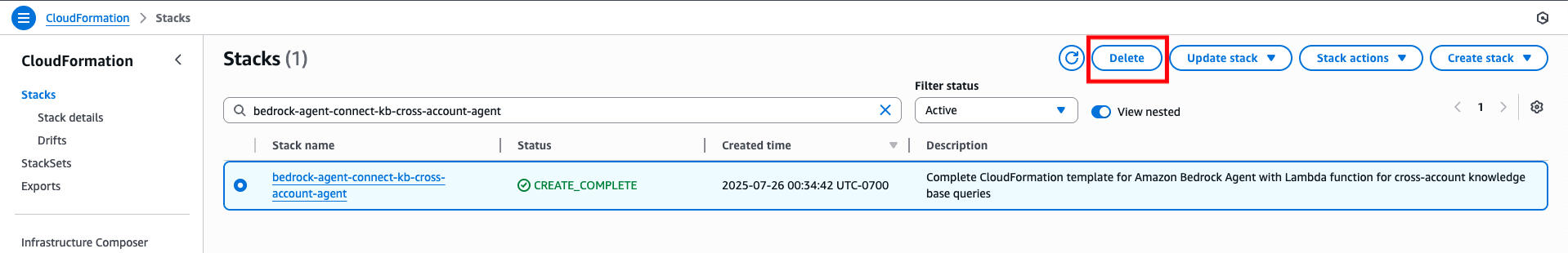

- You will see the CloudFormation stack being created, as shown by the status CREATE_IN_PROGRESS.

- It will take a few minutes, and you will see the status change to CREATE_COMPLETE, indicating that all resources were created. Choose the Outputs tab to make a note of the resources that were created.

In summary, the CloudFormation script does the following in the agent account:

- Creates a Bedrock agent

- Creates an action group

- Creates a Lambda function, which is invoked by the Bedrock action group

- Defines the OpenAPI schema

- Creates the necessary roles and permissions for the Bedrock agent

- Finally, prepares the Bedrock agent so that it is ready to test.
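If you prefer to script the deployment instead of using the console, the same stack can be created with boto3. The following is a minimal sketch under a few assumptions: the AWS CLI profile is named agent, the template is small enough to pass inline as TemplateBody, and CAPABILITY_NAMED_IAM is sufficient for the acknowledgement the console asks for. The helper names are hypothetical; the parameter keys are the ones listed in the stack details table.

```python
def to_cfn_parameters(values):
    """Convert a {name: value} mapping into CloudFormation's Parameters list."""
    return [{"ParameterKey": key, "ParameterValue": value} for key, value in values.items()]


def outputs_to_dict(stack):
    """Flatten the Outputs of a describe_stacks entry into {key: value}."""
    return {out["OutputKey"]: out["OutputValue"] for out in stack.get("Outputs", [])}


def deploy_agent_stack(template_path, stack_name, values, profile="agent", region="us-west-2"):
    """Create the stack, wait for CREATE_COMPLETE, and return its outputs."""
    # Imported here so the helpers above can be used without the AWS SDK.
    import boto3

    session = boto3.Session(profile_name=profile, region_name=region)
    cfn = session.client("cloudformation")

    with open(template_path) as handle:
        template_body = handle.read()

    cfn.create_stack(
        StackName=stack_name,
        TemplateBody=template_body,
        Parameters=to_cfn_parameters(values),
        Capabilities=["CAPABILITY_NAMED_IAM"],  # assumption: template creates named IAM resources
    )
    # Blocks until the stack reaches CREATE_COMPLETE (raises if creation fails).
    cfn.get_waiter("stack_create_complete").wait(StackName=stack_name)

    stack = cfn.describe_stacks(StackName=stack_name)["Stacks"][0]
    return outputs_to_dict(stack)


if __name__ == "__main__":
    # Example values from this post; replace account numbers and the knowledge base ID.
    params = {
        "AgentFoundationModelId": "us.amazon.nova-pro-v1:0",
        "AgentLambdaExecutionRoleArn": "arn:aws:iam::111122223333:role/lambda_bedrock_kb_query_role",
        "KBFoundationModelId": "meta.llama3-1-70b-instruct-v1:0",
        "KnowledgeBaseId": "XXXXXXXXXX",
        "TargetRoleArn": "arn:aws:iam::999999999999:role/bedrock_kb_access_role",
    }
    print(to_cfn_parameters(params))
```

Parameters omitted from the mapping (such as BedrockAgentName) fall back to the template defaults, which matches the "Keep this as default" guidance in the table.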


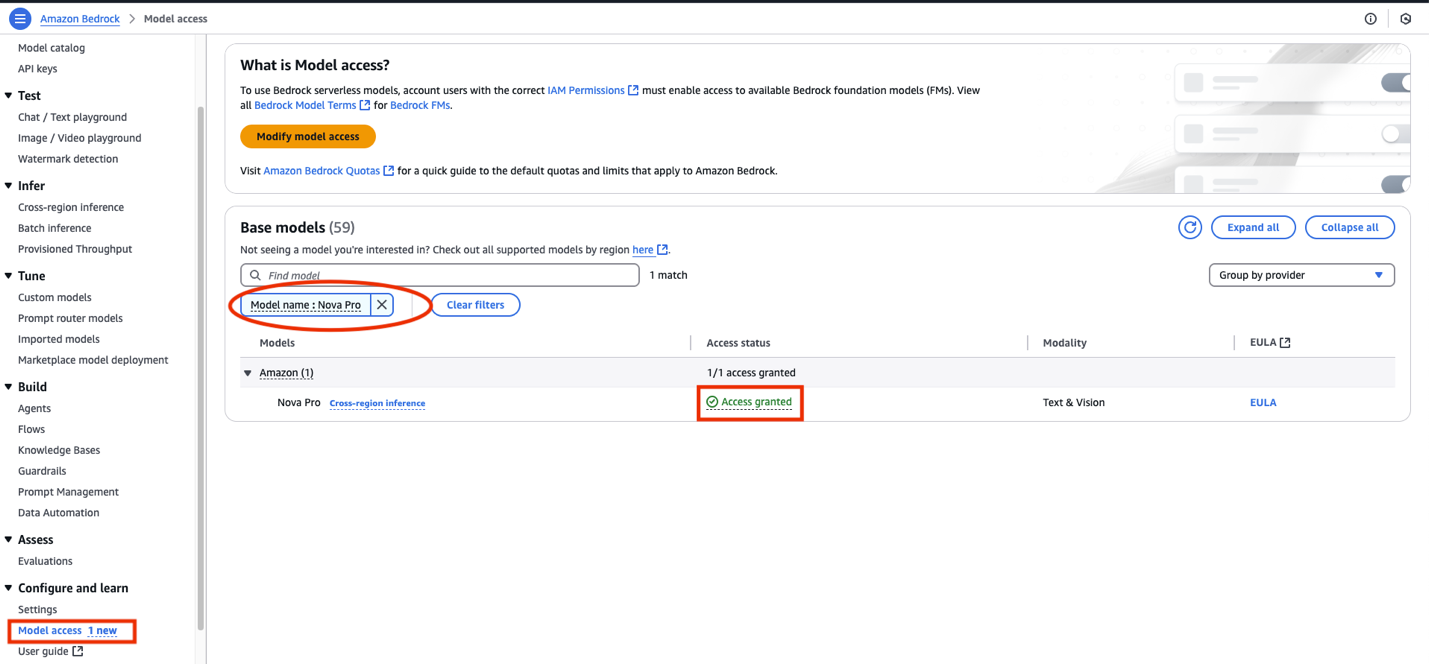

- Check for model access in Oregon (us-west-2):

- Verify Nova Pro (us.amazon.nova-pro-v1:0) model access in the agent account. Navigate to the Amazon Bedrock console and choose Model access under Configure and learn. Search for Model name: Nova Pro to verify access. If access is not enabled, enable model access.

- Verify access to the meta.llama3-1-70b-instruct-v1:0 model in the agent-kb account. This should already be enabled, because we set up the knowledge base earlier.

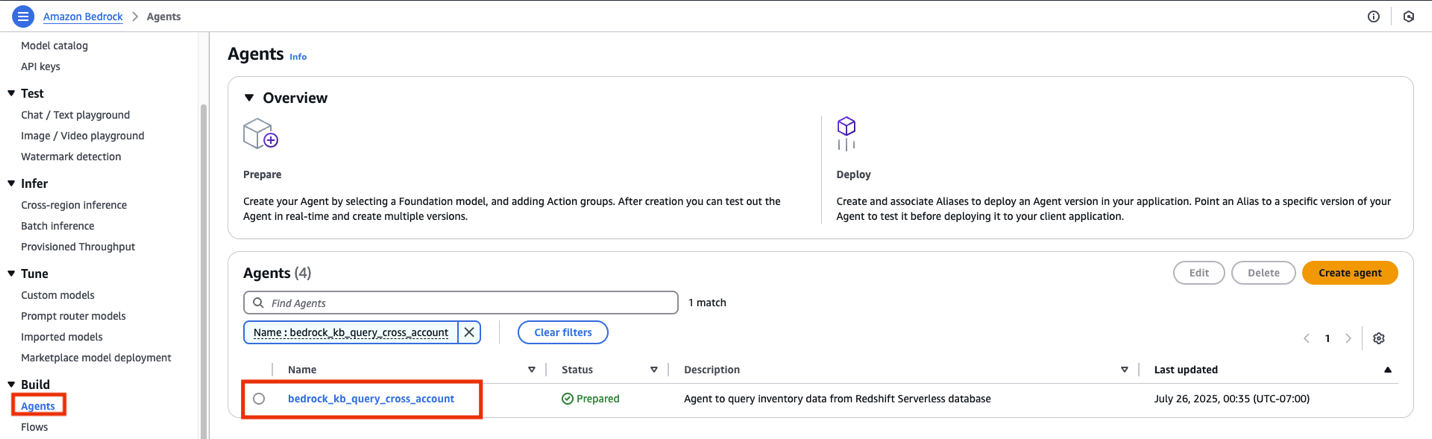

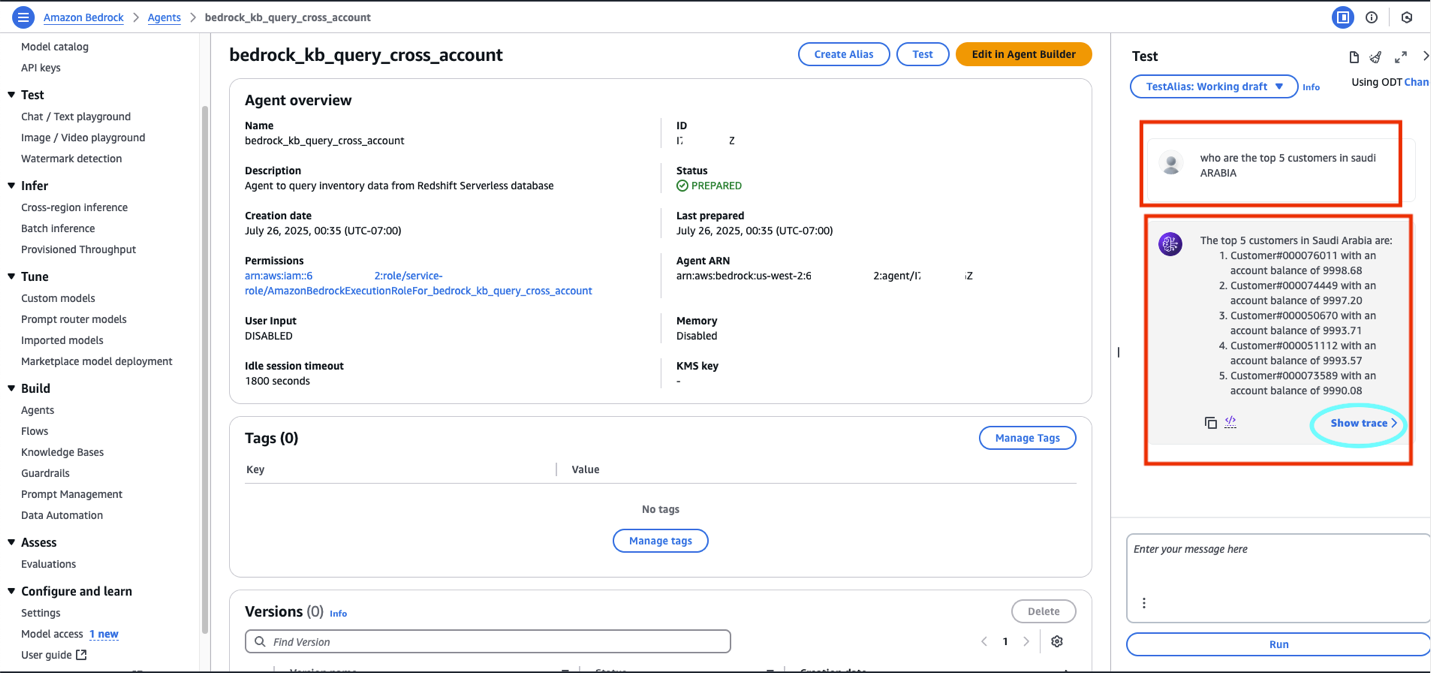

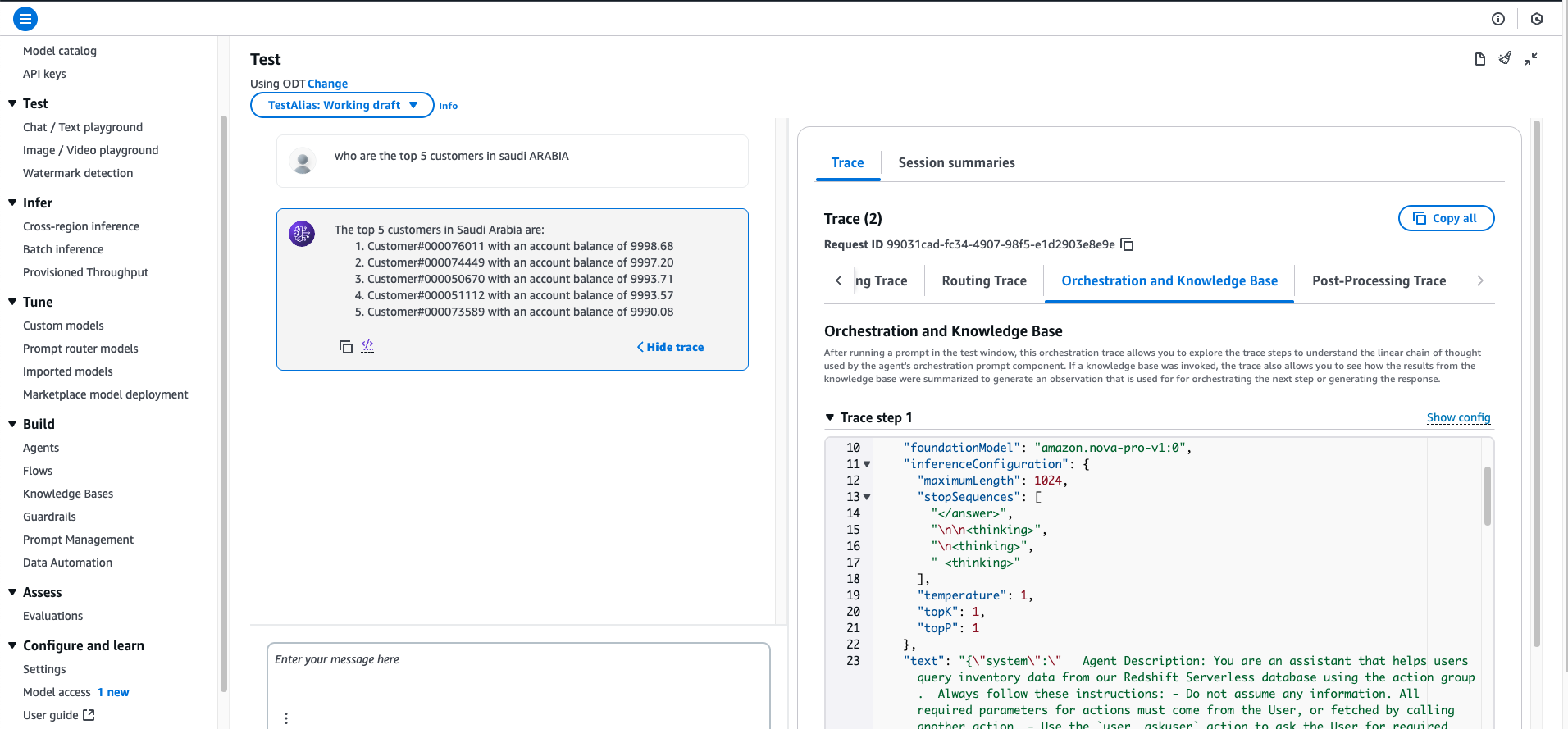

- Run the agent. Log on to the agent account. Navigate to the Amazon Bedrock console and choose Agents under Build.

- Choose the name of the agent and choose Test. You can test the following questions, as mentioned in the workshop’s Stage 4: Test Natural Language Queries page. For example:

- Who are the top 5 customers in Saudi Arabia?

- Who are the top parts suppliers in the United States by volume?

- What is the total revenue by region for the year 1998?

- Which products have the highest profit margins?

- Show me orders with the highest priority from the last quarter of 1997.

- Choose Show trace to investigate the agent traces.
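The same test can be run outside the console with the InvokeAgent API. The sketch below is illustrative only: the agent ID and alias ID are placeholders you would copy from the Amazon Bedrock console, the profile name agent is the one assumed throughout this post, and the helper names are hypothetical. Setting enableTrace to True returns trace events alongside the answer, similar to what Show trace displays.

```python
import uuid


def collect_completion(event_stream):
    """Join the answer chunks and gather trace events from an InvokeAgent stream."""
    parts = []
    traces = []
    for event in event_stream:
        if "chunk" in event:
            # Each chunk carries a piece of the answer as UTF-8 bytes.
            parts.append(event["chunk"]["bytes"].decode("utf-8"))
        elif "trace" in event:
            traces.append(event["trace"])
    return "".join(parts), traces


def ask_agent(question, agent_id, agent_alias_id, profile="agent", region="us-west-2"):
    """Send one question to the agent and return (answer_text, trace_events)."""
    # Imported here so collect_completion can be used without the AWS SDK.
    import boto3

    session = boto3.Session(profile_name=profile, region_name=region)
    client = session.client("bedrock-agent-runtime")
    response = client.invoke_agent(
        agentId=agent_id,  # placeholder: copy from the console
        agentAliasId=agent_alias_id,  # placeholder: copy from the console
        sessionId=str(uuid.uuid4()),  # a fresh session for each question
        inputText=question,
        enableTrace=True,
    )
    return collect_completion(response["completion"])
```

For example, `ask_agent("What is the total revenue by region for the year 1998?", agent_id, agent_alias_id)` would send one of the workshop questions through the agent, the action group Lambda function, and the cross-account knowledge base.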

Some recommended best practices:

- Phrase your question to be more specific

- Use terminology that matches your table descriptions

- Try questions similar to your curated examples

- Verify your question relates to data that exists in the TPCH dataset

- Use Amazon Bedrock Guardrails to add configurable safeguards to questions and responses.

Clean up resources

We recommend that you clean up any resources you no longer need to avoid unnecessary charges:

- Navigate to the CloudFormation console for the agent and agent-kb accounts, search for the stack, and choose Delete.

- S3 buckets need to be deleted separately.

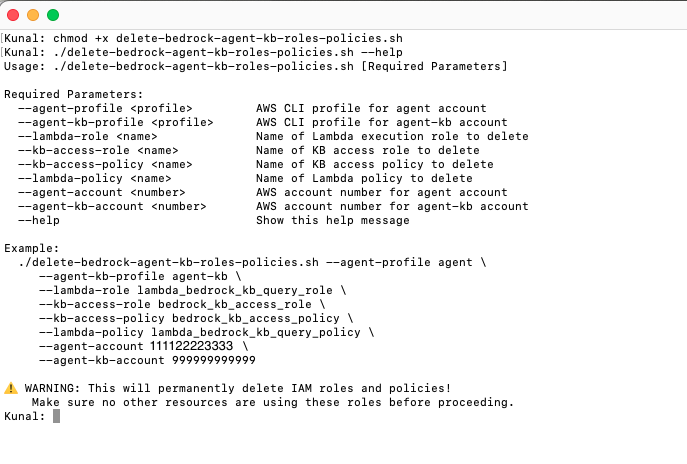

- To delete the roles and policies created in both accounts, download the script delete-bedrock-agent-kb-roles-policies.sh from the aws-samples GitHub repository.

- Open Terminal on Mac or a similar bash shell on other platforms.

- Locate and change to the download directory, and give the script executable permissions.

- If you are still not clear on the script usage or inputs, run the script with the --help option and it will display the usage:

./delete-bedrock-agent-kb-roles-policies.sh --help

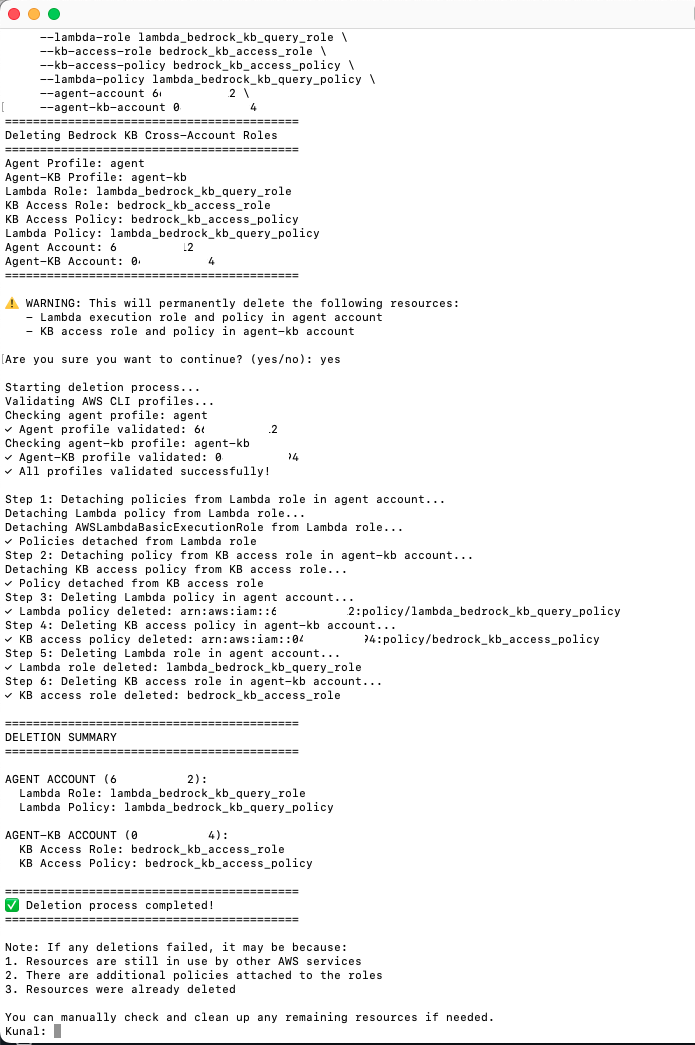

- Run the script delete-bedrock-agent-kb-roles-policies.sh with the same values for the same input parameters that you used when running the create_bedrock_agent_kb_roles_policies.sh script in Step 7. Note: Enter the correct account numbers for agent-account and agent-kb-account before running. The script will ask for confirmation; enter yes and press Enter.

Summary

This solution demonstrates how the Amazon Bedrock agent in the agent account can query the Amazon Bedrock knowledge base in the agent-kb account.

Conclusion

This solution uses Amazon Bedrock Knowledge Bases for structured data to create a more integrated approach to cross-account data access. The knowledge base in the agent-kb account connects directly to Amazon Redshift Serverless in a private VPC. The Amazon Bedrock agent in the agent account invokes an AWS Lambda function as part of its action group to make a cross-account connection and retrieve the response from the structured knowledge base.

This architecture offers several advantages:

- Uses Amazon Bedrock Knowledge Bases capabilities for structured data

- Provides a more seamless integration between the agent and the data source

- Maintains proper security boundaries between accounts

- Reduces the complexity of direct database access code

As Amazon Bedrock continues to evolve, you can take advantage of future enhancements to knowledge base functionality while maintaining your multi-account architecture.

About the Authors

Kunal Ghosh is an expert in AWS technologies. He is passionate about building efficient and effective solutions on AWS, especially involving generative AI, analytics, data science, and machine learning. Besides family time, he likes reading, swimming, biking, and watching movies, and he is a foodie.

Arghya Banerjee is a Sr. Solutions Architect at AWS in the San Francisco Bay Area, focused on helping customers adopt and use the AWS Cloud. He is focused on big data, data lakes, streaming and batch analytics services, and generative AI technologies.

Indranil Banerjee is a Sr. Solutions Architect at AWS in the San Francisco Bay Area, focused on helping customers in the hi-tech and semiconductor sectors solve complex business problems using the AWS Cloud. His special interests are in the areas of legacy modernization and migration, building analytics platforms, and helping customers adopt cutting-edge technologies such as generative AI.

Vinayak Datar is a Sr. Solutions Manager based in the Bay Area, helping enterprise customers accelerate their AWS Cloud journey. He focuses on helping customers convert ideas from concepts to working prototypes to production using AWS generative AI services.