Generative AI has created unprecedented opportunities for Canadian organizations to transform their operations and customer experiences. We’re excited to announce that customers in Canada can now access advanced foundation models, including Anthropic’s Claude Sonnet 4.5 and Claude Haiku 4.5, on Amazon Bedrock through cross-Region inference (CRIS).

This post explores how Canadian organizations can use cross-Region inference profiles from the Canada (Central) Region to access the latest foundation models and accelerate their AI initiatives. We demonstrate how to get started with these new capabilities, provide guidance for migrating from older models, and share recommended practices for quota management.

Canadian cross-Region inference: Your gateway to global AI innovation

To help customers scale their generative AI applications, Amazon Bedrock offers cross-Region inference (CRIS) profiles, a feature that distributes inference processing across multiple AWS Regions. This gives you higher throughput when building at scale and helps keep your generative AI applications responsive and reliable even under heavy load.

Amazon Bedrock provides two types of cross-Region inference profiles:

- Geographic CRIS: Amazon Bedrock automatically selects the optimal commercial Region within a defined geography (for example, the US) to process your inference request.

- Global CRIS: Global CRIS extends cross-Region inference by routing inference requests to supported commercial Regions worldwide, making better use of available resources and enabling higher model throughput.

Cross-Region inference operates over the secure AWS network, with end-to-end encryption for data both in transit and at rest. When a customer submits an inference request from the Canada (Central) Region, CRIS routes the request to one of the destination Regions configured for the inference profile (US or Global).

The key distinction is that inference processing (the transient computation) might occur in another Region, but all data at rest stays exclusively within the Canada (Central) Region. This includes logs, knowledge bases, and any stored configurations. The inference request travels over the AWS Global Network, never traversing the public internet, and responses are returned encrypted to your application in Canada.

Cross-Region inference configuration for Canada

With CRIS, Canadian organizations gain earlier access to foundation models, including cutting-edge models like Claude Sonnet 4.5 with its enhanced reasoning capabilities, providing a faster path to innovation. CRIS also improves capacity and performance by drawing on capacity across multiple Regions. This provides three benefits:

- Higher throughput during peak periods such as tax season, Black Friday, and holiday shopping.
- Automatic burst handling without manual intervention.
- Greater resiliency, because requests are served from a larger pool of resources.

Canadian customers can choose between two inference profile types based on their requirements:

| CRIS profile | Source Region | Destination Regions | Description |
|---|---|---|---|
| US cross-Region inference | ca-central-1 | Multiple US Regions | Requests from Canada (Central) can be routed to supported US Regions with capacity. |
| Global inference | ca-central-1 | Global AWS Regions | Requests from Canada (Central) can be routed to a Region in the AWS global CRIS profile. |

Getting started with CRIS from Canada

To begin using cross-Region inference from Canada, follow these steps:

Configure AWS Identity and Access Management (IAM) permissions

First, verify that your IAM role or user has the necessary permissions to invoke Amazon Bedrock models using cross-Region inference profiles.

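For US cross-Region inference, the policy needs to allow two things: invoking the inference profile in the Canada (Central) Region, and invoking the underlying foundation model in the US destination Regions that the profile can route to.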
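The following Python sketch builds such a policy as a dictionary and prints it as JSON. It is a minimal example under stated assumptions, not an official policy:

- The account ID is a placeholder.
- The Sid values and the helper name `build_us_cris_policy` are illustrative.
- The wildcard Region in the foundation-model ARN is a simplification. You may prefer to list the specific US destination Regions documented for the profile.
- The `bedrock:InferenceProfileArn` condition key reflects our understanding of the Amazon Bedrock IAM documentation. Verify it against the current Service Authorization Reference before use.

```python
import json

# Placeholder account ID for illustration; replace with your own.
ACCOUNT_ID = "111122223333"
SOURCE_REGION = "ca-central-1"
# Base model ID from the inference profile table (without the "us." prefix).
BASE_MODEL_ID = "anthropic.claude-sonnet-4-5-20250929-v1:0"

# Converse needs bedrock:InvokeModel; ConverseStream needs InvokeModelWithResponseStream.
INVOKE_ACTIONS = ["bedrock:InvokeModel", "bedrock:InvokeModelWithResponseStream"]


def build_us_cris_policy(account_id: str, base_model_id: str,
                         source_region: str = SOURCE_REGION) -> dict:
    """Return an IAM policy document for invoking a model through a US CRIS profile.

    Hypothetical helper. The "*" Region in the foundation-model ARN is an
    assumption meant to cover any US destination Region the profile may use.
    """
    profile_arn = (
        f"arn:aws:bedrock:{source_region}:{account_id}:"
        f"inference-profile/us.{base_model_id}"
    )
    model_arn = f"arn:aws:bedrock:*::foundation-model/{base_model_id}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Permission on the inference profile in the source Region.
                "Sid": "AllowUsCrisProfile",
                "Effect": "Allow",
                "Action": INVOKE_ACTIONS,
                "Resource": profile_arn,
            },
            {
                # Permission on the underlying model in destination Regions,
                # limited (assumed condition key) to calls made via this profile.
                "Sid": "AllowFoundationModelViaProfile",
                "Effect": "Allow",
                "Action": INVOKE_ACTIONS,
                "Resource": model_arn,
                "Condition": {
                    "StringLike": {"bedrock:InferenceProfileArn": profile_arn}
                },
            },
        ],
    }


policy = build_us_cris_policy(ACCOUNT_ID, BASE_MODEL_ID)
print(json.dumps(policy, indent=2))
```

Building the policy in code keeps the profile ARN and the condition value in sync. That way, switching to Claude Haiku 4.5 means changing only the base model ID.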

For global CRIS, refer to the blog post Unlock global AI inference scalability using new global cross-Region inference on Amazon Bedrock with Anthropic’s Claude Sonnet 4.5.

Use cross-Region inference profiles

Configure your application to use the relevant inference profile ID. The profile IDs use prefixes to indicate their routing scope:

| Model | Routing scope | Inference profile ID |
|---|---|---|
| Claude Sonnet 4.5 | US Regions | us.anthropic.claude-sonnet-4-5-20250929-v1:0 |
| Claude Sonnet 4.5 | Global | global.anthropic.claude-sonnet-4-5-20250929-v1:0 |
| Claude Haiku 4.5 | US Regions | us.anthropic.claude-haiku-4-5-20251001-v1:0 |
| Claude Haiku 4.5 | Global | global.anthropic.claude-haiku-4-5-20251001-v1:0 |
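Each profile ID in the table above is the base model ID with a routing prefix. A small helper can therefore treat the prefix as configuration. The helper name `profile_id` and the short model aliases are hypothetical. The prefixes (`us`, `global`) and base model IDs come from the table.

```python
# Base model IDs from the table above, keyed by hypothetical local aliases.
BASE_MODEL_IDS = {
    "sonnet-4.5": "anthropic.claude-sonnet-4-5-20250929-v1:0",
    "haiku-4.5": "anthropic.claude-haiku-4-5-20251001-v1:0",
}

# Routing-scope prefixes used by the inference profile IDs.
ROUTING_PREFIXES = {"us", "global"}


def profile_id(model_alias: str, scope: str) -> str:
    """Build an inference profile ID such as 'us.anthropic.claude-...'."""
    if scope not in ROUTING_PREFIXES:
        raise ValueError(f"unknown routing scope: {scope!r}")
    return f"{scope}.{BASE_MODEL_IDS[model_alias]}"
```

For example, `profile_id("haiku-4.5", "global")` returns `global.anthropic.claude-haiku-4-5-20251001-v1:0`. Moving a workload between US and Global routing then becomes a one-value configuration change.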

Example code

To call a US CRIS inference profile from Canada with the Amazon Bedrock Converse API, create the Amazon Bedrock Runtime client in the Canada (Central) Region and pass the inference profile ID as the model ID.
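The following is a minimal sketch using the AWS SDK for Python (Boto3). It makes these assumptions:

- Boto3 is installed.
- Credentials with the IAM permissions described earlier are configured.
- The prompt, the `maxTokens` value, and the helper names are illustrative.

Request building and response parsing are kept separate from the network call, so you can check them without contacting AWS.

```python
from typing import Any

# US CRIS inference profile ID for Claude Sonnet 4.5 (from the table above).
MODEL_ID = "us.anthropic.claude-sonnet-4-5-20250929-v1:0"
# The client is created in the source Region; CRIS handles routing from there.
SOURCE_REGION = "ca-central-1"


def build_converse_request(prompt: str, model_id: str = MODEL_ID,
                           max_tokens: int = 512) -> dict[str, Any]:
    """Assemble keyword arguments for the bedrock-runtime converse() call."""
    return {
        "modelId": model_id,  # an inference profile ID is accepted as modelId
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def extract_text(response: dict[str, Any]) -> str:
    """Concatenate the text blocks of the assistant message in a Converse response."""
    blocks = response["output"]["message"]["content"]
    return "".join(block.get("text", "") for block in blocks)


def converse_from_canada(prompt: str) -> str:
    """Send one prompt through the US CRIS profile from ca-central-1."""
    import boto3  # imported here so the helpers above work without Boto3

    client = boto3.client("bedrock-runtime", region_name=SOURCE_REGION)
    response = client.converse(**build_converse_request(prompt))
    usage = response["usage"]
    print(f"input tokens: {usage['inputTokens']}, output tokens: {usage['outputTokens']}")
    return extract_text(response)
```

A call such as `print(converse_from_canada("Summarize our Q3 results in three bullets."))` would send the request to the Canada (Central) endpoint and let CRIS choose the US Region that processes it. The token counts printed from `usage` are the inputs to the quota calculation in the next section.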

Quota management for Canadian workloads

When using CRIS from Canada, quotas are managed at the source Region level (ca-central-1). This means quota increases requested for the Canada (Central) Region apply to all inference requests originating from Canada, regardless of where they’re processed.

Understanding quota calculations

Important: When calculating your required quota increases, you need to account for the burndown rate. The burndown rate is the rate at which input and output tokens are converted into token quota usage for the throttling system. The following models have a 5x burndown rate for output tokens (1 output token consumes 5 tokens from your quota):

- Anthropic Claude Opus 4

- Anthropic Claude Sonnet 4.5

- Anthropic Claude Sonnet 4

- Anthropic Claude 3.7 Sonnet

For other models, the burndown rate is 1:1 (1 output token consumes 1 token from your quota). For input tokens, the token-to-quota ratio is 1:1. The total number of quota tokens consumed per request is calculated as follows:

Input token count + Cache write input tokens + (Output token count × Burndown rate)
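As a worked example, the formula can be written as a small function. The per-request token counts and the requests-per-minute figure below are hypothetical workload numbers chosen for illustration, not measurements. The set of 5x models is copied from the list above.

```python
# Models with a 5x output-token burndown rate, as listed above.
FIVE_X_BURNDOWN_MODELS = {
    "Anthropic Claude Opus 4",
    "Anthropic Claude Sonnet 4.5",
    "Anthropic Claude Sonnet 4",
    "Anthropic Claude 3.7 Sonnet",
}


def burndown_rate(model_name: str) -> int:
    """Output-token burndown rate: 5 for the listed models, otherwise 1."""
    return 5 if model_name in FIVE_X_BURNDOWN_MODELS else 1


def quota_tokens_per_request(input_tokens: int, cache_write_tokens: int,
                             output_tokens: int, rate: int) -> int:
    """Input tokens + cache write input tokens + (output tokens x burndown rate)."""
    return input_tokens + cache_write_tokens + output_tokens * rate


# Hypothetical request: 2,000 input tokens, no cache writes, 500 output tokens.
per_request = quota_tokens_per_request(
    2_000, 0, 500, burndown_rate("Anthropic Claude Sonnet 4.5"))
# At a hypothetical 100 requests per minute, the tokens-per-minute quota needed:
required_tpm = per_request * 100
print(per_request, required_tpm)  # 4500 450000
```

The same request on a model with a 1:1 burndown rate would consume 2,500 quota tokens, not 4,500. Ignoring the burndown rate for Claude Sonnet 4.5 would therefore underestimate this workload’s quota needs by 45 percent.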

Requesting quota increases

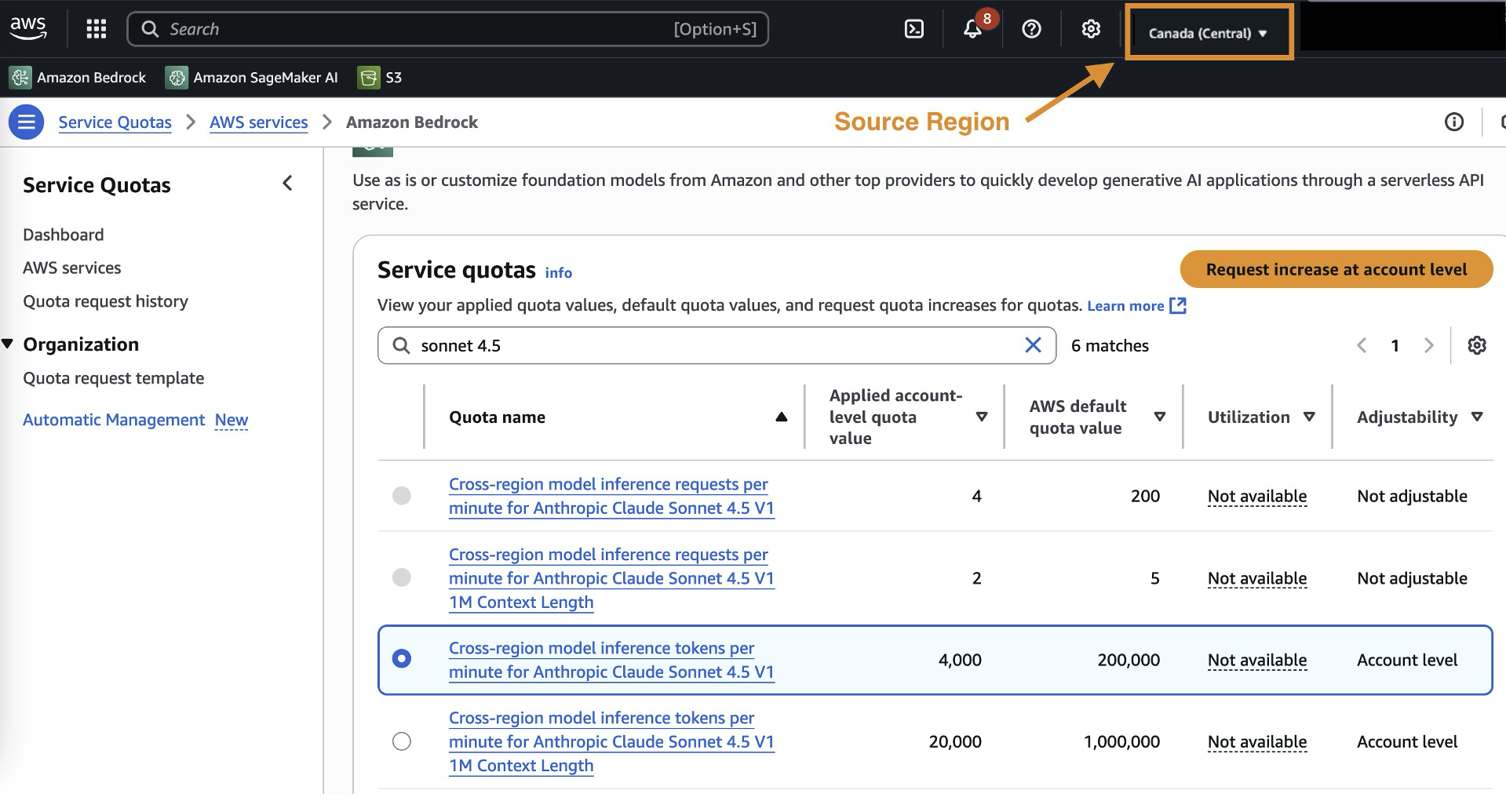

To request quota increases for CRIS in Canada:

- Navigate to the AWS Service Quotas console in the Canada (Central) Region.

- Search for the specific model quota (for example, “Claude Sonnet 4.5 tokens per minute”).

- Submit an increase request based on your projected usage.
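These console steps can also be scripted with the Service Quotas API through Boto3. This sketch makes these assumptions:

- Boto3 and credentials are available.
- Amazon Bedrock quotas are listed under the service code `bedrock`.
- The helper names are hypothetical.

Quotas are matched by a name fragment rather than by a hard-coded quota code, and the script refuses to proceed unless exactly one quota matches. Copy the exact quota name from the console rather than relying on the example in the list above.

```python
from typing import Any

# Quotas for CRIS requests that originate in Canada are managed in the source Region.
SOURCE_REGION = "ca-central-1"


def find_quotas(quotas: list[dict[str, Any]], name_fragment: str) -> list[dict[str, Any]]:
    """Return every quota whose QuotaName contains name_fragment (case-insensitive)."""
    fragment = name_fragment.lower()
    return [q for q in quotas if fragment in q["QuotaName"].lower()]


def request_increase(name_fragment: str, desired_value: float) -> str:
    """Look up one Amazon Bedrock quota in ca-central-1 by name and request an increase.

    Returns the status of the increase request (for example, "PENDING").
    """
    import boto3  # imported here so find_quotas() works without Boto3 installed

    client = boto3.client("service-quotas", region_name=SOURCE_REGION)
    quotas: list[dict[str, Any]] = []
    for page in client.get_paginator("list_service_quotas").paginate(ServiceCode="bedrock"):
        quotas.extend(page["Quotas"])

    matches = find_quotas(quotas, name_fragment)
    if len(matches) != 1:
        # Refuse to guess when the fragment is ambiguous or matches nothing.
        names = [q["QuotaName"] for q in matches]
        raise LookupError(f"expected one quota matching {name_fragment!r}, found {names}")

    response = client.request_service_quota_increase(
        ServiceCode="bedrock",
        QuotaCode=matches[0]["QuotaCode"],
        DesiredValue=desired_value,
    )
    return response["RequestedQuota"]["Status"]
```

Several Bedrock quotas can mention the same model, such as requests-per-minute and tokens-per-minute variants. Requiring a single match avoids raising the wrong one.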

Migrating from older Claude models to Claude 4.5

Organizations currently using older Claude models should plan their migration to Claude 4.5 to take advantage of the latest model capabilities.

To plan your migration strategy, incorporate the following elements:

- Benchmark current performance: Establish baseline metrics for your existing models.

- Test with representative workloads and optimize prompts: Validate Claude 4.5 performance with your specific use cases. Adjust prompts to take advantage of Claude 4.5’s enhanced capabilities, and use the prompt optimization tool in Amazon Bedrock.

- Implement a gradual rollout: Shift traffic to the new model incrementally.

- Monitor and adjust: Track performance metrics and adjust quotas as needed.
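For the gradual rollout step, one common approach is deterministic percentage-based routing; it is not specific to Amazon Bedrock. You hash a stable key, such as a user ID, into 100 buckets and send a configurable share of buckets to the new model. The sketch below assumes that approach. `OLD_MODEL_ID` is a placeholder for whichever model you currently run, and the helper names are hypothetical.

```python
import hashlib

# Placeholder for the model currently in production; replace with your own ID.
OLD_MODEL_ID = "anthropic.claude-3-5-sonnet-20240620-v1:0"
NEW_MODEL_ID = "us.anthropic.claude-sonnet-4-5-20250929-v1:0"


def bucket(key: str) -> int:
    """Map a stable key (for example, a user ID) to a bucket in [0, 100)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def choose_model(key: str, rollout_percent: int) -> str:
    """Send roughly rollout_percent% of keys to the new model, consistently per key."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    return NEW_MODEL_ID if bucket(key) < rollout_percent else OLD_MODEL_ID
```

Because a key’s bucket never changes, raising `rollout_percent` from 10 to 50 only adds users to the new model; nobody flips back. This keeps per-user experience and your monitoring comparisons stable as traffic shifts.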

Choosing between US and Global inference profiles

When implementing CRIS from Canada, organizations can choose between US and Global inference profiles based on their specific requirements.

US cross-Region inference is recommended for organizations with existing US data processing agreements, high throughput and resilience requirements, and development and testing environments.
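One way to make this choice explicit and reviewable is to encode it as a configuration rule, rather than scattering profile strings through application code. The rule below is a hypothetical simplification. It selects the US profile when processing is restricted to the US (for example, under an existing US data processing agreement) or when lower latency to US Regions is preferred. Otherwise it uses the Global profile for maximum capacity.

```python
from dataclasses import dataclass

# Base model ID for Claude Sonnet 4.5, from the inference profile table.
SONNET_45 = "anthropic.claude-sonnet-4-5-20250929-v1:0"


@dataclass(frozen=True)
class RoutingRequirements:
    """Hypothetical requirement flags; adapt them to your own governance review."""
    restrict_processing_to_us: bool  # e.g., covered by a US data processing agreement
    prefer_lower_latency_to_us: bool = False


def select_profile(req: RoutingRequirements, base_model_id: str = SONNET_45) -> str:
    """Return a US profile ID when the requirements call for it, else a Global one."""
    if req.restrict_processing_to_us or req.prefer_lower_latency_to_us:
        scope = "us"
    else:
        scope = "global"  # broadest pool of capacity across commercial Regions
    return f"{scope}.{base_model_id}"
```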

Conclusion

Cross-Region inference for Amazon Bedrock gives Canadian organizations an opportunity to use AI while maintaining data governance. By distinguishing between transient inference processing and persistent data storage, CRIS provides faster access to the latest foundation models without compromising compliance requirements.

With CRIS, Canadian organizations get access to new models within days instead of months. The system scales automatically during peak business periods while maintaining complete audit trails within Canada. This helps you meet compliance requirements while using the same advanced AI capabilities as organizations worldwide. To get started, review your data governance requirements and configure IAM permissions. Then test with the inference profile that matches your needs: US for lower latency to US Regions, or Global for maximum capacity.

About the authors

Daniel Duplessis is a Principal Generative AI Specialist Solutions Architect at Amazon Web Services (AWS). He guides enterprises in crafting comprehensive AI implementation strategies and establishing the foundational capabilities essential for scaling AI across the enterprise.

Dan MacKay is the Financial Services Compliance Specialist for AWS Canada. He advises customers on recommended practices and practical solutions for cloud-related governance, risk, and compliance. Dan specializes in helping AWS customers navigate financial services and privacy regulations applicable to the use of cloud technology in Canada, with a focus on third-party risk management and operational resilience.

Melanie Li, PhD, is a Senior Generative AI Specialist Solutions Architect at AWS based in Sydney, Australia, where her focus is on working with customers to build solutions using state-of-the-art AI/ML tools. She has been actively involved in multiple generative AI initiatives across APJ, harnessing the power of LLMs. Prior to joining AWS, Dr. Li held data science roles in the financial and retail industries.

Serge Malikov is a Senior Solutions Architect Manager based in Canada. His focus is on the financial services industry.

Saurabh Trikande is a Senior Product Manager for Amazon Bedrock and Amazon SageMaker Inference. He is passionate about working with customers and partners, motivated by the goal of democratizing AI. He focuses on core challenges related to deploying complex AI applications, inference with multi-tenant models, cost optimizations, and making the deployment of generative AI models more accessible. In his spare time, Saurabh enjoys hiking, learning about innovative technologies, following TechCrunch, and spending time with his family.

Sharadha Kandasubramanian is a Senior Technical Program Manager for Amazon Bedrock. She drives cross-functional GenAI programs for Amazon Bedrock, enabling customers to grow and scale their GenAI workloads. Outside of work, she’s an avid runner and biker who loves spending time outdoors in the sun.
