Businesses face a growing challenge: customers want answers fast, but support teams are overwhelmed. Support documentation like product manuals and knowledge base articles typically requires users to search through hundreds of pages, and support agents often run 20–30 customer queries per day to locate specific information.

This post demonstrates how to solve this challenge by building an AI-powered website assistant using Amazon Bedrock and Amazon Bedrock Knowledge Bases. This solution is designed to benefit both internal teams and external customers, and can offer the following benefits:

- Instant, relevant answers for customers, alleviating the need to search through documentation

- A powerful knowledge retrieval system for support agents, reducing resolution time

- Round-the-clock automated support

Solution overview

The solution uses Retrieval-Augmented Generation (RAG) to retrieve relevant information from a knowledge base and return it to the user based on their access. It consists of the following key components:

- Amazon Bedrock Knowledge Bases – Content from the company’s website is crawled and stored in the knowledge base. Documents from an Amazon Simple Storage Service (Amazon S3) bucket, including manuals and troubleshooting guides, are also indexed and stored in the knowledge base. With Amazon Bedrock Knowledge Bases, you can configure multiple data sources and use the filter configurations to differentiate between internal and external information. This helps protect internal data through advanced security controls.

- Amazon Bedrock managed LLMs – A large language model (LLM) from Amazon Bedrock generates AI-powered responses to user questions.

- Scalable serverless architecture – The solution uses Amazon Elastic Container Service (Amazon ECS) to host the UI, and an AWS Lambda function to handle the user requests.

- Automated CI/CD deployment – The solution uses the AWS Cloud Development Kit (AWS CDK) to handle continuous integration and delivery (CI/CD) deployment.

The following diagram illustrates the architecture of this solution.

The workflow consists of the next steps:

- Amazon Bedrock Knowledge Bases processes documents uploaded to Amazon S3 by chunking them and generating embeddings. Additionally, the Amazon Bedrock web crawler accesses selected websites to extract and ingest their contents.

- The web application runs as an ECS application. Internal and external users use browsers to access the application through Elastic Load Balancing (ELB). Users log in to the application using their login credentials registered in an Amazon Cognito user pool.

- When a user submits a question, the application invokes a Lambda function, which uses the Amazon Bedrock APIs to retrieve the relevant information from the knowledge base. It also supplies the relevant data source IDs to Amazon Bedrock based on user type (external or internal) so the knowledge base retrieves only the information available to that user type.

- The Lambda function then invokes the Amazon Nova Lite LLM to generate responses. The LLM augments the information from the knowledge base to generate a response to the user query, which is returned from the Lambda function and displayed to the user.
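The last two workflow steps can be sketched in Python as follows. This is a minimal illustration, not the code from the solution repository: the function names, the prompt wording, and the `top_k` default are hypothetical, the metadata key `x-amz-bedrock-kb-data-source-id` and the model ID `amazon.nova-lite-v1:0` are assumptions to verify in your own account, and the two clients are passed in so the logic can be exercised without AWS access.

```python
# Assumed metadata key that Bedrock Knowledge Bases attaches to each chunk;
# check it against the metadata returned by your own knowledge base.
DATA_SOURCE_KEY = "x-amz-bedrock-kb-data-source-id"

# Assumed model ID for Amazon Nova Lite; some Regions need an inference profile ID.
MODEL_ID = "amazon.nova-lite-v1:0"


def allowed_sources(user_type, external_id, internal_id):
    """Internal users may read both data sources; all others only the external one."""
    if user_type == "internal":
        return [external_id, internal_id]
    return [external_id]


def build_retrieve_request(kb_id, question, source_ids, top_k=5):
    """Build keyword arguments for the bedrock-agent-runtime Retrieve call."""
    return {
        "knowledgeBaseId": kb_id,
        "retrievalQuery": {"text": question},
        "retrievalConfiguration": {
            "vectorSearchConfiguration": {
                "numberOfResults": top_k,
                # Restrict results to chunks from the permitted data sources.
                "filter": {"in": {"key": DATA_SOURCE_KEY, "value": source_ids}},
            }
        },
    }


def answer_question(agent_runtime, bedrock_runtime, kb_id, question, source_ids):
    """Retrieve filtered passages, then ask the model to answer from them."""
    request = build_retrieve_request(kb_id, question, source_ids)
    response = agent_runtime.retrieve(**request)
    passages = [r["content"]["text"] for r in response["retrievalResults"]]
    context = "\n\n".join(passages)

    prompt = f"Context:\n{context}\n\nQuestion: {question}"
    result = bedrock_runtime.converse(
        modelId=MODEL_ID,
        system=[{"text": "Answer using only the provided context."}],
        messages=[{"role": "user", "content": [{"text": prompt}]}],
    )
    return result["output"]["message"]["content"][0]["text"]
```

In a Lambda function, the two clients would typically be created with `boto3.client("bedrock-agent-runtime")` and `boto3.client("bedrock-runtime")`, and the user type would come from the authenticated session.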

In the following sections, we demonstrate how to crawl and configure the external website as a knowledge base, and also upload internal documentation.

Prerequisites

You must have the following in place to deploy the solution in this post:

Create knowledge base and ingest website data

The first step is to build a knowledge base to ingest data from a website and operational documents from an S3 bucket. Complete the following steps to create your knowledge base:

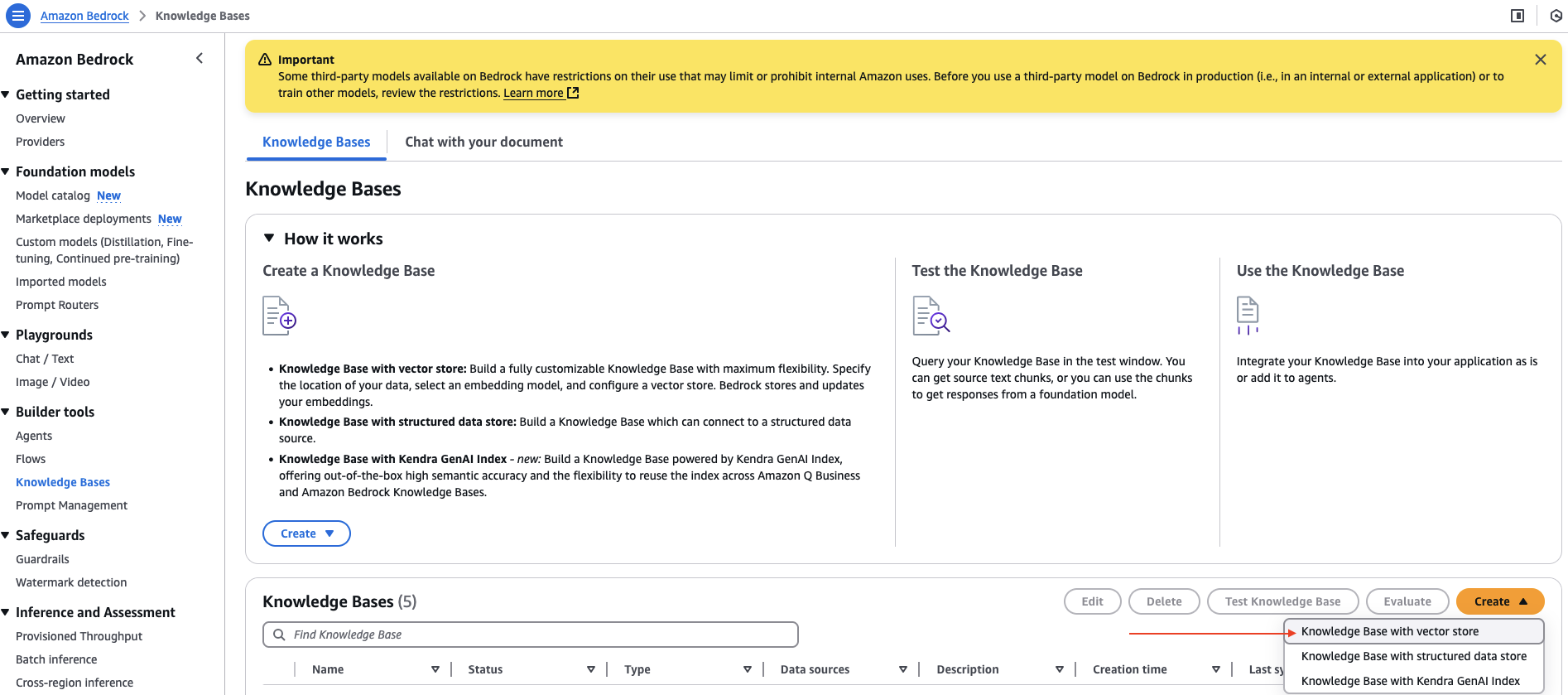

- On the Amazon Bedrock console, choose Knowledge Bases under Builder tools in the navigation pane.

- On the Create dropdown menu, choose Knowledge Base with vector store.

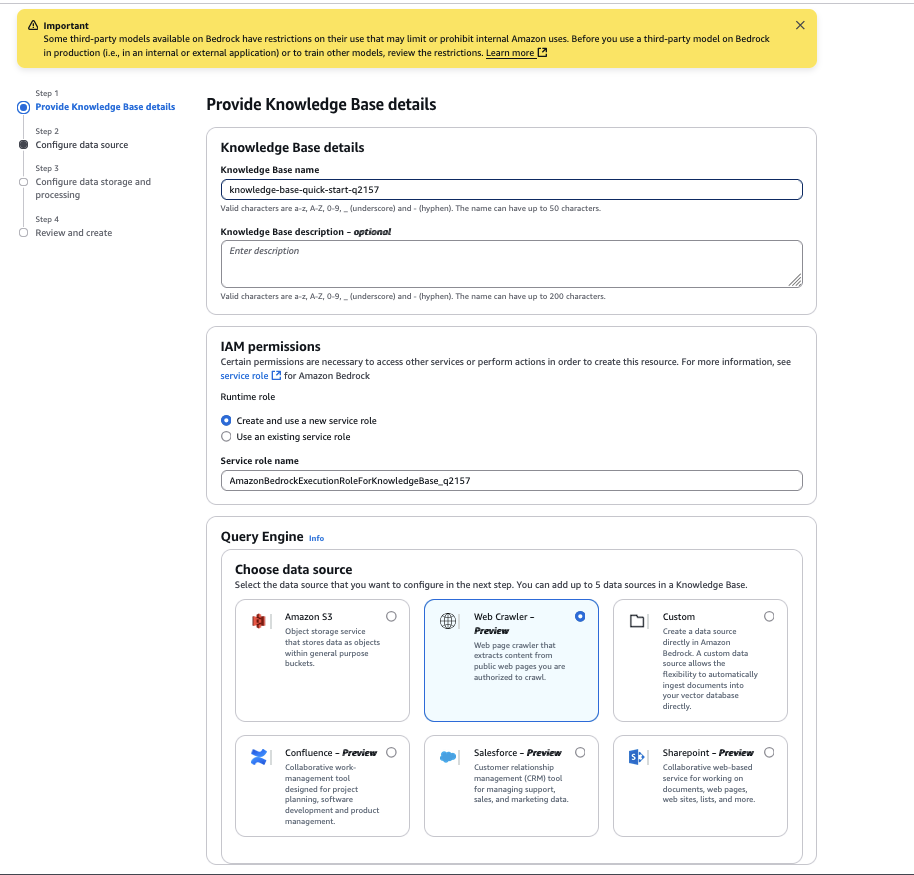

- For Knowledge Base name, enter a name.

- For Choose a data source, select Web Crawler.

- Choose Next.

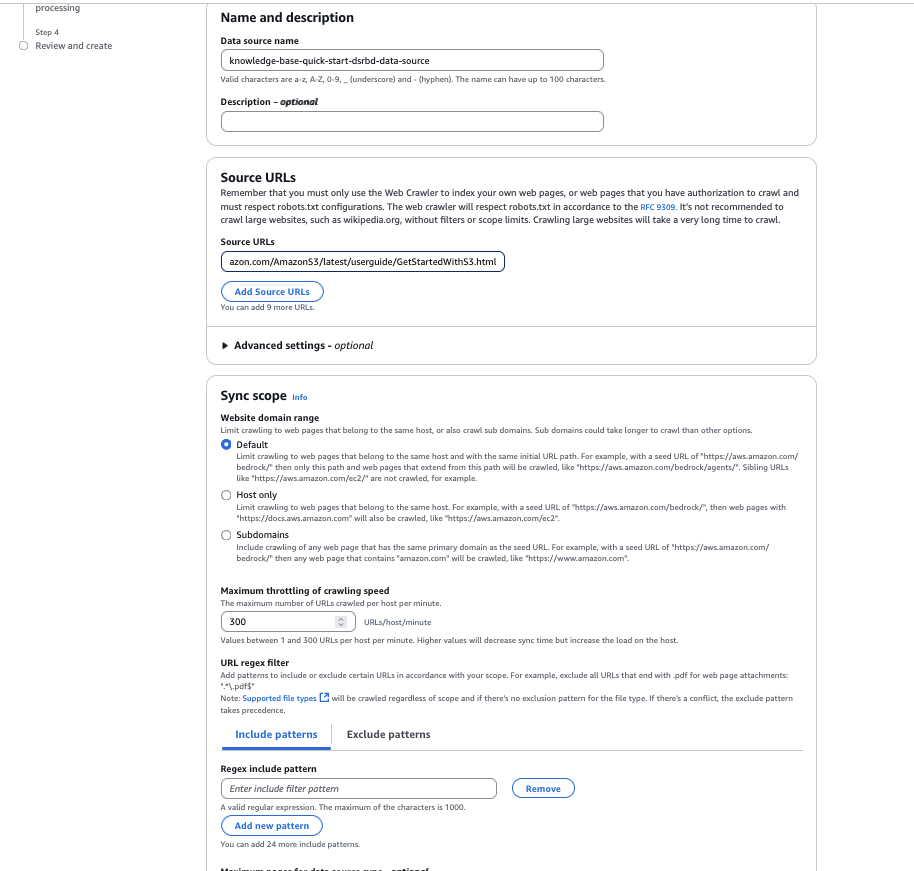

- For Data source name, enter a name for your data source.

- For Source URLs, enter the target website HTML page to crawl. For example, we use https://docs.aws.amazon.com/AmazonS3/latest/userguide/GetStartedWithS3.html.

- For Website domain range, select Default as the crawling scope. You can also configure it to host only domains or subdomains if you want to restrict the crawling to a specific domain or subdomain.

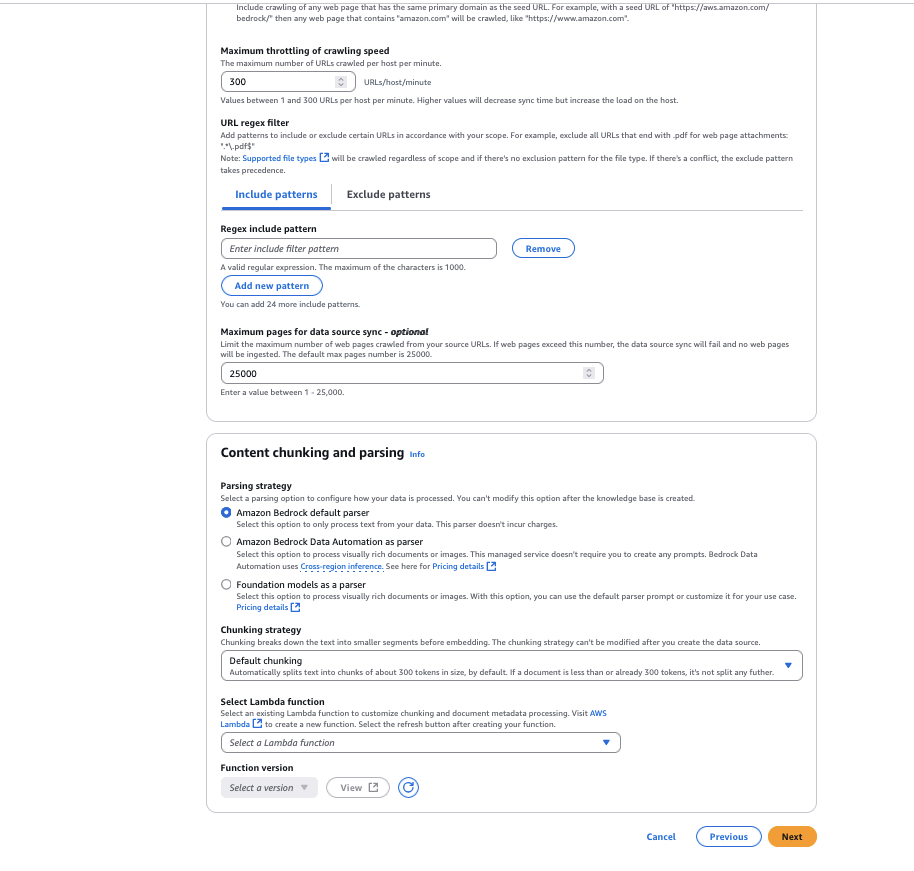

- For URL regex filter, you can configure the URL patterns to include or exclude specific URLs. For this example, we leave this setting blank.

- For Chunking strategy, you can configure the content parsing options to customize the data chunking strategy. For this example, we leave it as Default chunking.

- Choose Next.
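If you prefer to script the data source instead of using the console, the same settings map onto the `dataSourceConfiguration` argument of the `create_data_source` API. The helper below is a sketch under that assumption; `web_crawler_config` is a hypothetical name, and the field names should be checked against the current API reference before use. Leaving out the scope and the filters corresponds to the defaults chosen in the steps above.

```python
def web_crawler_config(seed_urls, scope=None, include=None, exclude=None):
    """Build a Web Crawler data source configuration for Bedrock Knowledge Bases.

    scope   -- None for the default range, or "HOST_ONLY" / "SUBDOMAINS"
    include -- optional list of URL regex patterns to crawl
    exclude -- optional list of URL regex patterns to skip
    """
    crawler = {}
    if scope is not None:
        if scope not in ("HOST_ONLY", "SUBDOMAINS"):
            raise ValueError(f"unsupported crawl scope: {scope}")
        crawler["scope"] = scope
    if include:
        crawler["inclusionFilters"] = list(include)
    if exclude:
        crawler["exclusionFilters"] = list(exclude)

    web = {
        "sourceConfiguration": {
            "urlConfiguration": {"seedUrls": [{"url": url} for url in seed_urls]}
        }
    }
    # Only send crawler settings when something deviates from the defaults.
    if crawler:
        web["crawlerConfiguration"] = crawler
    return {"type": "WEB", "webConfiguration": web}


config = web_crawler_config(
    ["https://docs.aws.amazon.com/AmazonS3/latest/userguide/GetStartedWithS3.html"]
)
```

The resulting dictionary could then be passed as `dataSourceConfiguration=config` to `boto3.client("bedrock-agent").create_data_source(...)` together with the knowledge base ID and a data source name.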

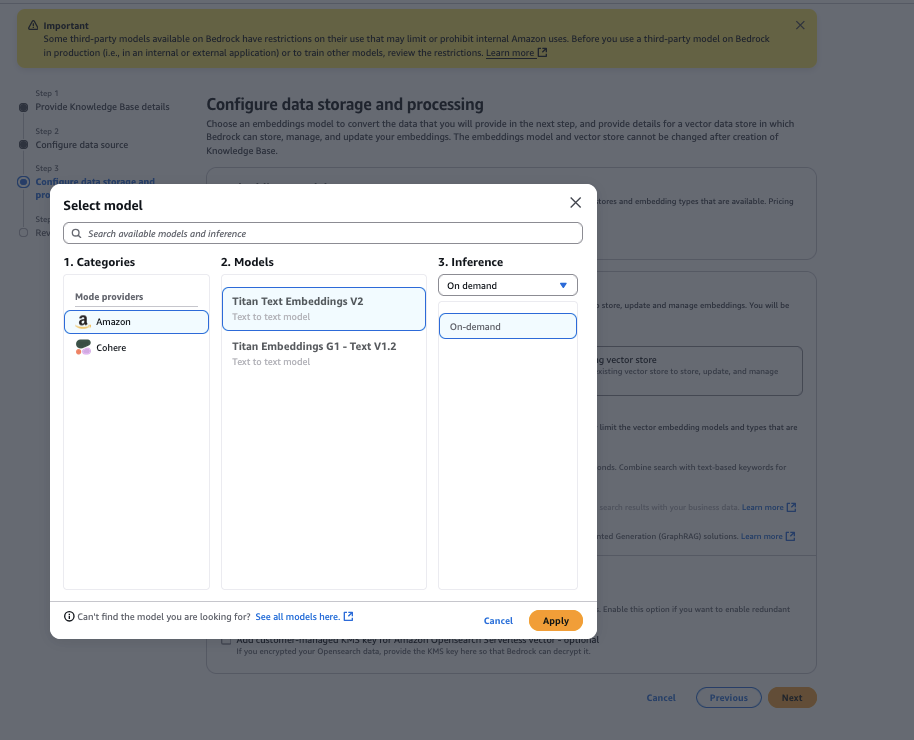

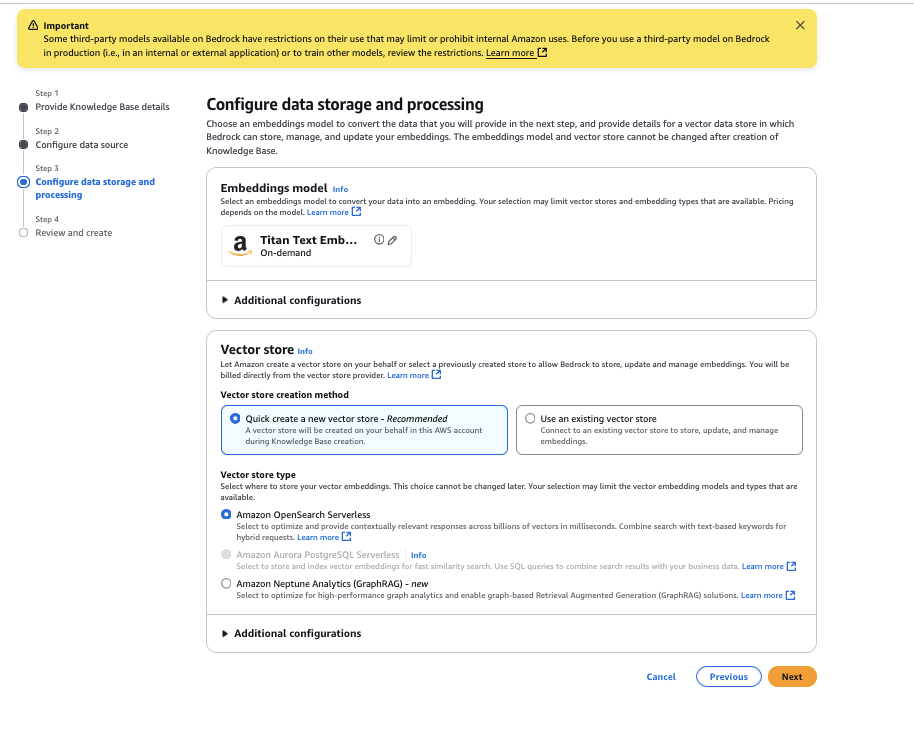

- Choose the Amazon Titan Text Embeddings V2 model, then choose Apply.

- For Vector store type, select Amazon OpenSearch Serverless, then choose Next.

- Review the configurations and choose Create Knowledge Base.

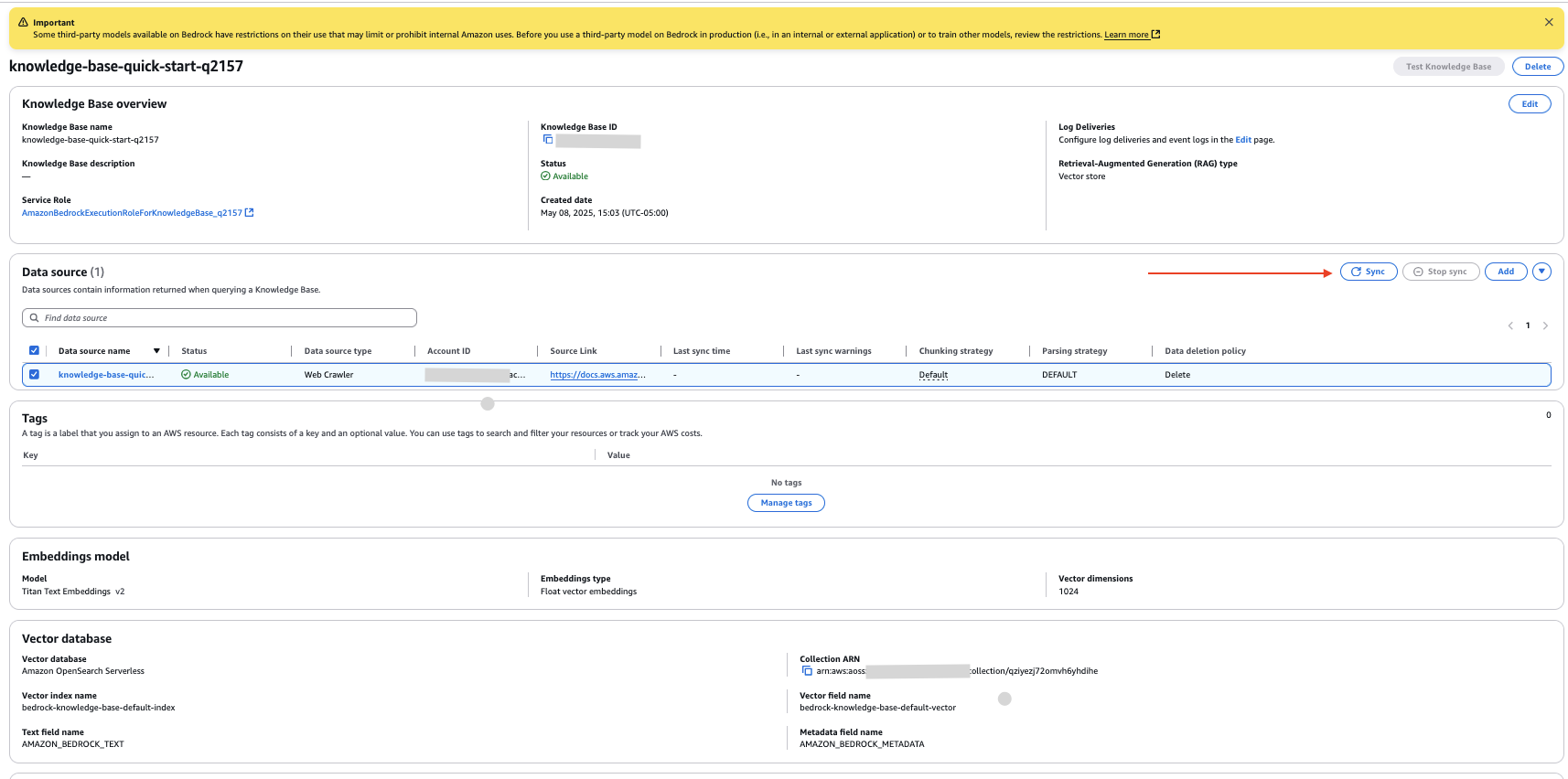

You have now created a knowledge base with the data source configured as the website link you provided.

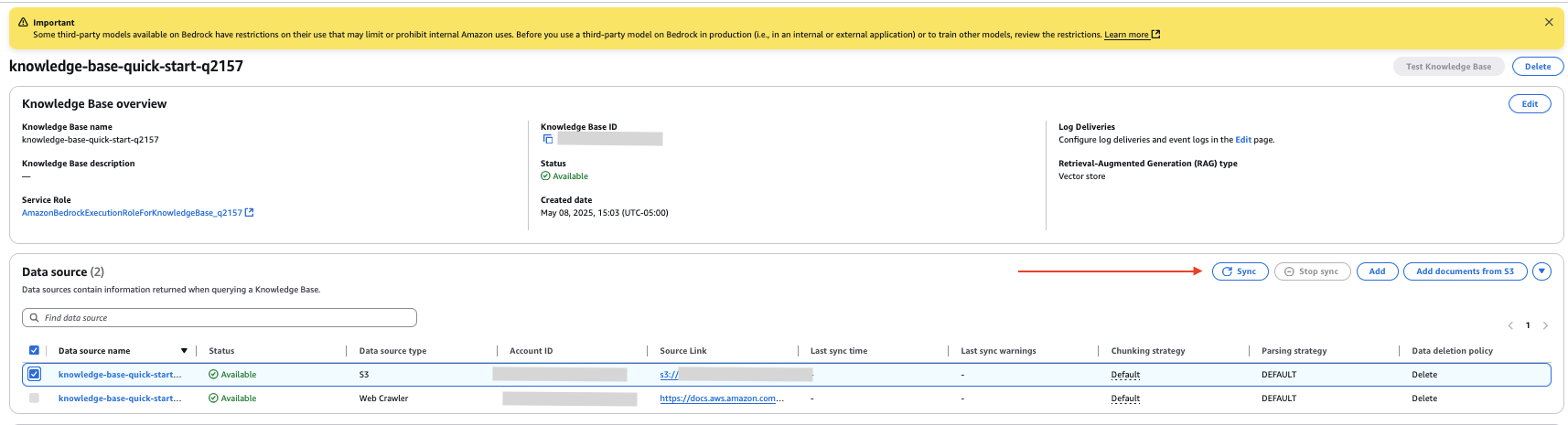

- On the knowledge base details page, select your new data source and choose Sync to crawl the website and ingest the data.
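The Sync action corresponds to an ingestion job in the Amazon Bedrock API. The following sketch starts a job and polls until it reaches a terminal state. The function name, the polling interval, and the poll limit are illustrative choices, and the client is passed in as a parameter (for example, `boto3.client("bedrock-agent")`).

```python
import time

# Ingestion job states after which polling can stop.
TERMINAL_STATES = {"COMPLETE", "FAILED", "STOPPED"}


def sync_data_source(agent, kb_id, data_source_id,
                     poll_seconds=10, max_polls=60, sleep=time.sleep):
    """Start an ingestion job and return its last observed status."""
    job = agent.start_ingestion_job(
        knowledgeBaseId=kb_id, dataSourceId=data_source_id
    )["ingestionJob"]

    for _ in range(max_polls):
        if job["status"] in TERMINAL_STATES:
            break
        sleep(poll_seconds)
        job = agent.get_ingestion_job(
            knowledgeBaseId=kb_id,
            dataSourceId=data_source_id,
            ingestionJobId=job["ingestionJobId"],
        )["ingestionJob"]
    return job["status"]
```

A status other than `COMPLETE` means the crawl did not finish cleanly or the poll limit was reached, so the job details are worth inspecting in the console before you continue.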

Configure Amazon S3 data source

Complete the following steps to configure documents from your S3 bucket as an internal data source:

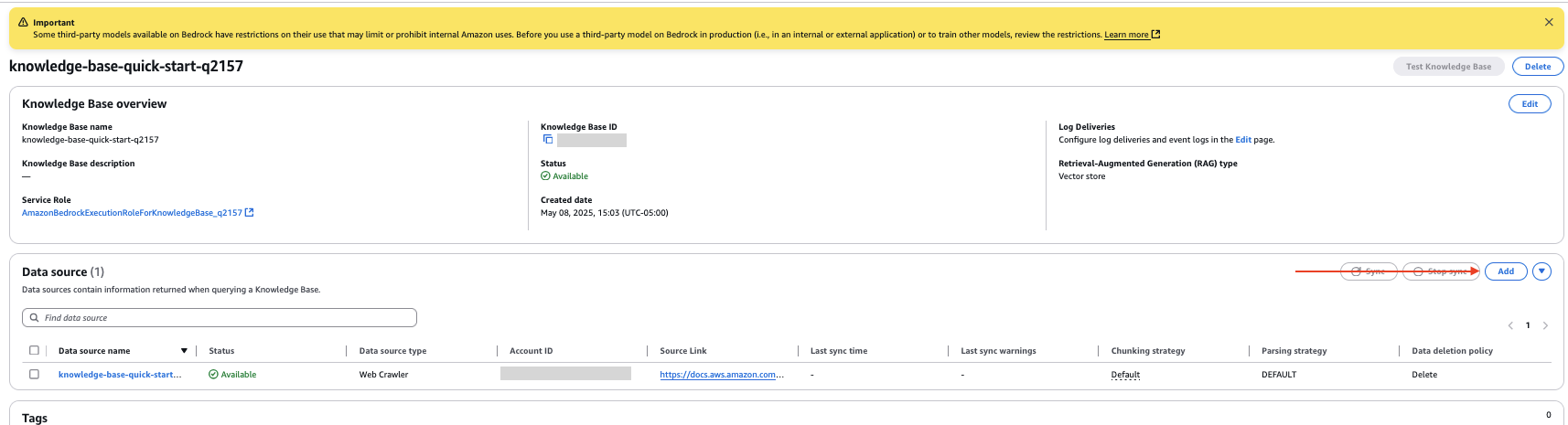

- On the knowledge base details page, choose Add in the Data source section.

- Specify the data source as Amazon S3.

- Choose your S3 bucket.

- Leave the parsing strategy as the default setting.

- Choose Next.

- Review the configurations and choose Add data source.

- In the Data source section of the knowledge base details page, select your new data source and choose Sync to index the data from the documents in the S3 bucket.
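For reference, an S3 data source can be described in the same `dataSourceConfiguration` shape used by the `create_data_source` API. The helper below is a sketch; the function name, the bucket name, and the prefix are placeholders, and the standard `aws` partition is assumed when building the bucket ARN.

```python
def s3_data_source_config(bucket_name, prefixes=None):
    """Build an S3 data source configuration for Bedrock Knowledge Bases."""
    # Assumes the standard "aws" partition when building the bucket ARN.
    s3 = {"bucketArn": f"arn:aws:s3:::{bucket_name}"}
    if prefixes:
        # Optionally limit ingestion to specific key prefixes.
        s3["inclusionPrefixes"] = list(prefixes)
    return {"type": "S3", "s3Configuration": s3}


# Placeholder bucket and prefix, for illustration only.
internal_config = s3_data_source_config("example-internal-docs", ["manuals/"])
```

Omitting the parsing and chunking settings, as here, leaves them at their defaults, which matches the console steps above.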

Upload internal document

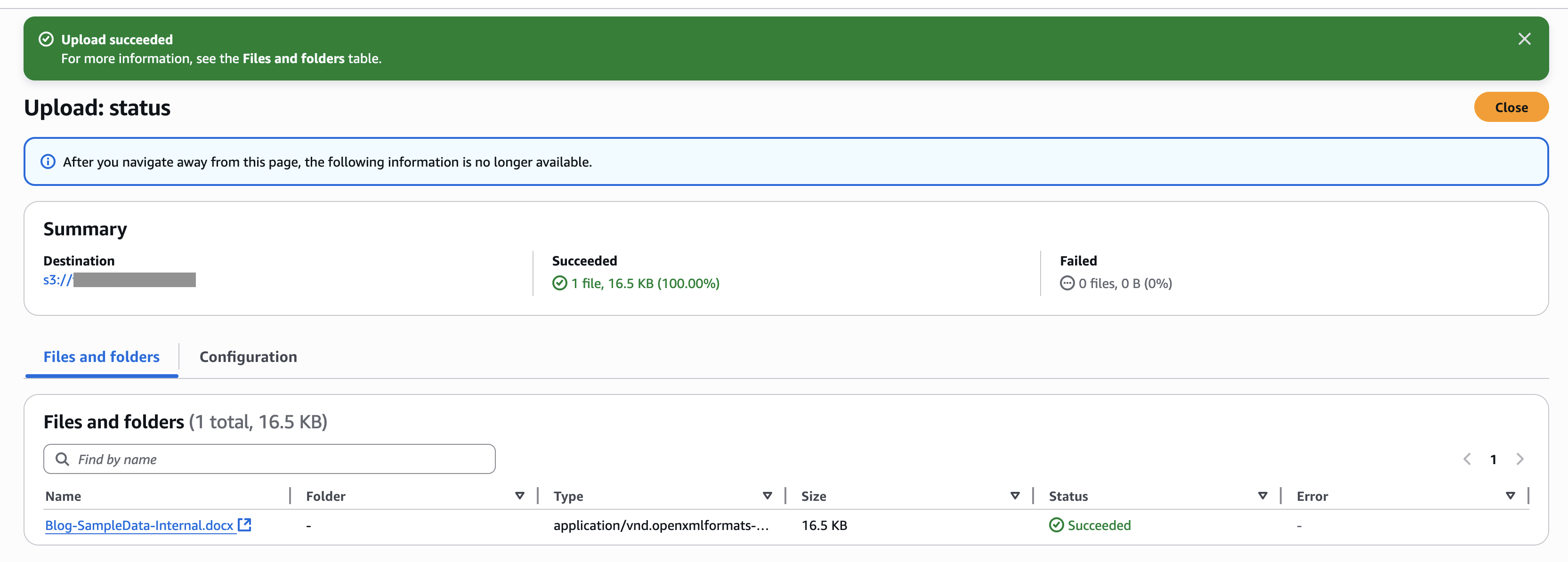

For this example, we upload a document in the new S3 bucket data source. The following screenshot shows an example of our document.

Complete the following steps to upload the document:

- On the Amazon S3 console, choose Buckets in the navigation pane.

- Select the bucket you created and choose Upload to upload the document.

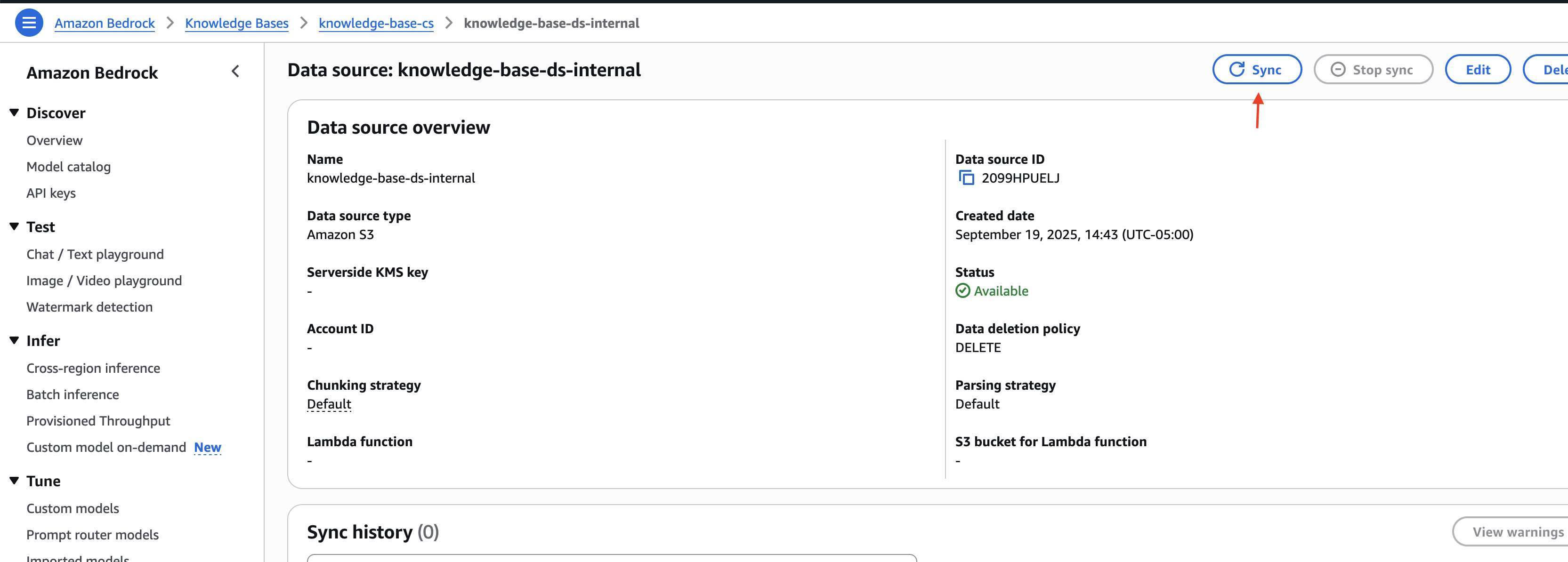

- On the Amazon Bedrock console, go to the knowledge base you created.

- Choose the internal data source you created and choose Sync to sync the uploaded document with the vector store.

Note the knowledge base ID and the data source IDs for the external and internal data sources. You use this information in the next step when deploying the solution infrastructure.

Deploy solution infrastructure

To deploy the solution infrastructure using the AWS CDK, complete the following steps:

- Download the code from the code repository.

- Go to the iac directory inside the downloaded project:

cd ./customer-support-ai/iac

- Open the parameters.json file and update the knowledge base and data source IDs with the values captured in the previous section.

- Follow the deployment instructions defined in the customer-support-ai/README.md file to set up the solution infrastructure.

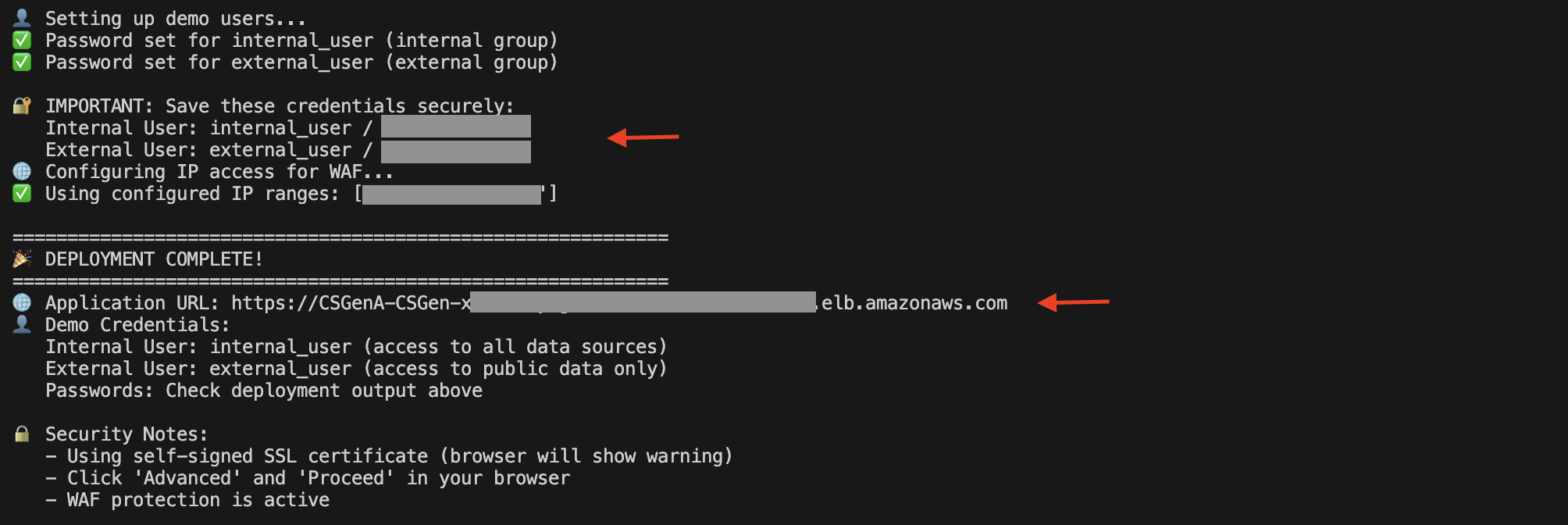

When the deployment is complete, you can find the Application Load Balancer (ALB) URL and demo user details in the script execution output.

You can also open the Amazon EC2 console and choose Load Balancers in the navigation pane to view the ALB.

On the ALB details page, copy the DNS name. You can use it to access the UI to try out the solution.
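The DNS name can also be looked up programmatically. The sketch below assumes an Elastic Load Balancing v2 client, such as `boto3.client("elbv2")`, is passed in. The function name is hypothetical, and pagination is omitted, so it covers only the first page of results.

```python
def alb_dns_names(elbv2):
    """Map each Application Load Balancer name to its DNS name."""
    response = elbv2.describe_load_balancers()
    return {
        lb["LoadBalancerName"]: lb["DNSName"]
        for lb in response["LoadBalancers"]
        # Skip network and gateway load balancers.
        if lb.get("Type") == "application"
    }
```

Pick the entry whose name matches the load balancer created by the deployment, and open its DNS name in a browser.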

Submit questions

Let’s explore an example of Amazon S3 service support. This solution supports different classes of users to help resolve their queries while using Amazon Bedrock Knowledge Bases to manage specific data sources (such as website content, documentation, and support tickets) with built-in filtering controls that separate internal operational documents from publicly accessible information. For example, internal users can access both company-specific operational guides and public documentation, whereas external users are restricted to publicly available content only.

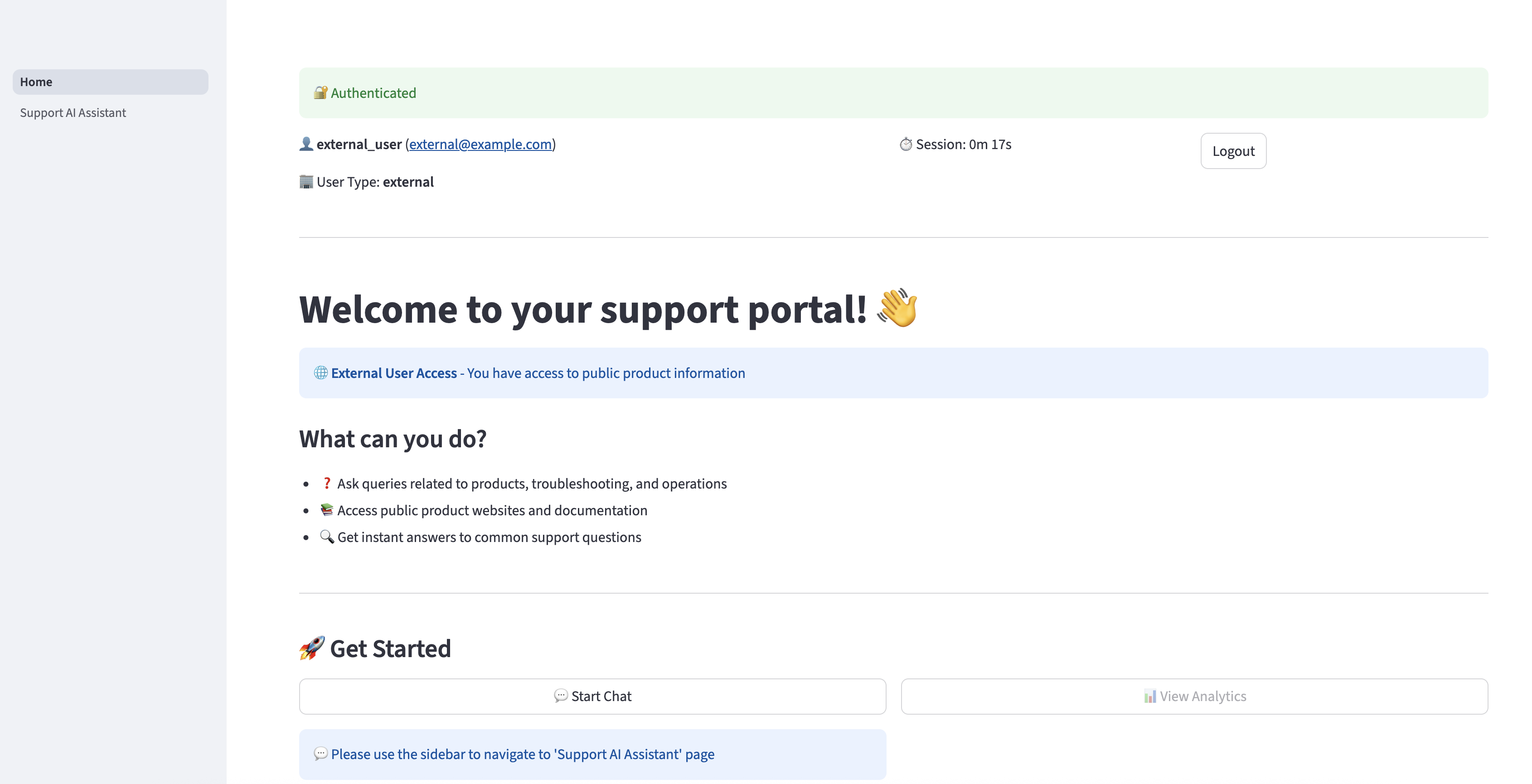

Open the DNS URL in the browser. Enter the external user credentials and choose Login.

After you’re successfully authenticated, you’ll be redirected to the home page.

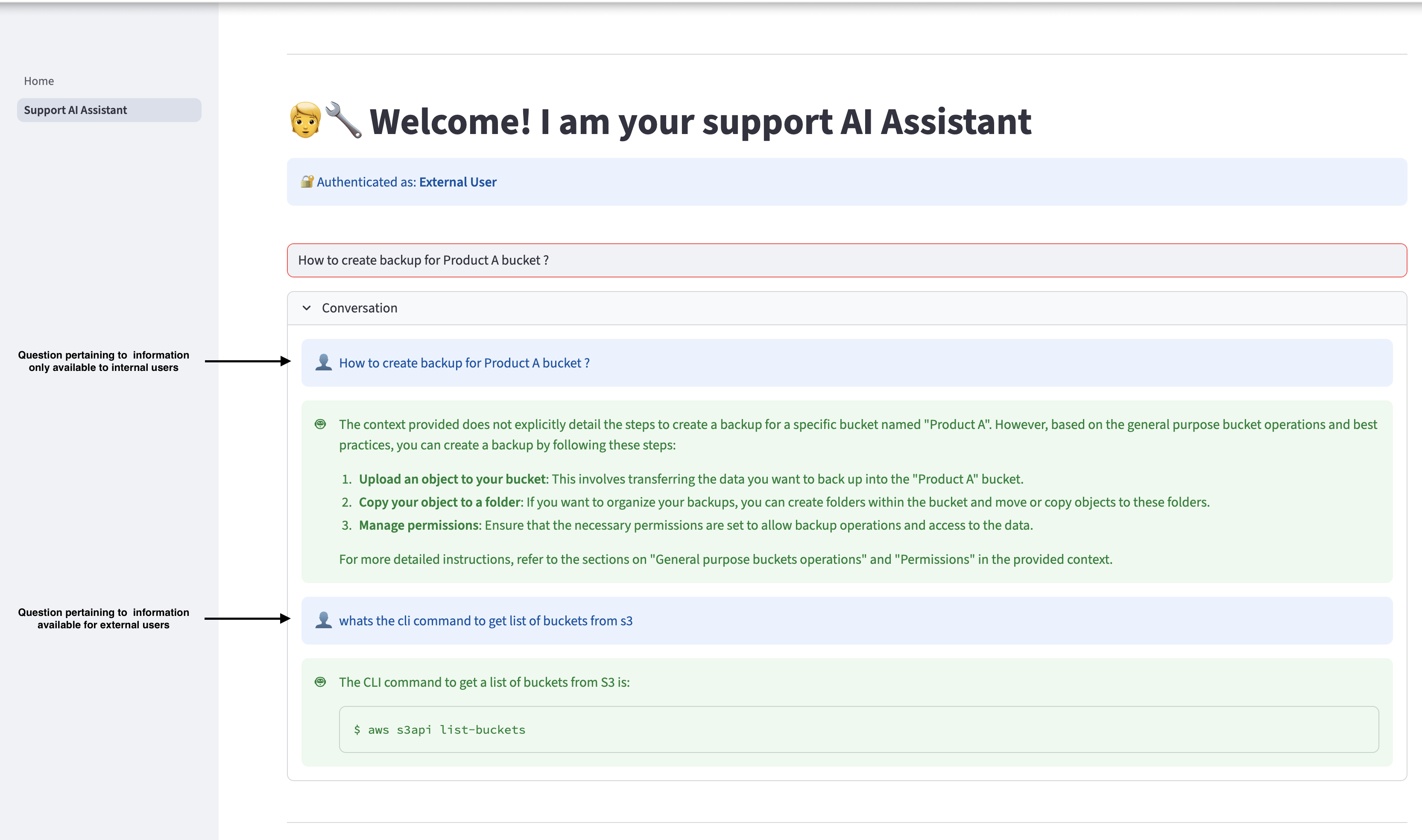

Choose Support AI Assistant in the navigation pane to ask questions related to Amazon S3. The assistant can provide relevant responses based on the information available in the Getting started with Amazon S3 guide. However, if an external user asks a question that’s related to information available only to internal users, the AI assistant will not provide the internal information to the user and will respond only with information available to external users.

Log out and log in again as an internal user, and ask the same queries. The internal user can access the relevant information available in the internal documents.

Clean up

If you decide to stop using this solution, complete the following steps to remove its associated resources:

- Go to the iac directory inside the project code and run the following command from terminal:

- To run a cleanup script, use the next command:

- To carry out this operation manually, use the next command:

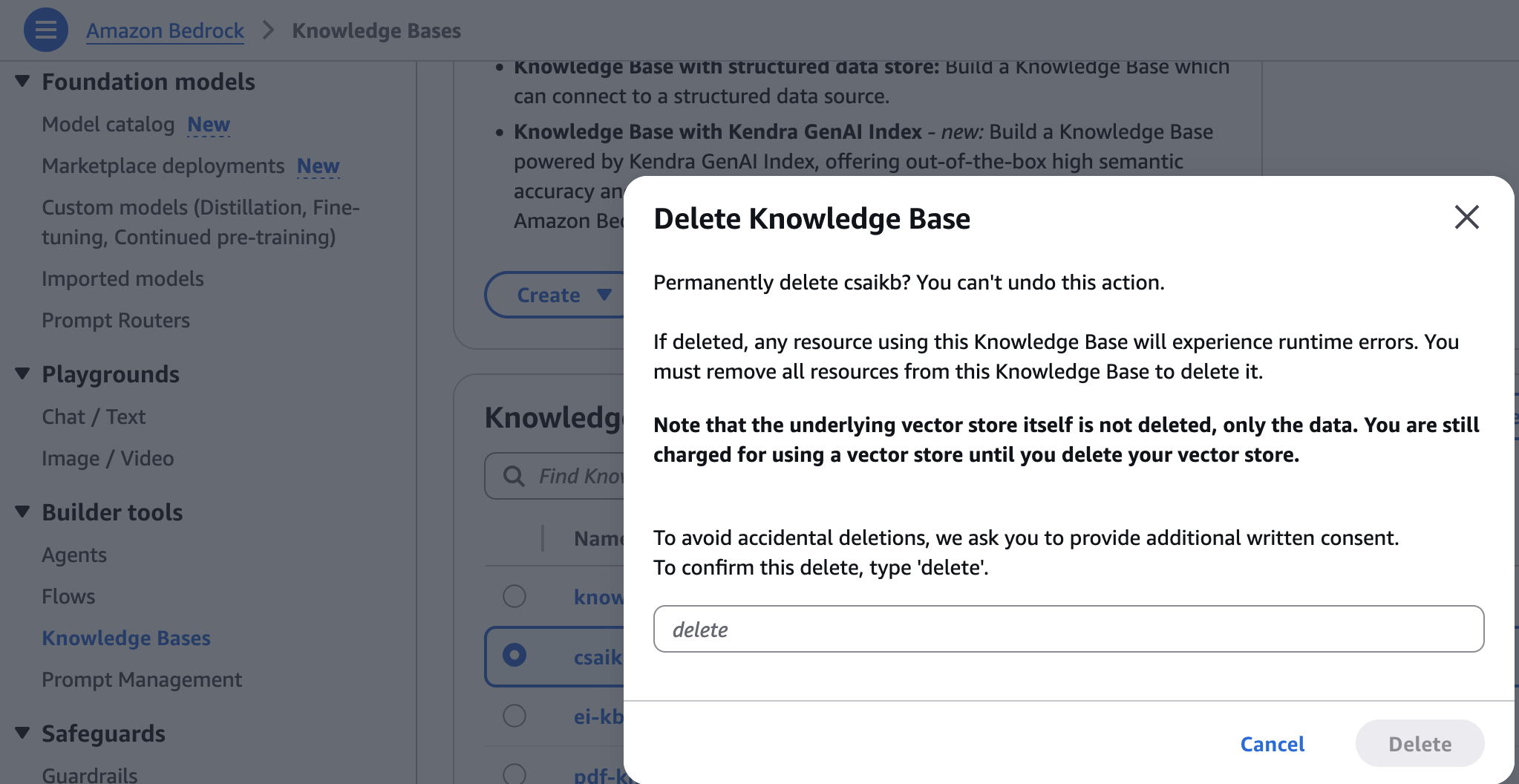

- On the Amazon Bedrock console, choose Knowledge Bases under Builder tools in the navigation pane.

- Choose the knowledge base you created, then choose Delete.

- Enter delete and choose Delete to confirm.

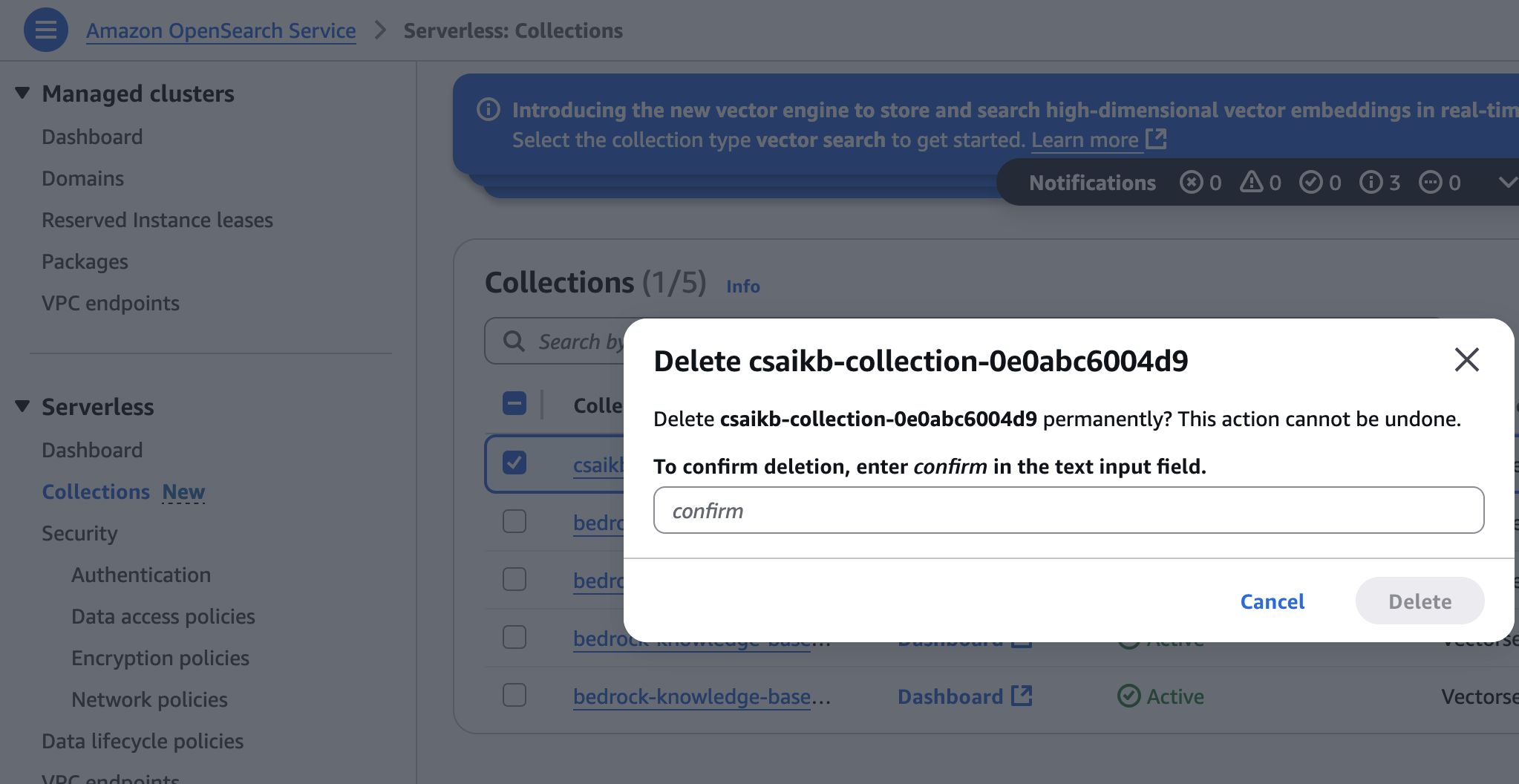

- On the OpenSearch Service console, choose Collections under Serverless in the navigation pane.

- Choose the collection created during infrastructure provisioning, then choose Delete.

- Enter confirm and choose Delete to confirm.
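The two console deletions above could also be scripted. This is a sketch, not part of the solution's cleanup script: the function name is hypothetical, the clients are assumed to be `boto3.client("bedrock-agent")` and `boto3.client("opensearchserverless")`, and the IDs are the ones shown in the respective consoles.

```python
def delete_assistant_resources(agent, aoss, kb_id, collection_id):
    """Delete the knowledge base, then the OpenSearch Serverless collection."""
    deleted = []
    # Remove the knowledge base first, mirroring the console order above.
    agent.delete_knowledge_base(knowledgeBaseId=kb_id)
    deleted.append(("knowledge_base", kb_id))
    # Then remove the vector store collection that backed it.
    aoss.delete_collection(id=collection_id)
    deleted.append(("collection", collection_id))
    return deleted
```

Both deletions are irreversible, so confirm the IDs before running anything like this against a real account.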

Conclusion

This post demonstrated how to create an AI-powered website assistant to retrieve information quickly by constructing a knowledge base through web crawling and uploading documents. You can use the same approach to develop other generative AI prototypes and applications.

If you’re interested in the fundamentals of generative AI and how to work with FMs, including advanced prompting techniques, check out the hands-on course Generative AI with LLMs. This on-demand, 3-week course is for data scientists and engineers who want to learn how to build generative AI applications with LLMs. It’s a good foundation to start building with Amazon Bedrock. Sign up to learn more about Amazon Bedrock.

About the authors

Shashank Jain is a Cloud Application Architect at Amazon Web Services (AWS), specializing in generative AI solutions, cloud-native application architecture, and sustainability. He works with customers to design and implement secure, scalable AI-powered applications using serverless technologies, modern DevSecOps practices, Infrastructure as Code, and event-driven architectures that deliver measurable business value.


Jeff Li is a Senior Cloud Application Architect with the Professional Services team at AWS. He’s passionate about diving deep with customers to create solutions and modernize applications that support business innovations. In his spare time, he enjoys playing tennis, listening to music, and reading.


Ranjith Kurumbaru Kandiyil is a Data and AI/ML Architect at Amazon Web Services (AWS) based in Toronto. He specializes in collaborating with customers to architect and implement cutting-edge AI/ML solutions. His current focus lies in leveraging state-of-the-art artificial intelligence technologies to solve complex business challenges.
