In this article, you'll learn seven practical ways to turn generic LLM embeddings into task-specific, high-signal features that boost downstream model performance.

Topics we'll cover include:

- Building interpretable similarity features with concept anchors

- Reducing, normalizing, and whitening embeddings to cut noise

- Creating interaction, clustering, and synthesized features

Alright, on we go.

7 Advanced Feature Engineering Tricks Using LLM Embeddings

Image by Editor

The Embedding Gap

You have mastered model.encode(text) to turn words into numbers. Now what? This article moves beyond basic embedding extraction to explore seven advanced, practical techniques for transforming raw large language model (LLM) embeddings into powerful, task-specific features for your machine learning models. Using scikit-learn, sentence-transformers, and other standard libraries, we'll translate theory into actionable code.

Modern LLMs like those provided by the sentence-transformers library generate rich, complex vector representations (embeddings) that capture semantic meaning. While using these embeddings directly can improve model performance, there's often a gap between the general semantic knowledge in a basic embedding and the specific signal needed for your unique prediction task.

This is where advanced feature engineering comes in. By creatively processing, comparing, and decomposing these fundamental embeddings, we can extract more specific information, reduce noise, and provide our downstream models (classifiers, regressors, etc.) with features that are far more relevant. The following seven techniques are designed to close that gap.

1. Semantic Similarity as a Feature

Instead of treating an embedding as a single monolithic feature vector, calculate its similarity to key concept embeddings that matter for your problem. This yields understandable, scalar features.

For example, a support-ticket urgency model needs to understand whether a ticket is about "billing," "login failure," or a "feature request." Raw embeddings contain this information, but a simple model can't access it directly.

The solution is to create concept-anchor embeddings for key terms or phrases. For each text, compute the cosine similarity between its embedding and each anchor.

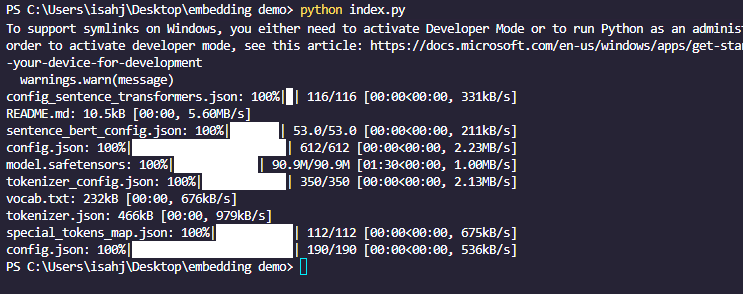

First, install sentence-transformers, scikit-learn, and numpy with pip (note that the scikit-learn package is installed as scikit-learn, not sklearn). The command is the same on Windows and Linux:

```bash
pip install sentence-transformers scikit-learn numpy
```

```python
from sentence_transformers import SentenceTransformer
from sklearn.metrics.pairwise import cosine_similarity
import numpy as np

# Initialize model and encode anchors
model = SentenceTransformer("all-MiniLM-L6-v2")
anchors = ["billing issue", "login problem", "feature request"]
anchor_embeddings = model.encode(anchors)

# Encode a new ticket
new_ticket = ["I can't access my account, it says password invalid."]
ticket_embedding = model.encode(new_ticket)

# Calculate similarity features: one column per anchor
similarity_features = cosine_similarity(ticket_embedding, anchor_embeddings)
print(similarity_features)  # e.g., [[0.1, 0.85, 0.3]] -> high similarity to "login problem"
```

This works because it quantifies relevance, providing the model with focused, human-interpretable signals about content themes.

Choose anchors carefully. They can be derived from domain expertise or via clustering (see Cluster Labels and Distances as Features).
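To use these similarities as training features, the same computation can be applied to a whole batch of texts at once. The sketch below is a minimal illustration, assuming the embeddings have already been computed: random vectors stand in for the output of model.encode, and the helper name anchor_similarity_features is hypothetical.

```python
import numpy as np

def anchor_similarity_features(text_embeddings, anchor_embeddings, eps=1e-12):
    """Return an (n_texts, n_anchors) matrix of cosine similarities."""
    # L2-normalize rows so that a plain dot product equals cosine similarity
    t_norm = np.linalg.norm(text_embeddings, axis=1, keepdims=True)
    a_norm = np.linalg.norm(anchor_embeddings, axis=1, keepdims=True)
    t = text_embeddings / np.maximum(t_norm, eps)
    a = anchor_embeddings / np.maximum(a_norm, eps)
    return t @ a.T

# Stand-in data (assumption): 5 texts and 3 anchors in a 384-dimensional space
rng = np.random.default_rng(0)
anchor_embeddings = rng.normal(size=(3, 384))
text_embeddings = rng.normal(size=(5, 384))
text_embeddings[0] = anchor_embeddings[1]  # make text 0 identical to anchor 1

features = anchor_similarity_features(text_embeddings, anchor_embeddings)
print(features.shape)        # (5, 3)
print(features[0].argmax())  # 1, the anchor that text 0 was copied from
```

Each column of the result can be named after its anchor, which keeps the feature set easy to inspect when you look at model coefficients or feature importances.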

2. Dimensionality Reduction and Denoising

LLM embeddings are high-dimensional (e.g., 384 or 768). Reducing dimensions can remove noise, lower computational cost, and sometimes reveal clearer patterns.

The "curse of dimensionality" means some models (like Random Forests) may perform poorly when many dimensions are uninformative.

The solution is to use scikit-learn's decomposition techniques to project embeddings into a lower-dimensional space.

First define your text dataset:

```python
text_dataset = [
    "I was charged twice for my subscription",
    "Cannot reset my password",
    "Would love to see dark mode added",
    "My invoice shows the wrong amount",
    "Login keeps failing with error 401",
    "Please add export to PDF feature",
]
```

```python
from sklearn.decomposition import PCA, TruncatedSVD
from sklearn.manifold import TSNE  # For visualization, not typically for feature engineering

# 'embeddings' is a numpy array of shape (n_samples, 384)
embeddings = model.encode(text_dataset)

# Method 1: PCA - for linear relationships
# n_components must be <= min(n_samples, n_features)
n_components = min(50, len(text_dataset))
pca = PCA(n_components=n_components)
reduced_pca = pca.fit_transform(embeddings)

# Method 2: TruncatedSVD - similar, works on matrices from TF-IDF as well
svd = TruncatedSVD(n_components=n_components)
reduced_svd = svd.fit_transform(embeddings)

print(f"Original shape: {embeddings.shape}")
print(f"Reduced shape: {reduced_pca.shape}")
print(f"PCA retains {sum(pca.explained_variance_ratio_):.2%} of variance.")
```

The code above works because PCA finds the axes of maximum variance, often capturing the most important semantic information in fewer, uncorrelated dimensions. On the six-ticket dataset, the output is:

```
Original shape: (6, 384)
Reduced shape: (6, 6)
PCA retains 100.00% of variance.
```

With only six samples, six components trivially retain all of the variance; on a realistically sized dataset, expect a trade-off between the number of components and the variance retained. Note that dimensionality reduction is lossy. Always test whether reduced features maintain or improve model performance. PCA is linear; for nonlinear relationships, consider UMAP (but be mindful of its sensitivity to hyperparameters).
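One way to choose the number of components is to keep the fewest that reach a target share of explained variance, and to learn the projection on the training split only so that nothing leaks from the test data. The sketch below does this with a plain SVD in NumPy. The helper names fit_pca and apply_pca, the 95% target, and the synthetic embeddings are all assumptions made for illustration.

```python
import numpy as np

def fit_pca(train, variance_target=0.95):
    """Fit PCA via SVD and keep the fewest components reaching variance_target."""
    mean = train.mean(axis=0)
    # Rows of vt are the principal directions; s holds the singular values
    _, s, vt = np.linalg.svd(train - mean, full_matrices=False)
    ratio = (s ** 2) / np.sum(s ** 2)
    k = int(np.searchsorted(np.cumsum(ratio), variance_target) + 1)
    k = min(k, len(s))
    return mean, vt[:k], ratio[:k]

def apply_pca(x, mean, components):
    """Project rows using statistics learned from the training split only."""
    return (x - mean) @ components.T

# Stand-in embeddings (assumption): 3 latent factors mixed into 50 dimensions
rng = np.random.default_rng(0)
latent = rng.normal(size=(300, 3))
mixing = rng.normal(size=(3, 50))
embeddings = latent @ mixing + 0.01 * rng.normal(size=(300, 50))

train, test = embeddings[:200], embeddings[200:]
mean, components, ratio = fit_pca(train)
train_reduced = apply_pca(train, mean, components)
test_reduced = apply_pca(test, mean, components)
print(train_reduced.shape, test_reduced.shape, f"{ratio.sum():.2%}")
```

scikit-learn's PCA offers the same selection rule when n_components is given as a float between 0 and 1, so in practice the hand-written SVD is mainly useful for seeing what happens under the hood.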

3. Cluster Labels and Distances as Features

Use unsupervised clustering on your collection of embeddings to discover natural thematic groups. Then use the cluster assignments and the distances to cluster centroids as new categorical and continuous features.

The problem: your data may have unknown or emerging categories not captured by predefined anchors (recall the semantic similarity trick). Clustering all document embeddings and then using the results as features addresses this.

```python
from sklearn.cluster import KMeans, DBSCAN
from sklearn.preprocessing import LabelEncoder

# Cluster the embeddings
# n_clusters must be <= n_samples
n_clusters = min(10, len(text_dataset))
kmeans = KMeans(n_clusters=n_clusters, random_state=42)
cluster_labels = kmeans.fit_predict(embeddings)

# Feature 1: Cluster assignment (encode if your model needs numeric input;
# KMeans labels are already integers, so this mainly matters for other label types)
encoder = LabelEncoder()
cluster_id_feature = encoder.fit_transform(cluster_labels)

# Feature 2: Distance to each cluster centroid
distances_to_centroids = kmeans.transform(embeddings)  # Shape: (n_samples, n_clusters)
# 'distances_to_centroids' now has n_clusters new continuous features per sample

# Combine with original embeddings or use alone
enhanced_features = np.hstack([embeddings, distances_to_centroids])
```

This works because it gives the model structural knowledge about the data's natural grouping, which can be highly informative for tasks like classification or anomaly detection.
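At inference time, new samples need the same features, computed against the centroids learned during training. The sketch below illustrates the idea in NumPy with tiny hand-picked values. The function name centroid_features is hypothetical, and the centroids array is a stand-in for what a fitted KMeans model exposes as cluster_centers_.

```python
import numpy as np

def centroid_features(x, centroids):
    """Return distances to every centroid and a one-hot nearest-cluster indicator."""
    # Pairwise Euclidean distances, shape (n_samples, n_clusters)
    distances = np.linalg.norm(x[:, None, :] - centroids[None, :, :], axis=2)
    nearest = distances.argmin(axis=1)
    # One-hot encoding avoids implying an order between cluster ids
    one_hot = np.eye(len(centroids))[nearest]
    return distances, one_hot

# Stand-in values (assumption): 2 centroids learned earlier, 3 new samples
centroids = np.array([[0.0, 0.0], [3.0, 4.0]])
new_samples = np.array([[0.0, 0.0], [3.0, 4.0], [3.0, 0.0]])

distances, one_hot = centroid_features(new_samples, centroids)
print(distances)
# [[0. 5.]
#  [5. 0.]
#  [3. 4.]]
print(one_hot)
# [[1. 0.]
#  [0. 1.]
#  [1. 0.]]
```

The one-hot form is often a safer categorical encoding than a raw integer id for linear models, since cluster numbers carry no ordinal meaning.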

Note: we're using n_clusters = min(10, len(text_dataset)) because we don't have much data. Choosing the number of clusters (k) is critical; use the elbow method or domain knowledge. DBSCAN is an alternative for density-based clustering that doesn't require specifying k.
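The elbow method mentioned above can be sketched as follows: fit KMeans for a range of k values, record the inertia (the sum of squared distances from each sample to its nearest centroid), and look for the point where adding clusters stops paying off. The synthetic three-group data and the range of k values are assumptions chosen to make the elbow obvious.

```python
import numpy as np
from sklearn.cluster import KMeans

# Stand-in embeddings (assumption): three well-separated groups in 8 dimensions
rng = np.random.default_rng(0)
centers = np.array([[0.0] * 8, [10.0] * 8, [-10.0] * 8])
embeddings = np.vstack([c + rng.normal(size=(50, 8)) for c in centers])

# Record inertia for each candidate number of clusters
inertias = {}
for k in range(1, 7):
    km = KMeans(n_clusters=k, n_init=10, random_state=42).fit(embeddings)
    inertias[k] = km.inertia_

for k, value in inertias.items():
    print(k, round(value, 1))
# Look for the k after which the curve flattens (the "elbow")
```

On real embeddings the elbow is usually far less sharp than on this toy data, so it is worth confirming the choice against downstream validation performance.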

4. Text Difference Embeddings

For tasks involving pairs of texts (for example, duplicate-question detection and semantic search relevance), the interaction between embeddings is more important than the embeddings in isolation.

Simply concatenating two embeddings doesn't explicitly model their relationship. A better approach is to create features that encode the difference and element-wise product between embeddings.

```python
# For pairs of texts (e.g., query and document, ticket1 and ticket2)
texts1 = ["I can't log in to my account"]
texts2 = ["Login keeps failing with error 401"]

embeddings1 = model.encode(texts1)
embeddings2 = model.encode(texts2)

# Basic concatenation (baseline)
concatenated = np.hstack([embeddings1, embeddings2])

# Advanced interaction features
absolute_diff = np.abs(embeddings1 - embeddings2)  # Captures magnitude of disagreement
elementwise_product = embeddings1 * embeddings2  # Captures alignment (like a dot product per dimension)

# Combine all for a rich feature set
interaction_features = np.hstack([embeddings1, embeddings2, absolute_diff, elementwise_product])
```

Why does this work? The difference vector highlights where semantic meanings diverge. The product vector is large and positive in the dimensions where they agree. This design is influenced by successful neural network architectures like Siamese Networks used in similarity learning.

This approach roughly quadruples the feature dimension relative to a single embedding (and doubles it relative to plain concatenation). Apply dimensionality reduction (as above) and regularization to control size and noise.
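For a dataset of many pairs, the same features can be built in one vectorized call. The sketch below is a minimal version, assuming two row-aligned embedding arrays. The helper name pair_features is hypothetical and random vectors stand in for encoded texts.

```python
import numpy as np

def pair_features(emb_a, emb_b):
    """Build [a, b, |a - b|, a * b] features for row-aligned embedding pairs."""
    if emb_a.shape != emb_b.shape:
        raise ValueError("Both inputs must have shape (n_pairs, dim)")
    return np.hstack([emb_a, emb_b, np.abs(emb_a - emb_b), emb_a * emb_b])

# Stand-in embeddings (assumption): 4 pairs of 384-dimensional vectors
rng = np.random.default_rng(0)
emb_a = rng.normal(size=(4, 384))
emb_b = rng.normal(size=(4, 384))

features = pair_features(emb_a, emb_b)
print(features.shape)  # (4, 1536)

# The last two blocks do not depend on the order of the pair
swapped = pair_features(emb_b, emb_a)
print(np.allclose(features[:, 768:], swapped[:, 768:]))  # True
```

For symmetric tasks such as duplicate detection, the order-independent blocks are the ones that carry the relationship. The first two blocks still depend on which text comes first, so one option is to drop them or to train on both orderings.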

5. Embedding Whitening Normalization

If the directions of maximum variance in your dataset don't align with the most important semantic axes for your task, whitening can help. Whitening rotates and rescales embeddings so that they have zero mean and identity covariance, which can improve performance in similarity and retrieval tasks.

The problem is the natural anisotropy of embedding spaces (some directions have much more variance than others), which can skew distance calculations.

The solution is to apply PCA whitening using scikit-learn. ZCA whitening is a closely related variant that additionally rotates the result back toward the original axes.

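```python
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# 1. Center the data (zero mean)
# PCA also centers internally; the explicit step is kept for clarity
scaler = StandardScaler(with_std=False)  # We do not scale std yet
embeddings_centered = scaler.fit_transform(embeddings)

# 2. Apply PCA with whitening
# With very few samples, trailing components have near-zero variance and
# whitening amplifies their noise; fit on a larger sample in practice
pca = PCA(whiten=True, n_components=None)  # Keep all components
embeddings_whitened = pca.fit_transform(embeddings_centered)
```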

After whitening, every dimension has zero mean and unit variance, and the dimensions are decorrelated, so cosine similarity is no longer dominated by a handful of high-variance directions.

Why it works: Whitening equalizes the importance of all dimensions, preventing a few high-variance directions from dominating similarity measures. It has been explored as a post-processing step for BERT-style sentence embeddings in semantic similarity and retrieval work.

Fit the whitening transform on a representative sample. Use the same scaler and PCA objects to transform new inference data.
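To make the fit-once, reuse-later pattern concrete, here is a minimal NumPy sketch of whitening that supports both the PCA and ZCA variants. The function names fit_whitening and apply_whitening, the eps stabilizer, and the synthetic correlated data are assumptions for illustration, not part of any library API.

```python
import numpy as np

def fit_whitening(train, eps=1e-8, zca=False):
    """Learn a whitening transform (mean and matrix) from training embeddings."""
    mean = train.mean(axis=0)
    cov = np.cov(train - mean, rowvar=False)
    # Eigen-decomposition of the symmetric covariance matrix
    eigvals, eigvecs = np.linalg.eigh(cov)
    # Rotate onto the principal axes and rescale each axis to unit variance
    w = eigvecs / np.sqrt(eigvals + eps)
    if zca:
        # ZCA rotates back so the output stays close to the original axes
        w = w @ eigvecs.T
    return mean, w

def apply_whitening(x, mean, w):
    """Whiten rows with a previously fitted mean and matrix."""
    return (x - mean) @ w

# Stand-in embeddings (assumption): 8 dimensions with unequal scales and correlation
rng = np.random.default_rng(0)
scales = np.array([5.0, 3.0, 2.0, 1.0, 1.0, 0.5, 0.5, 0.5])

def sample(n):
    x = rng.normal(size=(n, 8)) * scales
    x[:, 1] += 0.8 * x[:, 0]  # introduce correlation between two dimensions
    return x

train, new_data = sample(500), sample(10)

mean, w = fit_whitening(train)
train_white = apply_whitening(train, mean, w)
new_white = apply_whitening(new_data, mean, w)  # reuse the fitted transform

print(np.allclose(np.cov(train_white, rowvar=False), np.eye(8), atol=1e-6))  # True
```

The covariance of the whitened training data is the identity matrix up to numerical error. New data transformed with the same parameters will only be approximately white, which is expected.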

6. Sentence-Level vs. Word-Level Embedding Aggregation

LLMs can embed words, sentences, or paragraphs. For longer documents, strategically aggregating word-level embeddings can capture information that a single document-level embedding might miss. The problem is that a single sentence embedding for a long, multi-topic document can lose fine-grained information.

To address this, use a model that exposes token-level embeddings (e.g., all-MiniLM-L6-v2 with token-level word-piece outputs, or bert-base-uncased from Transformers), then pool the token embeddings.

```python
from transformers import AutoTokenizer, AutoModel
import torch
import numpy as np

# Load a model that provides token embeddings
# (named token_model so it does not shadow the SentenceTransformer 'model' above)
model_name = "bert-base-uncased"
tokenizer = AutoTokenizer.from_pretrained(model_name)
token_model = AutoModel.from_pretrained(model_name)

def get_pooled_embeddings(text, pooling_strategy="mean"):
    inputs = tokenizer(text, return_tensors="pt", truncation=True, padding=True)
    with torch.no_grad():
        outputs = token_model(**inputs)
    token_embeddings = outputs.last_hidden_state  # Shape: (batch, seq_len, hidden_dim)
    attention_mask = inputs["attention_mask"].unsqueeze(-1)  # (batch, seq_len, 1)

    if pooling_strategy == "mean":
        # Masked mean to ignore padding tokens
        masked = token_embeddings * attention_mask
        summed = masked.sum(dim=1)
        counts = attention_mask.sum(dim=1).clamp(min=1)
        return (summed / counts).squeeze(0).numpy()
    elif pooling_strategy == "max":
        # Very negative number for masked positions
        masked = token_embeddings.masked_fill(attention_mask == 0, -1e9)
        return masked.max(dim=1).values.squeeze(0).numpy()
    elif pooling_strategy == "cls":
        return token_embeddings[:, 0, :].squeeze(0).numpy()
    raise ValueError(f"Unknown pooling strategy: {pooling_strategy}")

# Example: Get mean of non-padding token embeddings
doc_embedding = get_pooled_embeddings("A long document about multiple topics.")
```

Why it works: Mean pooling averages out noise, while max pooling highlights the most salient features. For tasks where specific keywords are critical (e.g., sentiment from "wonderful" vs. "horrible"), this can be more effective than standard sentence embeddings.

Note that this can be computationally heavier than sentence-transformers. It also requires careful handling of padding and attention masks. The [CLS] token embedding is often fine-tuned for specific tasks but may be less general as a feature.
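The role of the attention mask is easiest to see on a tiny hand-made batch. The sketch below reimplements masked mean and max pooling in NumPy on toy numbers so the results can be checked by hand. The function name pool_tokens and the toy arrays are assumptions, and real token embeddings would come from a model such as the one above.

```python
import numpy as np

def pool_tokens(token_embeddings, attention_mask, strategy="mean"):
    """Pool (batch, seq_len, dim) token embeddings into (batch, dim) vectors."""
    mask = attention_mask[:, :, None].astype(bool)  # (batch, seq_len, 1)
    if strategy == "mean":
        summed = np.where(mask, token_embeddings, 0.0).sum(axis=1)
        counts = np.maximum(mask.sum(axis=1), 1)  # avoid division by zero
        return summed / counts
    if strategy == "max":
        # Padding positions are set to -inf so they can never win the max
        return np.where(mask, token_embeddings, -np.inf).max(axis=1)
    raise ValueError(f"Unknown pooling strategy: {strategy}")

# Toy batch (assumption): 2 texts, 3 token slots, 2 dimensions; 0 marks padding
token_embeddings = np.array([
    [[1.0, 4.0], [3.0, 2.0], [9.0, 9.0]],  # third slot is padding
    [[2.0, 0.0], [4.0, 6.0], [6.0, 3.0]],
])
attention_mask = np.array([[1, 1, 0], [1, 1, 1]])

mean_pooled = pool_tokens(token_embeddings, attention_mask, "mean")
max_pooled = pool_tokens(token_embeddings, attention_mask, "max")
print(mean_pooled)
# [[2. 3.]
#  [4. 3.]]
print(max_pooled)
# [[3. 4.]
#  [6. 6.]]
```

Without the mask, the padding vector [9, 9] in the first text would inflate both the mean and the max, which is exactly the kind of silent error that careful mask handling prevents.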

7. Embeddings as Input for Feature Synthesis (AutoML)

Let automated feature engineering tools treat your embeddings as raw input to discover complex, non-linear interactions you might not consider manually. Manually engineering interactions between hundreds of embedding dimensions is impractical.

One practical approach is to use scikit-learn's PolynomialFeatures on reduced-dimension embeddings.

```python
from sklearn.preprocessing import PolynomialFeatures
from sklearn.decomposition import PCA

# Start with reduced embeddings to avoid explosion
# n_components must be <= min(n_samples, n_features)
n_components_poly = min(20, len(text_dataset))
pca = PCA(n_components=n_components_poly)
embeddings_reduced = pca.fit_transform(embeddings)

# Generate polynomial and interaction features up to degree 2
poly = PolynomialFeatures(degree=2, interaction_only=False, include_bias=False)
synthesized_features = poly.fit_transform(embeddings_reduced)

print(f"Reduced dimensions: {embeddings_reduced.shape[1]}")
print(f"Synthesized features: {synthesized_features.shape[1]}")  # n + n*(n+1)/2 features
```

This code automatically creates features representing pairwise interactions between the semantic directions captured by the principal components of your embeddings.

Because this can lead to feature explosion and overfitting, always use strong regularization (L1/L2) and rigorous validation. Apply it only after significant dimensionality reduction.
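The scale of the explosion is easy to quantify. For n inputs, degree-2 expansion without a bias term yields n linear terms plus n*(n+1)/2 squares and pairwise products. The short sketch below computes that count for a few input sizes and cross-checks it by enumerating the terms. The function names are hypothetical.

```python
from itertools import combinations_with_replacement

def degree2_feature_count(n):
    """Number of degree-2 polynomial features (no bias) for n inputs."""
    # n linear terms plus n*(n+1)/2 squares and pairwise products
    return n + n * (n + 1) // 2

def enumerate_degree2_terms(n):
    """List the index tuples behind each generated feature, as a cross-check."""
    linear = [(i,) for i in range(n)]
    quadratic = list(combinations_with_replacement(range(n), 2))
    return linear + quadratic

for n in (5, 20, 50, 384):
    print(n, degree2_feature_count(n))
# 5 20
# 20 230
# 50 1325
# 384 74304
```

Expanding a raw 384-dimensional embedding would produce more than 74,000 features, while 20 principal components produce 230, which is why reduction should come first.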

Conclusion

In this article you have learned that advanced feature engineering with LLM embeddings is a structured, iterative process of:

- Understanding your problem's semantic needs

- Transforming raw embeddings into targeted signals (similarity, clusters, differences)

- Optimizing the representation (normalization, reduction)

- Synthesizing new interactions cautiously

Start by integrating one or two of these techniques into your existing pipeline. For example, combine Trick 1 (Semantic Similarity) with Trick 2 (Dimensionality Reduction) to create a powerful, interpretable feature set. Monitor validation performance carefully to see what works for your specific domain and data.

The goal is to move from seeing an LLM embedding as a black-box vector to treating it as a rich, structured semantic foundation from which you can sculpt precise features that give your models a decisive edge.