This post shows you how to build a scalable multimodal video search system that enables natural language search across large video datasets using Amazon Nova models and Amazon OpenSearch Service. You'll learn how to move beyond manual tagging and keyword-based searches to enable semantic search that captures the full richness of video content.

We demonstrate this at scale by processing 792,270 videos from two AWS Open Data Registry datasets: Multimedia Commons (787,479 videos, 37-second average) and MEVA (4,791 videos, 5-minute average). Processing 8,480 hours of video content (30.5M seconds) took 41 hours. First-year total cost: $27,328 (with OpenSearch on-demand) or $23,632 (with OpenSearch Service Reserved Instances). The cost consisted of one-time ingestion ($18,088) and annual Amazon OpenSearch Service ($9,240 on-demand or $5,544 Reserved).

The ingestion breakdown is as follows:

- Amazon Elastic Compute Cloud (Amazon EC2) compute (4× c7i.48xlarge spot at $2.57/hour × 41 hours): $421

- Amazon Bedrock Nova Multimodal Embeddings (30.5M seconds × $0.00056/second batch pricing): $17,096

- Nova Pro tagging (792K videos × 600 tokens (avg.)): $571
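The totals above can be reproduced from the quoted rates. The sketch below is only a sanity check of that arithmetic: every constant is a figure stated in this post, the tagging cost is taken as given because no per-token price is quoted, and the function name is illustrative.

```python
# All constants are figures quoted in this post; nothing here is measured.
EC2_INSTANCES = 4
EC2_SPOT_USD_PER_HOUR = 2.57
INGESTION_HOURS = 41
VIDEO_HOURS = 8_480
EMBEDDING_USD_PER_SECOND = 0.00056   # batch pricing
TAGGING_USD = 571                    # taken as given; per-token price is not stated
OPENSEARCH_ON_DEMAND_USD = 9_240
OPENSEARCH_RESERVED_USD = 5_544


def ingestion_cost():
    """Return the one-time ingestion cost split into its three components."""
    ec2 = EC2_INSTANCES * EC2_SPOT_USD_PER_HOUR * INGESTION_HOURS
    embeddings = VIDEO_HOURS * 3600 * EMBEDDING_USD_PER_SECOND
    total = ec2 + embeddings + TAGGING_USD
    return {"ec2": ec2, "embeddings": embeddings, "tagging": TAGGING_USD, "total": total}


costs = ingestion_cost()
first_year_on_demand = costs["total"] + OPENSEARCH_ON_DEMAND_USD
first_year_reserved = costs["total"] + OPENSEARCH_RESERVED_USD
```

Note that the embedding line uses the exact 8,480 hours (30,528,000 seconds) rather than the rounded 30.5M seconds, which is why it lands on $17,096.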

The solution generates audio-visual embeddings using AUDIO_VIDEO_COMBINED mode (see Nova Multimodal Embeddings API schema), stores them in OpenSearch Service, and supports text-to-video, video-to-video, and hybrid search.

Solution overview

The architecture consists of two main workflows, ingestion and search, that work together to enable multimodal video search at scale:

Video ingestion pipeline:

The ingestion pipeline uses four Amazon EC2 c7i.48xlarge instances with 600 parallel workers to process 19,400 videos per hour. The async API has a concurrency limit of 30 concurrent jobs per account (see Amazon Bedrock quotas), so the pipeline implements a job queue with polling. Workers submit jobs up to the concurrency limit, poll for completion, and submit new jobs as slots become available. Amazon Nova Multimodal Embeddings handles video processing asynchronously, segmenting videos into 15-second chunks (optimized for capturing scene changes while keeping embedding counts manageable) and generating 1024-dimensional embeddings. We chose 1024-dimensional embeddings over 3072-dimensional ones because they cut storage cost by 3x with minimal accuracy impact. The embedding generation cost does not depend on the embedding dimension. Amazon Nova Pro adds 10-15 descriptive tags per video from a predefined taxonomy.
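The 3x storage figure follows from vector width alone. Here is a rough sketch, assuming 4-byte float32 components and assuming that a trailing partial chunk still produces its own segment (the service's exact handling of the last chunk is not stated here). It counts raw vector bytes only, so HNSW graph links, replicas, and stored fields come on top of it.

```python
import math

BYTES_PER_FLOAT32 = 4  # assumption: vectors are stored as 32-bit floats


def segments_for(duration_seconds, segment_seconds=15):
    """Segments needed to cover a video, assuming a partial last chunk counts."""
    return max(1, math.ceil(duration_seconds / segment_seconds))


def raw_vector_bytes(num_segments, dimension):
    """Raw bytes for the vectors alone, excluding index overhead."""
    return num_segments * dimension * BYTES_PER_FLOAT32


# A 37-second clip (the Multimedia Commons average) under these assumptions:
clip_segments = segments_for(37)
bytes_1024 = raw_vector_bytes(clip_segments, 1024)
bytes_3072 = raw_vector_bytes(clip_segments, 3072)
```

Under these assumptions each 1024-dimensional vector takes 4 KB, and the 3072-dimensional equivalent takes exactly three times that.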

Note: Amazon Nova 2 Lite offers improved accuracy at lower cost for tagging tasks. We recommend that you consider it for new deployments. The system stores embeddings in an OpenSearch k-NN index for semantic search and metadata tags in a separate text index for keyword matching. For search, you can query videos three ways: convert natural language to embeddings for text-to-video search, compare video embeddings directly for video-to-video search, or combine both approaches in hybrid search.

Types of searches enabled by this solution:

- Text-to-video search – Natural language queries converted to embeddings for semantic similarity matching

- Video-to-video search – Find similar content by comparing video embeddings directly

- Hybrid search – Combines vector similarity (70% weight) with keyword matching (30% weight) for optimal accuracy

Video ingestion pipeline

The following diagram illustrates the video ingestion and processing pipeline:

Figure 1: Video ingestion pipeline showing the flow from S3 video storage through Nova Multimodal Embeddings and Nova Pro to dual OpenSearch indexes

The video processing workflow is as follows:

- Upload videos to Amazon Simple Storage Service (Amazon S3).

- Process videos using the Nova Multimodal Embeddings async API, which automatically segments videos and generates embeddings. An orchestrator polls for job completion (the async API has a limit of 30 concurrent jobs per account, see Amazon Bedrock quotas) and retrieves results from Amazon S3.

- Generate descriptive tags using Nova Pro (or Nova Lite for higher accuracy at lower cost) from a predefined taxonomy for enhanced search capabilities.

- Index embeddings in the OpenSearch k-NN index and tags in the text index.

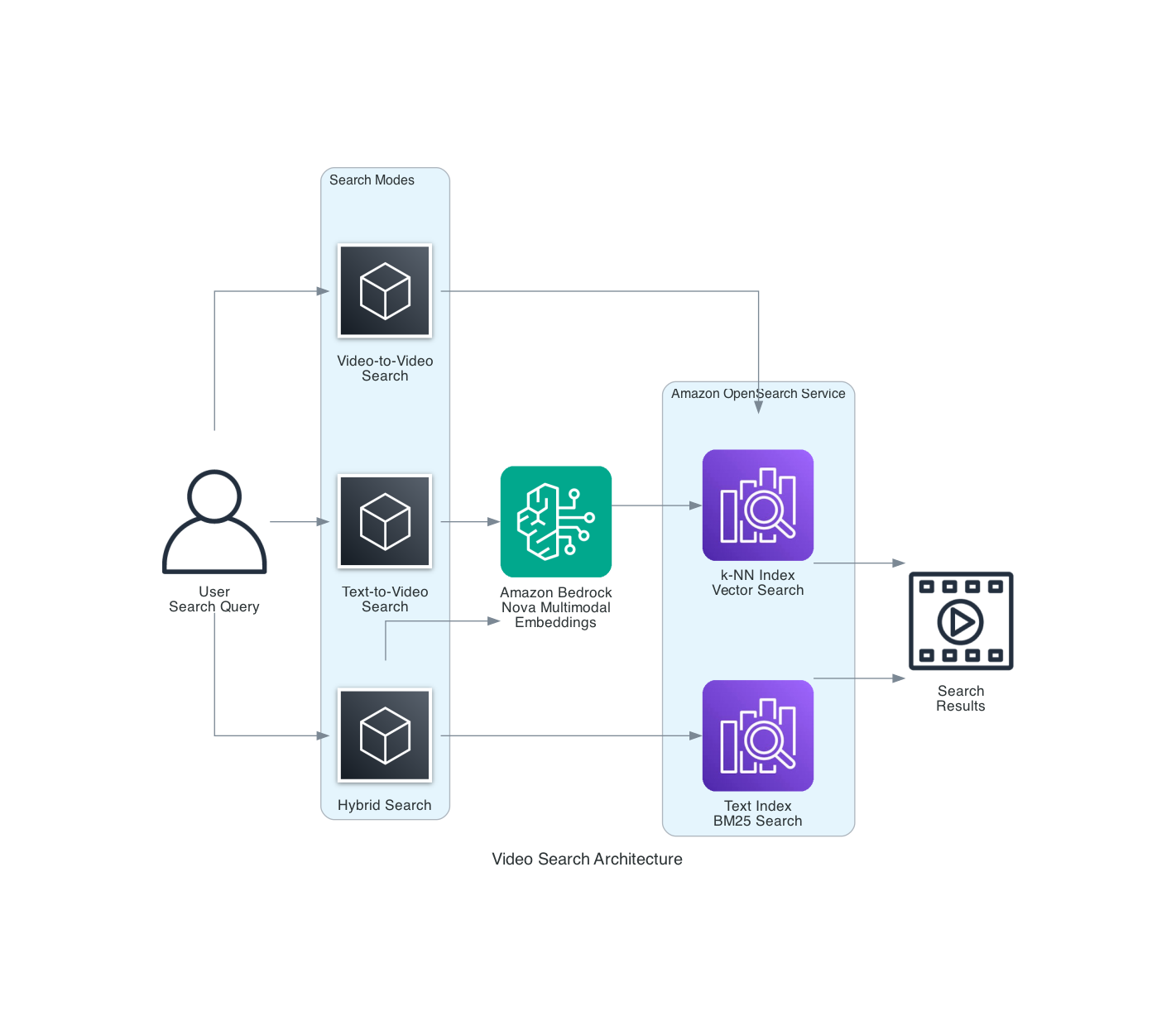

Video search architecture

The following diagram shows the complete search architecture:

Figure 2: Video search architecture demonstrating three search modes – text-to-video, video-to-video, and hybrid search combining k-NN and BM25

The search architecture enables three modes:

- Text-to-video – Natural language queries

- Video-to-video – Similar content discovery

- Hybrid – Combined semantic and keyword matching

Prerequisites

Before you begin, you need:

- An AWS account with access to Amazon Bedrock in us-east-1 (Nova models are enabled by default with appropriate IAM permissions)

- Python 3.9 or later installed

- AWS Command Line Interface (AWS CLI) configured with appropriate credentials

- An Amazon OpenSearch Service domain (r6g.large or larger recommended)

- An Amazon S3 bucket for video storage and embedding outputs

- AWS Identity and Access Management (IAM) permissions for Amazon Bedrock, OpenSearch Service, and Amazon S3

The solution uses:

- Amazon Bedrock with Nova Multimodal Embeddings (amazon.nova-2-multimodal-embeddings-v1:0)

- Amazon Bedrock with Nova Pro (us.amazon.nova-pro-v1:0) or Nova Lite (us.amazon.nova-2-lite-v1:0) for tagging

- Amazon OpenSearch Service 2.11 or later with k-NN plugin

- Amazon S3 for video and embedding storage

Walkthrough

Step 1: Create IAM roles and policies

Create an IAM role with permissions to invoke Amazon Bedrock models, write to OpenSearch indexes, and read/write S3 objects.

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "bedrock:InvokeModel",
        "bedrock:StartAsyncInvoke",
        "bedrock:GetAsyncInvoke",
        "bedrock:ListAsyncInvoke"
      ],
      "Resource": [
        "arn:aws:bedrock:us-east-1::foundation-model/amazon.nova-2-multimodal-embeddings-v1:0",
        "arn:aws:bedrock:us-east-1::foundation-model/us.amazon.nova-pro-v1:0"
      ]
    },
    {
      "Effect": "Allow",
      "Action": [
        "es:ESHttpPost",
        "es:ESHttpPut",
        "es:ESHttpGet"
      ],
      "Resource": "arn:aws:es:us-east-1:ACCOUNT_ID:domain/DOMAIN_NAME/*"
    },
    {
      "Effect": "Allow",
      "Action": [
        "s3:GetObject",
        "s3:PutObject"
      ],
      "Resource": [
        "arn:aws:s3:::amzn-s3-demo-video-bucket/*",
        "arn:aws:s3:::amzn-s3-demo-embedding-bucket/*"
      ]
    }
  ]
}

Step 2: Set up OpenSearch Service indexes

Create two OpenSearch Service indexes: one for vector embeddings (k-NN) and one for text metadata. This architecture supports semantic search and hybrid queries.
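Creating an index that already exists raises an error, so a setup script that may be rerun can benefit from a guard. The helper below is a minimal sketch rather than part of the original pipeline: `ensure_index` is a hypothetical name, and it relies only on the `indices.exists` and `indices.create` calls of the opensearch-py client.

```python
def ensure_index(client, index_name, index_body):
    """Create the index only if it is missing. Returns True when it was created."""
    if client.indices.exists(index=index_name):
        return False
    client.indices.create(index=index_name, body=index_body)
    return True
```

With a guard like this, the two create calls in this step could be wrapped so that a second run of the setup script leaves existing indexes untouched.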

from opensearchpy import OpenSearch, RequestsHttpConnection
from requests_aws4auth import AWS4Auth
import boto3

session = boto3.Session()
credentials = session.get_credentials()

# Sign requests to the OpenSearch Service domain with SigV4
awsauth = AWS4Auth(
    credentials.access_key,
    credentials.secret_key,
    session.region_name,
    'es',
    session_token=credentials.token
)

opensearch_client = OpenSearch(
    hosts=[{'host': 'YOUR_OPENSEARCH_ENDPOINT', 'port': 443}],
    http_auth=awsauth,
    use_ssl=True,
    verify_certs=True,
    connection_class=RequestsHttpConnection
)

# Create k-Nearest Neighbors (k-NN) index for embeddings
knn_index_body = {
    "settings": {
        "index.knn": True,
        "number_of_shards": 2,
        "number_of_replicas": 1
    },
    "mappings": {
        "properties": {
            "video_id": {"type": "keyword"},
            "segment_index": {"type": "integer"},
            "timestamp": {"type": "float"},
            "embedding": {
                "type": "knn_vector",
                "dimension": 1024,
                "method": {
                    "name": "hnsw",
                    # OpenSearch k-NN names cosine similarity "cosinesimil";
                    # confirm that your engine and version support it.
                    "space_type": "cosinesimil",
                    "engine": "faiss"
                }
            },
            "s3_uri": {"type": "keyword"}
        }
    }
}

opensearch_client.indices.create(
    index="video-embeddings-knn",
    body=knn_index_body
)

# Create text index for metadata
text_index_body = {
    "settings": {
        "number_of_shards": 2,
        "number_of_replicas": 1
    },
    "mappings": {
        "properties": {
            "video_id": {"type": "keyword"},
            "segment_index": {"type": "integer"},
            "tags": {"type": "text", "analyzer": "standard"}
        }
    }
}

opensearch_client.indices.create(
    index="video-embeddings-text",
    body=text_index_body
)

Step 3: Process videos with Nova Multimodal Embeddings

The Amazon Bedrock async API processes videos and generates embeddings. It segments videos into 15-second chunks and combines audio and visual information.

import boto3
import json
import time

bedrock = boto3.client('bedrock-runtime', region_name="us-east-1")

def generate_video_embeddings(video_s3_uri, output_s3_uri):
    """Generate embeddings for a video using Nova MME async API."""
    # Start async job
    response = bedrock.start_async_invoke(
        modelId="amazon.nova-2-multimodal-embeddings-v1:0",
        modelInput={
            "taskType": "SEGMENTED_EMBEDDING",
            "segmentedEmbeddingParams": {
                "embeddingPurpose": "GENERIC_INDEX",
                "embeddingDimension": 1024,
                "video": {
                    "format": "mp4",
                    "embeddingMode": "AUDIO_VIDEO_COMBINED",
                    "source": {"s3Location": {"uri": video_s3_uri}},
                    "segmentationConfig": {"durationSeconds": 15}
                }
            }
        },
        outputDataConfig={"s3OutputDataConfig": {"s3Uri": output_s3_uri}}
    )

    # Poll for completion
    invocation_arn = response["invocationArn"]
    while True:
        job = bedrock.get_async_invoke(invocationArn=invocation_arn)
        if job["status"] == "Completed":
            return read_embeddings_from_s3(job["outputDataConfig"]["s3OutputDataConfig"]["s3Uri"])
        elif job["status"] in ["Failed", "Expired"]:
            raise RuntimeError(f"Job failed: {job.get('failureMessage')}")
        time.sleep(10)

def manage_concurrent_jobs(bedrock_client, video_queue, max_concurrent=30):
    """Manage 30 concurrent async jobs within quota limits."""
    active_jobs = {}  # invocation ARN -> video_info

    while video_queue or active_jobs:
        # Submit new jobs up to limit (uses same start_async_invoke call as above)
        while len(active_jobs) < max_concurrent and video_queue:
            video_info = video_queue.pop(0)
            response = bedrock_client.start_async_invoke(
                modelId="amazon.nova-2-multimodal-embeddings-v1:0",
                modelInput={...},  # Same model_input structure as generate_video_embeddings()
                outputDataConfig={"s3OutputDataConfig": {"s3Uri": video_info['output_uri']}}
            )
            active_jobs[response["invocationArn"]] = video_info

        # Poll all active jobs (copy the keys because jobs are removed while iterating)
        for arn in list(active_jobs.keys()):
            job = bedrock_client.get_async_invoke(invocationArn=arn)
            if job["status"] == "Completed":
                video_info = active_jobs.pop(arn)
                embeddings = read_embeddings_from_s3(job["outputDataConfig"]["s3OutputDataConfig"]["s3Uri"])
                # Process embeddings...
            elif job["status"] in ["Failed", "Expired"]:
                active_jobs.pop(arn)

        # Wait before the next polling round
        if active_jobs:
            time.sleep(10)

def read_embeddings_from_s3(s3_uri):
    """Read JSONL embeddings from S3. Returns list of {startTime, endTime, embedding} dicts."""
    # Download and parse JSONL from s3_uri (standard S3 GetObject + json.loads per line)
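The body of `read_embeddings_from_s3` is left as a comment above. The sketch below shows one way the "GetObject plus `json.loads` per line" step could look. It is an assumption-laden illustration: the helper names are hypothetical, the record fields are whatever each JSONL line contains, and the URI passed in is assumed to point at a single JSONL object (the job's output URI may be a prefix, in which case the object key has to be resolved first).

```python
import json
from urllib.parse import urlparse


def split_s3_uri(s3_uri):
    """Split 's3://bucket/key' into (bucket, key)."""
    parsed = urlparse(s3_uri)
    if parsed.scheme != "s3" or not parsed.netloc:
        raise ValueError(f"Not an S3 URI: {s3_uri}")
    return parsed.netloc, parsed.path.lstrip("/")


def parse_embedding_jsonl(text):
    """Parse JSONL text into a list of dicts, skipping blank lines."""
    records = []
    for line in text.splitlines():
        line = line.strip()
        if line:
            records.append(json.loads(line))
    return records


def read_jsonl_object(s3_uri, s3_client=None):
    """Download one JSONL object and parse it (hypothetical helper)."""
    if s3_client is None:
        import boto3  # imported lazily so the parsing helpers work without boto3
        s3_client = boto3.client("s3")
    bucket, key = split_s3_uri(s3_uri)
    body = s3_client.get_object(Bucket=bucket, Key=key)["Body"].read()
    return parse_embedding_jsonl(body.decode("utf-8"))
```

Keeping the parsing separate from the download makes it possible to check the record handling against a saved output file before running it against S3.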

Step 4: Generate metadata tags with Nova Pro or Nova Lite

Generate descriptive tags for videos using Nova Pro (or Nova Lite for higher accuracy at lower cost) to enable hybrid search that combines semantic and keyword matching.

VALID_TAGS = [
    "person", "vehicle", "animal", "building", "nature", "indoor", "outdoor",
    "walking", "running", "sitting", "standing", "talking", "driving",
    "day", "night", "sunny", "cloudy", "urban", "rural", "beach", "forest",
    "sports", "music", "food", "technology", "crowd", "solo"
]

def generate_tags(video_s3_uri, sample_frame_count=3):
    """Generate descriptive tags using Nova Pro or Nova Lite."""
    prompt = f"""Analyze this video and select 10-15 tags from this predefined list that best describe the content:
{', '.join(VALID_TAGS)}
Only return tags from this list as a comma-separated list. Do not invent new tags."""

    response = bedrock.converse(
        modelId="us.amazon.nova-pro-v1:0",  # Or use us.amazon.nova-2-lite-v1:0
        messages=[{
            "role": "user",
            "content": [{
                "video": {
                    "format": "mp4",
                    "source": {"s3Location": {"uri": video_s3_uri}}
                }
            }, {
                "text": prompt
            }]
        }]
    )

    # Parse tags from response and validate against taxonomy
    tags_text = response['output']['message']['content'][0]['text']
    tags = [tag.strip().lower() for tag in tags_text.split(',')]

    # Filter to only valid tags from our taxonomy
    valid_tags = [tag for tag in tags if tag in VALID_TAGS]
    return valid_tags

Step 5: Index embeddings and tags in OpenSearch Service

Store the generated embeddings and tags in OpenSearch Service using bulk indexing for efficiency.

from opensearchpy import helpers

def index_video_data(video_id, s3_uri, embeddings, tags):
    """Index embeddings and tags in OpenSearch."""
    # Prepare bulk actions for k-NN index (one document per segment)
    knn_actions = []
    for idx, emb in enumerate(embeddings):
        doc_id = f"{video_id}_{idx}"
        knn_actions.append({
            "_index": "video-embeddings-knn",
            "_id": doc_id,
            "_source": {
                "video_id": video_id,
                "segment_index": idx,
                # Key matches the {startTime, endTime, embedding} records
                # documented for read_embeddings_from_s3()
                "timestamp": emb['startTime'],
                "embedding": emb['embedding'],
                "s3_uri": s3_uri
            }
        })

    # Bulk index embeddings
    helpers.bulk(opensearch_client, knn_actions)

    # Prepare bulk actions for text index (same IDs, so results can be joined)
    text_actions = []
    for idx in range(len(embeddings)):
        doc_id = f"{video_id}_{idx}"
        text_actions.append({
            "_index": "video-embeddings-text",
            "_id": doc_id,
            "_source": {
                "video_id": video_id,
                "segment_index": idx,
                "tags": " ".join(tags)
            }
        })

    # Bulk index tags
    helpers.bulk(opensearch_client, text_actions)
    print(f"Indexed {len(embeddings)} segments for video {video_id}")

Step 6: Implement search functionality

After ingestion completes, search the indexed videos three ways. The implementation targets low-latency queries.

Initialize OpenSearch Service client for search

First, create the OpenSearch Service client for search operations:

from opensearchpy import OpenSearch, RequestsHttpConnection
from requests_aws4auth import AWS4Auth
import boto3

def create_opensearch_client():
    """Create OpenSearch client with AWS authentication."""
    session = boto3.Session(region_name="us-east-1")
    credentials = session.get_credentials()
    awsauth = AWS4Auth(
        credentials.access_key,
        credentials.secret_key,
        'us-east-1',
        'es',
        session_token=credentials.token
    )
    return OpenSearch(
        hosts=[{'host': 'YOUR_OPENSEARCH_ENDPOINT', 'port': 443}],
        http_auth=awsauth,
        use_ssl=True,
        verify_certs=True,
        connection_class=RequestsHttpConnection,
        timeout=30
    )

# Create client
opensearch_client = create_opensearch_client()

Text-to-video semantic search

Convert natural language queries to embeddings using the sync API, then perform a k-NN similarity search:

import json
import boto3

def search_text_to_video(query_text, opensearch_client, k=10):
    """Search videos using natural language query converted to embedding."""
    bedrock_client = boto3.client('bedrock-runtime', region_name="us-east-1")

    # Use SINGLE_EMBEDDING task type for text-to-embedding conversion
    # VIDEO_RETRIEVAL purpose optimizes embeddings for searching video content
    request_body = {
        "taskType": "SINGLE_EMBEDDING",
        "singleEmbeddingParams": {
            "embeddingPurpose": "VIDEO_RETRIEVAL",
            "embeddingDimension": 1024,
            "text": {
                "truncationMode": "END",
                "value": query_text
            }
        }
    }

    response = bedrock_client.invoke_model(
        modelId='amazon.nova-2-multimodal-embeddings-v1:0',
        body=json.dumps(request_body),
        accept="application/json",
        contentType="application/json"
    )
    response_body = json.loads(response['body'].read())

    # Response structure: {"embeddings": [{"embeddingType": "TEXT", "embedding": [...]}]}
    query_embedding = response_body['embeddings'][0]['embedding']

    # Perform k-NN search against video embeddings
    search_body = {
        "query": {
            "knn": {
                "embedding": {
                    "vector": query_embedding,
                    "k": k
                }
            }
        },
        "size": k,
        "_source": ["video_id", "segment_index", "timestamp", "s3_uri"]
    }

    response = opensearch_client.search(
        index="video-embeddings-knn",
        body=search_body
    )

    # Extract results
    return [{'score': hit['_score'],
             'video_id': hit['_source']['video_id'],
             'segment_index': hit['_source']['segment_index'],
             'timestamp': hit['_source'].get('timestamp', 0)}
            for hit in response['hits']['hits']]
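The hybrid search example later in this step calls `generate_query_embedding(query_text)` without defining it. A minimal sketch of such a helper is shown below, reusing the same request body and response parsing as the text-to-video function. The optional `bedrock_client` and `dimension` parameters are additions for illustration, so that a client can be reused or substituted.

```python
import json

EMBEDDING_MODEL_ID = "amazon.nova-2-multimodal-embeddings-v1:0"


def generate_query_embedding(query_text, bedrock_client=None, dimension=1024):
    """Convert a text query to an embedding with the sync InvokeModel API."""
    if bedrock_client is None:
        import boto3  # imported lazily; only needed when no client is supplied
        bedrock_client = boto3.client("bedrock-runtime", region_name="us-east-1")

    request_body = {
        "taskType": "SINGLE_EMBEDDING",
        "singleEmbeddingParams": {
            "embeddingPurpose": "VIDEO_RETRIEVAL",
            "embeddingDimension": dimension,
            "text": {"truncationMode": "END", "value": query_text},
        },
    }
    response = bedrock_client.invoke_model(
        modelId=EMBEDDING_MODEL_ID,
        body=json.dumps(request_body),
        accept="application/json",
        contentType="application/json",
    )
    payload = json.loads(response["body"].read())
    return payload["embeddings"][0]["embedding"]
```

The query dimension has to match the dimension used at indexing time (1024 in this post), otherwise the k-NN query is rejected.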

Text search with BM25 (keyword matching)

Use the OpenSearch BM25 scoring for keyword matching on tags without generating embeddings:

def search_text_bm25(search_term, opensearch_client, k=10):
    """Search videos using BM25 keyword matching on tags field."""
    # Search text index using match query on tags
    search_body = {
        "query": {
            "match": {
                "tags": search_term
            }
        },
        "size": k,
        "_source": ["video_id", "segment_index", "tags"]
    }

    response = opensearch_client.search(
        index="video-embeddings-text",
        body=search_body
    )
    return response['hits']['hits']  # Extract results (same pattern as above)

Video-to-video search

Retrieve an existing video's embedding from OpenSearch Service and search for similar content. No Amazon Bedrock API call is needed:

def search_video_to_video(query_video_id, query_segment_index, opensearch_client, k=10):
    """Find similar videos using a reference video segment."""
    # Get the embedding from the reference video segment
    sample_query = {
        "query": {
            "bool": {
                # Both conditions have to match to identify one segment
                "must": [
                    {"term": {"video_id": query_video_id}},
                    {"term": {"segment_index": query_segment_index}}
                ]
            }
        },
        "_source": ["video_id", "segment_index", "embedding"]
    }

    sample_response = opensearch_client.search(
        index="video-embeddings-knn",
        body=sample_query
    )
    if not sample_response['hits']['hits']:
        return []

    sample_doc = sample_response['hits']['hits'][0]['_source']
    query_embedding = sample_doc.get('embedding')

    # Perform k-NN search with the embedding
    search_body = {
        "query": {
            "knn": {
                "embedding": {
                    "vector": query_embedding,
                    "k": k
                }
            }
        },
        "size": k,
        "_source": ["video_id", "segment_index", "timestamp"]
    }

    response = opensearch_client.search(
        index="video-embeddings-knn",
        body=search_body
    )
    return response['hits']['hits']  # Extract results as needed
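Because the reference segment is itself stored in the index, it will usually come back as its own nearest neighbor, often followed by adjacent segments of the same video. One possible way to handle this, sketched here as an optional post-processing step with a hypothetical helper name, is to request more than `k` results and drop hits from the query video:

```python
def drop_same_video(hits, query_video_id, k=10):
    """Remove hits that belong to the query video and keep the top k of the rest."""
    filtered = [hit for hit in hits if hit["_source"]["video_id"] != query_video_id]
    return filtered[:k]


# Example with hits shaped like the OpenSearch response used above
example_hits = [
    {"_score": 1.00, "_source": {"video_id": "vid-a", "segment_index": 2}},
    {"_score": 0.97, "_source": {"video_id": "vid-a", "segment_index": 3}},
    {"_score": 0.91, "_source": {"video_id": "vid-b", "segment_index": 0}},
    {"_score": 0.88, "_source": {"video_id": "vid-c", "segment_index": 5}},
]
similar = drop_same_video(example_hits, "vid-a", k=2)
```

Whether to exclude the whole video or only the exact query segment depends on the use case; finding related moments inside the same video would call for the narrower filter.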

Hybrid search

Combine semantic k-NN and BM25 keyword matching by retrieving results from both indexes and merging with weighted scoring:

def search_hybrid(query_text, opensearch_client, k=10, vector_weight=0.7, text_weight=0.3):
    """Hybrid search combining k-NN semantic search and BM25 text matching."""
    # Generate query embedding (use same code as search_text_to_video above)
    query_embedding = generate_query_embedding(query_text)  # See text-to-video example

    # Get k-NN results (same query as search_text_to_video)
    knn_response = opensearch_client.search(
        index="video-embeddings-knn",
        body={"query": {"knn": {"embedding": {"vector": query_embedding, "k": 20}}}, "size": 20}
    )

    # Get BM25 text results (same query as search_text_bm25)
    text_response = opensearch_client.search(
        index="video-embeddings-text",
        body={"query": {"match": {"tags": query_text}}, "size": 20}
    )

    # Combine results with weighted scoring, keyed by "<video_id>_<segment_index>"
    knn_hits = knn_response['hits']['hits']
    text_hits = text_response['hits']['hits']
    combined = {}

    for hit in knn_hits:
        vid = hit['_source']['video_id']
        seg = hit['_source']['segment_index']
        key = f"{vid}_{seg}"
        combined[key] = {
            'video_id': vid,
            'segment_index': seg,
            'tags': hit['_source'].get('tags', ''),
            'vector_score': hit['_score'],
            'text_score': 0,
            'combined_score': hit['_score'] * vector_weight
        }

    for hit in text_hits:
        vid = hit['_source']['video_id']
        seg = hit['_source']['segment_index']
        key = f"{vid}_{seg}"
        if key in combined:
            # Segment found by both searches: add the weighted text score
            combined[key]['text_score'] = hit['_score']
            combined[key]['combined_score'] += hit['_score'] * text_weight
            # Tags live only in the text index, so fill them in here
            combined[key]['tags'] = hit['_source'].get('tags', '')
        else:
            combined[key] = {
                'video_id': vid,
                'segment_index': seg,
                'tags': hit['_source'].get('tags', ''),
                'vector_score': 0,
                'text_score': hit['_score'],
                'combined_score': hit['_score'] * text_weight
            }

    # Sort by combined score and return top k
    sorted_results = sorted(combined.values(), key=lambda x: x['combined_score'], reverse=True)[:k]
    return sorted_results

# Usage example - search with natural language query
query = "person walking on beach at sunset"
hybrid_results = search_hybrid(query, opensearch_client, k=10)

for r in hybrid_results:
    print(f"Combined: {r['combined_score']:.4f} (Vector: {r['vector_score']:.4f}, Text: {r['text_score']:.4f})")
    print(f" Video: {r['video_id']}, Segment: {r['segment_index']}")
    print(f" Tags: {r['tags']}\n")
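The merge above weights raw scores. BM25 scores are unbounded, while k-NN similarity scores are typically confined to a narrow range, so a 0.7/0.3 split on raw values may not give the intended balance. One common option, shown here as a sketch and not as something the pipeline in this post is stated to do, is to min-max normalize each result list before applying the weights. The function names are illustrative.

```python
def min_max_normalize(scores):
    """Scale scores to [0, 1]; a constant list maps to all 1.0."""
    if not scores:
        return []
    lowest, highest = min(scores), max(scores)
    if highest == lowest:
        return [1.0] * len(scores)
    return [(score - lowest) / (highest - lowest) for score in scores]


def weighted_score(vector_score, text_score, vector_weight=0.7, text_weight=0.3):
    """Combine two already-normalized scores with the hybrid weights."""
    return vector_score * vector_weight + text_score * text_weight


# Example: normalize each list separately, then combine per document
knn_scores = min_max_normalize([0.82, 0.79, 0.75])
bm25_scores = min_max_normalize([6.4, 3.1, 2.2])
top_combined = weighted_score(knn_scores[0], bm25_scores[0])
```

Normalization makes the weights comparable across queries, at the cost of discarding absolute score magnitudes, so it is worth checking against your own relevance judgments before adopting it.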

Search performance at scale

After indexing all 792,218 videos, we measured search performance across all three methods.

The measured query latencies at 792,218 videos are as follows:

- Semantic k-NN search: ~76ms (utilizing HNSW logarithmic scaling)

- BM25 textual content search: ~30ms

- Hybrid search: ~106ms

After indexing and storing all 792,218 videos and generating embeddings, the storage requirements are as follows:

- k-NN index: 28.8 GB for 792K videos

- Text index: 1.0 GB for 792K videos

- Total: 29.8 GB (manageable on modern OpenSearch clusters)

The Hierarchical Navigable Small World (HNSW) algorithm used for k-NN search provides logarithmic time complexity, which means search times grow slowly as the dataset increases. All three search methods maintain sub-200 ms response times even at 792K video scale, meeting production requirements for interactive search applications.

Things to know

Performance and cost considerations

Video processing time depends on video length. In our testing, a 45-second video took approximately 70 seconds to process using the async API. The processing includes automatic segmentation, embedding generation for each segment, and output to Amazon S3. Search operations scale efficiently: our testing shows that even at 792K videos, semantic search completes in under 80 ms, text search in around 30 ms, and hybrid search in under 110 ms.

Use 1024-dimensional embeddings instead of 3072 to reduce storage costs while maintaining accuracy. Nova Multimodal Embeddings charges per second of video input ($0.00056/second batch), so video duration, not embedding dimension or segmentation, determines processing cost. The async API is cheaper than processing frames individually. For OpenSearch Service, r6g instances provide better price-performance than earlier instance types, and you can implement tiering to move cold data to Amazon S3 for additional savings.

Scaling to production

For production deployments with large video libraries, consider using AWS Batch to process videos in parallel across multiple compute instances. You can partition your video dataset and assign subsets to different workers. Monitor OpenSearch Service cluster health and scale data nodes as your index grows. The two-index architecture scales well because k-NN and text searches can be optimized independently.
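Partitioning can be as simple as dealing video URIs out to workers round-robin, so that each worker gets a near-equal share regardless of how the list is ordered. The sketch below is one such scheme under that assumption. The function name and example URIs are illustrative, and it does not balance by video duration.

```python
def partition(items, num_workers):
    """Split items into num_workers round-robin shards of near-equal size."""
    if num_workers < 1:
        raise ValueError("num_workers must be at least 1")
    return [items[i::num_workers] for i in range(num_workers)]


# Example: 7 videos across 3 workers
video_uris = [f"s3://amzn-s3-demo-video-bucket/video-{n}.mp4" for n in range(7)]
shards = partition(video_uris, 3)
shard_sizes = [len(shard) for shard in shards]
```

Because the 30-job concurrency limit applies per account, sharding the dataset across workers spreads the orchestration work but does not by itself raise the number of async jobs that can run at once.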

Search accuracy tuning

Tune hybrid search weights based on your use case. The default 0.7/0.3 split (vector/text) favors semantic similarity for most scenarios. If you have high-quality metadata tags, increasing the text weight to 0.5 can improve results. We recommend that you test different configurations with your specific content to find a balance.

Cleanup

To avoid ongoing charges, delete the resources that you created:

- Delete the OpenSearch Service domain from the Amazon OpenSearch Service console

- Empty and delete the S3 buckets used for videos and embeddings

- Delete any IAM roles created specifically for this solution

Note that Amazon Bedrock charges are based on usage, so no cleanup is required for the Amazon Bedrock models themselves.

Conclusion

This walkthrough covered building a multimodal video search system for natural language queries across video content. The solution uses Amazon Bedrock Nova models to generate embeddings that capture both audio and visual information, stores them efficiently in OpenSearch Service using a two-index architecture, and provides three search modes for different use cases. The async processing approach scales to handle large video libraries, and the hybrid search capability combines semantic and keyword-based matching for optimal accuracy. You can extend this foundation by adding features like video-to-video similarity search, implementing caching for frequently searched queries, or integrating with AWS Batch for parallel processing of large datasets.

To learn more about the technologies used in this solution, see Amazon Nova Multimodal Embeddings and Hybrid Search with Amazon OpenSearch Service.

About the authors