Topic modeling uncovers hidden themes in large document collections. Traditional methods like Latent Dirichlet Allocation rely on word frequency and treat text as bags of words, often missing deeper context and meaning.

BERTopic takes a different route, combining transformer embeddings, clustering, and c-TF-IDF to capture semantic relationships between documents. It produces more meaningful, context-aware topics suited to real-world data. In this article, we break down how BERTopic works and how you can apply it step by step.

What is BERTopic?

BERTopic is a modular topic modeling framework that treats topic discovery as a pipeline of independent but connected steps. It integrates deep learning and classical natural language processing techniques to produce coherent and interpretable topics.

The core idea is to transform documents into semantic embeddings, cluster them based on similarity, and then extract representative words for each cluster. This approach allows BERTopic to capture both meaning and structure within text data.

At a high level, BERTopic follows this process: documents are embedded, the embeddings are reduced in dimensionality, the reduced embeddings are clustered, and each cluster is described with c-TF-IDF.

Each component of this pipeline can be modified or replaced, making BERTopic highly flexible for different applications.
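To make the idea of swappable stages concrete, here is a minimal sketch in plain Python. It is not BERTopic's API: `TopicPipeline`, `toy_embed`, `keep_first_dim`, and `threshold_cluster` are hypothetical names, and the toy stages only stand in for a transformer model, UMAP, and HDBSCAN.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

Vector = List[float]


@dataclass
class TopicPipeline:
    """Toy pipeline whose stages are plain callables, so any one can be swapped."""

    embed: Callable[[Sequence[str]], List[Vector]]
    reduce: Callable[[List[Vector]], List[Vector]]
    cluster: Callable[[List[Vector]], List[int]]

    def run(self, docs: Sequence[str]) -> List[int]:
        vectors = self.embed(docs)      # documents -> vectors
        reduced = self.reduce(vectors)  # vectors -> fewer dimensions
        return self.cluster(reduced)    # reduced vectors -> topic labels


# Hypothetical stand-ins; real stages would be a transformer, UMAP, and HDBSCAN.
def toy_embed(docs: Sequence[str]) -> List[Vector]:
    # Two crude features per document: character count and number of spaces.
    return [[float(len(d)), float(d.count(" "))] for d in docs]


def keep_first_dim(vectors: List[Vector]) -> List[Vector]:
    # Keep only the first feature of every vector.
    return [[v[0]] for v in vectors]


def threshold_cluster(vectors: List[Vector]) -> List[int]:
    # Label 0 for short documents, 1 for long ones.
    return [0 if v[0] < 20 else 1 for v in vectors]


pipeline = TopicPipeline(toy_embed, keep_first_dim, threshold_cluster)
labels = pipeline.run(["short text", "a considerably longer piece of text"])
print(labels)
```

Because each stage is just a callable with a fixed input and output shape, replacing one of them does not require touching the others, which is the property the real library relies on.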

Key Components of the BERTopic Pipeline

1. Preprocessing

The first step involves preparing raw text data. Unlike traditional NLP pipelines, BERTopic does not require heavy preprocessing. Minimal cleaning, such as lowercasing, removing extra spaces, and filtering very short documents, is usually sufficient.
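As a rough illustration of this kind of light cleaning, the sketch below lowercases text, collapses whitespace, and drops very short documents. The function name `light_clean` and the `min_words` threshold are assumptions made for the example, not part of BERTopic.

```python
import re
from typing import List


def light_clean(docs: List[str], min_words: int = 3) -> List[str]:
    """Lowercase, collapse whitespace, and drop very short documents."""
    cleaned = []
    for doc in docs:
        # Collapse any run of whitespace (spaces, tabs, newlines) to one space.
        text = re.sub(r"\s+", " ", doc.lower()).strip()
        # Keep only documents with at least `min_words` words.
        if len(text.split()) >= min_words:
            cleaned.append(text)
    return cleaned


sample = ["  NASA   launched a\tsatellite ", "ok", "Space exploration is growing"]
cleaned = light_clean(sample)
print(cleaned)
```

The right threshold depends on the data; for tweets or short reviews a lower value may be more sensible.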

2. Document Embeddings

Each document is converted into a dense vector using transformer-based models such as SentenceTransformers. This allows the model to capture semantic relationships between documents.

Mathematically:

$$v_i = \mathrm{Embed}(d_i)$$

where $d_i$ is a document and $v_i$ is its vector representation.
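To see why vectors are useful here, the sketch below compares documents by cosine similarity. The three-dimensional vectors are made-up values chosen for illustration; real sentence embeddings have hundreds of dimensions and come from a trained model.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up vectors standing in for real embeddings (illustrative values only).
v_satellite = [0.9, 0.1, 0.0]
v_space = [0.8, 0.2, 0.1]
v_philosophy = [0.0, 0.2, 0.9]

sim_related = cosine_similarity(v_satellite, v_space)
sim_unrelated = cosine_similarity(v_satellite, v_philosophy)
print(sim_related > sim_unrelated)
```

Documents about similar themes end up with vectors pointing in similar directions, which is what the later clustering step exploits.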

3. Dimensionality Reduction

High-dimensional embeddings are difficult to cluster effectively. BERTopic uses UMAP to reduce the dimensionality while preserving the structure of the data.

This step improves clustering performance and computational efficiency.

4. Clustering

After dimensionality reduction, clustering is performed using HDBSCAN. This algorithm groups similar documents into clusters and identifies outliers.

Each document $d_i$ receives a topic label $z_i$. Documents labeled $-1$ are considered outliers.
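The labeling convention can be illustrated with a small helper that separates clustered documents from outliers. The labels below are hypothetical values written by hand, not real HDBSCAN output, and `split_by_label` is a name introduced for this sketch.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def split_by_label(
    docs: List[str], labels: List[int]
) -> Tuple[Dict[int, List[str]], List[str]]:
    """Group documents by topic label; label -1 marks an outlier."""
    clusters = defaultdict(list)
    outliers = []
    for doc, label in zip(docs, labels):
        if label == -1:
            outliers.append(doc)
        else:
            clusters[label].append(doc)
    return dict(clusters), outliers


example_docs = [
    "nasa launched a satellite",
    "philosophy and religion are related",
    "space exploration is growing",
]
example_labels = [0, -1, 0]  # hypothetical labels for illustration

clusters, outliers = split_by_label(example_docs, example_labels)
print(clusters)
print(outliers)
```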

5. c-TF-IDF Topic Representation

Once clusters are formed, BERTopic generates topic representations using c-TF-IDF.

Term Frequency:

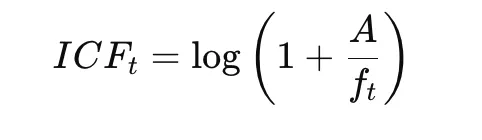

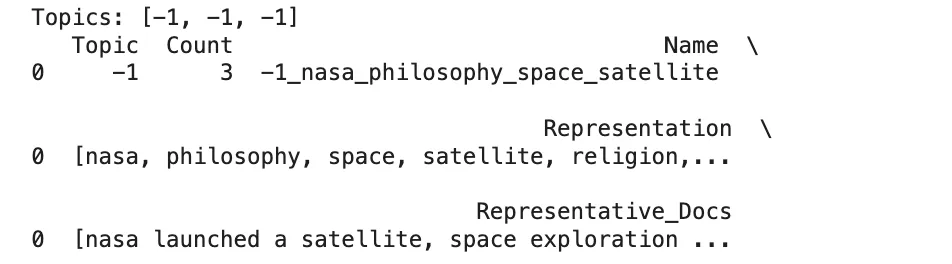

Inverse Class Frequency:

Final c-TF-IDF:

This method highlights words that are distinctive within a cluster while reducing the importance of words that are common across clusters.
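For readers who want to see the weighting in action, here is a small plain-Python sketch. It assumes the form given in BERTopic's documentation, where the weight of word x in class c is tf(x, c) · log(1 + A / f(x)), with tf(x, c) the frequency of x in class c, f(x) the frequency of x across all classes, and A the average number of words per class. Whitespace tokenization and the length normalization of tf are simplifications made for this example, and `c_tf_idf` is a name chosen here rather than a library function.

```python
import math
from collections import Counter
from typing import Dict, List


def c_tf_idf(class_docs: Dict[int, List[str]]) -> Dict[int, Dict[str, float]]:
    """Score each word per class with tf(x, c) * log(1 + A / f(x))."""
    # Treat each cluster as one long document by pooling its tokens.
    counts = {c: Counter(" ".join(docs).split()) for c, docs in class_docs.items()}

    # f(x): frequency of each word across all classes.
    total = Counter()
    for counter in counts.values():
        total.update(counter)

    # A: average number of words per class.
    avg_words = sum(total.values()) / len(counts)

    weights = {}
    for c, counter in counts.items():
        n_words = sum(counter.values())
        weights[c] = {
            word: (freq / n_words) * math.log(1 + avg_words / total[word])
            for word, freq in counter.items()
        }
    return weights


# Hand-written toy clusters, not the output of a real model.
toy_clusters = {
    0: ["nasa launched a satellite", "a satellite orbits earth"],
    1: ["philosophy and religion", "a philosophy lecture"],
}
weights = c_tf_idf(toy_clusters)
top_word_0 = max(weights[0], key=weights[0].get)
top_word_1 = max(weights[1], key=weights[1].get)
print(top_word_0, top_word_1)
```

In the second toy cluster, the word "a" scores lower than a word such as "lecture" even though both occur once there, because "a" also appears in the other cluster.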

Hands-On Implementation

This section demonstrates a simple implementation of BERTopic using a very small dataset. The goal here is not to build a production-scale topic model, but to understand how BERTopic works step by step. In this example, we preprocess the text, configure UMAP and HDBSCAN, train the BERTopic model, and inspect the generated topics.

Step 1: Import Libraries and Prepare the Dataset

    import re

    import umap
    import hdbscan
    from bertopic import BERTopic

    # Three short documents covering two themes: space and philosophy.
    docs = [
        "NASA launched a satellite",
        "Philosophy and religion are related",
        "Space exploration is growing",
    ]

In this first step, the required libraries are imported. The re module is used for basic text preprocessing, while umap and hdbscan are used for dimensionality reduction and clustering. BERTopic is the main library that combines these components into a topic modeling pipeline.

A small list of sample documents is also created. These documents belong to different themes, such as space and philosophy, which makes them useful for demonstrating how BERTopic attempts to separate text into different topics.

Step 2: Preprocess the Text

    def preprocess(text):
        # Lowercase so that "NASA" and "nasa" are treated as the same token.
        text = text.lower()
        # Collapse runs of whitespace into a single space.
        text = re.sub(r"\s+", " ", text)
        return text.strip()

    docs = [preprocess(doc) for doc in docs]

This step performs basic text cleaning. Each document is converted to lowercase so that words like "NASA" and "nasa" are treated as the same token. Extra spaces are also removed to standardize the formatting.

Preprocessing is important because it reduces noise in the input. Although BERTopic uses transformer embeddings that are less dependent on heavy text cleaning, simple normalization still improves consistency and makes the input cleaner for downstream processing.

Step 3: Configure UMAP

    umap_model = umap.UMAP(
        n_neighbors=2,      # tiny neighborhood to match the tiny dataset
        n_components=2,     # reduce embeddings to two dimensions
        min_dist=0.0,
        metric="cosine",
        random_state=42,    # fixed seed for reproducible results
        init="random",      # avoid spectral initialization on three documents
    )

UMAP is used here to reduce the dimensionality of the document embeddings before clustering. Since embeddings are usually high-dimensional, clustering them directly is often difficult. UMAP helps by projecting them into a lower-dimensional space while preserving their semantic relationships.

The parameter init="random" is especially important in this example because the dataset is extremely small. With only three documents, UMAP's default spectral initialization may fail, so random initialization is used to avoid that error. The settings n_neighbors=2 and n_components=2 are chosen to suit this tiny dataset.

Step 4: Configure HDBSCAN

    hdbscan_model = hdbscan.HDBSCAN(
        min_cluster_size=2,              # at least two documents per cluster
        metric="euclidean",
        cluster_selection_method="eom",
        prediction_data=True,            # keep data needed for later inference
    )

HDBSCAN is the clustering algorithm used by BERTopic. Its role is to group similar documents together after dimensionality reduction. Unlike methods such as K-Means, HDBSCAN does not require the number of clusters to be specified in advance.

Here, min_cluster_size=2 means that at least two documents are needed to form a cluster. This is appropriate for such a small example. The prediction_data=True argument allows the model to retain information useful for later inference and probability estimation.

Step 5: Create the BERTopic Model

    topic_model = BERTopic(
        umap_model=umap_model,          # custom dimensionality reduction
        hdbscan_model=hdbscan_model,    # custom clustering
        calculate_probabilities=True,
        verbose=True,
    )

In this step, the BERTopic model is created by passing the custom UMAP and HDBSCAN configurations. This shows one of BERTopic's strengths: it is modular, so individual components can be customized according to the dataset and use case.

The option calculate_probabilities=True enables the model to estimate topic probabilities for each document. The verbose=True option is useful during experimentation because it displays progress and internal processing steps while the model is running.

Step 6: Fit the BERTopic Model

    topics, probs = topic_model.fit_transform(docs)

This is the main training step. BERTopic now performs the complete pipeline internally:

- It converts documents into embeddings
- It reduces the embedding dimensions using UMAP
- It clusters the reduced embeddings using HDBSCAN
- It extracts topic words using c-TF-IDF

The result is stored in two outputs:

- topics, which contains the assigned topic label for each document
- probs, which contains the probability distribution or confidence values for the assignments

This is the point where the raw documents are transformed into a topic-based structure.
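One way to read a probability output of this kind is to pick the most likely topic per document. The sketch below assumes one row per document and one column per topic, which is our understanding of what calculate_probabilities=True yields; the numbers are hypothetical and `most_likely_topics` is a helper written for this example, not a BERTopic method.

```python
from typing import List, Sequence, Tuple


def most_likely_topics(probs: Sequence[Sequence[float]]) -> List[Tuple[int, float]]:
    """Return (index of most probable topic, its probability) per document."""
    result = []
    for row in probs:
        best = max(range(len(row)), key=lambda i: row[i])
        result.append((best, row[best]))
    return result


# Hypothetical probabilities for three documents and two topics.
example_probs = [
    [0.85, 0.10],
    [0.30, 0.25],
    [0.05, 0.90],
]
best_topics = most_likely_topics(example_probs)
print(best_topics)
```

A low maximum, as in the second row, suggests that the document does not fit any topic well, which is typically the case for documents labeled -1.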

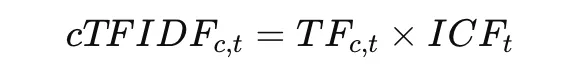

Step 7: View Topic Assignments and Topic Information

    print("Topics:", topics)
    print(topic_model.get_topic_info())

    # Print the top words of every topic except the outlier topic (-1).
    for topic_id in sorted(set(topics)):
        if topic_id != -1:
            print(f"\nTopic {topic_id}:")
            print(topic_model.get_topic(topic_id))

This final step is used to inspect the model's output.

- print("Topics:", topics) shows the topic label assigned to each document.
- get_topic_info() displays a summary table of all topics, including topic IDs and the number of documents in each topic.
- get_topic(topic_id) returns the top representative words for a given topic.

The condition if topic_id != -1 excludes outliers. In BERTopic, a topic label of -1 means that the document was not confidently assigned to any cluster. This is normal behavior in density-based clustering and helps avoid forcing unrelated documents into incorrect topics.

Advantages of BERTopic

Here are the main advantages of using BERTopic:

- Captures semantic meaning using embeddings

  BERTopic uses transformer-based embeddings to understand the context of text rather than just word frequency. This allows it to group documents with similar meanings even if they use different words.

- Automatically determines the number of topics

  Using HDBSCAN, BERTopic does not require a predefined number of topics. It discovers the natural structure of the data, making it suitable for unknown or evolving datasets.

- Handles noise and outliers effectively

  Documents that do not clearly belong to any cluster are labeled as outliers instead of being forced into incorrect topics. This improves the overall quality and clarity of the topics.

- Produces interpretable topic representations

  With c-TF-IDF, BERTopic extracts keywords that clearly characterize each topic. These words are distinctive and easy to understand, making interpretation straightforward.

- Highly modular and customizable

  Each part of the pipeline can be adjusted or replaced, such as embeddings, clustering, or vectorization. This flexibility allows it to adapt to different datasets and use cases.

Conclusion

BERTopic represents a significant advance in topic modeling by combining semantic embeddings, dimensionality reduction, clustering, and class-based TF-IDF. This hybrid approach allows it to produce meaningful and interpretable topics that align more closely with human understanding.

Rather than relying solely on word frequency, BERTopic leverages the structure of semantic space to identify patterns in text data. Its modular design also makes it adaptable to a wide range of applications, from analyzing customer feedback to organizing research documents.

In practice, the effectiveness of BERTopic depends on careful selection of embeddings, tuning of clustering parameters, and thoughtful evaluation of results. When applied correctly, it provides a powerful and practical solution for modern topic modeling tasks.

Frequently Asked Questions

A. BERTopic uses semantic embeddings instead of word frequency alone, allowing it to capture context and meaning more effectively.

A. BERTopic uses HDBSCAN clustering, which automatically discovers the natural number of topics without a predefined input.

A. BERTopic is computationally expensive because of embedding generation, especially for large datasets.
