Image by Editor

# Introduction

Chances are, you already have the feeling that the new, agent-first artificial intelligence era is here, with developers turning to new tools that, instead of just generating code reactively, genuinely understand the unique processes behind code generation.

Google Antigravity has a lot to say on this matter. This tool holds the key to building highly customizable agents. This article unveils part of its potential by demystifying three cornerstone concepts: rules, skills, and workflows.

In this article, you'll learn how to link these key concepts together to build more robust agents and powerful automated pipelines. Specifically, we will go step by step through setting up a code quality assurance (QA) agent workflow, based on specified rules and skills. Off we go!

# Understanding the Three Core Concepts

Before getting our hands dirty, it's helpful to break down the following three elements of the Google Antigravity ecosystem:

- Rule: Rules are the baseline constraints that dictate the agent's behavior and adapt it to our stack and style. They are stored as markdown files.

- Skill: Think of a skill as a reusable package of knowledge that instructs the agent on how to handle a concrete task. Each skill lives in a dedicated folder that contains a file named `SKILL.md`.

- Workflow: Workflows are the orchestrators that put it all together. They are invoked with command-like instructions preceded by a forward slash, e.g. `/deploy`. Simply put, workflows guide the agent through a well-structured, multi-step action plan or trajectory. This is the key to automating repetitive tasks without loss of precision.

# Taking Action

Let's move on to our practical example. We'll see how to configure Antigravity to review Python code, apply correct formatting, and generate tests, all without the need for additional third-party tools.

Before taking these steps, make sure you have downloaded and installed Google Antigravity on your computer.

Once installed, open the desktop application and open your Python project folder. If you are new to the tool, you will be asked to choose a folder on your computer's file system to act as the project folder. Either way, you can add a manually created folder to Antigravity through the “File >> Add Folder to Workspace…” option in the top menu bar.

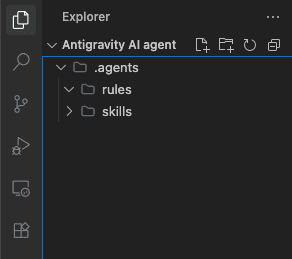

Say you have a new, empty workspace folder. In the root of the project directory (left-hand side), create a new folder and name it `.agents`. Inside this folder, create two subfolders: one called `rules` and one named `skills`. As you may guess, these two are where we will define the two pillars of our agent's behavior: rules and skills.

The project folder hierarchy | Image by Author
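If you would rather create this layout from a script than through the editor, a minimal Python sketch could look like the following. The helper name `scaffold_agent_folders` is made up for illustration; only the folder names come from the steps above.

```python
from pathlib import Path


def scaffold_agent_folders(project_root: str) -> list[Path]:
    """Create .agents/rules and .agents/skills under project_root.

    Hypothetical helper: only the folder names are taken from the article.
    """
    base = Path(project_root) / ".agents"
    created = []
    for name in ("rules", "skills"):
        folder = base / name
        # exist_ok=True makes the call safe to repeat on an existing project
        folder.mkdir(parents=True, exist_ok=True)
        created.append(folder)
    return created
```

Calling `scaffold_agent_folders(".")` from the project root would create both subfolders and return their paths.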

Let's define a rule first, containing the baseline constraints that will ensure the agent's adherence to Python formatting standards. We don't need verbose syntax to do this: in Antigravity, we define it using clear instructions in natural language. Inside the `rules` subfolder, create a file named `python-style.md` and paste the following content:

    # Python Style Rule

    Always use PEP 8 standards. When providing or refactoring code, assume we are using `black` for formatting. Keep dependencies strictly to free, open-source libraries to ensure our project remains free-friendly.

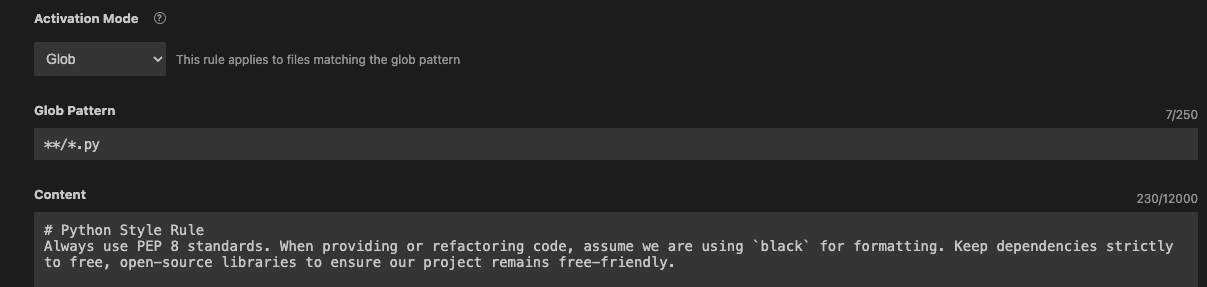

To fine-tune it, open the agent customizations panel on the right-hand side of the editor, then find and select the rule we just defined:

Customizing the activation of agent rules | Image by Author

Customization options will appear above the file we just edited. Set the activation mode to “glob” and specify this glob pattern: `**/*.py`, as shown below:

Setting the glob activation mode | Image by Author

With this, you have ensured that the agent we launch later always applies this rule whenever we are working on Python scripts.
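To get a feel for which files a pattern like `**/*.py` covers, the sketch below previews it with Python's standard `pathlib` on a throwaway directory. This is only an approximation: it assumes Antigravity's glob matching behaves like `pathlib`'s, which the article does not confirm, and the file names and the `files_matching` helper are invented for the example.

```python
import tempfile
from pathlib import Path


def files_matching(root: Path, pattern: str = "**/*.py") -> list[str]:
    """Return files under root (relative, POSIX-style) that match pattern."""
    return sorted(
        p.relative_to(root).as_posix() for p in root.glob(pattern) if p.is_file()
    )


# Build a small throwaway project tree (hypothetical file names)
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "src" / "utils").mkdir(parents=True)
    for name in ("flawed_division.py", "README.md", "src/app.py", "src/utils/helpers.py"):
        (root / name).touch()

    matched = files_matching(root)
    print(matched)
    # ['flawed_division.py', 'src/app.py', 'src/utils/helpers.py']
```

Under that assumption, the rule would apply to Python files at any depth of the workspace, while files such as `README.md` would be left alone.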

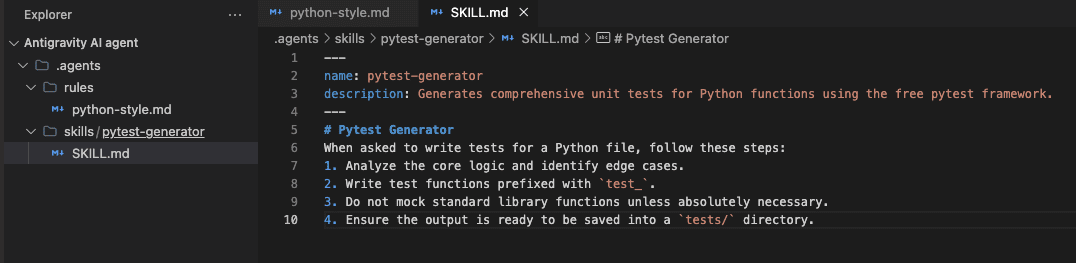

Next, it's time to define (or “teach”) the agent some skills. In this case, it will be the skill of writing robust tests for Python code, something extremely useful in today's demanding software development landscape. Inside the `skills` subfolder, create another folder named `pytest-generator`. Create a `SKILL.md` file inside it, with the following content:

Defining agent skills within the workspace | Image by Author

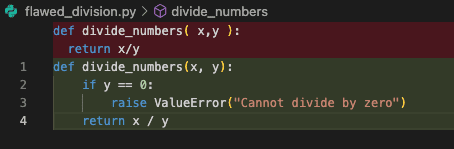

Now it's time to put it all together and launch our agent, but first we need an example Python file containing “poor-quality” code in our project workspace to try it all on. If you don't have one, create a new .py file in the root directory, call it something like `flawed_division.py`, and add this code:

    def divide_numbers( x,y ):
        return x/y

You may have noticed that this Python code is intentionally messy and flawed. Let's see what our agent can do about it. Go to the customization panel on the right-hand side, and this time focus on the “Workflows” navigation pane. Click “+Workspace” to create a new workflow called `qa-check`, with this content:

    # Title: Python QA Check
    # Description: Automates code review and test generation for Python files.

    Step 1: Review the currently open Python file for bugs and style issues, adhering to our Python Style Rule.
    Step 2: Refactor any inefficient code.
    Step 3: Call the `pytest-generator` skill to write comprehensive unit tests for the refactored code.
    Step 4: Output the final test code and suggest running `pytest` in the terminal.

All these pieces, when glued together by the agent, will transform the development loop as a whole. With the messy Python file still open in the workspace, put the agent to work by clicking the agent icon in the right-hand side panel, typing the `/qa-check` command, and hitting enter to run the agent:

Invoking the QA workflow via the agent console | Image by Author

After execution, the agent will have revised the code and automatically suggested a new version in the Python file, as shown below:

The refactored code suggested by the agent | Image by Author
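The agent's actual output will vary from run to run, so treat the following only as a rough illustration of what a refactored `divide_numbers` might look like. It assumes the agent adds type hints, a docstring, and a `ValueError` for a zero denominator; the exact error message is an assumption.

```python
def divide_numbers(x: float, y: float) -> float:
    """Return x divided by y.

    Illustrative sketch only, not the agent's literal output.

    Raises:
        ValueError: if y is zero (the message text is assumed).
    """
    if y == 0:
        raise ValueError("Cannot divide by zero")
    return x / y
```

Raising an explicit `ValueError` instead of letting Python's built-in `ZeroDivisionError` surface is a design choice: it lets callers and tests check for a domain-specific error with a readable message.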

But that's not all: the agent also delivers the comprehensive quality check we were looking for by generating a number of code snippets you can use to run different types of tests with pytest. For the sake of illustration, this is what some of these tests might look like:

    import pytest

    from flawed_division import divide_numbers


    def test_divide_numbers_normal():
        assert divide_numbers(10, 2) == 5.0
        assert divide_numbers(9, 3) == 3.0


    def test_divide_numbers_negative():
        assert divide_numbers(-10, 2) == -5.0
        assert divide_numbers(10, -2) == -5.0
        assert divide_numbers(-10, -2) == 5.0


    def test_divide_numbers_float():
        assert divide_numbers(5.0, 2.0) == 2.5


    def test_divide_numbers_zero_numerator():
        assert divide_numbers(0, 5) == 0.0


    def test_divide_numbers_zero_denominator():
        # The refactored function is expected to raise on a zero denominator
        with pytest.raises(ValueError, match="Cannot divide by zero"):
            divide_numbers(10, 0)

This whole sequential process carried out by the agent consisted of first analyzing the code under the constraints we defined through rules, then autonomously calling the newly defined skill to produce a comprehensive testing strategy tailored to our codebase.

# Wrapping Up

Looking back, in this article we have shown how to combine three key elements of Google Antigravity (rules, skills, and workflows) to turn generic agents into specialized, robust, and efficient workmates. We illustrated how to make an agent specialized in correctly formatting messy code and defining QA tests.

Iván Palomares Carrascosa is a leader, writer, speaker, and adviser in AI, machine learning, deep learning & LLMs. He trains and guides others in harnessing AI in the real world.