Site reliability engineers (SREs) face an increasingly complex challenge in modern distributed systems. During production incidents, they must rapidly correlate data from multiple sources (logs, metrics, Kubernetes events, and operational runbooks) to identify root causes and implement solutions. Traditional monitoring tools provide raw data but lack the intelligence to synthesize information across these diverse systems, often leaving SREs to manually piece together the story behind system failures.

With a generative AI solution, SREs can ask their infrastructure questions in natural language. For example, they can ask “Why are the payment-service pods crash looping?” or “What’s causing the API latency spike?” and receive comprehensive, actionable insights that combine infrastructure status, log analysis, performance metrics, and step-by-step remediation procedures. This capability transforms incident response from a manual, time-intensive process into a time-efficient, collaborative investigation.

In this post, we demonstrate how to build a multi-agent SRE assistant using Amazon Bedrock AgentCore, LangGraph, and the Model Context Protocol (MCP). This system deploys specialized AI agents that collaborate to provide the deep, contextual intelligence that modern SRE teams need for effective incident response and infrastructure management. We walk you through the complete implementation, from setting up the demo environment to deploying on Amazon Bedrock AgentCore Runtime for production use.

Solution overview

This solution uses a multi-agent architecture that addresses the challenges of modern SRE operations through intelligent automation. It consists of four specialized AI agents working together under a supervisor agent to provide comprehensive infrastructure analysis and incident response assistance.

The examples in this post use synthetically generated data from our demo environment. The backend servers simulate realistic Kubernetes clusters, application logs, performance metrics, and operational runbooks. In production deployments, these stub servers would be replaced with connections to your actual infrastructure systems, monitoring services, and documentation repositories.

The architecture demonstrates several key capabilities:

- Natural language infrastructure queries – You can ask complex questions about your infrastructure in plain English and receive detailed analysis combining data from multiple sources

- Multi-agent collaboration – Specialized agents for Kubernetes, logs, metrics, and operational procedures work together to provide comprehensive insights

- Real-time data synthesis – Agents access live infrastructure data through standardized APIs and present correlated findings

- Automated runbook execution – Agents retrieve and display step-by-step operational procedures for common incident scenarios

- Source attribution – Every finding includes explicit source attribution for verification and audit purposes

The following diagram illustrates the solution architecture.

The architecture demonstrates how the SRE support agent integrates with Amazon Bedrock AgentCore components:

- Customer interface – Receives alerts about degraded API response times and returns comprehensive agent responses

- Amazon Bedrock AgentCore Runtime – Manages the execution environment for the multi-agent SRE solution

- SRE support agent – Multi-agent collaboration system that processes incidents and orchestrates responses

- Amazon Bedrock AgentCore Gateway – Routes requests to specialized tools through OpenAPI interfaces:

  - Kubernetes API for getting cluster events

  - Logs API for analyzing log patterns

  - Metrics API for analyzing performance trends

  - Runbooks API for searching operational procedures

- Amazon Bedrock AgentCore Memory – Stores and retrieves session context and previous interactions for continuity

- Amazon Bedrock AgentCore Identity – Handles authentication for tool access using Amazon Cognito integration

- Amazon Bedrock AgentCore Observability – Collects and visualizes agent traces for monitoring and debugging

- Amazon Bedrock LLMs – Power the agent intelligence through Anthropic’s Claude large language models (LLMs)

The multi-agent solution uses a supervisor-agent pattern in which a central orchestrator coordinates four specialized agents, for five agents in total:

- Supervisor agent – Analyzes incoming queries and creates investigation plans, routing work to appropriate specialists and aggregating results into comprehensive reports

- Kubernetes infrastructure agent – Handles container orchestration and cluster operations, investigating pod failures, deployment issues, resource constraints, and cluster events

- Application logs agent – Processes log data to find relevant information, identifies patterns and anomalies, and correlates events across multiple services

- Performance metrics agent – Monitors system metrics and identifies performance issues, providing real-time analysis and historical trending

- Operational runbooks agent – Provides access to documented procedures, troubleshooting guides, and escalation procedures based on the current situation
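The routing step of the supervisor pattern can be illustrated with a small, framework-free sketch. This is not the post's LangGraph implementation: the keyword map, the `InvestigationPlan` class, and `create_plan` are hypothetical names, and the keyword matching stands in for the LLM-driven planning that the actual supervisor performs.

```python
from dataclasses import dataclass, field

# Hypothetical keyword map; the real supervisor lets the LLM pick specialists.
AGENT_KEYWORDS = {
    "kubernetes": ("pod", "deployment", "node", "crash"),
    "logs": ("log", "error", "exception"),
    "metrics": ("latency", "cpu", "memory", "response time"),
    "runbooks": ("runbook", "procedure", "escalat"),
}


@dataclass
class InvestigationPlan:
    """An ordered list of specialist agents to consult for one query."""
    query: str
    steps: list[str] = field(default_factory=list)


def create_plan(query: str) -> InvestigationPlan:
    """Select the specialists whose keywords appear in the query."""
    text = query.lower()
    steps = [
        agent
        for agent, keywords in AGENT_KEYWORDS.items()
        if any(keyword in text for keyword in keywords)
    ]
    # Fall back to every specialist when no keyword matches.
    return InvestigationPlan(query=query, steps=steps or list(AGENT_KEYWORDS))


plan = create_plan("Why are the payment-service pods crash looping?")
print(plan.steps)
```

In a real deployment the plan would be produced by the model from the query and the user's stored preferences; the sketch only shows the shape of the data that flows from the supervisor to the specialists.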

Using Amazon Bedrock AgentCore primitives

The solution showcases the power of Amazon Bedrock AgentCore by using multiple core primitives. The solution supports two providers for Anthropic’s LLMs. Amazon Bedrock supports Anthropic’s Claude 3.7 Sonnet for AWS integrated deployments, and the Anthropic API supports Anthropic’s Claude 4 Sonnet for direct API access.

The Amazon Bedrock AgentCore Gateway component converts the SRE agent’s backend APIs (Kubernetes, application logs, performance metrics, and operational runbooks) into Model Context Protocol (MCP) tools. This enables agents built with an open source framework supporting MCP (such as LangGraph in this post) to access infrastructure APIs.

Security for the entire solution is provided by Amazon Bedrock AgentCore Identity. It supports ingress authentication for secure access control for agents connecting to the gateway, and egress authentication to manage authentication with backend servers, providing secure API access without hardcoding credentials.

The serverless execution environment for deploying the SRE agent in production is provided by Amazon Bedrock AgentCore Runtime. It automatically scales from zero to handle concurrent incident investigations while maintaining complete session isolation. Amazon Bedrock AgentCore Runtime supports both OAuth and AWS Identity and Access Management (IAM) for agent authentication. Applications that invoke agents must have appropriate IAM permissions and trust policies. For more information, see Identity and access management for Amazon Bedrock AgentCore.

Amazon Bedrock AgentCore Memory transforms the SRE agent from a stateless system into an intelligent learning assistant that personalizes investigations based on user preferences and historical context. The memory component provides three distinct strategies:

- User preferences strategy (/sre/customers/{user_id}/preferences) – Stores individual user preferences for investigation style, communication channels, escalation procedures, and report formatting. For example, Alice (a technical SRE) receives detailed systematic analysis with troubleshooting steps, whereas Carol (an executive) receives business-focused summaries with impact analysis.

- Infrastructure knowledge strategy (/sre/infrastructure/{user_id}/{session_id}) – Accumulates domain expertise across investigations, enabling agents to learn from past discoveries. When the Kubernetes agent identifies a memory leak pattern, this knowledge becomes available for future investigations, enabling faster root cause identification.

- Investigation memory strategy (/sre/investigations/{user_id}/{session_id}) – Maintains historical context of past incidents and their resolutions. This enables the solution to suggest proven remediation approaches and avoid anti-patterns that previously failed.
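To make the namespace patterns concrete, the following sketch expands the three templates listed above for one user and one session. It uses plain string formatting only; the dictionary keys and the `resolve_namespaces` helper are illustrative names and are not part of the Amazon Bedrock AgentCore Memory API.

```python
# Namespace templates copied from the three strategies described above.
NAMESPACE_TEMPLATES = {
    "user_preferences": "/sre/customers/{user_id}/preferences",
    "infrastructure_knowledge": "/sre/infrastructure/{user_id}/{session_id}",
    "investigation_memory": "/sre/investigations/{user_id}/{session_id}",
}


def resolve_namespaces(user_id: str, session_id: str) -> dict[str, str]:
    """Fill in each template; str.format ignores placeholders a template lacks."""
    return {
        name: template.format(user_id=user_id, session_id=session_id)
        for name, template in NAMESPACE_TEMPLATES.items()
    }


for name, namespace in resolve_namespaces("alice", "session-1").items():
    print(f"{name}: {namespace}")
```

Note that the preferences namespace depends only on the user, so it is shared across sessions, whereas the other two are scoped to a user and a session.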

The memory component demonstrates its value through personalized investigations. When both Alice and Carol investigate “API response times have degraded 3x in the last hour,” they receive identical technical findings but completely different presentations.

Alice receives a technical analysis:

Carol receives an executive summary:

Adding observability to the SRE agent

Adding observability to an SRE agent deployed on Amazon Bedrock AgentCore Runtime is straightforward using the Amazon Bedrock AgentCore Observability primitive. This enables comprehensive monitoring through Amazon CloudWatch with metrics, traces, and logs. Setting up observability requires three steps:

- Add the OpenTelemetry packages to your pyproject.toml:

- Configure observability for your agents to enable metrics in CloudWatch.

- Start your container using the opentelemetry-instrument utility to automatically instrument your application.

The following command is added to the Dockerfile for the SRE agent:

As shown in the following screenshot, with observability enabled, you gain visibility into the following:

- LLM invocation metrics – Token usage, latency, and model performance across agents

- Tool execution traces – Duration and success rates for each MCP tool call

- Memory operations – Retrieval patterns and storage efficiency

- End-to-end request tracing – Complete request flow from user query to final response

The observability primitive automatically captures these metrics without additional code changes, providing production-grade monitoring capabilities out of the box.

Development to production flow

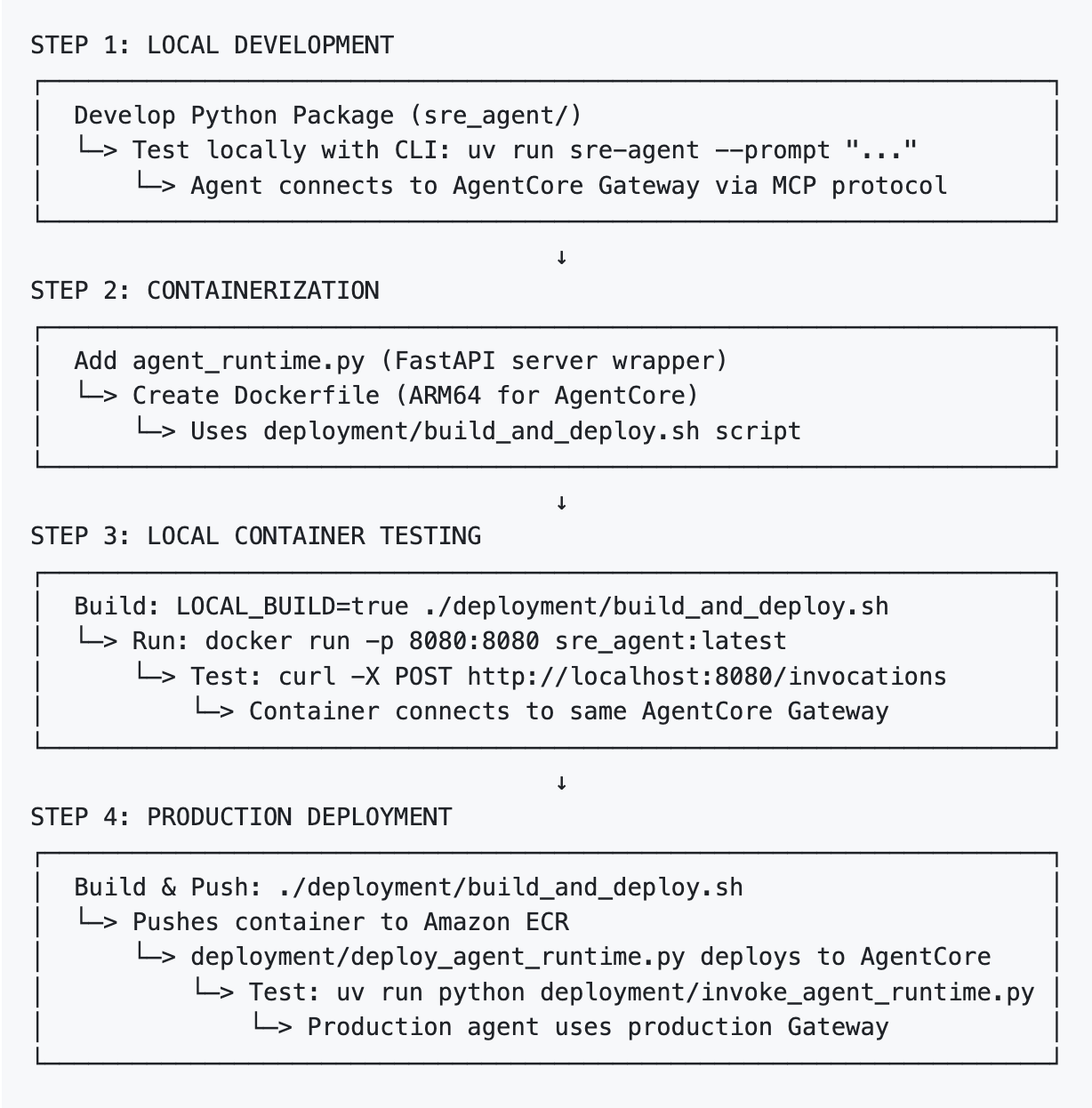

The SRE agent follows a four-step structured deployment process from local development to production, with detailed procedures documented in Development to Production Flow in the accompanying GitHub repo:

The deployment process maintains consistency across environments: the core agent code (sre_agent/) remains unchanged, and the deployment/ folder contains deployment-specific utilities. The same agent works locally and in production through environment configuration, with Amazon Bedrock AgentCore Gateway providing MCP tools access across different stages of development and deployment.

Implementation walkthrough

In the following section, we focus on how Amazon Bedrock AgentCore Gateway, Memory, and Runtime work together to build this multi-agent collaboration solution and deploy it end-to-end with MCP support and persistent intelligence.

We start by setting up the repository and establishing the local runtime environment with API keys, LLM providers, and demo infrastructure. We then bring core AgentCore components online by creating the gateway for standardized API access, configuring authentication, and establishing tool connectivity. We add intelligence through AgentCore Memory, creating strategies for user preferences and investigation history while loading personas for personalized incident response. Finally, we configure individual agents with specialized tools, integrate memory capabilities, orchestrate collaborative workflows, and deploy to AgentCore Runtime with full observability.

Detailed instructions for each step are provided in the repository:

Prerequisites

You can find the port forwarding requirements and other setup instructions in the README file’s Prerequisites section.

Convert APIs to MCP tools with Amazon Bedrock AgentCore Gateway

Amazon Bedrock AgentCore Gateway demonstrates the power of protocol standardization by converting existing backend APIs into MCP tools that agent frameworks can consume. This transformation requires only OpenAPI specifications.

Upload OpenAPI specifications

The gateway process begins by uploading your existing API specifications to Amazon Simple Storage Service (Amazon S3). The create_gateway.sh script automatically handles uploading the four API specifications (Kubernetes, Logs, Metrics, and Runbooks) to your configured S3 bucket with correct metadata and content types. These specifications are then used to create API endpoint targets in the gateway.

Create an identity provider and gateway

Authentication is handled through Amazon Bedrock AgentCore Identity. The important.py script creates both the credential provider and gateway:

Deploy API endpoint targets with credential providers

Each API becomes an MCP target through the gateway. The solution automatically handles credential management:

Validate MCP tools are ready for agent framework

Post-deployment, Amazon Bedrock AgentCore Gateway provides a standardized /mcp endpoint secured with JWT tokens. Testing the deployment with mcp_cmds.sh reveals the power of this transformation:

Universal agent framework compatibility

This MCP-standardized gateway can now be configured as a Streamable-HTTP server for MCP clients, including AWS Strands (Amazon’s agent development framework), LangGraph (the framework used in our SRE agent implementation), and CrewAI (a multi-agent collaboration framework).
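As a rough illustration of what an MCP client sends to such an endpoint, the following sketch builds (but does not send) a JSON-RPC 2.0 `tools/list` request carrying a bearer token. It uses only the Python standard library; the gateway URL and token are placeholders, `build_tools_list_request` is a hypothetical helper, and a real MCP client library would also perform the protocol's initialization handshake before listing tools.

```python
import json
import urllib.request


def build_tools_list_request(gateway_url: str, access_token: str) -> urllib.request.Request:
    """Build (without sending) an MCP `tools/list` call over HTTP POST."""
    body = json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"}).encode("utf-8")
    return urllib.request.Request(
        url=gateway_url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Streamable HTTP clients accept plain JSON or an event stream.
            "Accept": "application/json, text/event-stream",
            # The gateway validates this JWT before serving the request.
            "Authorization": f"Bearer {access_token}",
        },
    )


# Placeholder values; substitute the URL and token produced by your gateway setup.
request = build_tools_list_request("https://gateway.example.com/mcp", "YOUR_JWT")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Passing the request to `urllib.request.urlopen` would send it; in practice an MCP client adapter for your agent framework handles this exchange for you.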

The advantage of this approach is that existing APIs require no modification, only OpenAPI specifications. Amazon Bedrock AgentCore Gateway handles the following:

- Protocol translation – From REST APIs to MCP

- Authentication – JWT token validation and credential injection

- Security – TLS termination and access control

- Standardization – Consistent tool naming and parameter handling

This means you can take existing infrastructure APIs (Kubernetes, monitoring, logging, documentation) and instantly make them accessible to AI agent frameworks that support MCP through a single, secure, standardized interface.
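Conceptually, protocol translation maps each OpenAPI operation to an MCP tool description with a name, a description, and a JSON Schema for its inputs. The sketch below shows one plausible mapping under simplifying assumptions (only `parameters` are considered, not request bodies). It is an illustration, not the gateway's actual implementation, and the sample operation is hypothetical.

```python
def openapi_operation_to_tool(operation: dict) -> dict:
    """Map one OpenAPI operation object to an MCP-style tool descriptor."""
    properties = {}
    required = []
    for param in operation.get("parameters", []):
        # Reuse the parameter's schema and carry its description along.
        properties[param["name"]] = {
            **param.get("schema", {"type": "string"}),
            "description": param.get("description", ""),
        }
        if param.get("required", False):
            required.append(param["name"])
    return {
        "name": operation["operationId"],
        "description": operation.get("summary", ""),
        "inputSchema": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


# Hypothetical operation, loosely modeled on a Kubernetes status endpoint.
sample_operation = {
    "operationId": "get_pod_status",
    "summary": "Return the status of pods in a namespace",
    "parameters": [
        {
            "name": "namespace",
            "in": "query",
            "required": True,
            "description": "Kubernetes namespace to inspect",
            "schema": {"type": "string"},
        }
    ],
}

print(openapi_operation_to_tool(sample_operation))
```

The point of the sketch is that the OpenAPI specification already contains everything a tool description needs, which is why the backend APIs themselves do not have to change.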

Implement persistent intelligence with Amazon Bedrock AgentCore Memory

Whereas Amazon Bedrock AgentCore Gateway provides API access, Amazon Bedrock AgentCore Memory transforms the SRE agent from a stateless system into an intelligent, learning assistant. The memory implementation demonstrates how a few lines of code can enable sophisticated personalization and cross-session knowledge retention.

Initialize memory strategies

The SRE agent memory component is built on Amazon Bedrock AgentCore Memory’s event-based model with automatic namespace routing. During initialization, the solution creates three memory strategies with specific namespace patterns:

The three strategies each serve distinct purposes:

- User preferences (/sre/customers/{user_id}/preferences) – Individual investigation styles and communication preferences

- Infrastructure knowledge (/sre/infrastructure/{user_id}/{session_id}) – Domain expertise accumulated across investigations

- Investigation summaries (/sre/investigations/{user_id}/{session_id}) – Historical incident patterns and resolutions

Load user personas and preferences

The solution comes preconfigured with user personas that demonstrate personalized investigations. The manage_memories.py script loads these personas:

Automatic namespace routing in action

The power of Amazon Bedrock AgentCore Memory lies in its automatic namespace routing. When the SRE agent creates events, it only needs to provide the actor_id; Amazon Bedrock AgentCore Memory automatically determines which namespaces the event belongs to:

Validate the personalized investigation experience

The memory component’s impact becomes clear when both Alice and Carol investigate the same issue. Using identical technical findings, the solution produces completely different presentations of the same underlying content.

Alice’s technical report contains detailed systematic analysis for technical teams:

Carol’s executive summary focuses on business impact for executive stakeholders:

The memory component enables this personalization while continuously learning from each investigation, building organizational knowledge that improves incident response over time.
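The idea of identical findings with different presentations can be sketched in a few lines. The `Preferences` class, the `render_report` function, and the sample finding below are all hypothetical; in the actual solution the stored preferences shape the LLM's output rather than a fixed template.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Preferences:
    """Hypothetical stand-in for the preferences loaded from memory."""
    style: str  # "technical" or "executive"


def render_report(findings: list[dict], prefs: Preferences) -> str:
    """Render the same findings according to the reader's preferred style."""
    if prefs.style == "executive":
        lines = [f"- {finding['impact']}" for finding in findings]
        return "Executive summary\n" + "\n".join(lines)
    lines = [f"- {finding['detail']} (source: {finding['source']})" for finding in findings]
    return "Technical analysis\n" + "\n".join(lines)


# Illustrative finding only; not output from the demo environment.
findings = [
    {
        "detail": "Connection pool exhausted on the database tier",
        "source": "metrics API",
        "impact": "Checkout requests are slower than normal",
    }
]

print(render_report(findings, Preferences(style="technical")))
print(render_report(findings, Preferences(style="executive")))
```

Keeping the findings separate from their presentation is what lets both reports stay consistent with each other while serving different audiences.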

Deploy to production with Amazon Bedrock AgentCore Runtime

Amazon Bedrock AgentCore makes it straightforward to deploy existing agents to production. The process involves three key steps: containerizing your agent, deploying to Amazon Bedrock AgentCore Runtime, and invoking the deployed agent.

Containerize your agent

Amazon Bedrock AgentCore Runtime requires ARM64 containers. The following code shows the complete Dockerfile:

Existing agents just need a FastAPI wrapper (agent_runtime:app) to become compatible with Amazon Bedrock AgentCore, and we add opentelemetry-instrument to enable observability through Amazon Bedrock AgentCore.

Deploy to Amazon Bedrock AgentCore Runtime

Deploying to Amazon Bedrock AgentCore Runtime is straightforward with the deploy_agent_runtime.py script:

Amazon Bedrock AgentCore handles the infrastructure, scaling, and session management automatically.

Invoke your deployed agent

Calling your deployed agent is just as simple with invoke_agent_runtime.py:

Key benefits of Amazon Bedrock AgentCore Runtime

Amazon Bedrock AgentCore Runtime offers the following key benefits:

- Zero infrastructure management – No servers, load balancers, or scaling to configure

- Built-in session isolation – Each conversation is completely isolated

- AWS IAM integration – Secure access control without custom authentication

- Automatic scaling – Scales from zero to thousands of concurrent sessions

The complete deployment process, including building containers and handling AWS permissions, is documented in the Deployment Guide.

Real-world use cases

Let’s explore how the SRE agent handles common incident response scenarios by walking through an investigation.

When facing a production issue, you can query the system in natural language. The solution uses Amazon Bedrock AgentCore Memory to personalize the investigation based on your role and preferences:

The supervisor retrieves Alice’s preferences from memory (detailed systematic analysis style) and creates an investigation plan tailored to her role as a Technical SRE:

The agents investigate sequentially according to the plan, each contributing their specialized analysis. The solution then aggregates these findings into a comprehensive executive summary:

This investigation demonstrates how Amazon Bedrock AgentCore primitives work together:

- Amazon Bedrock AgentCore Gateway – Provides secure access to infrastructure APIs through MCP tools

- Amazon Bedrock AgentCore Identity – Handles ingress and egress authentication

- Amazon Bedrock AgentCore Runtime – Hosts the multi-agent solution with automatic scaling

- Amazon Bedrock AgentCore Memory – Personalizes Alice’s experience and stores investigation knowledge for future incidents

- Amazon Bedrock AgentCore Observability – Captures detailed metrics and traces in CloudWatch for monitoring and debugging

The SRE agent demonstrates intelligent agent orchestration, with the supervisor routing work to specialists based on the investigation plan. The solution’s memory capabilities make sure each investigation builds organizational knowledge and provides personalized experiences based on user roles and preferences.

This investigation showcases several key capabilities:

- Multi-source correlation – It connects database configuration issues to API performance degradation

- Sequential investigation – Agents work systematically through the investigation plan while providing live updates

- Source attribution – Findings include the specific tool and data source

- Actionable insights – It provides a clear timeline of events and prioritized recovery steps

- Cascading failure detection – It can help show how one failure propagates through the system
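Sequential investigation with source attribution can be sketched as a loop over the plan in which every finding carries the agent, tool, and data source that produced it. The stub specialists, tool names, and findings below are hypothetical placeholders for the real agents and their MCP tool calls.

```python
from typing import Callable

# Each specialist returns a finding that names the tool and data source it used.
Finding = dict[str, str]
Specialist = Callable[[str], Finding]


def run_plan(query: str, steps: list[str], specialists: dict[str, Specialist]) -> list[Finding]:
    """Call each specialist in plan order and tag its finding with the agent name."""
    findings = []
    for name in steps:
        finding = specialists[name](query)
        findings.append({"agent": name, **finding})
    return findings


def summarize(findings: list[Finding]) -> str:
    """Aggregate findings into a report that keeps the source of every line."""
    return "\n".join(
        f"[{f['agent']} | {f['tool']} | {f['source']}] {f['summary']}" for f in findings
    )


# Stub specialists with hypothetical tool names and illustrative findings.
def metrics_agent(query: str) -> Finding:
    return {"tool": "get_performance_metrics", "source": "metrics API",
            "summary": "p95 latency is elevated"}


def logs_agent(query: str) -> Finding:
    return {"tool": "search_logs", "source": "logs API",
            "summary": "database timeout errors found"}


SPECIALISTS = {"metrics": metrics_agent, "logs": logs_agent}
report = summarize(run_plan("API latency spike", ["metrics", "logs"], SPECIALISTS))
print(report)
```

Because attribution travels with each finding rather than being added at the end, the aggregated report can always be traced back to the call that produced each statement.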

Business impact

Organizations implementing AI-powered SRE assistance report significant improvements in key operational metrics. Initial investigations that previously took 30–45 minutes can now be completed in 5–10 minutes, providing SREs with comprehensive context before diving into detailed analysis. This dramatic reduction in investigation time translates directly to faster incident resolution and reduced downtime.

The solution improves how SREs interact with their infrastructure. Instead of navigating multiple dashboards and tools, engineers can ask questions in natural language and receive aggregated insights from relevant data sources. This reduction in context switching enables teams to maintain focus during critical incidents and reduces cognitive load during investigations.

Perhaps most importantly, the solution democratizes knowledge across the team. All team members can access the same comprehensive investigation techniques, reducing dependency on tribal knowledge and on-call burden. The consistent methodology provided by the solution makes sure investigation approaches remain uniform across team members and incident types, improving overall reliability and reducing the chance of missed evidence.

The automatically generated investigation reports provide valuable documentation for post-incident reviews and help teams learn from each incident, building organizational knowledge over time. Additionally, the solution extends existing AWS infrastructure investments, working alongside services like Amazon CloudWatch, AWS Systems Manager, and other AWS operational tools to provide a unified operational intelligence system.

Extending the solution

The modular architecture makes it straightforward to extend the solution for your specific needs.

For example, you can add specialized agents for your domain:

- Security agent – For compliance checks and security incident response

- Database agent – For database-specific troubleshooting and optimization

- Network agent – For connectivity and infrastructure debugging

You can also replace the demo APIs with connections to your actual systems:

- Kubernetes integration – Connect to your cluster APIs for pod status, deployments, and events

- Log aggregation – Integrate with your log management service (Elasticsearch, Splunk, CloudWatch Logs)

- Metrics platform – Connect to your monitoring service (Prometheus, Datadog, CloudWatch Metrics)

- Runbook repository – Link to your operational documentation and playbooks stored in wikis, Git repositories, or knowledge bases

Clean up

To avoid incurring future charges, use the cleanup script to remove the billable AWS resources created during the demo:

This script automatically performs the following actions:

- Stops backend servers

- Deletes the gateway and its targets

- Deletes Amazon Bedrock AgentCore Memory resources

- Deletes the Amazon Bedrock AgentCore Runtime

- Removes generated files (gateway URIs, tokens, agent ARNs, memory IDs)

For detailed cleanup instructions, refer to Cleanup Instructions.

Conclusion

The SRE agent demonstrates how multi-agent systems can transform incident response from a manual, time-intensive process into a time-efficient, collaborative investigation that provides SREs with the insights they need to resolve issues quickly and confidently.

By combining the enterprise-grade infrastructure of Amazon Bedrock AgentCore with standardized tool access in MCP, we’ve created a foundation that can adapt as your infrastructure evolves and new capabilities emerge.

The complete implementation is available in our GitHub repository, including demo environments, configuration guides, and extension examples. We encourage you to explore the solution, customize it for your infrastructure, and share your experiences with the community.

To get started building your own SRE assistant, refer to the following resources:

About the authors

Amit Arora is an AI and ML Specialist Architect at Amazon Web Services, helping enterprise customers use cloud-based machine learning services to rapidly scale their innovations. He is also an adjunct lecturer in the MS data science and analytics program at Georgetown University in Washington, D.C.

Dheeraj Oruganty is a Delivery Consultant at Amazon Web Services. He is passionate about building innovative Generative AI and Machine Learning solutions that drive real business impact. His expertise spans Agentic AI Evaluations, Benchmarking and Agent Orchestration, where he actively contributes to research advancing the field. He holds a master’s degree in Data Science from Georgetown University. Outside of work, he enjoys geeking out on cars, bikes, and exploring nature.
