How many times have you spent months evaluating automation projects, sitting through multiple vendor assessments, navigating lengthy RFPs, and managing complex procurement cycles, only to face underwhelming results or outright failure? You’re not alone.

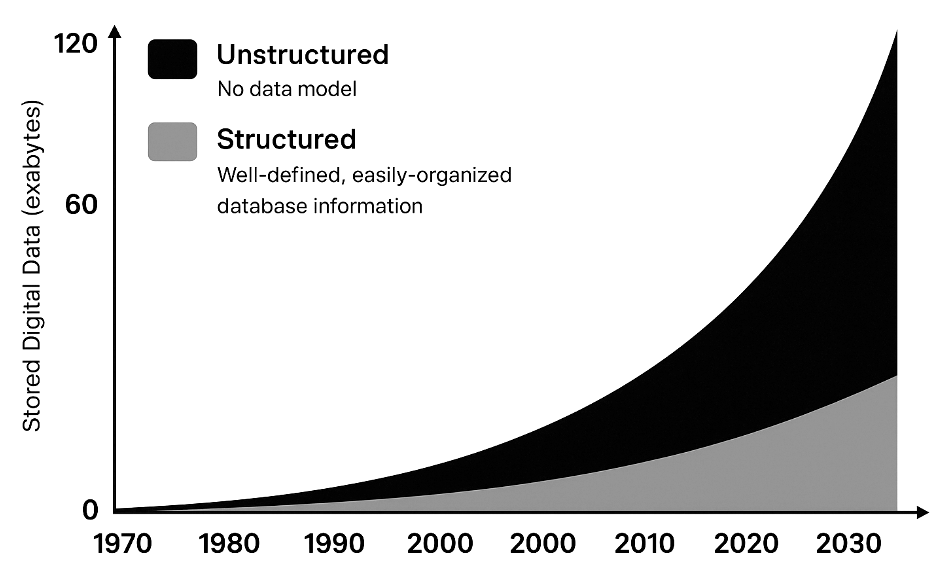

Many enterprises struggle to scale automation, not because of a lack of tools, but because their data isn’t ready. In theory, AI agents and RPA bots could handle countless tasks; in practice, they fail when fed messy or unstructured inputs. Studies show that 80–90% of all enterprise data is unstructured: think of emails, PDFs, invoices, images, audio, and so on. This pervasive unstructured data is the real bottleneck. No matter how advanced your automation platform is, it can’t reliably process what it cannot properly read or understand. In short, low automation levels are usually a data problem, not a tool problem.

Why Agents and RPA Require Structured Data

Automation tools like Robotic Process Automation (RPA) excel with structured, predictable data that is neatly organized in databases, spreadsheets, or standardized forms. They falter with unstructured inputs. A typical RPA bot is essentially a rules-based engine (a “digital worker”) that follows explicit instructions. If the input is a scanned document or a free-form text field, the bot doesn’t inherently know how to interpret it. RPA cannot directly handle unstructured datasets; the data must first be converted into structured form using additional techniques. In other words, an RPA bot needs a clean table of data, not a pile of documents.

“RPA is most effective when processes involve structured, predictable data. In practice, many business documents such as invoices are unstructured or semi-structured, making automated processing difficult”. Unstructured data now accounts for ~80% of enterprise data, underscoring why many RPA initiatives stall.

The same holds true for AI agents and workflow automation: they only perform as well as the data they receive. If an AI customer service agent is drawing answers from disorganized logs and unlabeled data, it will likely give wrong answers. The foundation of any successful automation or AI agent is “AI-ready” data that is clean, well-organized, and ideally structured. This is why organizations that invest heavily in tools but neglect data preparation often see disappointing automation ROI.

Challenges with Traditional Data Structuring Methods

If unstructured data is the issue, why not just convert it to structured form? That is easier said than done. Traditional methods for structuring data, such as OCR, ICR, and ETL, have significant challenges:

- OCR and ICR: OCR and ICR have long been used to digitize documents, but they crumble in real-world scenarios. Classic OCR is just pattern matching; it struggles with varied fonts, layouts, tables, images, or signatures. Even top engines hit only 80–90% accuracy on semi-structured docs, creating 1,000–2,000 errors per 10,000 documents and forcing manual review on 60%+ of files. Handwriting makes it worse: ICR barely manages 65–75% accuracy on cursive. Most systems are also template-based, demanding endless rule updates for every new invoice or form format. OCR and ICR can pull text, but they cannot understand context or structure at scale, making them unreliable for enterprise automation.

- Conventional ETL Pipelines: ETL works great for structured databases but falls apart with unstructured data. No fixed schema, high variability, and messy inputs mean traditional ETL tools need heavy custom scripting to parse natural language or images. The result? Errors, duplicates, and inconsistencies pile up, forcing data engineers to spend 80% of their time cleaning and prepping data, leaving only 20% for actual analysis or AI modeling. ETL was built for rows and columns, not for today’s messy, unstructured data lakes, and that mismatch slows automation and AI adoption significantly.

- Rule-Based Approaches: Older automation solutions often tried to handle unstructured data with brute-force rules, e.g. using regex patterns to find keywords in text, or setting up decision rules for certain document layouts. These approaches are extremely brittle. The moment the input varies from what was expected, the rules fail. As a result, companies end up with fragile pipelines that break whenever a vendor changes an invoice format or a new text pattern appears. Maintenance of these rule systems becomes a heavy burden.
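
The brittleness of rule-based extraction is easy to reproduce. The following is a minimal sketch, not taken from any real system: the pattern `TOTAL_RULE`, the helper `extract_total`, and both sample invoices are hypothetical, chosen only to show a rule that works for one layout and silently fails on another.

```python
import re

# Hypothetical rule written against one vendor's invoice layout.
TOTAL_RULE = re.compile(r"Total:\s*\$(\d+\.\d{2})")


def extract_total(text):
    """Return the invoice total as a float, or None if the rule does not match."""
    match = TOTAL_RULE.search(text)
    return float(match.group(1)) if match else None


# Layout the rule was written for.
vendor_a = "Invoice 1001\nTotal: $250.00"
# Same amount, different wording and no dollar sign.
vendor_b = "Invoice 77\nAmount Due (USD) 250.00"

print(extract_total(vendor_a))  # 250.0
print(extract_total(vendor_b))  # None
```

Both documents carry the same amount, but only the first matches. Each new layout needs another pattern, which is where the maintenance burden comes from.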

All these factors contribute to why so many organizations still rely on armies of data entry staff or manual review. McKinsey observes that current document extraction tools are often “cumbersome to set up” and fail to yield high accuracy over time, forcing companies to invest heavily in manual exception handling. In other words, despite using OCR or ETL, you end up with people in the loop to fix everything the automation couldn’t figure out. This not only cuts into the efficiency gains but also dampens employee enthusiasm (since staff are stuck correcting machine errors or doing low-value data clean-up). It’s a frustrating status quo: automation tech exists, but without clean, structured data, its potential is never realized.
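
To make the OCR accuracy figures quoted earlier concrete, the arithmetic can be written out. This is a small sketch that assumes accuracy is measured per document; the function name `expected_errors` is illustrative.

```python
def expected_errors(documents, accuracy):
    """Expected number of documents containing an error at a given per-document accuracy."""
    return round(documents * (1 - accuracy))


# The 80-90% accuracy range cited for semi-structured documents.
for accuracy in (0.80, 0.90):
    print(f"{accuracy:.0%} accuracy -> {expected_errors(10_000, accuracy)} errors per 10,000 documents")
```

At 80% accuracy this gives 2,000 errors per 10,000 documents, and at 90% it gives 1,000, matching the range stated above.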

Foundational LLMs Are Not a Silver Bullet for Unstructured Data

With the rise of large language models, one might hope that they could simply “read” all the unstructured data and magically output structured information. Indeed, modern foundation models (like GPT-4) are very good at understanding language and even interpreting images. However, general-purpose LLMs are not purpose-built to solve the enterprise unstructured data problem of scale, accuracy, and integration. There are several reasons for this:

- Scale Limitations: Out-of-the-box LLMs cannot ingest millions of documents or entire data lakes in one go. Enterprise data often spans terabytes, far beyond an LLM’s capacity at any given time. Chunking the data into smaller pieces helps, but then the model loses the “big picture” and can easily mix up or miss details. LLMs are also relatively slow and computationally expensive for processing very large volumes of text. Using them naively to parse every document can become cost-prohibitive and latency-prone.

- Lack of Reliability and Structure: LLMs generate outputs probabilistically, which means they may “hallucinate” information or fill in gaps with plausible-sounding but incorrect data. For critical fields (like an invoice total or a date), you need 100% precision; a made-up value is unacceptable. Foundational LLMs don’t guarantee consistent, structured output unless heavily constrained. They don’t inherently know which parts of a document are important or correspond to which field labels (unless trained or prompted in a very specific way). As one research study noted, “sole reliance on LLMs is not viable for many RPA use cases” because they are expensive to train, require a lot of data, and are prone to errors and hallucinations without human oversight. In essence, a chatty general AI might summarize an email for you, but trusting it to extract every invoice line item with perfect accuracy, every time, is risky.

- Not Trained on Your Data: By default, foundation models learn from internet-scale text (books, web pages, etc.), not from your company’s proprietary forms and vocabulary. They may not understand specific jargon on a form, or the layout conventions of your industry’s documents. Fine-tuning them on your data is possible but costly and complex, and even then, they remain generalists, not specialists in document processing. As a Forbes Tech Council insight put it, an LLM on its own “doesn’t know your company’s data” and lacks the context of internal records. You often need additional systems (like retrieval-augmented generation, knowledge graphs, etc.) to ground the LLM in your actual data, effectively adding back a structured layer.
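
The chunking trade-off mentioned under Scale Limitations can be seen in a few lines. This is a minimal sketch under stated assumptions: it splits on whitespace rather than on model tokens, and the function `chunk_words` and the sample text are hypothetical.

```python
def chunk_words(text, size, overlap):
    """Split text into windows of `size` words, each sharing `overlap` words with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


doc = "Invoice 1001 from Acme Corp lists three items and the total due is 250.00 USD"
for chunk in chunk_words(doc, size=6, overlap=2):
    print(chunk)
```

Here the invoice number lands in the first chunk and the total in the later ones, so a model that sees one chunk at a time never sees both together. Overlap softens this at the cost of processing some words twice.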

In summary, foundation models are powerful, but they are not a plug-and-play solution for parsing all enterprise unstructured data into neat rows and columns. They augment but don’t replace the need for intelligent data pipelines. Gartner analysts have also cautioned that many organizations aren’t even ready to leverage GenAI on their unstructured data because of governance and quality issues; using LLMs without fixing the underlying data is putting the cart before the horse.
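
One way a pipeline can guard against made-up values is to check extracted fields with deterministic rules before they reach a bot. The sketch below is illustrative only: the field names, the `validate_invoice` helper, and the sample records are assumptions, not any specific product’s schema.

```python
from datetime import date
from decimal import Decimal, InvalidOperation

# Hypothetical schema for an extracted invoice.
REQUIRED_FIELDS = ("invoice_number", "invoice_date", "total", "line_items")


def validate_invoice(fields):
    """Return a list of problems found in a dict of extracted invoice fields."""
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS if name not in fields]
    if problems:
        return problems
    try:
        date.fromisoformat(fields["invoice_date"])
    except (TypeError, ValueError):
        problems.append("invoice_date is not an ISO date")
    try:
        total = Decimal(fields["total"])
        items_sum = sum(
            (Decimal(item["amount"]) for item in fields["line_items"]), Decimal("0")
        )
        # Cross-check: line items must add up to the stated total.
        if items_sum != total:
            problems.append(f"line items sum to {items_sum}, but total is {total}")
    except (InvalidOperation, KeyError, TypeError, ValueError):
        problems.append("amounts are not valid decimal numbers")
    return problems


good = {
    "invoice_number": "1001",
    "invoice_date": "2024-03-15",
    "total": "250.00",
    "line_items": [{"amount": "100.00"}, {"amount": "150.00"}],
}
suspect = dict(good, total="275.00")  # a total that disagrees with the line items

print(validate_invoice(good))     # []
print(validate_invoice(suspect))  # ['line items sum to 250.00, but total is 275.00']
```

Checks like these do not make the model more accurate, but they turn silent errors into flagged exceptions that can be routed to review.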

Structuring Unstructured Data: Why Purpose-Built Models Are the Answer

Today, Gartner and other leading analysts indicate a clear shift: traditional IDP, OCR, and ICR solutions are becoming obsolete, replaced by advanced large language models (LLMs) that are fine-tuned specifically for data extraction tasks. Unlike their predecessors, these purpose-built LLMs excel at interpreting the context of varied and complex documents without the constraints of static templates or limited pattern matching.

Fine-tuned, data-extraction-focused LLMs leverage deep learning to understand document context, recognize subtle variations in structure, and consistently output high-quality, structured data. They can classify documents, extract specific fields (such as contract numbers, customer names, policy details, dates, and transaction amounts), and validate extracted data with high accuracy, even from handwriting, low-quality scans, or unfamiliar layouts. Crucially, these models continually learn and improve by processing more examples, significantly reducing the need for ongoing human intervention.

McKinsey notes that organizations adopting these LLM-driven solutions see substantial improvements in accuracy, scalability, and operational efficiency compared to traditional OCR/ICR methods. By integrating seamlessly into enterprise workflows, these advanced LLM-based extraction systems allow RPA bots, AI agents, and automation pipelines to function effectively on the previously inaccessible 80% of unstructured enterprise data.

Consequently, industry leaders emphasize that enterprises must pivot toward fine-tuned, extraction-optimized LLMs as a central pillar of their data strategy. Treating unstructured data with the same rigor as structured data through these advanced models unlocks significant value, finally enabling true end-to-end automation and realizing the full potential of GenAI technologies.

Real-World Examples: Enterprises Tackling Unstructured Data with Nanonets

How are leading enterprises solving their unstructured data challenges today? A number of forward-thinking companies have deployed AI-driven document processing platforms like Nanonets to great success. These examples illustrate that with the right tools (and data mindset), even legacy, paper-heavy processes can become streamlined and autonomous:

- Asian Paints (Manufacturing): One of the largest paint companies in the world, Asian Paints dealt with thousands of vendor invoices and purchase orders. They used Nanonets to automate their invoice processing workflow, achieving a 90% reduction in processing time for Accounts Payable. This translated to freeing up about 192 hours of manual work per month for their finance team. The AI model extracts all key fields from invoices and integrates with their ERP, so staff no longer spend time typing in details or correcting errors.

- JTI (Japan Tobacco International) – Ukraine operations: JTI’s regional team faced a very long tax refund claim process that involved shuffling large amounts of paperwork between departments and government portals. After implementing Nanonets, they brought the turnaround time down from 24 weeks to just 1 week, a 96% improvement in efficiency. What used to be a multi-month ordeal of data entry and verification became a largely automated pipeline, dramatically speeding up cash flow from tax refunds.

- Suzano (Pulp & Paper Industry): Suzano, a global pulp and paper producer, processes purchase orders from various international clients. By integrating Nanonets into their order management, they reduced the time taken per purchase order from about 8 minutes to 48 seconds, roughly a 90% time reduction in handling each order. This was achieved by automatically reading incoming purchase documents (which arrive in different formats) and populating their system with the needed data. The result is faster order fulfillment and less manual workload.

- SaltPay (Fintech): SaltPay needed to manage a vast network of 100,000+ vendors, each submitting invoices in different formats. Nanonets allowed SaltPay to simplify vendor invoice management, reportedly saving 99% of the time previously spent on this process. What was once an overwhelming, error-prone task is now handled by AI with minimal oversight.
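
The percentage figures for JTI and Suzano follow directly from the before-and-after times quoted above. A short worked check, with `percent_reduction` as an illustrative helper name:

```python
def percent_reduction(before, after):
    """Percentage by which `after` is smaller than `before` (same units for both)."""
    return (before - after) / before * 100


# JTI: 24 weeks down to 1 week.
print(round(percent_reduction(24, 1), 1))       # 95.8
# Suzano: 8 minutes (480 seconds) down to 48 seconds.
print(round(percent_reduction(8 * 60, 48), 1))  # 90.0
```

The JTI result of 95.8% rounds to the 96% cited, and the Suzano figure comes out at exactly 90%.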

These cases underscore a common theme: organizations that leverage AI-driven data extraction can supercharge their automation efforts. They not only save time and labor costs but also improve accuracy (e.g. one case noted 99% accuracy achieved in data extraction) and scalability. Employees can be redeployed to more strategic work instead of typing or verifying data all day. The technology (tools) wasn’t the differentiator here; the key was getting the data pipeline in order with the help of specialized AI models. Once the data became accessible and clean, the existing automation tools (workflows, RPA bots, analytics, etc.) could finally deliver full value.

Clean Data Pipelines: The Foundation of the Autonomous Enterprise

In the pursuit of a “truly autonomous enterprise”, where processes run with minimal human intervention, having a clean, well-structured data pipeline is absolutely essential. A “truly autonomous enterprise” doesn’t just need better tools; it needs better data. Automation and AI are only as good as the information they consume, and when that fuel is messy or unstructured, the engine sputters. Garbage in, garbage out is the single biggest reason automation projects underdeliver.

Forward-thinking leaders now treat data readiness as a prerequisite, not an afterthought. Many enterprises spend 2–3 months upfront cleaning and organizing data before AI projects because skipping this step leads to poor outcomes. A clean data pipeline, where raw inputs like documents, sensor feeds, and customer queries are systematically collected, cleansed, and transformed into a single source of truth, is the foundation that allows automation to scale seamlessly. Once this is in place, new use cases can plug into existing data streams without reinventing the wheel.

In contrast, organizations with siloed, inconsistent data remain trapped in partial automation, constantly relying on humans to patch gaps and fix errors. True autonomy requires clean, consistent, and accessible data across the enterprise, much like self-driving cars need proper roads before they can operate at scale.

The takeaway: The tools for automation are more powerful than ever, but it’s the data that determines success. AI and RPA don’t fail for lack of capability; they fail for lack of clean, structured data. Solve that, and the path to the autonomous enterprise, and with it the next wave of productivity, opens up.

Sources: