This publish is cowritten with Thomas Voss and Bernhard Hersberger from Hapag-Lloyd.

Hapag-Lloyd is without doubt one of the world’s main transport firms with greater than 308 fashionable vessels, 11.9 million TEUs (twenty-foot equal items) transported per 12 months, and 16,700 motivated staff in additional than 400 places of work in 139 nations. They join continents, companies, and other people by way of dependable container transportation companies on the foremost commerce routes throughout the globe.

On this publish, we share how Hapag-Lloyd developed and applied a machine studying (ML)-powered assistant predicting vessel arrival and departure occasions that revolutionizes their schedule planning. Through the use of Amazon SageMaker AI and implementing strong MLOps practices, Hapag-Lloyd has enhanced its schedule reliability—a key efficiency indicator within the trade and high quality promise to their prospects.

For Hapag-Lloyd, correct vessel schedule predictions are essential for sustaining schedule reliability, the place schedule reliability is outlined as proportion of vessels arriving inside 1 calendar day (earlier or later) of their estimated arrival time, communicated round 3 to 4 weeks earlier than arrival.

Previous to creating the brand new ML answer, Hapag-Lloyd relied on easy rule-based and statistical calculations, primarily based on historic transit patterns for vessel schedule predictions. Whereas this statistical technique supplied primary predictions, it couldn’t successfully account for real-time circumstances corresponding to port congestion, requiring vital guide intervention from operations groups.

Growing a brand new ML answer to switch the present system introduced a number of key challenges:

- Dynamic transport circumstances – The estimated time of arrival (ETA) prediction mannequin must account for quite a few variables that have an effect on journey period, together with climate circumstances, port-related delays corresponding to congestion, labor strikes, and surprising occasions that drive route adjustments. For instance, when the Suez Canal was blocked by the Ever Given container ship in March 2021, vessels needed to be rerouted round Africa, including roughly 10 days to their journey occasions.

- Knowledge integration at scale – The event of correct fashions requires integration of enormous volumes of historic voyage information with exterior real-time information sources together with port congestion data and vessel place monitoring (AIS). The answer must scale throughout 120 vessel companies or strains and 1,200 distinctive port-to-port routes.

- Sturdy MLOps infrastructure – A sturdy MLOps infrastructure is required to constantly monitor mannequin efficiency and rapidly deploy updates at any time when wanted. This consists of capabilities for normal mannequin retraining to adapt to altering patterns, complete efficiency monitoring, and sustaining real-time inference capabilities for speedy schedule changes.

Hapag-Llyod’s earlier strategy to schedule planning couldn’t successfully handle these challenges. A complete answer that might deal with each the complexity of vessel schedule prediction and supply the infrastructure wanted to maintain ML operations at world scale was wanted.

The Hapag-Lloyd community consists of over 308 vessels and lots of extra accomplice vessels that constantly circumnavigate the globe on predefined service routes, leading to greater than 3,500 port arrivals monthly. Every vessel operates on a hard and fast service line, making common spherical journeys between a sequence of ports. For example, a vessel may repeatedly sail a route from Southampton to Le Havre, Rotterdam, Hamburg, New York, and Philadelphia earlier than beginning the cycle once more. For every port arrival, an ETA should be supplied a number of weeks prematurely to rearrange essential logistics, together with berth home windows at ports and onward transportation of containers by sea, land or air transport. The next desk reveals an instance the place a vessel travels from Southampton to New York by way of Le Havre, Rotterdam, and Hamburg. The vessel’s time till arrival on the New York port may be calculated because the sum of ocean to port time to Southampton, and the respective berth occasions and port-to-port occasions for the intermediate ports known as whereas crusing to New York. If this vessel encounters a delay in Rotterdam, it impacts its arrival in Hamburg and cascades by way of the complete schedule, impacting arrivals in New York and past as proven within the following desk. This ripple impact can disrupt fastidiously deliberate transshipment connections and require intensive replanning of downstream operations.

| Port | Terminal name | Scheduled arrival | Scheduled departure |

| SOUTHAMPTON | 1 | 2025-07-29 07:00 | 2025-07-29 21:00 |

| LE HAVRE | 2 | 2025-07-30 16:00 | 2025-07-31 16:00 |

| ROTTERDAM | 3 | 2025-08-03 18:00 | 2025-08-05 03:00 |

| HAMBURG | 4 | 2025-08-07 07:00 | 2025-08-08 07:00 |

| NEW YORK | 5 | 2025-08-18 13:00 | 2025-08-21 13:00 |

| PHILADELPHIA | 6 | 2025-08-22 06:00 | 2025-08-24 16:30 |

| SOUTHAMPTON | 7 | 2025-09-01 08:00 | 2025-09-02 20:00 |

When a vessel departs Rotterdam with a delay, new ETAs should be calculated for the remaining ports. For Hamburg, we solely have to estimate the remaining crusing time from the vessel’s present place. Nonetheless, for subsequent ports like New York, the prediction requires a number of parts: the remaining crusing time to Hamburg, the period of port operations in Hamburg, and the crusing time from Hamburg to New York.

Answer overview

As an enter to the vessel ETA prediction, we course of the next two information sources:

- Hapag-Lloyd’s inside information, which is saved in an information lake. This consists of detailed vessel schedules and routes, port and terminal efficiency data, real-time port congestion and ready occasions, and vessel traits datasets. This information is ready for mannequin coaching utilizing AWS Glue jobs.

- Automated Identification System (AIS) information, which gives streaming updates on the vessel actions. This AIS information ingestion is batched each 20 minutes utilizing AWS Lambda and consists of essential data corresponding to latitude, longitude, pace, and course of vessels. New batches are processed utilizing AWS Glue and Iceberg to replace the present AIS database—at the moment holding round 35 million observations.

These information sources are mixed to create coaching datasets for the ML fashions. We fastidiously take into account the timing of accessible information by way of temporal splitting to keep away from information leakage. Knowledge leakage happens when utilizing data that wouldn’t be obtainable at prediction time in the actual world. For instance, when coaching a mannequin to foretell arrival time in Hamburg for a vessel at the moment in Rotterdam, we will’t use precise transit occasions that have been solely identified after the vessel reached Hamburg.

A vessel’s journey may be divided into totally different legs, which led us to develop a multi-step answer utilizing specialised ML fashions for every leg, that are orchestrated as hierarchical fashions to retrieve the general ETA:

- The Ocean to Port (O2P) mannequin predicts the time wanted for a vessel to succeed in its subsequent port from its present place at sea. The mannequin makes use of options corresponding to remaining distance to vacation spot, vessel pace, journey progress metrics, port congestion information, and historic sea leg durations.

- The Port to Port (P2P) mannequin forecasts crusing time between any two ports for a given date, contemplating key options corresponding to ocean distance between ports, current transit time developments, climate, and seasonal patterns.

- The Berth Time mannequin estimates how lengthy a vessel will spend at port. The mannequin makes use of vessel traits (corresponding to tonnage and cargo capability), deliberate container load, and historic port efficiency.

- The Mixed mannequin takes as enter the predictions from the O2P, P2P, and Berth Time fashions, together with the unique schedule. Somewhat than predicting absolute arrival occasions, it computes the anticipated deviation from the unique schedule by studying patterns in historic prediction accuracy and particular voyage circumstances. These computed deviations are then used to replace ETAs for the upcoming ports in a vessel’s schedule.

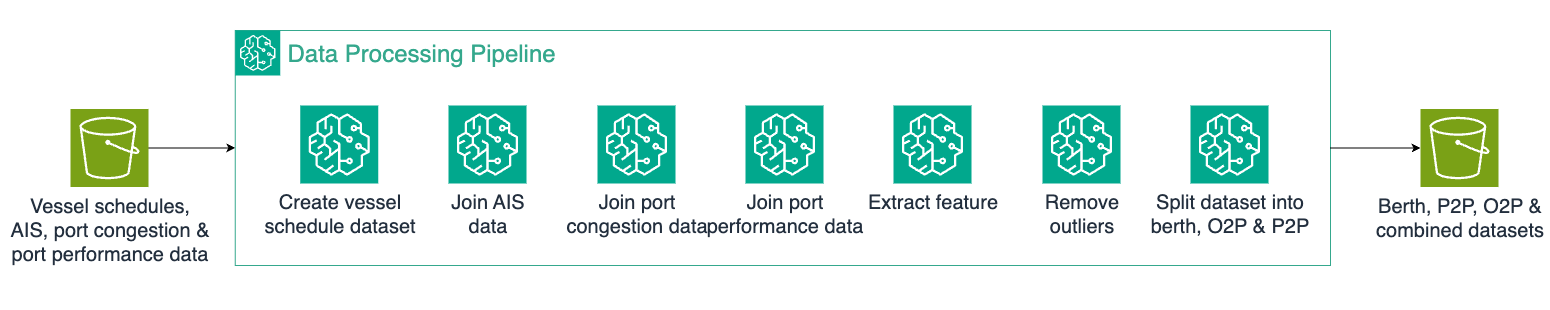

All 4 fashions are educated utilizing the XGBoost algorithm constructed into SageMaker, chosen for its capacity to deal with complicated relationships in tabular information and its strong efficiency with blended numerical and categorical options. Every mannequin has a devoted coaching pipeline in SageMaker Pipelines, dealing with information preprocessing steps and mannequin coaching. The next diagram reveals the info processing pipeline, which generates the enter datasets for ML coaching.

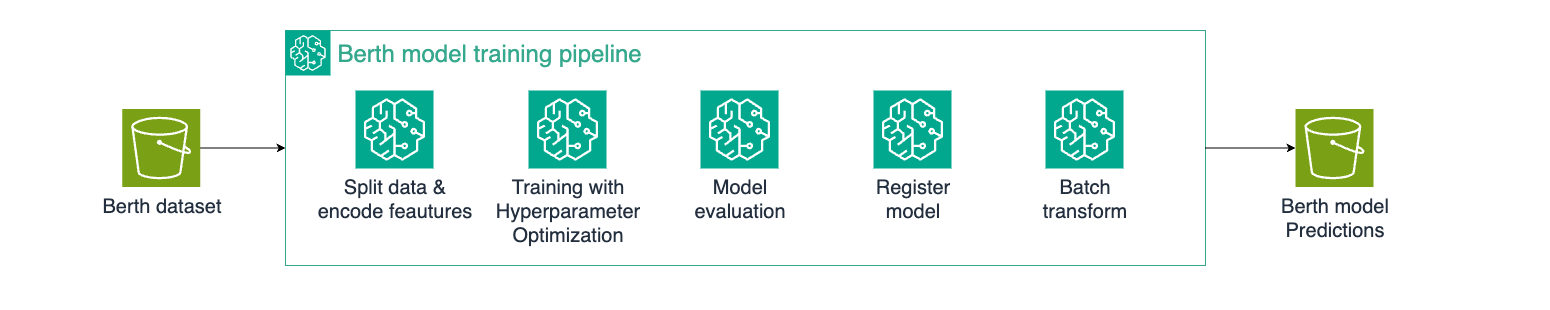

For example, this diagram reveals the coaching pipeline of the Berth mannequin. The steps within the SageMaker coaching pipelines of the Berth, P2P, O2P, and Mixed fashions are similar. Subsequently, the coaching pipeline is applied as soon as as a blueprint and re-used throughout the opposite fashions, enabling a quick turn-around time of the implementation.

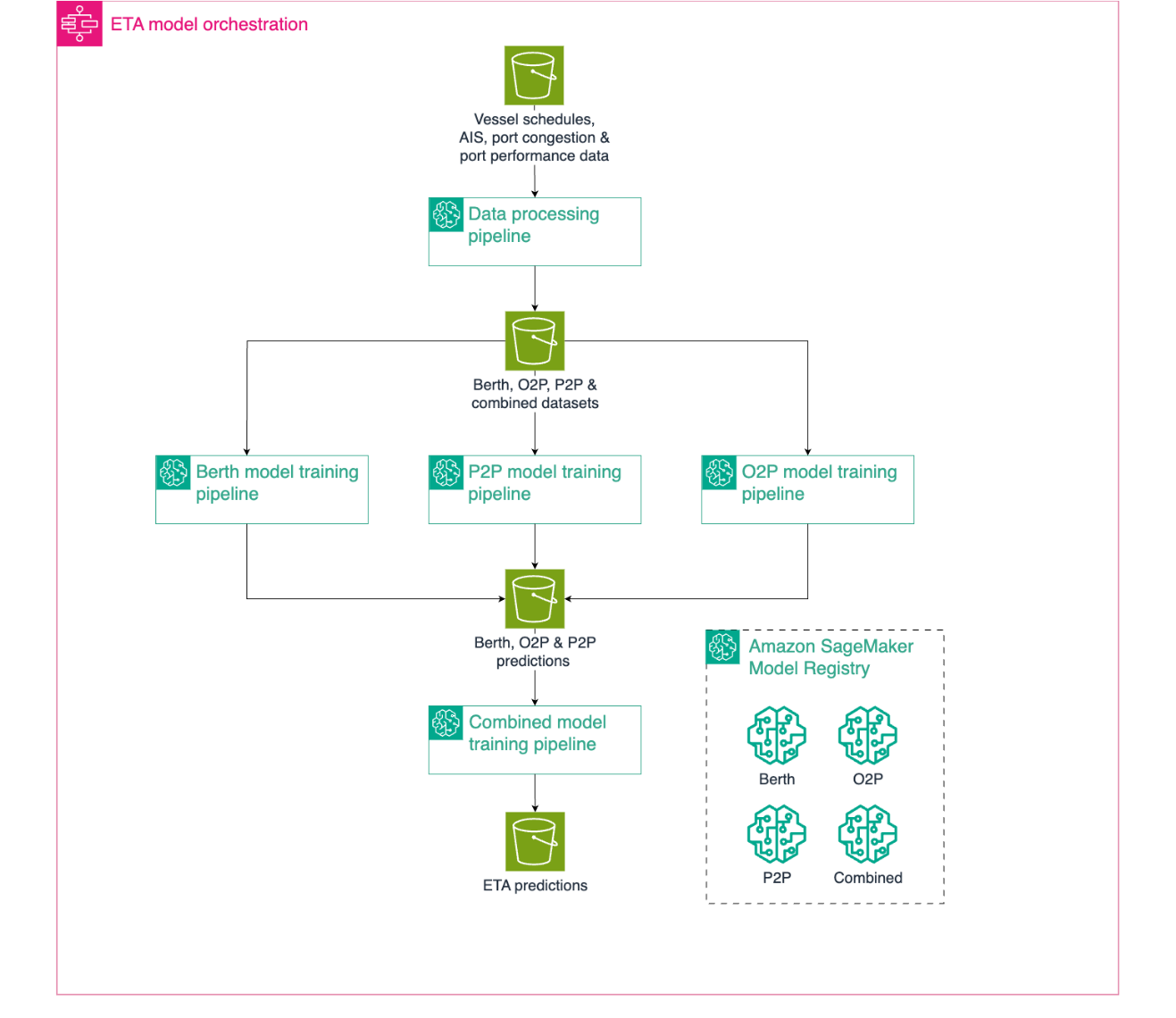

As a result of the Mixed mannequin is determined by outputs from the opposite three specialised fashions, we use AWS Step Capabilities to orchestrate the SageMaker pipelines for coaching. This helps be certain that the person fashions are up to date within the right sequence and maintains prediction consistency throughout the system. The orchestration of the coaching pipelines is proven within the following pipeline structure.

The person workflow begins with an information processing pipeline that prepares the enter information (vessel schedules, AIS information, port congestion, and port efficiency metrics) and splits it into devoted datasets. This feeds into three parallel SageMaker coaching pipelines for our base fashions (O2P, P2P, and Berth), every following a standardized means of characteristic encoding, hyperparameter optimization, mannequin analysis, and registration utilizing SageMaker Processing and hyperparameter turning jobs and SageMaker Mannequin Registry. After coaching, every base mannequin runs a SageMaker batch rework job to generate predictions that function enter options for the mixed mannequin coaching. The efficiency of the newest Mixed mannequin model is examined on the final 3 months of information with identified ETAs, and efficiency metrics (R², imply absolute error (MAE)) are computed. If the mannequin’s efficiency is beneath a set MAE threshold, the complete coaching course of fails and the mannequin model is robotically discarded, stopping the deployment of fashions that don’t meet the minimal efficiency threshold.

All 4 fashions are versioned and saved as separate mannequin bundle teams within the SageMaker Mannequin Registry, enabling systematic model management and deployment. This orchestrated strategy helps be certain that our fashions are educated within the right sequence utilizing parallel processing, leading to an environment friendly and maintainable coaching course of.The hierarchical mannequin strategy helps additional be certain that a level of explainability akin to the present statistical and rule-based answer is maintained—avoiding ML black field conduct. For instance, it turns into potential to spotlight unusually lengthy berthing time predictions when discussing predictions outcomes with enterprise consultants. This helps enhance transparency and construct belief, which in flip will increase acceptance inside the firm.

Inference answer walkthrough

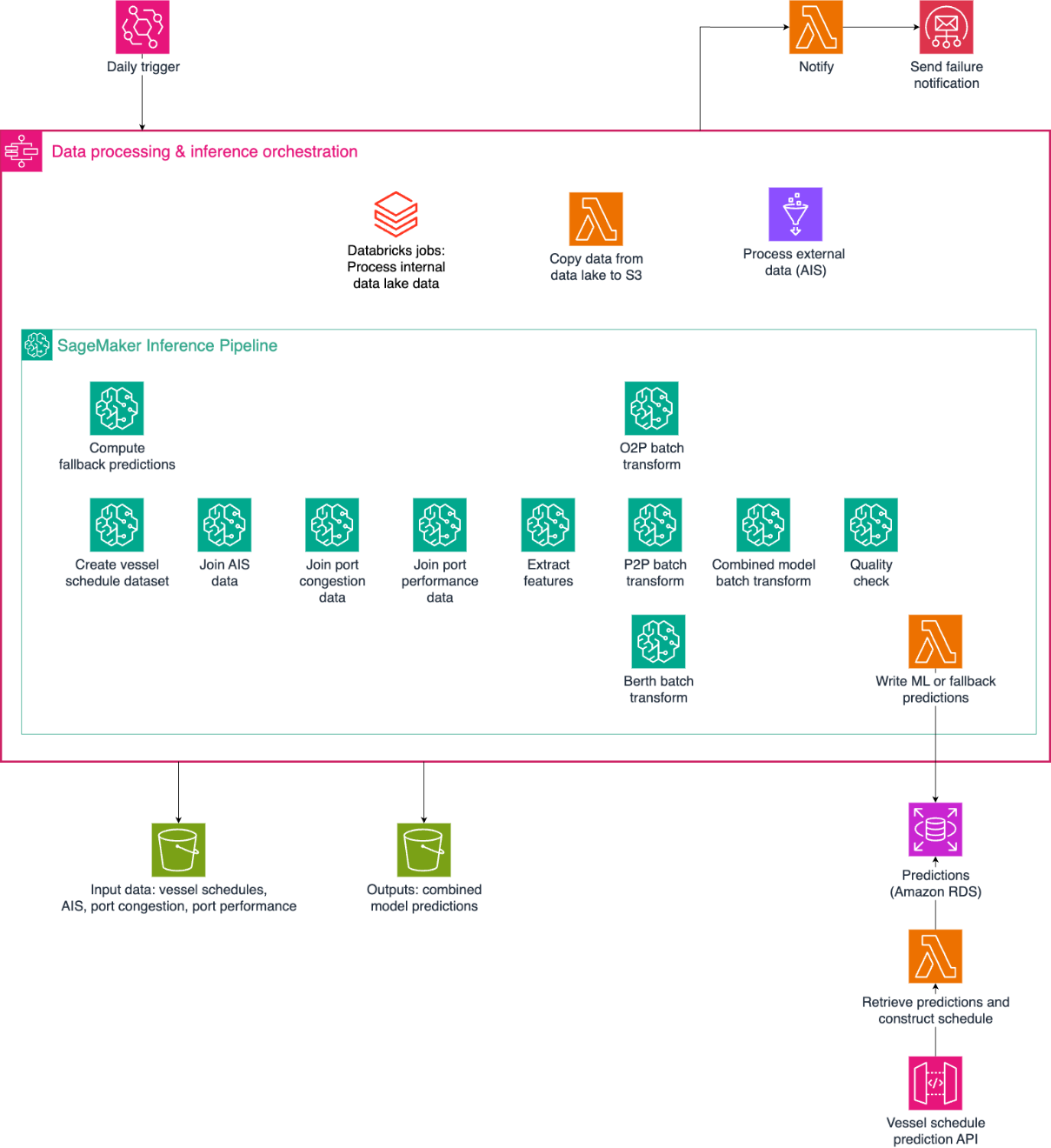

The inference infrastructure implements a hybrid strategy combining batch processing with real-time API capabilities as proven in Determine 5. As a result of most information sources replace every day and require intensive preprocessing, the core predictions are generated by way of nightly batch inference runs. These pre-computed predictions are complemented by a real-time API that implements enterprise logic for schedule adjustments and ETA updates.

- Day by day batch Inference:

- Amazon EventBridge triggers a Step Capabilities workflow day-after-day.

- The Step Capabilities workflow orchestrates the info and inference course of:

- Lambda copies inside Hapag-Lloyd information from the info lake to Amazon Easy Storage Service (Amazon S3).

- AWS Glue jobs mix the totally different information sources and put together inference inputs

- SageMaker inference executes in sequence:

- Fallback predictions are computed from historic averages and written to Amazon Relational Database Service (Amazon RDS). Fallback predictions are utilized in case of lacking information or a downstream inference failure.

- Preprocessing information for the 4 specialised ML fashions.

- O2P, P2P, and Berth mannequin batch transforms.

- The Mixed mannequin batch rework generates closing ETA predictions, that are written to Amazon RDS.

- Enter options and output information are saved in Amazon S3 for analytics and monitoring.

- For operational reliability, any failures within the inference pipeline set off speedy e mail notifications to the on-call operations group by way of Amazon Easy E mail Service (Amazon SES).

- Actual-time API:

- Amazon API Gateway receives shopper requests containing the present schedule and a sign for which vessel-port mixtures an ETA replace is required. By receiving the present schedule by way of the shopper request, we will handle intraday schedule updates whereas doing every day batch rework updates.

- The API Gateway triggers a Lambda perform calculating the response. The Lambda perform constructs the response by linking the ETA predictions (saved in Amazon RDS) with the present schedule utilizing customized enterprise logic, in order that we will handle short-term schedule adjustments unknown at inference time. Typical examples of short-term schedule adjustments are port omissions (for instance, because of port congestion) and one-time port calls.

This structure allows millisecond response occasions to customized requests whereas attaining a 99.5% availability (a most 3.5 hours downtime monthly).

Conclusion

Hapag Lloyd’s ML powered vessel scheduling assistant outperforms the present answer in each accuracy and response time. Typical API response occasions are within the order of lots of of milliseconds, serving to to make sure a real-time person expertise and outperforming the present answer by greater than 80%. Low response occasions are essential as a result of, along with totally automated schedule updates, enterprise consultants require low response occasions to work with the schedule assistant interactively. When it comes to accuracy, the MAE of the ML-powered ETA predictions outperform the present answer by roughly 12%, which interprets into climbing by two positions within the worldwide rating of schedule reliability on common. This is without doubt one of the key efficiency metrics in liner transport, and this can be a vital enchancment inside the trade.

To be taught extra about architecting and governing ML workloads at scale on AWS, see the AWS weblog publish Governing the ML lifecycle at scale, Half 1: A framework for architecting ML workloads utilizing Amazon SageMaker and the accompanying AWS workshop AWS Multi-Account Knowledge & ML Governance Workshop.

Acknowledgement

We acknowledge the numerous and worthwhile work of Michal Papaj and Piotr Zielinski from Hapag-Lloyd within the information science and information engineering areas of the mission.

In regards to the authors

Thomas Voss

Thomas Voss

Thomas Voss works at Hapag-Lloyd as an information scientist. Together with his background in academia and logistics, he takes satisfaction in leveraging information science experience to drive enterprise innovation and progress by way of the sensible design and modeling of AI options.

Bernhard Hersberger

Bernhard Hersberger

Bernhard Hersberger works as an information scientist at Hapag-Lloyd, the place he heads the AI Hub group in Hamburg. He’s passionate about integrating AI options throughout the corporate, taking complete accountability from figuring out enterprise points to deploying and scaling AI options worldwide.

Gabija Pasiunaite

Gabija Pasiunaite

At AWS, Gabija Pasiunaite was a Machine Studying Engineer at AWS Skilled Providers primarily based in Zurich. She specialised in constructing scalable ML and information options for AWS Enterprise prospects, combining experience in information engineering, ML automation and cloud infrastructure. Gabija has contributed to the AWS MLOps Framework utilized by AWS prospects globally. Outdoors work, Gabija enjoys exploring new locations and staying lively by way of climbing, snowboarding, and working.

Jean-Michel Lourier

Jean-Michel Lourier

Jean-Michel Lourier is a Senior Knowledge Scientist inside AWS Skilled Providers. He leads groups implementing information pushed purposes facet by facet with AWS prospects to generate enterprise worth out of their information. He’s captivated with diving into tech and studying about AI, machine studying, and their enterprise purposes. He’s additionally an enthusiastic bike owner.

Mousam Majhi

Mousam Majhi

Mousam Majhi is a Senior ProServe Cloud Architect specializing in Knowledge & AI inside AWS Skilled Providers. He works with Manufacturing and Journey, Transportation & Logistics prospects in DACH to realize their enterprise outcomes by leveraging information and AI powered options. Outdoors of labor, Mousam enjoys climbing within the Bavarian Alps.