At Amazon.ae, we serve approximately 10 million customers monthly across five countries in the Middle East and North Africa region: United Arab Emirates (UAE), Saudi Arabia, Egypt, Türkiye, and South Africa. Our AMET (Africa, Middle East, and Türkiye) Payments team manages payment selections, transactions, experiences, and affordability features across these diverse countries, publishing on average five new features monthly. Each feature requires comprehensive test case generation, which traditionally consumed 1 week of manual effort per project. Our quality assurance (QA) engineers spent this time analyzing business requirement documents (BRDs), design documents, UI mocks, and historical test preparations, a process that required one full-time engineer annually merely for test case creation.

To improve this manual process, we developed SAARAM (QA Lifecycle App), a multi-agent AI solution built with Amazon Bedrock, Claude Sonnet by Anthropic, and the Strands Agents SDK. It reduced the time needed to generate test cases from 1 week to hours while also improving test coverage quality. Our solution demonstrates how studying human cognitive patterns, rather than optimizing AI algorithms alone, can create production-ready systems that enhance rather than replace human expertise.

In this post, we explain how we overcame the limitations of single-agent AI systems through a human-centric approach, implemented structured outputs to significantly reduce hallucinations, and built a scalable solution now positioned for expansion across the AMET QA team and later across other QA teams in the International Emerging Stores and Payments (IESP) org.

Solution overview

The AMET Payments QA team validates code deployments affecting payment functionality for millions of customers across diverse regulatory environments and payment methods. Our manual test case generation process added turnaround time (TAT) to the product cycle, consuming valuable engineering resources on repetitive test prep and documentation tasks rather than strategic testing initiatives. We needed an automated solution that could maintain our quality standards while reducing the time investment.

Our objectives included reducing test case creation time from 1 week to a few hours, capturing institutional knowledge from experienced testers, standardizing testing approaches across teams, and minimizing the hallucination issues common in AI systems. The solution needed to handle complex business requirements spanning multiple payment methods, regional regulations, and customer segments while producing specific, actionable test cases aligned with our existing test management systems.

The architecture employs a multi-agent workflow. To achieve this, we went through three different iterations, and we continue to improve the system as new techniques are developed and new models are deployed.

The challenge with traditional AI approaches

Our initial attempts followed conventional AI approaches, feeding entire BRDs to a single AI agent for test case generation. This method frequently produced generic outputs like “verify payment works correctly” instead of the specific, actionable test cases our QA team requires. For example, we need test cases as specific as “verify that when a UAE customer selects cash on delivery (COD) for an order above 1,000 AED with a saved credit card, the system displays the COD fee of 11 AED and processes the payment through the COD gateway with order state transitioning to ‘pending delivery.’”

The single-agent approach presented several critical limitations. Context length restrictions prevented processing large documents effectively, and the lack of specialized processing phases meant the AI couldn’t understand testing priorities or risk-based approaches. Additionally, hallucination issues created irrelevant test scenarios that could mislead QA efforts. The root cause was clear: the AI attempted to compress complex business logic without the iterative thinking process that experienced testers employ when analyzing requirements.

The following flow chart illustrates our issues when attempting to use a single agent with a comprehensive prompt.

The human-centric breakthrough

Our breakthrough came from a fundamental shift in approach. Instead of asking, “How should AI think about testing?”, we asked, “How do experienced humans think about testing?” The goal was to follow a specific step-by-step process instead of relying on the large language model (LLM) to work this out on its own. This change in philosophy led us to conduct research interviews with senior QA professionals, studying their cognitive workflows in detail.

We discovered that experienced testers don’t process documents holistically. They work through specialized mental phases. First, they analyze documents by extracting acceptance criteria, identifying customer journeys, understanding UX requirements, mapping product requirements, analyzing user data, and assessing workstream capabilities. Then they develop tests through a systematic process: journey analysis, scenario identification, data flow mapping, test case development, and finally, organization and prioritization.

We then decomposed our original agent into sequential thinking actions that served as individual steps. We built and tested each step using Amazon Q Developer for CLI to make sure the basic ideas were sound and incorporated both primary and secondary inputs.

This insight led us to design SAARAM with specialized agents that mirror these expert testing approaches. Each agent focuses on a specific aspect of the testing process, similar to how human experts mentally compartmentalize different analysis phases.

Multi-agent architecture with Strands Agents

Based on our understanding of human QA workflows, we initially tried to build our own agents from scratch. We had to create our own looping, serial, and parallel execution. We also created our own orchestration and workflow graphs, which demanded considerable manual effort. To address these challenges, we migrated to the Strands Agents SDK. It provided the multi-agent orchestration capabilities essential for coordinating complex, interdependent tasks while maintaining clear execution paths, helping improve our performance and reduce our development time.

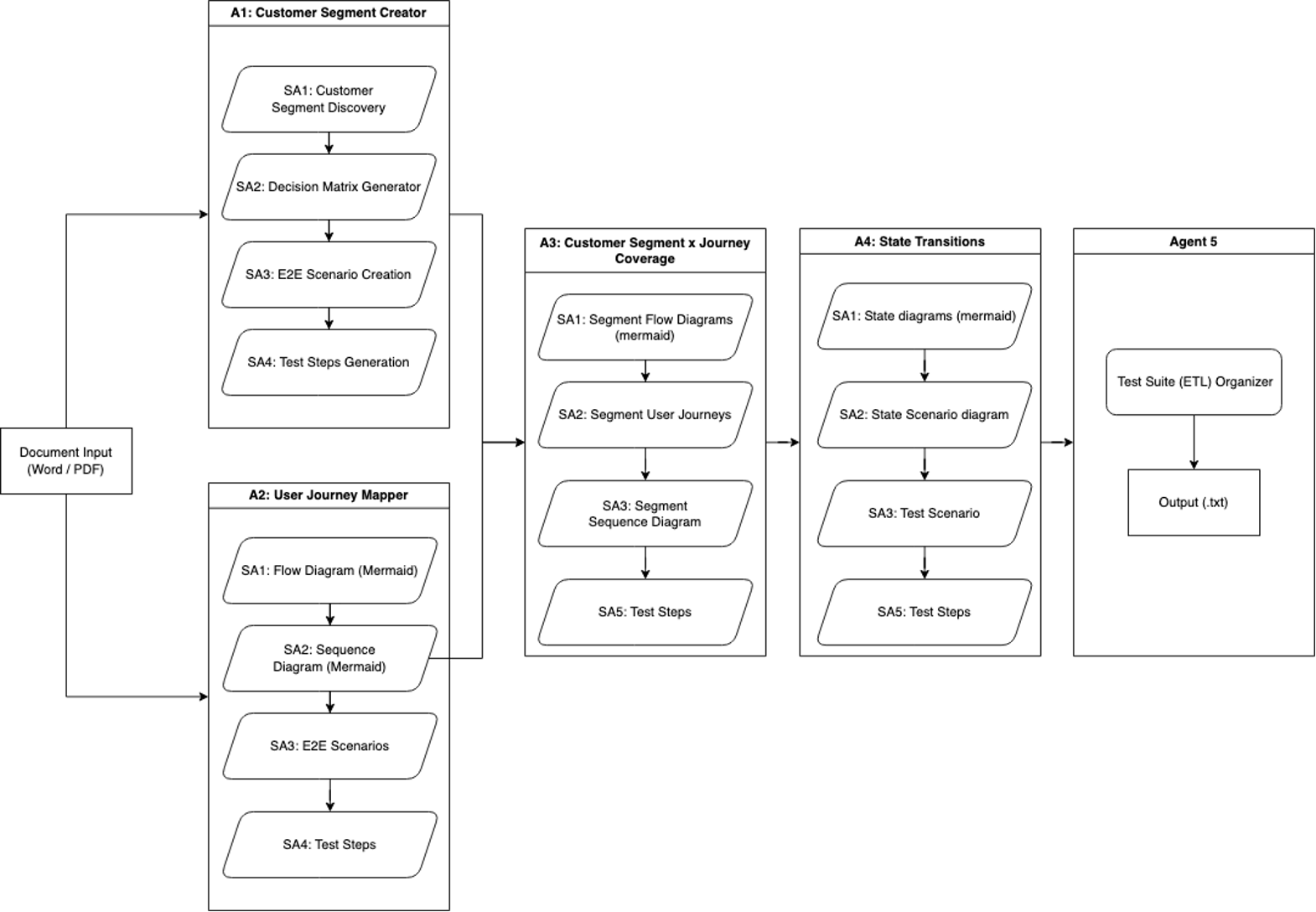

Workflow iteration 1: End-to-end test generation

Our first iteration of SAARAM took a single input and introduced our first specialized agents. It processed a work document through five specialized agents to generate comprehensive test coverage.

Agent 1 is called the Customer Segment Creator, and it focuses on customer segmentation analysis, using four subagents:

- Customer Segment Discovery identifies product user segments

- Decision Matrix Generator creates parameter-based matrices

- E2E Scenario Creation develops end-to-end (E2E) scenarios per segment

- Test Steps Generation performs detailed test case development

Agent 2 is called the User Journey Mapper, and it employs four subagents to map product journeys comprehensively:

- The Flow Diagram and Sequence Diagram creators generate diagrams using Mermaid syntax.

- The E2E Scenarios generator builds upon these diagrams.

- The Test Steps Generator is used for detailed test documentation.

Agent 3 is called Customer Segment x Journey Coverage, and it combines inputs from Agents 1 and 2 to create detailed segment-specific analyses. It uses four subagents:

Agent 4 is called the State Transition Agent. It analyzes various product state points in customer journey flows. Its subagents create Mermaid state diagrams representing different journey states, create segment-specific state scenario diagrams, and generate related test scenarios and steps.
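To make the State Transition Agent's output more concrete, the following minimal sketch renders a list of state transitions as Mermaid `stateDiagram-v2` text. In SAARAM the diagrams are produced by LLM subagents; the `to_mermaid_state_diagram` helper and the cash-on-delivery states shown here are hypothetical and only illustrate the target format.

```python
def to_mermaid_state_diagram(transitions):
    """Render (source, event, target) triples as Mermaid stateDiagram-v2 text."""
    lines = ["stateDiagram-v2"]
    for source, event, target in transitions:
        lines.append(f"    {source} --> {target}: {event}")
    return "\n".join(lines)


# Hypothetical order states for a cash-on-delivery journey ([*] marks start/end).
COD_TRANSITIONS = [
    ("[*]", "order placed", "PendingDelivery"),
    ("PendingDelivery", "cash collected", "Paid"),
    ("PendingDelivery", "delivery refused", "Cancelled"),
    ("Paid", "order closed", "[*]"),
]

diagram = to_mermaid_state_diagram(COD_TRANSITIONS)
print(diagram)
```

Each transition in such a diagram is a natural candidate for at least one test scenario, which is why state diagrams are a useful intermediate artifact for test generation.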

The workflow, shown in the following diagram, concludes with a basic extract, transform, and load (ETL) process that consolidates and deduplicates the data from the agents, saving the final output as a text file.

This systematic approach facilitates comprehensive coverage of customer journeys, segments, and various diagram types, enabling thorough test coverage generation through iterative processing by agents and subagents.

Addressing limitations and enhancing capabilities

In our journey to develop a more robust and efficient tool using Strands Agents, we identified five critical limitations in our initial approach:

- Context and hallucination challenges – Our first workflow was limited by segregated agent operations, where individual agents independently collected data and created visual representations. This isolation led to limited contextual understanding, resulting in reduced accuracy and increased hallucinations in the outputs.

- Data generation inefficiencies – The limited context available to agents caused another critical issue: the generation of excessive irrelevant data. Without proper contextual awareness, agents produced less focused outputs, leading to noise that obscured valuable insights.

- Limited parsing capabilities – The initial system’s data parsing scope proved too narrow, limited to only customer segments, journey mapping, and basic requirements. This restriction prevented agents from accessing the full spectrum of information needed for comprehensive analysis.

- Single-source input constraint – The workflow could only process Word documents, creating a significant bottleneck. Modern development environments require data from multiple sources, and this limitation prevented holistic data collection.

- Rigid architecture problems – The first workflow employed a tightly coupled system with rigid orchestration. This architecture made it difficult to modify, extend, or reuse components, limiting the system’s adaptability to changing requirements.

In our second iteration, we needed to implement strategic solutions to address these issues.

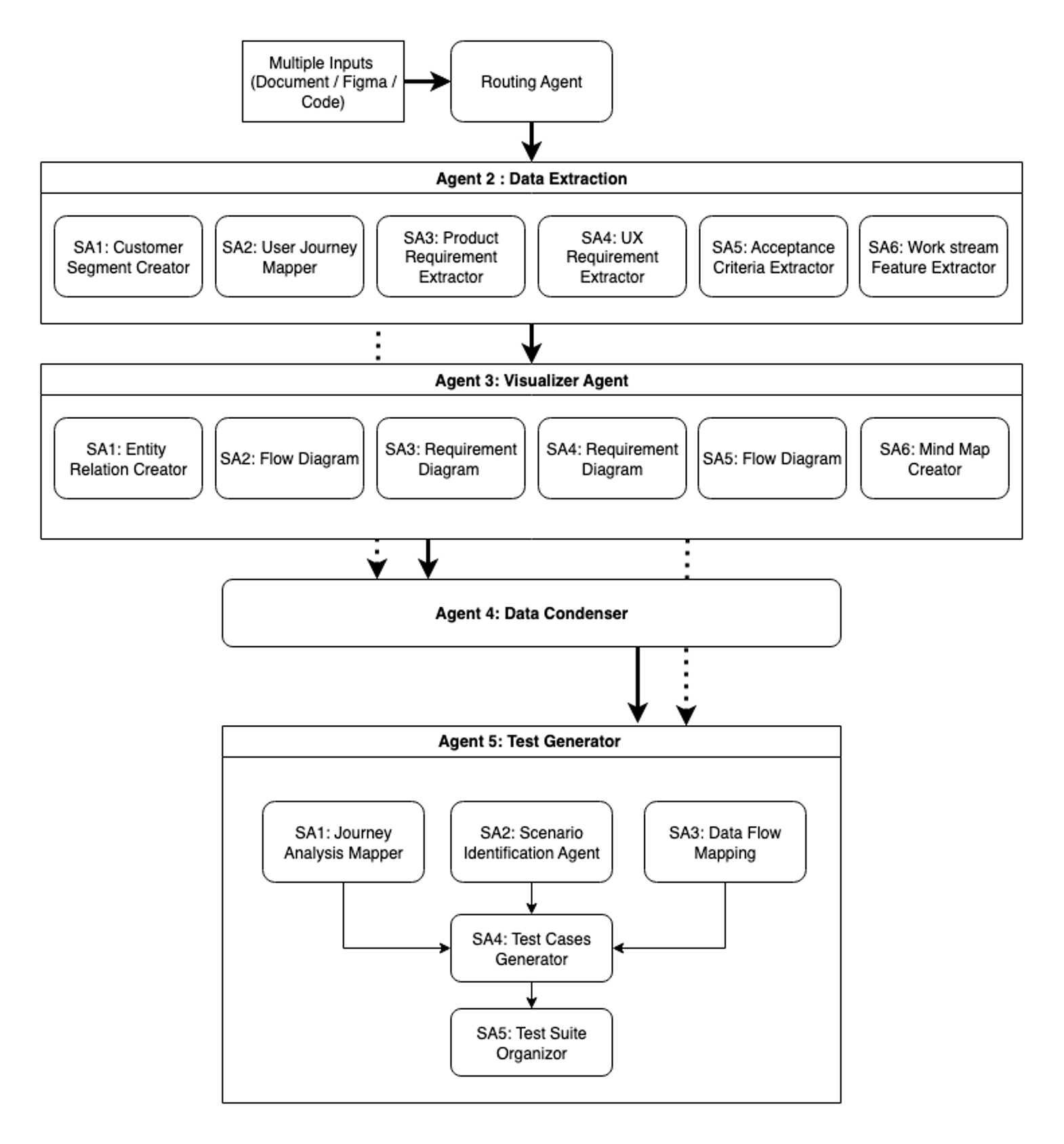

Workflow iteration 2: Comprehensive analysis workflow

Our second iteration represents a complete reimagining of the agentic workflow architecture. Rather than patching individual problems, we rebuilt from the ground up with modularity, context-awareness, and extensibility as core principles:

Agent 1 is the intelligent gateway. The file type decision agent serves as the system’s entry point and router. Processing documentation files, Figma designs, and code repositories, it categorizes and directs data to the appropriate downstream agents. This intelligent routing is essential for maintaining both efficiency and accuracy throughout the workflow.
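The routing idea can be sketched with a simple rule-based stand-in. The real gateway is an LLM-backed agent; the suffix table, the downstream agent names, and the `manual_review` fallback below are assumptions made purely for illustration.

```python
from pathlib import Path

# Hypothetical mapping from file suffix to the downstream agent that handles it.
ROUTES = {
    ".docx": "data_extractor",
    ".pdf": "data_extractor",
    ".md": "data_extractor",
    ".fig": "design_extractor",
    ".py": "code_extractor",
    ".java": "code_extractor",
}


def route_file(path: str) -> str:
    """Return the name of the downstream agent for a file, based on its suffix."""
    suffix = Path(path).suffix.lower()
    # Unknown types go to a manual-review bucket rather than being guessed at.
    return ROUTES.get(suffix, "manual_review")


def route_batch(paths):
    """Group input paths by the agent that should process them."""
    grouped = {}
    for path in paths:
        grouped.setdefault(route_file(path), []).append(path)
    return grouped


batch = route_batch(
    ["brd/checkout.DOCX", "mocks/cod_flow.fig", "src/refund.py", "notes.xyz"]
)
print(batch)
```

Keeping the routing decision in one place means a new input type only requires a new route and a new downstream agent, which is the kind of extensibility the second iteration was aiming for.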

Agent 2 is for specialized data extraction. The Data Extractor agent employs six specialized subagents, each focused on a specific extraction domain. This parallel processing approach facilitates thorough coverage while maintaining practical speed. Each subagent operates with domain-specific knowledge, extracting nuanced information that generalized approaches might overlook.

Agent 3 is the Visualizer agent, and it transforms extracted data into six distinct Mermaid diagram types, each serving a specific analytical purpose. Entity relationship diagrams map data relationships and structures, and flow diagrams visualize processes and workflows. Requirement diagrams clarify product specifications, and UX requirement visualizations illustrate user experience flows. Process flow diagrams detail system operations, and mind maps reveal feature relationships and hierarchies. These visualizations provide multiple perspectives on the same information, helping both human reviewers and downstream agents understand patterns and connections within complex datasets.

Agent 4 is the Data Condenser agent, and it performs crucial synthesis through intelligent context distillation, making sure each downstream agent receives exactly the information needed for its specialized task. This agent, powered by its condensed information generator, merges outputs from both the Data Extractor and Visualizer agents while performing further analysis.

The agent extracts critical elements from the full text context (acceptance criteria, business rules, customer segments, and edge cases), creating structured summaries that preserve essential details while reducing token usage. It compares each text file with its corresponding Mermaid diagram, capturing information that might be missed in visual representations alone. This careful processing maintains information integrity across agent handoffs, making sure important data is not lost as it flows through the system. The result is a set of condensed addendums that enrich the Mermaid diagrams with comprehensive context. This synthesis makes sure that when information moves to test generation, it arrives complete, structured, and optimized for processing.

Agent 5 is the Test Generator agent, which brings together the collected, visualized, and condensed information to produce comprehensive test suites. Working with six Mermaid diagrams plus condensed information from Agent 4, this agent employs a pipeline of five subagents. The Journey Analysis Mapper, Scenario Identification Agent, and Data Flow Mapping subagents each generate test cases based on their own view of the input data flowing from Agent 4. With the test cases generated across these three perspectives, the Test Cases Generator evaluates them, reformatting them according to internal guidelines for consistency. Finally, the Test Suite Organizer performs deduplication and optimization, delivering a final test suite that balances comprehensiveness with efficiency.
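The final deduplication step can be pictured with a small sketch. It assumes test cases are dictionaries with a `title` key and treats titles that differ only in case or punctuation as duplicates; the `organize_suite` helper and the sample titles are hypothetical, and the actual Test Suite Organizer is an LLM subagent that can also judge semantic overlap.

```python
import re


def normalize(title: str) -> str:
    """Lowercase a title and collapse punctuation so near-identical titles match."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def organize_suite(*perspectives):
    """Merge test cases from several generators, keeping the first copy of each."""
    seen = set()
    suite = []
    for cases in perspectives:
        for case in cases:
            key = normalize(case["title"])
            if key not in seen:
                seen.add(key)
                suite.append(case)
    # Renumber so IDs are consistent regardless of which subagent produced a case.
    return [{**case, "id": f"TC{i:03d}"} for i, case in enumerate(suite, start=1)]


# Hypothetical outputs from the three perspective subagents.
journey = [{"title": "Credit card payment success"}, {"title": "Payment gateway timeout"}]
scenario = [{"title": "credit-card payment success"}, {"title": "Refund processing"}]
data_flow = [{"title": "Payment gateway timeout."}]

suite = organize_suite(journey, scenario, data_flow)
for case in suite:
    print(case["id"], case["title"])
```

Here five generated cases collapse to three, because two of them repeat a title already produced by another perspective.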

The system now handles far more than the basic requirements and journey mapping of Workflow 1. It processes product requirements, UX specifications, acceptance criteria, and workstream extraction while accepting inputs from Figma designs, code repositories, and multiple document types. Most importantly, the shift to a modular architecture fundamentally changed how the system operates and evolves. Unlike our rigid first workflow, this design allows for reusing outputs from earlier agents, integrating agents for new testing types, and intelligently selecting test case generators based on user requirements, positioning the system for continuous adaptation.

The following figure shows our second iteration of SAARAM with five main agents and multiple subagents with context engineering and compression.

Additional Strands Agents features

Strands Agents provided the foundation for our multi-agent system, offering a model-driven approach that simplified complex agent development. Because the SDK can connect models with tools through advanced reasoning capabilities, we built sophisticated workflows with only a few lines of code. Beyond its core functionality, two key features proved essential for our production deployment: reducing hallucinations with structured outputs and workflow orchestration.

Reducing hallucinations with structured outputs

The structured output feature of Strands Agents uses Pydantic models to transform traditionally unpredictable LLM outputs into reliable, type-safe responses. This approach addresses a fundamental challenge in generative AI: although LLMs excel at producing humanlike text, they can struggle with the consistently formatted outputs needed for production systems. By enforcing schemas through Pydantic validation, we make sure that responses conform to predefined structures, enabling seamless integration with existing test management systems.

The following sample implementation demonstrates how structured outputs work in practice:

Pydantic automatically validates LLM responses against defined schemas to check type correctness and the presence of required fields. When responses don’t match the expected structure, validation errors provide clear feedback about what needs correction, helping prevent malformed data from propagating through the system. In our environment, this approach delivered consistent, predictable outputs across the agents regardless of prompt variations or model updates, minimizing an entire class of data formatting errors. As a result, our development team worked more efficiently with full IDE support.
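The validation behavior described above can be illustrated without any third-party dependency. The sketch below uses standard-library dataclasses as a stand-in for Pydantic models, so it is not the Strands Agents or Pydantic API; the `QATestCase` and `QAStep` schemas and the `parse_test_case` helper are hypothetical.

```python
import json
from dataclasses import dataclass, fields


@dataclass
class QAStep:
    action: str
    expected_result: str


@dataclass
class QATestCase:
    test_id: str
    scenario: str
    steps: list


def parse_test_case(raw: str) -> QATestCase:
    """Parse a JSON response and reject it if required fields are missing or malformed."""
    data = json.loads(raw)
    missing = [f.name for f in fields(QATestCase) if f.name not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    if not isinstance(data["steps"], list) or not data["steps"]:
        raise ValueError("steps must be a non-empty list")
    # QAStep(**step) raises TypeError if a step has missing or unexpected keys.
    steps = [QAStep(**step) for step in data["steps"]]
    return QATestCase(str(data["test_id"]), str(data["scenario"]), steps)


good = (
    '{"test_id": "TC006", "scenario": "Credit card payment success", '
    '"steps": [{"action": "Submit payment", "expected_result": "Order confirmed"}]}'
)
bad = '{"test_id": "TC999", "scenario": "Verify payment works"}'

case = parse_test_case(good)
print(case.test_id, len(case.steps))

try:
    parse_test_case(bad)
except ValueError as err:
    print("rejected:", err)
```

The second response is the kind of vague, step-free output a single prompt tends to produce; with a schema in place it is rejected at the boundary instead of reaching the test management system.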

Workflow orchestration benefits

The Strands Agents workflow architecture provided the coordination capabilities our multi-agent system required. The framework enabled structured coordination with explicit task definitions, automatic parallel execution for independent tasks, and sequential processing for dependent operations. This meant we could build complex agent-to-agent communication patterns that would have been difficult to implement manually.

The following sample snippet shows how to create a workflow in the Strands Agents SDK:

The workflow system delivered three critical capabilities for our use case. First, parallel processing optimization allowed journey analysis, scenario identification, and coverage analysis to run concurrently, with independent agents processing different aspects without blocking one another. The system automatically allocated resources based on availability, maximizing throughput.

Second, intelligent dependency management made sure that test development waited for scenario identification to complete, and that organization tasks waited for the test cases to be generated. Context was preserved and passed efficiently between dependent stages, maintaining information integrity throughout the workflow.

Finally, the built-in reliability features provided the resilience our system required. Automatic retry mechanisms handled transient failures gracefully, state persistence enabled pause and resume capabilities for long-running workflows, and comprehensive audit logging supported both debugging and performance optimization efforts.
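The dependency and parallelism behavior described above can be approximated with the Python standard library. This sketch uses `graphlib.TopologicalSorter` and a thread pool rather than the Strands Agents workflow API; the task names follow the stages named in the text, and `run_task` is a placeholder for a real agent call.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter

# Task name -> set of tasks it depends on.
DEPENDENCIES = {
    "journey_analysis": set(),
    "scenario_identification": set(),
    "coverage_analysis": set(),
    "test_development": {"scenario_identification"},
    "organization": {"test_development"},
}


def run_task(name, context):
    """Placeholder for an agent call; reports which upstream results it could see."""
    upstream = sorted(dep for dep in DEPENDENCIES[name] if dep in context)
    return f"{name} done (inputs: {', '.join(upstream) or 'none'})"


def run_workflow(dependencies):
    """Run tasks in dependency order, executing each ready batch in parallel."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    context, batches = {}, []
    with ThreadPoolExecutor() as pool:
        while sorter.is_active():
            ready = sorted(sorter.get_ready())
            batches.append(ready)
            # Tasks in one batch have no unmet dependencies, so they can run concurrently.
            results = list(pool.map(lambda n: run_task(n, context), ready))
            for name, result in zip(ready, results):
                context[name] = result
                sorter.done(name)
    return batches, context


batches, context = run_workflow(DEPENDENCIES)
for batch in batches:
    print(batch)
```

For simplicity, this version waits for a whole batch to finish before starting the next one; a production orchestrator would typically start each task as soon as its own dependencies complete, and would add the retry and persistence features mentioned above.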

The following table shows examples of input into the workflow and the potential outputs.

| Input: Business requirement document | Output: Test cases generated |
| --- | --- |
| Functional requirements: | TC006: Credit card payment success. Scenario: Customer completes purchase using valid credit card. Steps: 1. Add items to cart and proceed to checkout. Expected result: Checkout form displayed. 2. Enter shipping information. Expected result: Shipping details saved. 3. Select credit card payment method. Expected result: Card form shown. 4. Enter valid card details. Expected result: Card validated. 5. Submit payment. Expected result: Payment processed, order confirmed. TC008: Payment failure handling. Scenario: Payment fails due to insufficient funds or card decline. Steps: 1. Enter card with insufficient funds. Expected result: Payment declined message. 2. System offers retry option. Expected result: Payment form redisplayed. 3. Try alternative payment method. Expected result: Alternative payment successful. TC009: Payment gateway timeout. TC010: Refund processing. |

Integration with Amazon Bedrock

Amazon Bedrock served as the foundation for our AI capabilities, providing seamless access to Claude Sonnet by Anthropic through the built-in AWS service integration of Strands Agents. We selected Claude Sonnet by Anthropic for its reasoning capabilities and its ability to understand complex payment domain requirements. The flexible LLM API integration of Strands Agents made this implementation straightforward. The following snippet shows how to create an agent in Strands Agents:

The managed service architecture of Amazon Bedrock reduced the infrastructure complexity of our deployment. The service provided automatic scaling that adjusted to our workload demands, facilitating consistent performance across the agents regardless of traffic patterns. Built-in retry logic and error handling improved system reliability, reducing the operational overhead typically associated with managing AI infrastructure at scale. The combination of the orchestration capabilities of Strands Agents and the robust infrastructure of Amazon Bedrock created a production-ready system that could handle complex test generation workflows while maintaining high reliability and performance standards.

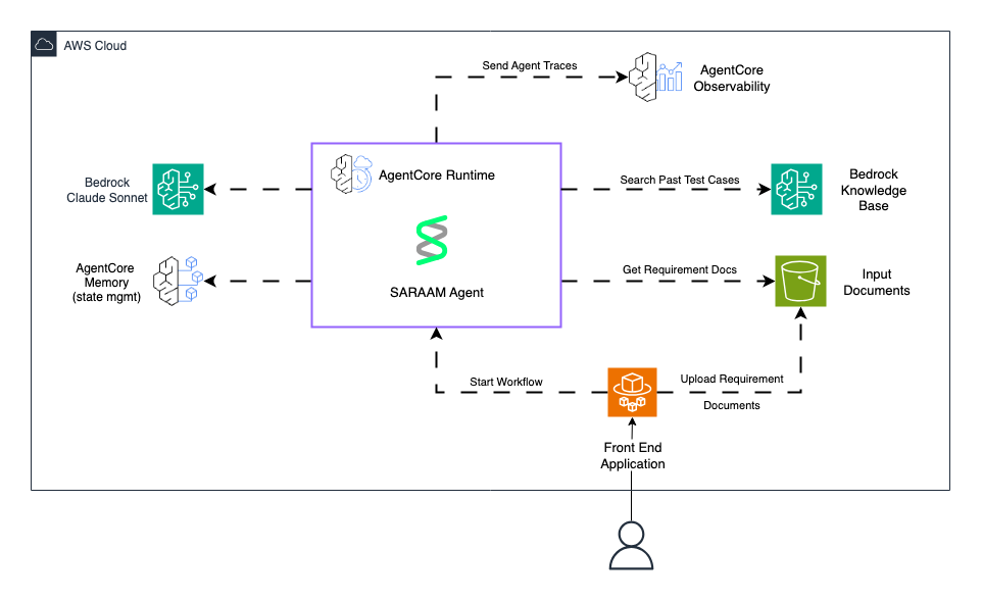

The following diagram shows the deployment of the SAARAM agent with Amazon Bedrock AgentCore and Amazon Bedrock.

Results and business impact

The implementation of SAARAM has improved our QA processes with measurable gains across multiple dimensions. Before SAARAM, our QA engineers spent 3–5 days manually analyzing BRDs and UI mocks to create comprehensive test cases. This manual process is now reduced to hours, with the system achieving:

- Test case generation time: Potentially reduced from 1 week to hours

- Resource optimization: QA effort decreased from 1.0 full-time employee (FTE) to 0.2 FTE for validation

- Coverage improvement: 40% more edge cases identified compared to the manual process

- Consistency: 100% adherence to test case standards and formats

The accelerated test case generation has driven improvements in our core business metrics:

- Payment success rate: Increased through comprehensive edge case testing and risk-based test prioritization

- Payment experience: Enhanced customer satisfaction because teams can now iterate on test coverage during the design phase

- Developer velocity: Product and development teams generate preliminary test cases during design, enabling early quality feedback

SAARAM captures and preserves institutional knowledge that was previously dependent on individual QA engineers:

- Testing patterns from experienced professionals are now codified

- Historical test case learnings are automatically applied to new features

- Testing approaches are consistent across different payment methods and industries

- Onboarding time for new QA team members is reduced

This iterative improvement means that the system becomes more valuable over time.

Lessons learned

Our journey developing SAARAM provided crucial insights for building production-ready AI systems. Our breakthrough came from studying how domain experts think rather than optimizing how AI processes information. Understanding the cognitive patterns of testers and QA professionals led to an architecture that naturally aligns with human reasoning. This approach produced better results than purely technical optimizations. Organizations building similar systems should invest time observing and interviewing domain experts before designing their AI architecture, because the insights gained translate directly into more effective agent design.

Breaking complex tasks into specialized agents dramatically improved both accuracy and reliability. Our multi-agent architecture, enabled by the orchestration capabilities of Strands Agents, handles nuances that monolithic approaches consistently miss. Each agent’s focused responsibility allows deeper domain expertise while providing better error isolation and debugging capabilities.

A key discovery was that the Strands Agents workflow and graph-based orchestration patterns significantly outperformed traditional supervisor agent approaches. Supervisor agents make dynamic routing decisions that can introduce variability, whereas workflows provide “agents on rails”: a structured path that facilitates consistent, reproducible results. Strands Agents offers multiple patterns, including supervisor-based routing, workflow orchestration for sequential processing with dependencies, and graph-based coordination for complex scenarios. For test generation, where consistency is paramount, the workflow pattern with its explicit task dependencies and parallel execution capabilities delivered the best balance of flexibility and control. This structured approach suits production environments where reliability matters more than theoretical flexibility.

Implementing Pydantic models through the Strands Agents structured output feature effectively reduced type-related hallucinations in our system. By requiring AI responses to conform to strict schemas, we obtain reliable, programmatically usable outputs. This approach has proven essential when consistency and reliability are nonnegotiable. The type-safe responses and automatic validation have become foundational to our system’s reliability.

Our condensed information generator pattern demonstrates how intelligent context management maintains quality throughout multistage processing. This approach of deciding what to preserve, condense, and pass between agents helps prevent the context degradation that typically occurs in token-limited environments. The pattern is broadly applicable to multistage AI systems facing similar constraints.

What’s next

The modular architecture we’ve built with Strands Agents allows straightforward adaptation to other domains within Amazon. The same patterns that generate payment test cases can be applied to retail systems testing, customer service scenario generation for support workflows, and mobile application UI and UX test case generation. Each adaptation requires only domain-specific prompts and schemas while reusing the core orchestration logic. Throughout the development of SAARAM, the team addressed many challenges in test case generation, from reducing hallucinations through structured outputs to implementing multi-agent workflows. However, one critical gap remains: the system hasn’t yet been provided with examples of what high-quality test cases actually look like in practice.

To bridge this gap, integrating Amazon Bedrock Knowledge Bases with a curated repository of historical test cases would provide SAARAM with concrete, real-world examples during the generation process. By using the integration capabilities of Strands Agents with Amazon Bedrock Knowledge Bases, the system could search through past successful test cases to find relevant scenarios before generating new ones. When processing a BRD for a new payment feature, SAARAM would first query the knowledge base for comparable test cases (whether for similar payment methods, customer segments, or transaction flows) and use these as contextual examples to guide its output.
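The retrieve-then-generate idea can be sketched as follows. This is only a stand-in: it ranks historical test case titles by simple word overlap (Jaccard similarity) instead of the semantic retrieval that Amazon Bedrock Knowledge Bases would provide, and the `retrieve_examples` helper and the sample history are hypothetical.

```python
def tokens(text: str) -> set:
    """Split text into a set of lowercase words."""
    return set(text.lower().split())


def jaccard(a: set, b: set) -> float:
    """Overlap between two token sets, from 0.0 (disjoint) to 1.0 (identical)."""
    return len(a & b) / len(a | b) if a | b else 0.0


def retrieve_examples(query: str, history: list, top_k: int = 2) -> list:
    """Return the top_k historical test cases most similar to the query."""
    q = tokens(query)
    ranked = sorted(history, key=lambda case: jaccard(q, tokens(case)), reverse=True)
    return ranked[:top_k]


# Hypothetical historical test case titles.
HISTORY = [
    "cash on delivery fee displayed for UAE order",
    "credit card payment declined insufficient funds",
    "refund processed to original credit card",
    "cash on delivery unavailable above order limit",
]

examples = retrieve_examples("cash on delivery order for Saudi Arabia customer", HISTORY)
prompt = "Use these past test cases as examples:\n" + "\n".join(f"- {e}" for e in examples)
print(prompt)
```

The retrieved examples would be prepended to the generation prompt, so the model sees what a good test case for a comparable feature looked like before it writes new ones.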

Future deployment will use Amazon Bedrock AgentCore for comprehensive agent lifecycle management. Amazon Bedrock AgentCore Runtime provides the production execution environment with ephemeral, session-specific state management that maintains conversational context during active sessions while facilitating isolation between different user interactions. The observability capabilities of Bedrock AgentCore help deliver detailed visualizations of each step in SAARAM’s multi-agent workflow, which the team can use to trace execution paths through the five agents, audit intermediate outputs from the Data Condenser and Test Generator agents, and identify performance bottlenecks through real-time dashboards powered by Amazon CloudWatch with standardized OpenTelemetry-compatible telemetry.

The service enables several advanced capabilities essential for production deployment: centralized agent management and versioning through the Amazon Bedrock AgentCore control plane, A/B testing of different workflow strategies and prompt variations across the five subagents within the Test Generator, performance monitoring with metrics tracking token usage and latency across the parallel execution phases, automated agent updates without disrupting active test generation workflows, and session persistence for maintaining context when QA engineers iteratively refine test suite outputs. This integration positions SAARAM for enterprise-scale deployment while providing the operational visibility and reliability controls that transform it from a proof of concept into a production system capable of supporting the AMET team’s ambitious goal of expanding beyond Payments QA to serve the broader organization.

Conclusion

SAARAM demonstrates how AI can change traditional QA processes when designed with human expertise at its core. By reducing test case creation from 1 week to hours while improving quality and coverage, we’ve enabled faster feature deployment and enhanced payment experiences for millions of customers across the MENA region. The key to our success wasn’t merely advanced AI technology. It was the combination of human expertise, thoughtful architecture design, and robust engineering practices. Through careful study of how experienced QA professionals think, implementation of multi-agent systems that mirror those cognitive patterns, and mitigation of AI limitations through structured outputs and context engineering, we’ve created a system that enhances rather than replaces human expertise.

For teams considering similar initiatives, our experience emphasizes three critical success factors: invest time understanding the cognitive processes of domain experts, implement structured outputs to minimize hallucinations, and design multi-agent architectures that mirror human problem-solving approaches. These QA tools aren’t intended to replace human testers; they amplify their expertise through intelligent automation. If you’re interested in starting your journey on agents with AWS, check out our sample Strands Agents implementations repo or our latest release, Amazon Bedrock AgentCore, and the end-to-end examples with deployment on our Amazon Bedrock AgentCore samples repo.

About the authors

Jayashree is a Quality Assurance Engineer at Amazon Music Tech, where she combines rigorous manual testing expertise with an emerging passion for GenAI-powered automation. Her work focuses on maintaining high system quality standards while exploring innovative approaches to make testing more intelligent and efficient. Committed to reducing testing monotony and improving product quality across Amazon’s ecosystem, Jayashree is at the forefront of integrating artificial intelligence into quality assurance practices.

Harsha Pradha G is a Snr. Quality Assurance Engineer in MENA Payments at Amazon. With a strong foundation in building comprehensive quality strategies, she brings a unique perspective to the intersection of QA and AI as an emerging QA-AI integrator. Her work focuses on bridging the gap between traditional testing methodologies and cutting-edge AI innovations, while also serving as an AI content strategist and AI Creator.

Fahim Surani is a Senior Solutions Architect at AWS, helping customers across Financial Services, Energy, and Telecommunications design and build cloud and generative AI solutions. His focus since 2022 has been driving enterprise cloud adoption, spanning cloud migrations, cost optimization, and event-driven architectures, including leading implementations recognized as early adopters of Amazon’s latest AI capabilities. Fahim’s work covers a wide range of use cases, with a primary interest in generative AI and agentic architectures. He is a regular speaker at AWS summits and industry events across the region.
