At this year’s International Conference on Machine Learning (ICML 2025), Jaeho Kim, Yunseok Lee and Seulki Lee won an outstanding position paper award for their work Position: The AI Conference Peer Review Crisis Demands Author Feedback and Reviewer Rewards. We hear from Jaeho about the problems they were trying to address, and about their proposed author feedback mechanism and reviewer reward system.

Could you say something about the problem that you address in your position paper?

Our position paper addresses the problems plaguing current AI conference peer review systems, while also raising questions about the future direction of peer review.

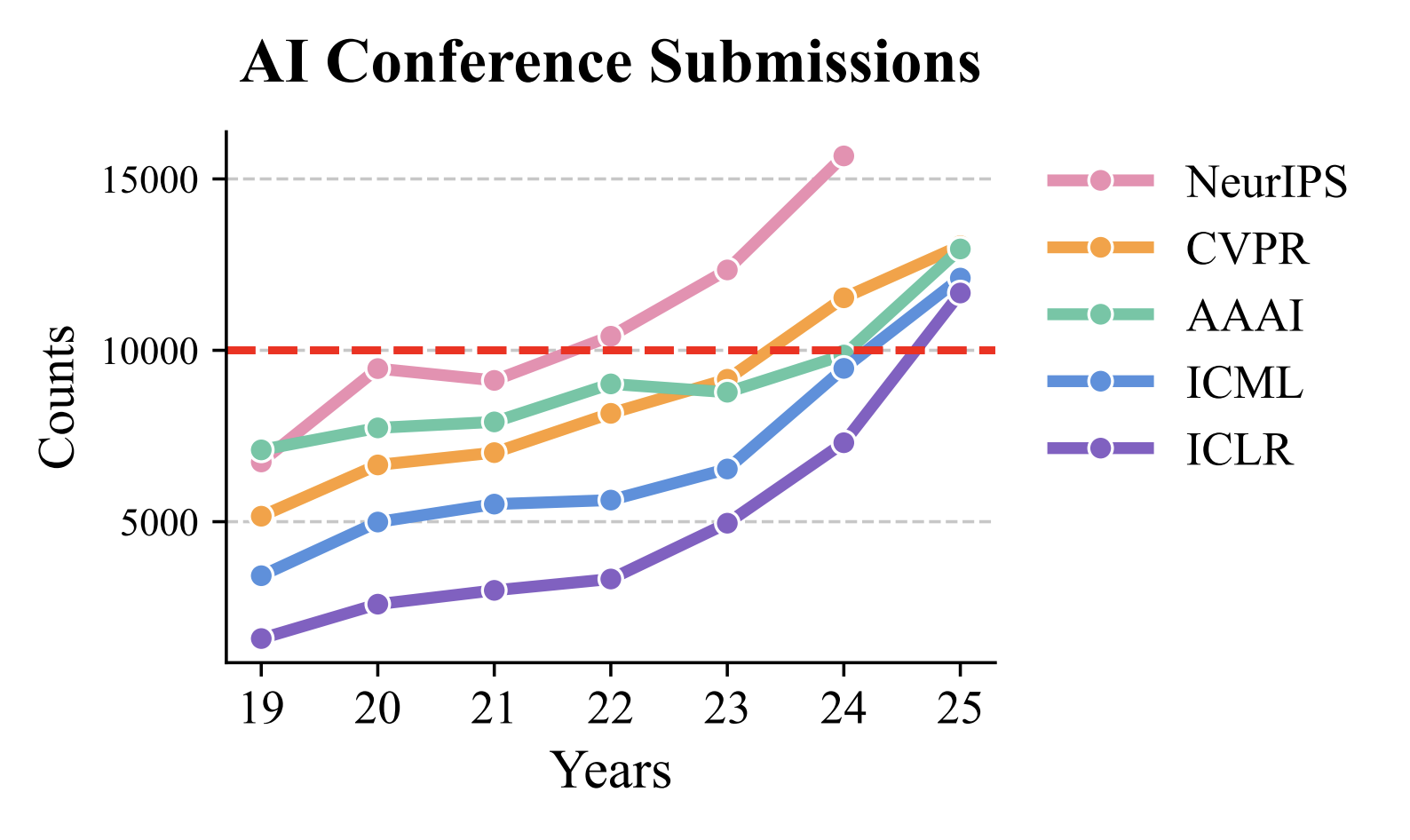

The pressing problem with the current peer review system in AI conferences is the exponential growth in paper submissions driven by increasing interest in AI. To put this in numbers, NeurIPS received over 30,000 submissions this year, while ICLR saw a 59.8% increase in submissions in just one year. This massive increase in submissions has created a fundamental mismatch: while paper submissions grow exponentially, the pool of qualified reviewers has not kept pace.

Submissions to some of the major AI conferences over the past few years.


This imbalance has severe consequences. The majority of papers no longer receive adequate review quality, undermining peer review’s essential function as a gatekeeper of scientific knowledge. When the review process fails, inappropriate papers and flawed research can slip through, potentially polluting the scientific record.

Considering AI’s profound societal impact, this breakdown in quality control poses risks that extend far beyond academia. Poor research that enters the scientific discourse can mislead future work, influence policy decisions, and ultimately hinder genuine advances in knowledge. Our position paper focuses on this critical question and proposes ways to improve the quality of review, leading to better dissemination of knowledge.

What do you argue for in the position paper?

Our position paper proposes two major changes to tackle the current peer review crisis: an author feedback mechanism and a reviewer reward system.

First, the author feedback system enables authors to formally evaluate the quality of the reviews they receive. This system allows authors to assess reviewers’ comprehension of their work, identify potential signs of LLM-generated content, and establish basic safeguards against unfair, biased, or superficial reviews. Importantly, this is not about penalizing reviewers, but rather about creating minimal accountability to protect authors from the small minority of reviewers who may not meet professional standards.

Second, our reviewer incentive system provides both immediate and long-term professional value for quality reviewing. For short-term motivation, author evaluation scores determine eligibility for digital badges (such as “Top 10% Reviewer” recognition) that can be displayed on academic profiles like OpenReview and Google Scholar. For long-term career impact, we propose novel metrics such as a “reviewer impact score”, essentially an h-index calculated from the subsequent citations of the papers a reviewer has evaluated. This treats reviewers as contributors to the papers they help improve and validates their role in advancing scientific knowledge.
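To make the “reviewer impact score” concrete, here is a minimal Python sketch. It assumes each paper a reviewer evaluated already has one citation count attached; which papers count (accepted only, or every reviewed submission) and where the counts come from are not specified here, and the function name `reviewer_impact_score` is hypothetical. The score is computed exactly like an ordinary h-index: the largest h such that h of the reviewed papers have at least h citations each.

```python
def reviewer_impact_score(citations: list[int]) -> int:
    """Hypothetical reviewer impact score: an h-index over reviewed papers.

    citations: one citation count per paper the reviewer evaluated.
    Returns the largest h such that h of those papers have >= h citations.
    """
    # Rank the reviewed papers from most to least cited.
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        # The paper at position `rank` must have at least `rank` citations.
        if count < rank:
            break
        h = rank
    return h


print(reviewer_impact_score([25, 8, 5, 3, 3, 1]))  # → 3
```

In this example, the reviewer’s three most-cited reviewed papers each have at least three citations, but the fourth has only three citations rather than four, so the score is 3.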

Could you tell us more about your proposal for this new two-way peer review method?

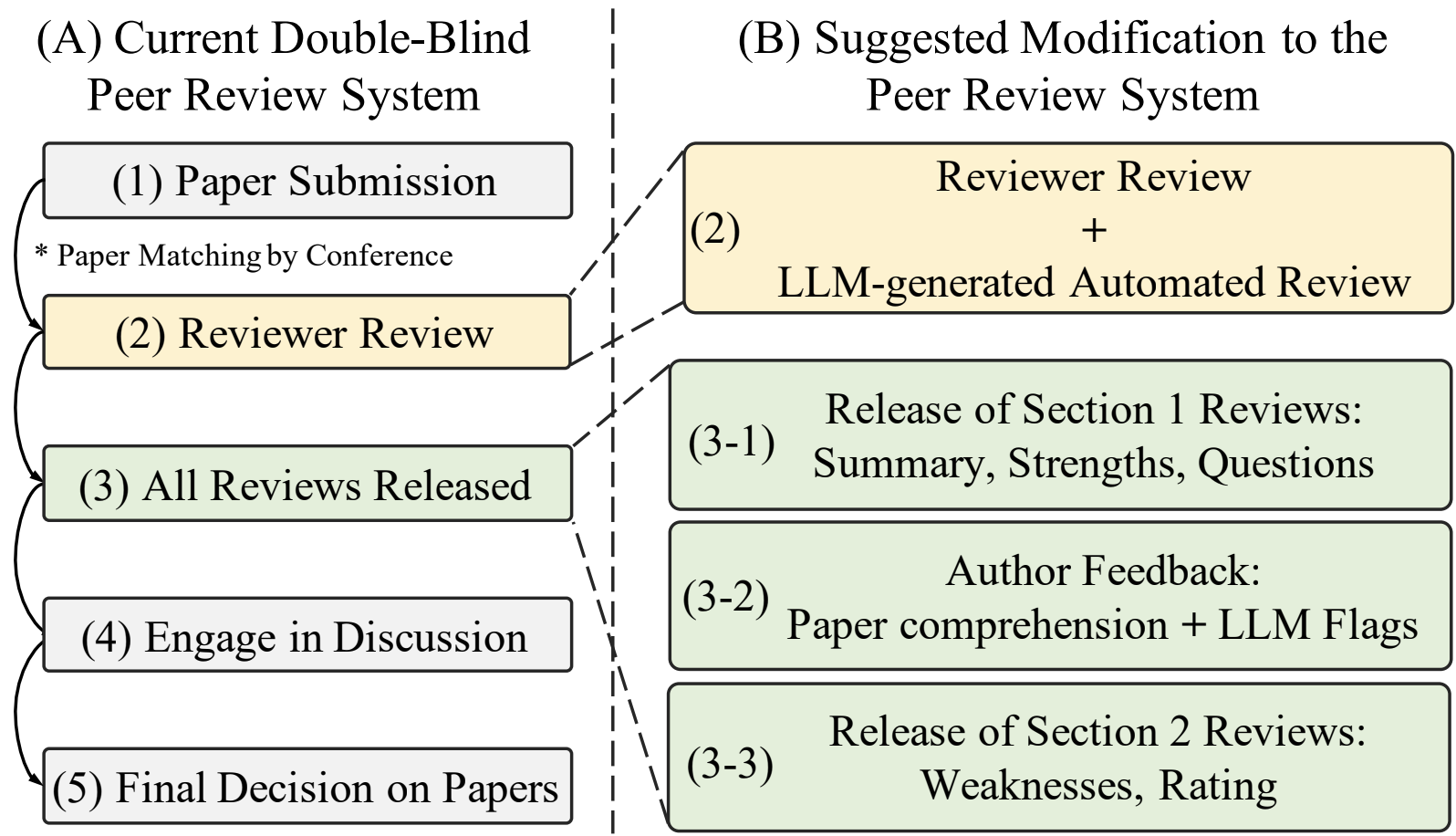

Our proposed two-way peer review system makes one key change to the current process: we split the release of reviews into two phases.

The authors’ proposed modification to the peer review system.


Currently, authors submit papers, reviewers write complete reviews, and all reviews are released at once. In our system, authors first receive only the neutral sections: the summary, strengths, and questions about their paper. Authors then provide feedback on whether reviewers properly understood their work. Only after this feedback do we release the second part, containing the weaknesses and scores.
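The two-phase release can be modeled as a small data structure. This is an illustrative Python sketch, not the paper’s implementation: the class and field names are hypothetical, and the 1-to-5 feedback scale is an assumption. Authors see only the summary, strengths and questions until they submit feedback; only then are the weaknesses and the rating revealed.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Review:
    # Phase 1: neutral sections, released to authors immediately.
    summary: str
    strengths: str
    questions: str
    # Phase 2: withheld until the authors have rated the review.
    weaknesses: str
    rating: int
    # Hypothetical author feedback on a 1-5 scale; None until submitted.
    author_feedback: Optional[int] = None

    def visible_to_authors(self) -> dict[str, object]:
        """Return only the sections the authors may see at this point."""
        view: dict[str, object] = {
            "summary": self.summary,
            "strengths": self.strengths,
            "questions": self.questions,
        }
        if self.author_feedback is not None:
            # Phase 2 is unlocked only after feedback has been recorded.
            view["weaknesses"] = self.weaknesses
            view["rating"] = self.rating
        return view

    def submit_feedback(self, score: int) -> None:
        """Record the authors' rating of this review, unlocking phase 2."""
        if not 1 <= score <= 5:
            raise ValueError("feedback score must be between 1 and 5")
        if self.author_feedback is not None:
            # Freeze feedback so it cannot be revised after seeing the scores.
            raise RuntimeError("feedback has already been submitted")
        self.author_feedback = score
```

One choice in this sketch that the interview does not specify: feedback is frozen once submitted, so authors cannot change their assessment of a reviewer after seeing the weaknesses and scores.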

This approach offers three important benefits. First, it is practical: we don’t need to change existing timelines or review templates, and the second part can be released immediately after the authors give feedback. Second, it protects authors from irresponsible reviews, since reviewers know their work will be evaluated. Third, since reviewers typically review multiple papers, we can track their feedback scores to help area chairs identify (ir)responsible reviewers.

The key insight is that authors know their own work best and can quickly spot when a reviewer hasn’t properly engaged with their paper.

Could you talk about the concrete reward system that you suggest in the paper?

We propose both short-term and long-term rewards to address reviewer motivation, which naturally declines over time even when reviewers start out enthusiastic.

Short-term: Digital badges displayed on reviewers’ academic profiles, awarded based on author feedback scores. The goal is to make reviewer contributions more visible. While some conferences list top reviewers on their websites, these lists are hard to find. Our badges would be prominently displayed on profiles and could even be printed on conference name tags.

Example of a badge that could appear on profiles.
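A badge such as “Top 10% Reviewer” needs a rule for turning per-review author feedback into a ranking. The Python sketch below uses one hypothetical rule: average each reviewer’s feedback scores across all the papers they reviewed, ignore reviewers with fewer than `min_reviews` reviews, and award the badge to the top `top_percent` percent (always at least one reviewer). The function name, the thresholds, the plain mean, and the alphabetical tie-breaking are all assumptions for illustration, not details from the paper.

```python
from collections import defaultdict
from statistics import mean


def top_reviewers(
    feedback: list[tuple[str, float]],
    top_percent: int = 10,
    min_reviews: int = 3,
) -> set[str]:
    """Return reviewer IDs eligible for a hypothetical "Top N% Reviewer" badge.

    feedback: (reviewer_id, author_feedback_score) pairs, one per review.
    """
    # Group every author feedback score under the reviewer who wrote the review.
    scores: dict[str, list[float]] = defaultdict(list)
    for reviewer_id, score in feedback:
        scores[reviewer_id].append(score)

    # Only reviewers with enough reviews are ranked (threshold is an assumption).
    averages = {r: mean(s) for r, s in scores.items() if len(s) >= min_reviews}
    if not averages:
        return set()

    # Integer arithmetic avoids float rounding; always award at least one badge.
    k = max(1, len(averages) * top_percent // 100)
    # Highest average first; ties broken alphabetically by reviewer ID.
    ranked = sorted(averages, key=lambda r: (-averages[r], r))
    return set(ranked[:k])


feedback = [("A", 5), ("A", 5), ("A", 4)]
feedback += [(r, 3) for r in "BCDEFGHIJ" for _ in range(3)]
feedback += [("K", 5), ("K", 5)]  # only two reviews, so K is not ranked
print(top_reviewers(feedback))  # → {'A'}
```

A plain mean is easy to game, for example by writing uniformly agreeable reviews, so a real deployment would likely need a more robust aggregate.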

Long-term: Numerical metrics to quantify reviewer impact at AI conferences. We suggest tracking measures such as an h-index for reviewed papers. These metrics could be included in academic portfolios, similar to how we currently track publication impact.

The core idea is to create tangible career benefits for reviewers while establishing peer review as a professional academic service that rewards both authors and reviewers.

What do you think could be some of the pros and cons of implementing this system?

The benefits of our system are threefold. First, it is a very practical solution. Our approach doesn’t change current review schedules or review workloads, making it easy to incorporate into existing systems. Second, it encourages reviewers to act more responsibly, knowing their work will be evaluated. We emphasize that most reviewers already act professionally; however, even a small number of irresponsible reviewers can seriously damage the peer review system. Third, at sufficient scale, author feedback scores will make conferences more sustainable. Area chairs will have better information about reviewer quality, enabling them to make more informed decisions about paper acceptance.

However, there is strong potential for gaming by reviewers. Reviewers might optimize for rewards by giving overly positive reviews. Measures to counteract these problems are definitely needed, and we are currently exploring solutions to address this issue.

Are there any concluding thoughts you’d like to add about the potential future of conferences and peer review?

One emerging trend we’ve observed is the increasing discussion of LLMs in peer review. While we believe current LLMs have several weaknesses (e.g., prompt injection, shallow reviews), we also think they will eventually surpass humans. When that happens, we will face a fundamental dilemma: if LLMs provide better reviews, why should humans be reviewing? The rapid rise of LLMs caught us unprepared and created chaos, and we cannot afford a repeat. We should start preparing for this question as soon as possible.

About Jaeho


Jaeho Kim is a Postdoctoral Researcher at Korea University with Professor Changhee Lee. He received his Ph.D. from UNIST under the supervision of Professor Seulki Lee. His main research focuses on time series learning, particularly developing foundation models that generate synthetic and human-guided time series data to reduce computational and data costs. He also contributes to improving the peer review process at major AI conferences, with his work recognized by the ICML 2025 Outstanding Position Paper Award.

Read the work in full

Position: The AI Conference Peer Review Crisis Demands Author Feedback and Reviewer Rewards, Jaeho Kim, Yunseok Lee, Seulki Lee.

AIhub is a non-profit dedicated to connecting the AI community to the public by providing free, high-quality information in AI.
