This post is co-written with Julieta Rappan, Macarena Blasi, and María Candela Blanco from the Government of the City of Buenos Aires.

The Government of the City of Buenos Aires continuously works to improve citizen services. In February 2019, it launched an AI assistant named Boti, available through WhatsApp, the most widely used messaging service in Argentina. With Boti, citizens can conveniently and quickly access a wide variety of information about the city, such as renewing a driver’s license, accessing healthcare services, and learning about cultural events. This AI assistant has become a preferred communication channel and facilitates more than 3 million conversations each month.

As Boti grows in popularity, the Government of the City of Buenos Aires seeks to provide new conversational experiences that harness the latest developments in generative AI. One challenge that citizens often face is navigating the city’s complex bureaucratic landscape. The City Government’s website includes over 1,300 government procedures, each of which has its own logic, nuances, and exceptions. The City Government recognized that Boti could improve access to this information by directly answering citizens’ questions and connecting them to the right procedure.

To pilot this new solution, the Government of the City of Buenos Aires partnered with the AWS Generative AI Innovation Center (GenAIIC). The teams worked together to develop an agentic AI assistant using LangGraph and Amazon Bedrock. The solution includes two main components: an input guardrail system and a government procedures agent. The input guardrail uses a custom LLM classifier to analyze incoming user queries, determining whether to approve or block requests based on their content. Approved requests are handled by the government procedures agent, which retrieves relevant procedural information and generates responses. Because most user queries focus on a single procedure, we developed a novel reasoning retrieval system to improve retrieval accuracy. This system first retrieves comparative summaries that disambiguate similar procedures and then applies a large language model (LLM) to select the most relevant results. The agent uses this information to craft responses in Boti’s characteristic style, delivering short, helpful, and expressive messages in Argentina’s Rioplatense Spanish dialect. We focused on distinctive linguistic features of this dialect, including the voseo (using “vos” instead of “tú”) and the periphrastic future (using “ir a” before verbs).

In this post, we dive into the implementation of the agentic AI system. We begin with an overview of the solution, explaining its design and main features. Then, we discuss the guardrail and agent subcomponents and assess their performance. Our evaluation shows that the guardrails effectively block harmful content, including offensive language, harmful opinions, prompt injection attempts, and unethical behaviors. The agent achieves up to 98.9% top-1 retrieval accuracy using the reasoning retriever, which marks a 12.5–17.5% improvement over standard retrieval-augmented generation (RAG) methods. Subject matter experts found that Boti’s responses were 98% accurate in voseo usage and 92% accurate in periphrastic future usage. The promising results of this solution establish a new era of citizen-government interaction.

Solution overview

The Government of the City of Buenos Aires and the GenAIIC built an agentic AI assistant using Amazon Bedrock and LangGraph that includes an input guardrail system to enable safe interactions and a government procedures agent to respond to user questions. The workflow is shown in the following diagram.

The process begins when a user submits a question. The question is passed in parallel to the input guardrail system and the government procedures agent. The input guardrail system determines whether the question contains harmful content. If triggered, it stops graph execution and redirects the user to ask questions about government procedures. Otherwise, the agent continues to formulate its response. The agent either calls a retrieval tool, which allows it to obtain relevant context and metadata from government procedures stored in Amazon Bedrock Knowledge Bases, or responds to the user. Both the input guardrail and the government procedures agent use the Amazon Bedrock Converse API for LLM inference. This API provides access to a wide selection of LLMs, helping us optimize performance and latency across different subtasks.
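The parallel flow described above can be illustrated with a minimal sketch. It uses plain `asyncio` rather than LangGraph, and the function names, the keyword check, and the redirect wording are all hypothetical stand-ins for the real LLM calls.

```python
import asyncio

# Hypothetical redirect wording; the post does not give the real message.
REDIRECT_MESSAGE = "I can only help with questions about government procedures."


async def run_guardrail(question: str) -> bool:
    """Stand-in for the LLM classifier; returns True when the question is blocked."""
    await asyncio.sleep(0)  # placeholder for an LLM call
    return "ignore previous instructions" in question.lower()


async def run_agent(question: str) -> str:
    """Stand-in for the government procedures agent."""
    await asyncio.sleep(0.01)  # placeholder for retrieval plus generation
    return f"Answer about: {question}"


async def handle_question(question: str) -> str:
    # Start both branches at the same time so the guardrail adds little latency.
    guardrail_task = asyncio.create_task(run_guardrail(question))
    agent_task = asyncio.create_task(run_agent(question))

    if await guardrail_task:
        # Guardrail triggered: stop the agent branch and redirect the user.
        agent_task.cancel()
        try:
            await agent_task
        except asyncio.CancelledError:
            pass
        return REDIRECT_MESSAGE
    return await agent_task
```

Calling `asyncio.run(handle_question("How do I renew my license?"))` returns the agent's answer, while a question that trips the stand-in guardrail returns the redirect message and the agent branch is cancelled.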

Input guardrail system

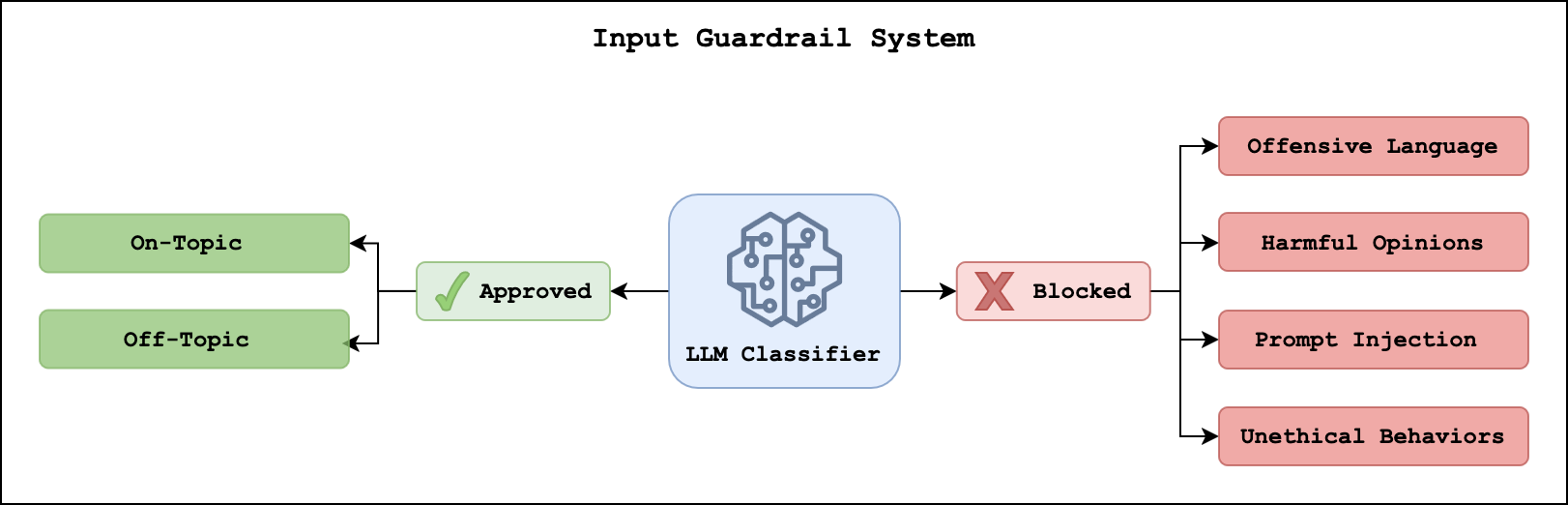

Input guardrails help prevent the LLM system from processing harmful content. Although Amazon Bedrock Guardrails offers one implementation approach with filters for specific words, content, or sensitive information, we developed a custom solution. This gave us greater flexibility to optimize performance for Rioplatense Spanish and to monitor specific types of content. The following diagram illustrates our approach, in which an LLM classifier assigns a primary category (“approved” or “blocked”) as well as a more detailed subcategory.

Approved queries are within the scope of the government procedures agent. They consist of on-topic requests, which focus on government procedures, and off-topic requests, which are low-risk conversational questions that the agent responds to directly. Blocked queries contain high-risk content that Boti should avoid, including offensive language, harmful opinions, prompt injection attacks, or unethical behaviors.

We evaluated the input guardrail system on a dataset consisting of both normal and harmful user queries. The system successfully blocked 100% of harmful queries, while occasionally flagging normal queries as harmful. This performance balance makes sure that Boti can provide helpful information while maintaining safe and appropriate interactions for users.
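One way to picture the two-level classification is to validate the classifier’s output before routing. The sketch below is only an illustration: it assumes the LLM is prompted to reply with JSON, the label names are hypothetical (the post names the categories only in prose), and the fail-closed fallback is a design choice made for this example.

```python
import json
from dataclasses import dataclass

# Label names are hypothetical; the post describes these categories only in prose.
SUBCATEGORIES = {
    "approved": {"on_topic", "off_topic"},
    "blocked": {
        "offensive_language",
        "harmful_opinions",
        "prompt_injection",
        "unethical_behavior",
    },
}


@dataclass(frozen=True)
class GuardrailDecision:
    category: str
    subcategory: str

    @property
    def is_blocked(self) -> bool:
        return self.category == "blocked"


def parse_decision(raw_llm_output: str) -> GuardrailDecision:
    """Validate the classifier's JSON reply; fail closed on anything unexpected."""
    try:
        data = json.loads(raw_llm_output)
        category, subcategory = data["category"], data["subcategory"]
        valid = subcategory in SUBCATEGORIES[category]
    except (json.JSONDecodeError, KeyError, TypeError):
        valid = False
    if not valid:
        # Assumption for this sketch: an unusable reply is treated as blocked.
        return GuardrailDecision("blocked", "unparseable")
    return GuardrailDecision(category, subcategory)
```

Keeping the subcategory alongside the primary decision makes it possible to monitor which kinds of content are being blocked, which is one of the motivations the post gives for a custom guardrail.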

Agent system

The government procedures agent is responsible for answering user questions. It determines when to retrieve relevant procedural information using its retrieval tool and generates responses in Boti’s characteristic style. In the following sections, we examine both processes.

Reasoning retriever

The agent can use a retrieval tool to provide accurate and up-to-date information about government procedures. Retrieval tools typically employ a RAG framework to perform semantic similarity searches between user queries and a knowledge base containing document chunks stored as embeddings, and then provide the most relevant samples as context to the LLM. Government procedures, however, present challenges to this standard approach. Related procedures, such as renewing and reprinting driver’s licenses, can be difficult to disambiguate. Additionally, each user question typically requires information from one specific procedure. The mixture of chunks returned from standard RAG approaches increases the likelihood of generating incorrect responses.

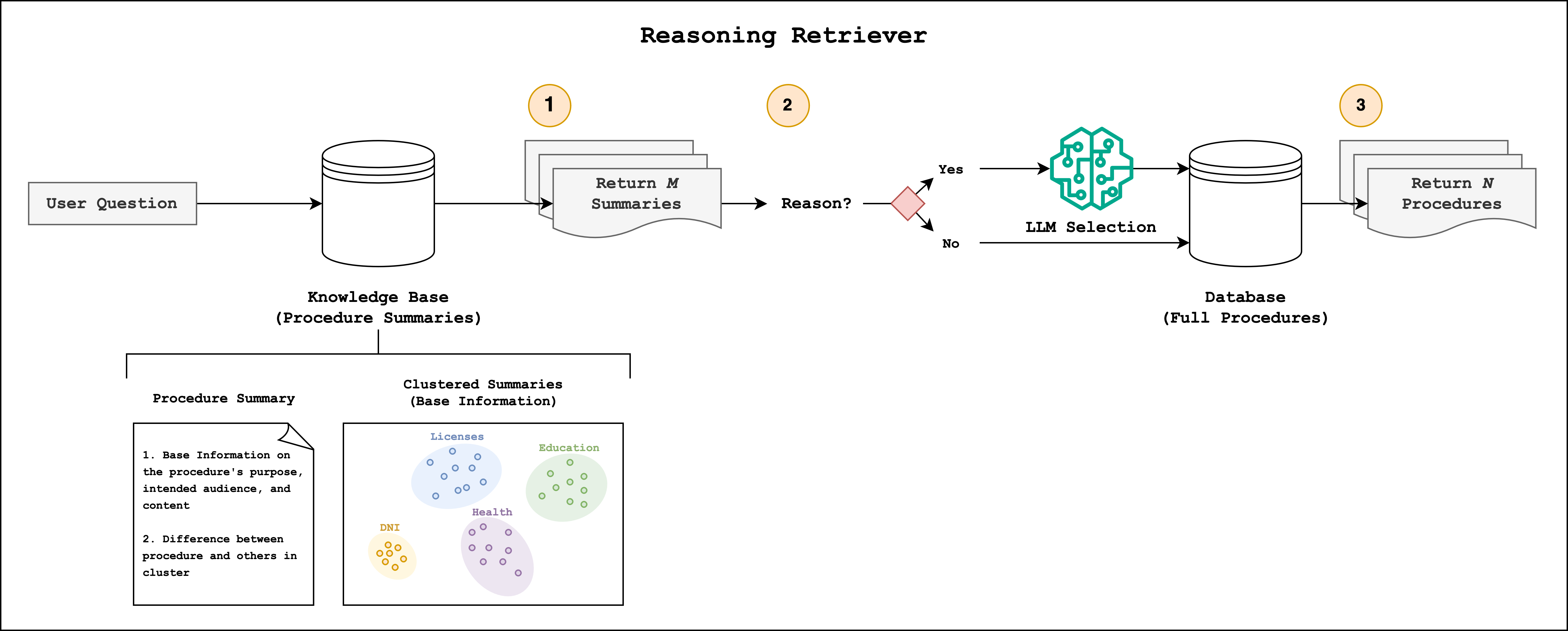

To better disambiguate government procedures, the Buenos Aires and GenAIIC teams developed a reasoning retrieval method that uses comparative summaries and LLM selection. An overview of this approach is shown in the following diagram.

A necessary preprocessing step before retrieval is the creation of a government procedures knowledge base. To capture both the key information contained in procedures and how they relate to each other, we created comparative summaries. Each summary contains basic information, such as the procedure’s purpose and intended audience, as well as content such as costs, steps, and requirements. We clustered the base summaries into small groups, with an average cluster size of 5, and used an LLM to generate descriptions of what made each procedure different from its neighbors. We appended the distinguishing descriptions to the base information to create the final summary. We note that this approach shares similarities with Anthropic’s Contextual Retrieval, which prepends explanatory context to document chunks.
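A minimal sketch of how comparative summaries might be assembled follows. It assumes the clusters have already been computed and takes the LLM call as a function argument; the name `describe_differences` and the "How it differs" label are hypothetical and not taken from the actual implementation.

```python
from typing import Callable, Dict, List


def build_comparative_summaries(
    base_summaries: Dict[str, str],
    clusters: List[List[str]],
    describe_differences: Callable[[str, List[str]], str],
) -> Dict[str, str]:
    """Append a note on how each procedure differs from its cluster neighbors."""
    final: Dict[str, str] = {}
    for cluster in clusters:
        for proc_id in cluster:
            base = base_summaries[proc_id]
            neighbors = [base_summaries[other] for other in cluster if other != proc_id]
            if neighbors:
                # describe_differences stands in for the LLM call (hypothetical).
                note = describe_differences(base, neighbors)
                final[proc_id] = f"{base}\n\nHow it differs: {note}"
            else:
                # A procedure alone in its cluster keeps its base summary.
                final[proc_id] = base
    return final
```

Because the distinguishing note is embedded together with the base summary, a query that mentions a detail specific to one procedure (for example, a lost license rather than an expired one) has more text to match against.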

With the knowledge base in place, we’re able to retrieve relevant government procedures based on the user query. The reasoning retriever completes three steps:

- Retrieve M Summaries: We retrieve between 1 and M comparative summaries using semantic search.

- Optional Reasoning: In some cases, the initial retrieval surfaces similar procedures. To make sure that the most relevant procedures are returned to the agent, we apply an optional LLM reasoning step. This step is triggered when the ratio of the first and second retrieval scores falls below a threshold value. An LLM follows a chain-of-thought (CoT) process in which it compares the user query to the retrieved summaries. It discards irrelevant procedures and reorders the remaining ones based on relevance. If the user query is specific enough, this process typically returns one result. By applying this reasoning step selectively, we minimize latency and token usage while maintaining high retrieval accuracy.

- Retrieve N Full-Text Procedures: After the most relevant procedures are identified, we fetch their full documents and metadata from an Amazon DynamoDB table. The metadata contains information like the source URL and the sentiment of the procedure. The agent typically receives between 1 and N results, where N ≤ M.

The agent receives the retrieved full-text procedures in its context window. It follows its own CoT process to determine the relevant content and URL source attributions when generating its answer.
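The three retrieval steps can be sketched as a single function. This is an illustration under stated assumptions rather than the teams’ implementation: the search, LLM reranking, and document lookup are passed in as hypothetical callables, scores are assumed to be similarities where higher is better, and the values for `n` and `ratio_threshold` are invented for the example (only the initial retrieval of 10 summaries comes from the evaluation described in this post).

```python
from typing import Callable, Dict, List, Tuple

Scored = Tuple[str, float]  # (procedure_id, similarity score; higher is better)


def reasoning_retrieve(
    query: str,
    search: Callable[[str, int], List[Scored]],
    rerank_with_llm: Callable[[str, List[str]], List[str]],
    fetch_full_text: Callable[[str], Dict],
    m: int = 10,
    n: int = 3,  # hypothetical value
    ratio_threshold: float = 1.1,  # hypothetical value
) -> List[Dict]:
    # Step 1: retrieve up to M comparative summaries, best first.
    hits = sorted(search(query, m), key=lambda hit: hit[1], reverse=True)
    if not hits:
        return []
    ids = [proc_id for proc_id, _ in hits]

    # Step 2: reason only when the top two scores are too close to call.
    if len(hits) > 1 and hits[1][1] > 0:
        ratio = hits[0][1] / hits[1][1]
        if ratio < ratio_threshold:
            # The LLM discards irrelevant procedures and reorders the rest.
            ids = rerank_with_llm(query, ids)

    # Step 3: fetch full documents and metadata for at most N procedures.
    return [fetch_full_text(proc_id) for proc_id in ids[:n]]
```

When the first hit clearly outscores the second, the LLM call is skipped entirely, which is where the latency and token savings come from. What the retriever returns in that confident case (here, the top N by score) is an assumption of this sketch.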

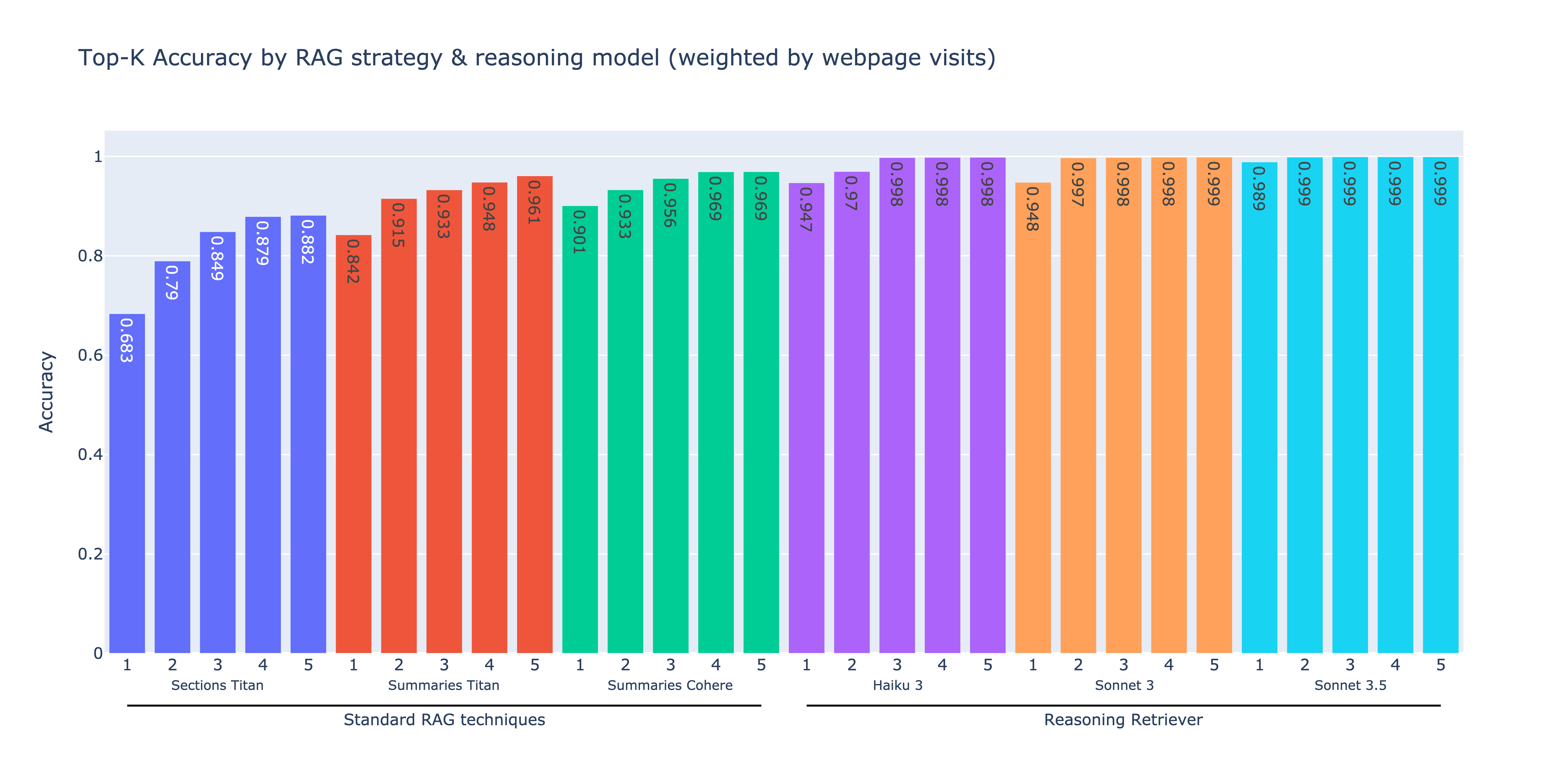

We evaluated our reasoning retriever against standard RAG techniques using a synthetic dataset of 1,908 questions derived from known source procedures. Performance was measured by determining whether the correct procedure appeared in the top-k retrieved results for each question. The following plot compares the top-k retrieval accuracy for each approach across different models, arranged in order of ascending performance from left to right. The metrics are proportionally weighted based on each procedure’s webpage visit frequency, making sure that our evaluation reflects real-world usage patterns.
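A visit-weighted top-k accuracy can be computed along the following lines. The exact weighting scheme is not given in this post, so the sketch assumes each question is weighted by the visit count of its source procedure; the field names `expected` and `retrieved` are hypothetical.

```python
from typing import Dict, Sequence


def weighted_top_k_accuracy(
    examples: Sequence[Dict],
    visits: Dict[str, float],
    k: int,
) -> float:
    """Visit-weighted share of questions whose correct procedure is in the top k."""
    total = 0.0
    hit = 0.0
    for example in examples:
        # Assumption: a question counts in proportion to its procedure's page visits.
        weight = visits.get(example["expected"], 0.0)
        total += weight
        if example["expected"] in example["retrieved"][:k]:
            hit += weight
    return hit / total if total else 0.0
```

With this weighting, a miss on a heavily visited procedure lowers the score more than a miss on a rarely visited one, so the metric tracks the experience of a typical user rather than treating all 1,300+ procedures as equally important.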

The first three approaches represent standard vector-based retrieval methods. The first method, Section Titan, involved chunking procedures by document sections, targeting approximately 250 words per chunk, and then embedding the chunks using Amazon Titan Text Embeddings v2. The second method, Summaries Titan, consisted of embedding the procedure summaries using the same embedding model. By embedding summaries rather than document text, the retrieval accuracy improved by 7.8–15.8%. The third method, Summaries Cohere, involved embedding procedure summaries using Cohere Multilingual v3 on Amazon Bedrock. The Cohere Multilingual embedding model provided a noticeable improvement in retrieval accuracy compared to the Amazon Titan embedding models, with all top-k values above 90%.

The next three approaches use the reasoning retriever. We embedded the procedure summaries using the Cohere Multilingual model, retrieved 10 summaries during the initial retrieval step, and optionally applied the LLM-based reasoning step using Anthropic’s Claude 3 Haiku, Claude 3 Sonnet, or Claude 3.5 Sonnet on Amazon Bedrock. All three reasoning retrievers consistently outperform standard RAG techniques, achieving 12.5–17.5% higher top-k accuracies. Anthropic’s Claude 3.5 Sonnet delivered the highest performance with 98.9% top-1 accuracy. These results demonstrate how combining embedding-based retrieval with LLM-powered reasoning can improve RAG performance.

Answer generation

After gathering the necessary information, the agent responds using Boti’s distinctive communication style: concise, helpful messages in Rioplatense Spanish. We maintained this voice through prompt engineering that specified the following:

- Personality – Convey a warm and friendly tone, providing quick solutions to everyday problems

- Response length – Limit responses to a few sentences

- Structure – Organize content using lists and highlight key information using bold text

- Expression – Use emojis to mark important requirements and add visual cues

- Dialect – Incorporate Rioplatense linguistic features, including voseo, periphrastic future, and regional vocabulary (for example, “acordate,” “entrar,” “acá,” and “allá”).

Government procedures often address sensitive topics, like accidents, health, or security. To facilitate appropriate responses, we incorporated sentiment analysis into our knowledge base as metadata. This allows our system to route to different prompt templates. Sensitive topics are directed to prompts with reduced emoji usage and more empathetic language, whereas neutral topics receive standard templates.
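The routing idea can be sketched in a few lines. The template text, the `sentiment` values, and the rule that a single sensitive procedure switches the whole response to the empathetic template are all assumptions made for illustration; the real prompts are written for Rioplatense Spanish and are not reproduced here.

```python
from typing import Dict, List

PROMPT_TEMPLATES = {
    # Template text is hypothetical and kept in English for readability.
    "neutral": (
        "Answer warmly and briefly. Use emojis to highlight key requirements."
        "\n\n{context}"
    ),
    "sensitive": (
        "Answer briefly and with empathy. Use emojis sparingly."
        "\n\n{context}"
    ),
}


def select_prompt(procedures: List[Dict]) -> str:
    """Pick the sensitive template if any retrieved procedure is tagged sensitive."""
    is_sensitive = any(p.get("sentiment") == "sensitive" for p in procedures)
    template = PROMPT_TEMPLATES["sensitive" if is_sensitive else "neutral"]
    context = "\n\n".join(p["text"] for p in procedures)
    return template.format(context=context)
```

Storing the sentiment as metadata at indexing time means the routing decision costs nothing at request time: no extra LLM call is needed to decide which tone to use.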

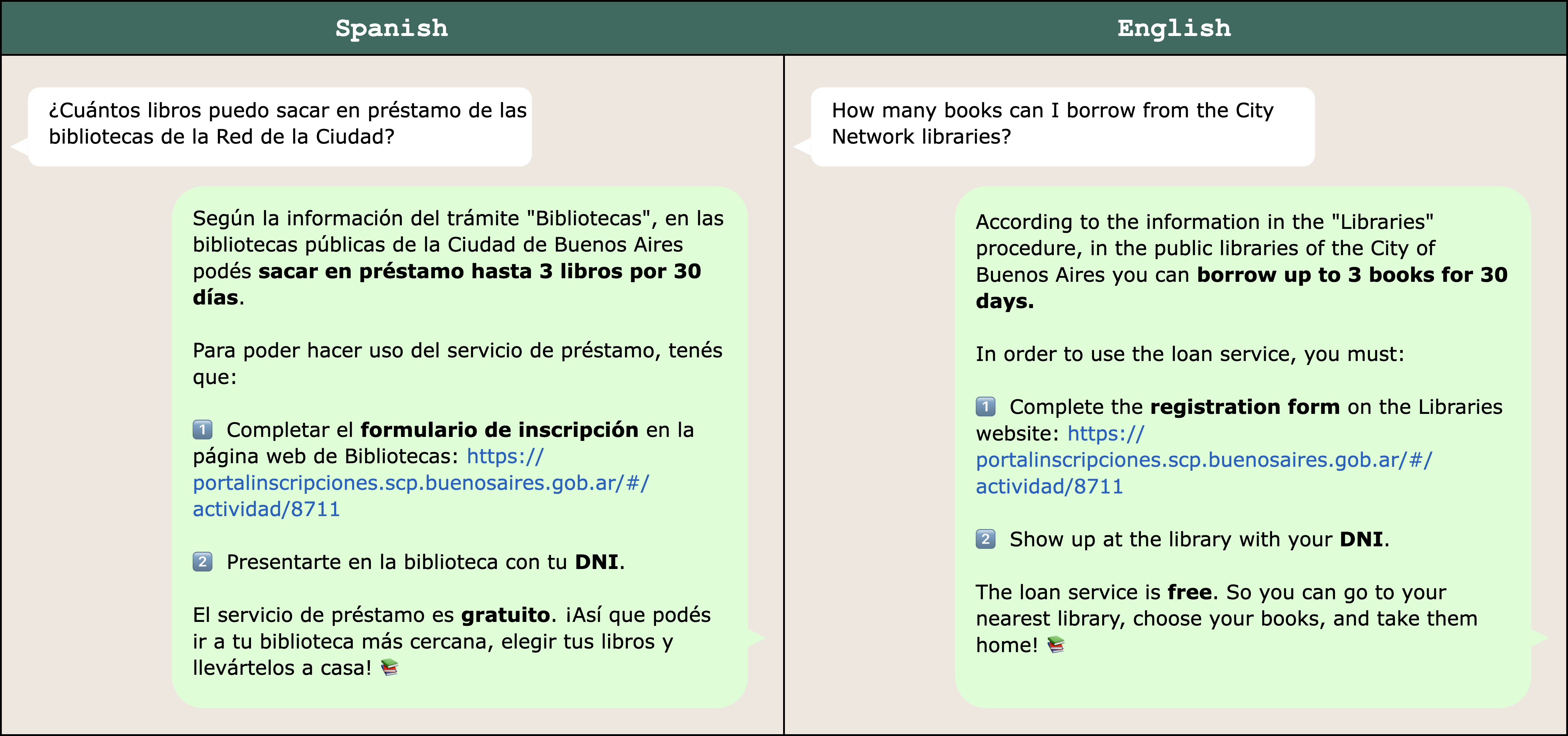

The following figure shows a sample response to a question about borrowing library books. It has been translated to English for convenience.

To validate our prompt engineering approach, subject matter experts at the Government of the City of Buenos Aires reviewed a sample of Boti’s responses. Their review confirmed high fidelity to Rioplatense Spanish, with 98% accuracy in voseo usage and 92% in periphrastic future usage.

Conclusion

This post described the agentic AI assistant built by the Government of the City of Buenos Aires and the GenAIIC to respond to citizens’ questions about government procedures. The solution consists of two primary components: an input guardrail system that helps prevent the system from responding to harmful user queries and a government procedures agent that retrieves relevant information and generates responses. The input guardrails effectively block harmful content, including queries with offensive language, harmful opinions, prompt injection, and unethical behaviors. The government procedures agent employs a novel reasoning retrieval method that disambiguates similar government procedures, achieving up to 98.9% top-1 retrieval accuracy and a 12.5–17.5% improvement over standard RAG methods. Through prompt engineering, responses are delivered in Rioplatense Spanish using Boti’s voice. Subject matter experts rated Boti’s linguistic performance highly, with 98% accuracy in voseo usage and 92% in periphrastic future usage.

As generative AI advances, we expect to continuously improve our solution. The expanding catalog of LLMs available in Amazon Bedrock makes it possible to experiment with newer, more powerful models. This includes models that process text, as explored in the solution in this post, as well as models that process speech, allowing for direct speech-to-speech interactions. We might also explore the fine-tuning capabilities of Amazon Bedrock to customize models so that they better capture the linguistic features of Rioplatense Spanish. Beyond model improvements, we can iterate on our agent framework. The agent’s tool set can be expanded to support other tasks associated with government procedures, like account creation, form completion, and appointment scheduling. As the City Government develops new experiences for citizens, we can consider implementing multi-agent frameworks in which specialist agents, like the government procedures agent, handle specific tasks.

To learn more about Boti and AWS’s generative AI capabilities, check out the following resources:

About the authors

Julieta Rappan is Director of the Digital Channels Department of the Buenos Aires City Government, where she coordinates the landscape of digital and conversational interfaces. She has extensive experience in the comprehensive management of strategic and technological projects, as well as in leading high-performance teams focused on the development of digital products and services. Her leadership drives the implementation of technological solutions with a focus on scalability, coherence, public value, and innovation, an area where generative technologies are beginning to play a central role.

Macarena Blasi is Chief of Staff at the Digital Channels Department of the Buenos Aires City Government, working across the city’s main digital services, including Boti (the WhatsApp-based digital assistant) and the official Buenos Aires website. She began her journey working in conversational experience design, later serving as product owner and Operations Manager and then as Head of Experience and Content, leading multidisciplinary teams focused on improving the quality, accessibility, and usability of public digital services. Her work is driven by a commitment to building clear, inclusive, and human-centered experiences in the public sector.

María Candela Blanco is Operations Manager for Quality Assurance, Usability, and Continuous Improvement at the Buenos Aires Government, where she leads the content, research, and conversational strategy across the city’s main digital channels, including the Boti AI assistant and the official Buenos Aires website. Outside of tech, Candela studies literature at UNSAM and is deeply passionate about language, storytelling, and the ways they shape our interactions with technology.

Leandro Micchele is a Software Developer focused on applying AI to real-world use cases, with expertise in AI assistants, voice, and vision solutions. He serves as the technical lead and consultant for the Boti AI assistant at the Buenos Aires Government and works as a Software Developer at Telecom Argentina. Beyond tech, his discipline extends to martial arts: he has over 20 years of experience and currently teaches Aikido.

Hugo Albuquerque is a Deep Learning Architect at the AWS Generative AI Innovation Center. Before joining AWS, Hugo had extensive experience working as a data scientist in the media and entertainment and marketing sectors. In his free time, he enjoys learning other languages like German and practicing social dancing, such as Brazilian Zouk.

Enrique Balp is a Senior Data Scientist at the AWS Generative AI Innovation Center working on cutting-edge AI solutions. With a background in the physics of complex systems focused on neuroscience, he has applied data science and machine learning across healthcare, energy, and finance for over a decade. He enjoys hikes in nature, meditation retreats, and deep friendships.

Diego Galaviz is a Deep Learning Architect at the AWS Generative AI Innovation Center. Before joining AWS, he had over 8 years of expertise as a data scientist across diverse sectors, including financial services, energy, big tech, and cybersecurity. He holds a master’s degree in artificial intelligence, which complements his practical industry experience.

Laura Kulowski is a Senior Applied Scientist at the AWS Generative AI Innovation Center, where she works with customers to build generative AI solutions. Before joining Amazon, Laura completed her PhD at Harvard’s Department of Earth and Planetary Sciences and investigated Jupiter’s deep zonal flows and magnetic field using Juno data.

Rafael Fernandes is the LATAM leader of the AWS Generative AI Innovation Center, whose mission is to accelerate the development and implementation of generative AI in the region. Before joining Amazon, Rafael was a co-founder in the financial services industry space and a data science leader with over 12 years of experience in Europe and LATAM.