Building custom foundation models requires coordinating multiple assets across the development lifecycle, such as data assets, compute infrastructure, model architecture and frameworks, lineage, and production deployments. Data scientists create and refine training datasets, develop custom evaluators to assess model quality and safety, and iterate through fine-tuning configurations to optimize performance. As these workflows scale across teams and environments, tracking which specific dataset versions, evaluator configurations, and hyperparameters produced each model becomes challenging. Teams often rely on manual documentation in notebooks or spreadsheets, making it difficult to reproduce successful experiments or understand the lineage of production models.

This challenge intensifies in enterprise environments with multiple AWS accounts for development, staging, and production. As models move through deployment pipelines, maintaining visibility into their training data, evaluation criteria, and configurations requires significant coordination. Without automated tracking, teams lose the ability to trace deployed models back to their origins or share assets consistently across experiments. Amazon SageMaker AI supports tracking and managing assets used in generative AI development. With Amazon SageMaker AI you can register and version models, datasets, and custom evaluators, and automatically capture relationships and lineage as you fine-tune, evaluate, and deploy generative AI models. This reduces manual tracking overhead and provides complete visibility into how models were created, from base foundation model through production deployment.

In this post, we explore the new capabilities and core concepts that help organizations track and manage model development and deployment lifecycles. We show you how the features are configured to train models with automatic end-to-end lineage, from dataset upload and versioning to model fine-tuning, evaluation, and seamless endpoint deployment.

Managing dataset versions across experiments

As you refine training data for model customization, you typically create multiple versions of datasets. You can register datasets and create new versions as your data evolves, with each version tracked independently. When you register a dataset in SageMaker AI, you provide the S3 location and metadata describing the dataset. As you refine your data (whether adding more examples, improving quality, or adjusting for specific use cases), you can create new versions of the same dataset. Each version, as shown in the following image, maintains its own metadata and S3 location so you can track the evolution of your training data over time.

When you use a dataset for fine-tuning, Amazon SageMaker AI automatically links the specific dataset version to the resulting model. This supports comparison between models trained with different dataset versions and helps you understand which data refinements led to better performance. You can also reuse the same dataset version across multiple experiments for consistency when testing different hyperparameters or fine-tuning techniques.

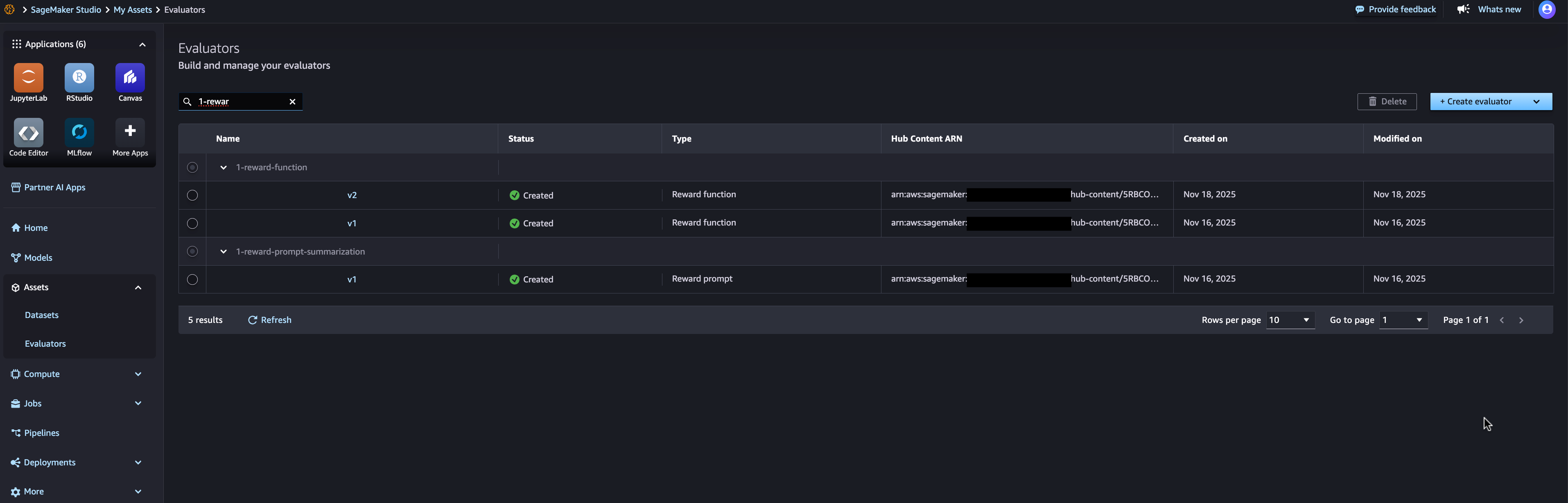
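
The versioning behavior described above can be illustrated with a small local sketch. This is not the SageMaker AI API: the `DatasetVersion` and `DatasetRegistry` classes, the dataset name, and the S3 URIs are all hypothetical, and the sketch only models the idea that each version carries its own S3 location and metadata.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DatasetVersion:
    """One version of a registered dataset (hypothetical local model)."""
    name: str
    version: int
    s3_uri: str
    metadata: dict = field(default_factory=dict)


class DatasetRegistry:
    """In-memory stand-in for a dataset registry; not the SageMaker AI API."""

    def __init__(self):
        self._versions = {}  # dataset name -> list of DatasetVersion

    def register(self, name, s3_uri, metadata=None):
        # Each call creates the next version number for this dataset name.
        versions = self._versions.setdefault(name, [])
        entry = DatasetVersion(name, len(versions) + 1, s3_uri, dict(metadata or {}))
        versions.append(entry)
        return entry

    def get(self, name, version=None):
        # Return the latest version unless a specific one is requested.
        versions = self._versions[name]
        return versions[-1] if version is None else versions[version - 1]


registry = DatasetRegistry()
v1 = registry.register(
    "support-tickets", "s3://example-bucket/tickets/v1/", {"rows": 1000}
)
v2 = registry.register(
    "support-tickets",
    "s3://example-bucket/tickets/v2/",
    {"rows": 1500, "note": "deduplicated"},
)
print(v2.version, registry.get("support-tickets", 1).s3_uri)
```

Pinning an experiment to `registry.get("support-tickets", 1)` rather than to the latest version mirrors the practice of reusing one dataset version across several fine-tuning runs.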

Creating reusable custom evaluators

Evaluating custom models often requires domain-specific quality, safety, or performance criteria. A custom evaluator consists of Lambda function code that receives input data and returns evaluation results, including scores and validation status. You can define evaluators for various purposes: checking response quality, assessing safety and toxicity, validating output format, or measuring task-specific accuracy. You can track custom evaluators using AWS Lambda functions that implement your evaluation logic, then version and reuse these evaluators across models and datasets, as shown in the following image.

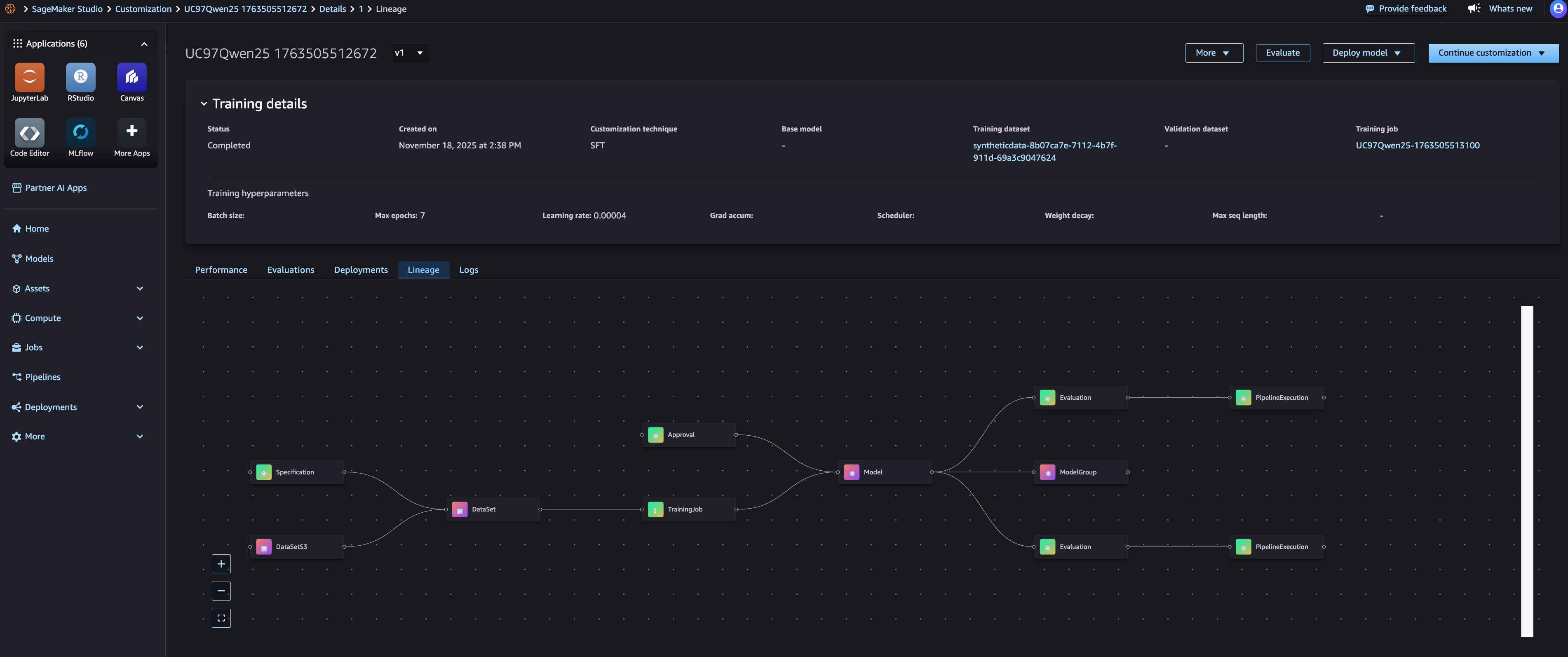
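
As a rough illustration of an output-format evaluator, the following is a minimal sketch of a Python Lambda handler. The event shape (a `response` field holding the model output), the returned field names (`score`, `passed`, `reason`), and the `REQUIRED_KEYS` contract are assumptions made for this example; the actual input and output schema expected by SageMaker AI should be taken from the documentation.

```python
import json

# Hypothetical output contract: the model is expected to return a JSON
# object containing these keys.
REQUIRED_KEYS = {"answer", "sources"}


def lambda_handler(event, context):
    """Score a model response by how well it matches the expected JSON format."""
    response_text = event.get("response", "")  # assumed event field
    try:
        parsed = json.loads(response_text)
    except (TypeError, ValueError):
        return {"score": 0.0, "passed": False, "reason": "response is not valid JSON"}

    if not isinstance(parsed, dict):
        return {"score": 0.0, "passed": False, "reason": "response is not a JSON object"}

    # Score is the fraction of required keys that are present.
    missing = sorted(REQUIRED_KEYS - parsed.keys())
    score = (len(REQUIRED_KEYS) - len(missing)) / len(REQUIRED_KEYS)
    reason = "ok" if not missing else f"missing keys: {missing}"
    return {"score": score, "passed": not missing, "reason": reason}
```

Because the handler is a plain function, it can be exercised locally with a sample event, for example `lambda_handler({"response": '{"answer": "42"}'}, None)`, before it is deployed and registered as an evaluator.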

Automatic lineage tracking throughout the development lifecycle

The SageMaker AI lineage tracking capability automatically captures relationships between assets as you build and evaluate models. When you create a fine-tuning job, Amazon SageMaker AI links the training job to input datasets, base foundation models, and output models. When you run evaluation jobs, it connects evaluations to the models being assessed and the evaluators used. This automatic lineage capture means you don't need to manually document which assets were used for each experiment. You can view the complete lineage for a model, showing its base foundation model, training datasets with specific versions, hyperparameters, evaluation results, and deployment locations, as shown in the image below.

With the lineage view, you can trace any deployed model back to its origins. For example, if you need to understand why a production model behaves in a certain way, you can see exactly which training data, fine-tuning configuration, and evaluation criteria were used. This is particularly valuable for governance, reproducibility, and debugging purposes. You can also use lineage information to reproduce experiments. By identifying the exact dataset version, evaluator version, and configuration used for a successful model, you can recreate the training process with confidence that you're using identical inputs.

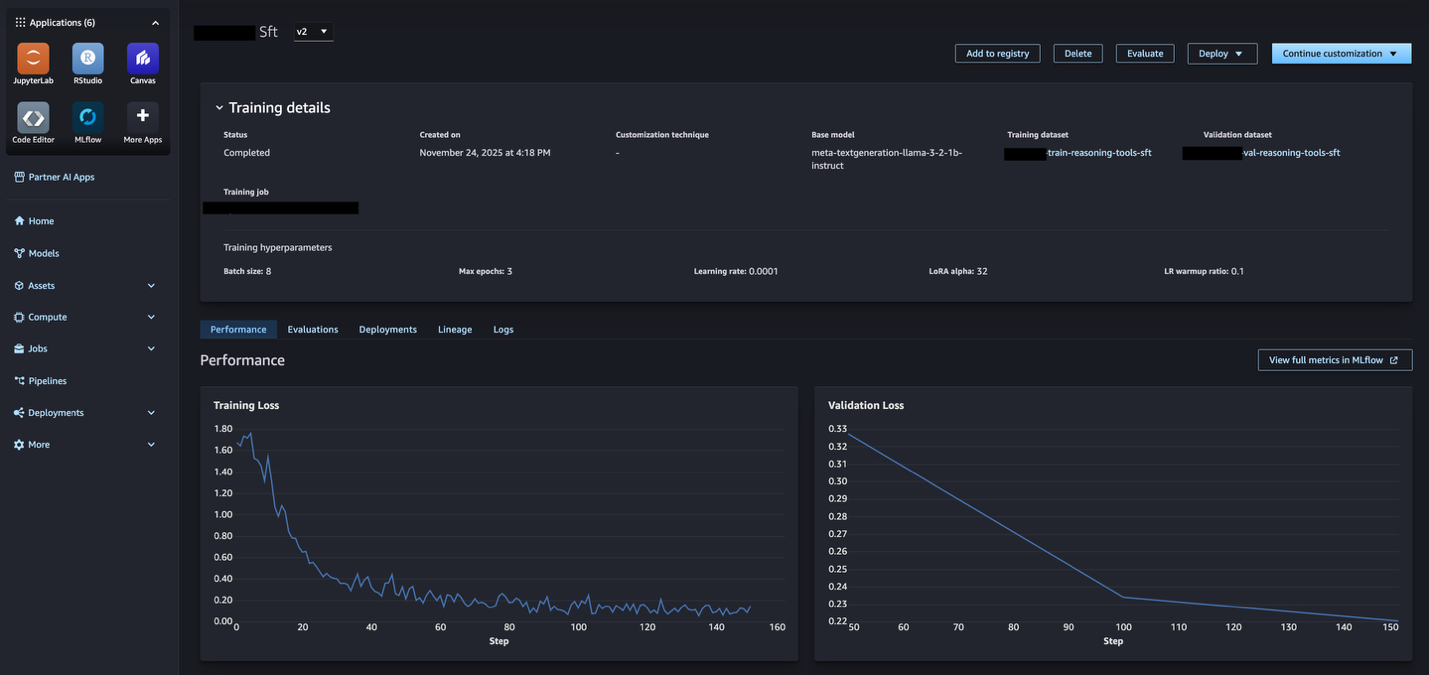
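
Conceptually, tracing a deployed model back to its origins is a walk over a graph of linked assets. The sketch below shows that idea with a breadth-first traversal over a hand-written dictionary. The asset identifiers and the `LINKS` structure are invented for illustration and do not reflect how SageMaker AI stores or exposes lineage.

```python
from collections import deque

# Hypothetical lineage links: each asset maps to the assets it is linked to
# when tracing backward (jobs, datasets, evaluators, base model).
LINKS = {
    "endpoint/prod-chat": ["model/chat-ft:3"],
    "model/chat-ft:3": ["training-job/ft-0042", "evaluation-job/eval-0007"],
    "training-job/ft-0042": ["dataset/support-tickets:2", "base-model/example-llm"],
    "evaluation-job/eval-0007": ["evaluator/json-format:1", "dataset/holdout:1"],
}


def trace_origins(asset, links):
    """Return every asset reachable from `asset`, nearest links first."""
    seen = {asset}
    order = []
    queue = deque([asset])
    while queue:
        current = queue.popleft()
        for linked in links.get(current, []):
            if linked not in seen:  # guard against revisiting shared assets
                seen.add(linked)
                order.append(linked)
                queue.append(linked)
    return order


for item in trace_origins("endpoint/prod-chat", LINKS):
    print(item)
```

Starting from the endpoint, the traversal surfaces the model first, then the jobs that produced and assessed it, and finally the dataset versions, evaluator version, and base model, which are the inputs needed to reproduce the experiment.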

Integrating with MLflow for experiment tracking

The model customization capabilities of Amazon SageMaker AI are integrated by default with SageMaker AI MLflow Apps, providing automatic linking between model training jobs and MLflow experiments. When you run model customization jobs, all the necessary MLflow actions are performed for you automatically: the default SageMaker AI MLflow App is used, an MLflow experiment is selected, and all the metrics, parameters, and artifacts are logged. From the SageMaker AI Studio model page, you can see metrics sourced from MLflow (as shown in the following image) and view full metrics within the associated MLflow experiment.

With MLflow integration, it's straightforward to compare multiple model candidates. You can use MLflow to visualize performance metrics across experiments, identify the best-performing model, then use the lineage to understand which specific datasets and evaluators produced that result. This helps you make informed decisions about which models to promote to production based on both quantitative metrics and asset provenance.

Getting started with tracking and managing generative AI assets

By bringing together these model customization assets and processes (dataset versioning, evaluator tracking, model performance, and model deployment), you can turn scattered model assets into a traceable, reproducible, and production-ready workflow with automatic end-to-end lineage. This capability is now available in supported AWS Regions. You can access it through Amazon SageMaker AI Studio and the SageMaker Python SDK.

To get began:

- Open Amazon SageMaker AI Studio and navigate to the Models section.

- Customize the JumpStart base models to create a model.

- Navigate to the Assets section to manage datasets and evaluators.

- Register your first dataset by providing an S3 location and metadata.

- Create a custom evaluator using an existing Lambda function or create a new one.

- Use registered datasets in your fine-tuning jobs; lineage is captured automatically.

- View lineage for the model to see complete relationships.

For more information, visit the Amazon SageMaker AI documentation.

About the authors

Amit Modi is the product leader for SageMaker AI MLOps, ML Governance, and Inference at AWS. With over a decade of B2B experience, he builds scalable products and teams that drive innovation and deliver value to customers globally.


Sandeep Raveesh is a GenAI Specialist Solutions Architect at AWS. He works with customers through their AIOps journey across model training, GenAI applications like Agents, and scaling GenAI use cases. He also focuses on go-to-market strategies, helping AWS build and align products to solve industry challenges in the generative AI space. You can connect with Sandeep on LinkedIn to learn about GenAI solutions.
