Extracting structured knowledge from paperwork like invoices, receipts, and kinds is a persistent enterprise problem. Variations in format, structure, language, and vendor make standardization troublesome, and handbook knowledge entry is gradual, error-prone, and unscalable. Conventional optical character recognition (OCR) and rule-based techniques typically fall brief in dealing with this complexity. As an illustration, a regional financial institution may have to course of hundreds of disparate paperwork—mortgage purposes, tax returns, pay stubs, and IDs—the place handbook strategies create bottlenecks and enhance the chance of error. Clever doc processing (IDP) goals to resolve these challenges by utilizing AI to categorise paperwork, extract or derive related info, and validate the extracted knowledge to make use of it in enterprise processes. One in every of its core objectives is to transform unstructured or semi-structured paperwork into usable, structured codecs resembling JSON, which then include particular fields, tables, or different structured goal info. The goal construction must be constant, in order that it may be used as a part of workflows or different downstream enterprise techniques or for reporting and insights technology. The next determine exhibits the workflow, which includes ingesting unstructured paperwork (for instance, invoices from a number of distributors with various layouts) and extracting related info. Regardless of variations in key phrases, column names, or codecs throughout paperwork, the system normalizes and outputs the extracted knowledge right into a constant, structured JSON format.

Imaginative and prescient language fashions (VLMs) mark a revolutionary development in IDP. VLMs combine giant language fashions (LLMs) with specialised picture encoders, creating actually multi-modal AI capabilities of each textual reasoning and visible interpretation. In contrast to conventional doc processing instruments, VLMs course of paperwork extra holistically—concurrently analyzing textual content content material, doc structure, spatial relationships, and visible components in a way that extra intently resembles human comprehension. This method allows VLMs to extract which means from paperwork with unprecedented accuracy and contextual understanding. For readers focused on exploring the foundations of this expertise, Sebastian Raschka’s publish—Understanding Multimodal LLMs—presents a superb primer on multimodal LLMs and their capabilities.

This publish has 4 important sections that replicate the first contributions of our work and embody:

- An summary of the varied IDP approaches out there, together with the choice (our advisable answer) for fine-tuning as a scalable method.

- Pattern code for fine-tuning VLMs for document-to-JSON conversion utilizing Amazon SageMaker AI and the SWIFT framework, a light-weight toolkit for fine-tuning numerous giant fashions.

- Growing an analysis framework to evaluate efficiency processing structured knowledge.

- A dialogue of the attainable deployment choices, together with an specific instance for deploying the fine-tuned adapter.

SageMaker AI is a completely managed service to construct, prepare and deploy fashions at scale. On this publish, we use SageMaker AI to fine-tune the VLMs and deploy them for each batch and real-time inference.

Conditions

Earlier than you start, be sure to have the next arrange to be able to efficiently observe the steps outlined on this publish and the accompanying GitHub repository:

- AWS account: You want an energetic AWS account with permissions to create and handle sources in SageMaker AI, Amazon Easy Storage Service (Amazon S3), and Amazon Elastic Container Registry (Amazon ECR).

- IAM permissions: Your IAM consumer or position should have enough permissions. For manufacturing setups, observe the precept of least privilege as described in safety finest practices in IAM. For a sandbox setup we recommend the next roles:

- Full entry to Amazon SageMaker AI (for instance,

AmazonSageMakerFullAccess). - Learn/write entry to S3 buckets for storing datasets and mannequin artifacts.

- Permissions to push and pull Docker photographs from Amazon ECR (for instance,

AmazonEC2ContainerRegistryPowerUser). - If utilizing particular SageMaker occasion sorts, ensure your service quotas are enough.

- Full entry to Amazon SageMaker AI (for instance,

- GitHub repository: Clone or obtain the venture code from our GitHub repository. This repository incorporates the notebooks, scripts, and Docker artifacts referenced on this publish.

- Native surroundings arrange:

- Python: Python 3.10 or greater is advisable.

- AWS CLI: Ensure that the AWS Command Line Interface (AWS CLI) is put in and configured with credentials which have the required permissions.

- Docker: Docker should be put in and operating in your native machine if you happen to plan to construct the customized Docker container for deployment.

- Jupyter Pocket book and Lab: To run the supplied notebooks.

- Set up the required Python packages by operating

pip set up -r necessities.txtfrom the cloned repository’s root listing.

- Familiarity (advisable):

- Fundamental understanding of Python programming.

- Familiarity with AWS providers, notably SageMaker AI.

- Conceptual information of LLMs, VLMs, and the container expertise shall be useful.

Overview of doc processing and generative AI approaches

There are various levels of autonomy in clever doc processing. On one finish of the spectrum are absolutely handbook processes: People manually studying paperwork and getting into the data right into a type utilizing a pc system. Most techniques at this time are semi-autonomous doc processing options. For instance, a human taking an image of a receipt and importing it to a pc system that mechanically extracts a part of the data. The objective is to get to completely autonomous clever doc processing techniques. This implies decreasing the error fee and assessing the use case particular danger of errors. AI is considerably reworking doc processing by enabling higher ranges of automation. Quite a lot of approaches exist, ranging in complexity and accuracy—from specialised fashions for OCR, to generative AI.

Specialised OCR fashions that don’t depend on generative AI are designed as pre-trained, task-specific ML fashions that excel at extracting structured info resembling tables, kinds, and key-value pairs from frequent doc sorts like invoices, receipts, and IDs. Amazon Textract is one instance of any such service. This service presents excessive accuracy out of the field and requires minimal setup, making it well-suited for workloads the place primary textual content extraction is required, and paperwork don’t range considerably in construction or include photographs.

Nonetheless, as you enhance the complexity and variability of paperwork, along with including multimodality, utilizing generative AI may also help enhance doc processing pipelines.

Whereas highly effective, making use of general-purpose VLMs or LLMs to doc processing isn’t easy. Efficient immediate engineering is essential to information the mannequin. Processing giant volumes of paperwork (scaling) requires environment friendly batching and infrastructure. As a result of LLMs are stateless, offering historic context or particular schema necessities for each doc might be cumbersome.

Approaches to clever doc processing that use LLMs or VLMs fall into 4 classes:

- Zero-shot prompting: the inspiration mannequin (FM) receives the results of earlier OCR or a PDF and the directions to carry out the doc processing process.

- Few-shot prompting: the FM receives the results of earlier OCR or a PDF, the directions to carry out the doc processing process, and a few examples.

- Retrieval-augmented few-shot prompting: just like the previous technique, however the examples despatched to the mannequin are chosen dynamically utilizing Retrieval Augmented Era (RAG).

- Nice-tuning VLMs

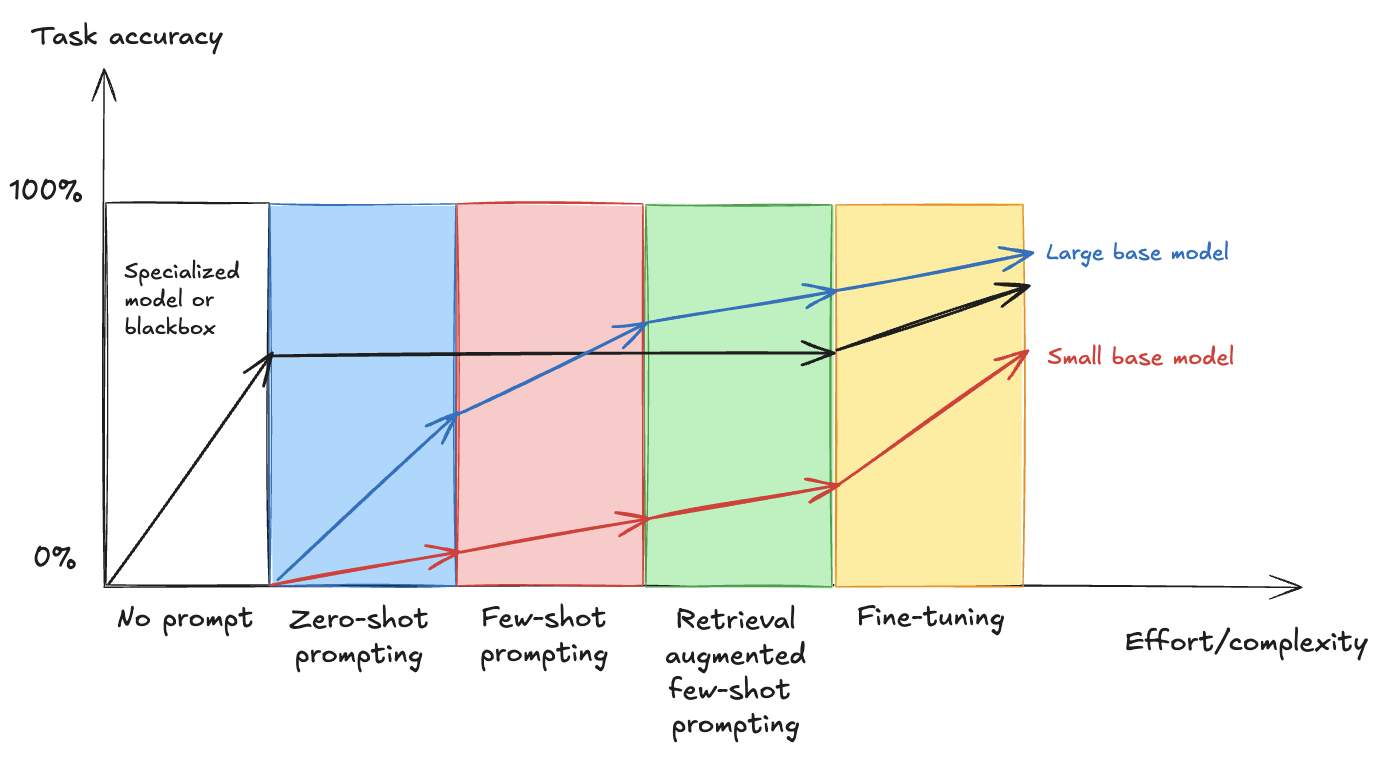

Within the following, you’ll be able to see the connection between growing effort and complexity and process accuracy, demonstrating how completely different strategies—from primary immediate engineering to superior fine-tuning—affect the efficiency of enormous and small base fashions in comparison with a specialised answer (impressed by the weblog publish Evaluating LLM fine-tuning strategies)

As you progress throughout the horizontal axis, the methods develop in complexity, and as you progress up the vertical axis, you enhance general accuracy. Usually, giant base fashions present higher efficiency than small base fashions within the methods that require immediate engineering, nonetheless as we clarify within the outcomes of this publish, fine-tuning small base fashions can ship related outcomes as fine-tuning giant base fashions for a selected process.

Zero-shot prompting

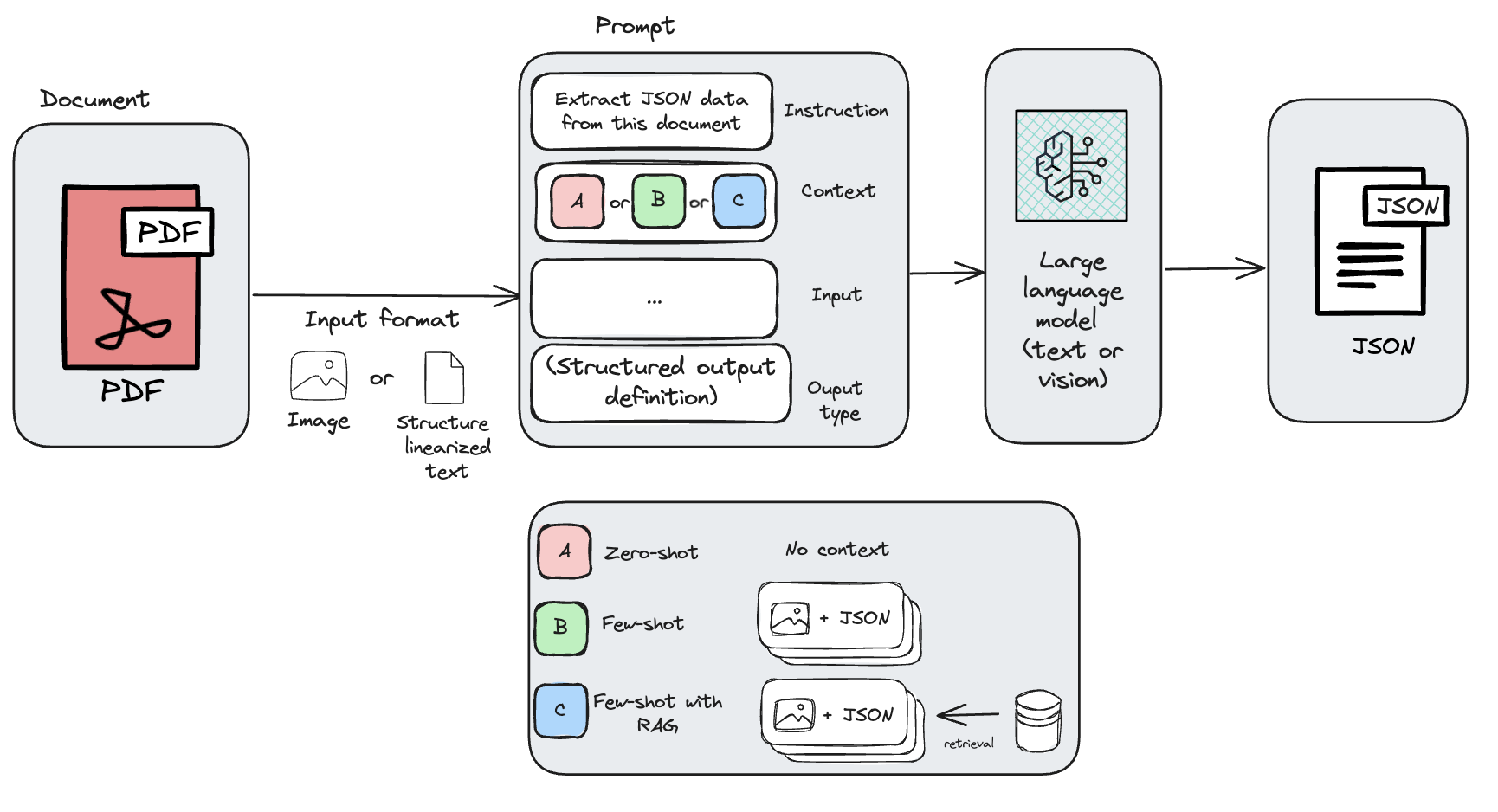

Zero-shot prompting is a way to make use of language fashions the place the mannequin is given a process with out prior examples or fine-tuning. As a substitute, it depends solely on the immediate’s wording and its pre-trained information to generate a response. In doc processing, this method includes giving the mannequin both a picture of a PDF doc, the OCR-extracted textual content from the PDF, or a structured markdown illustration of the doc and offering directions to carry out the doc processing process, along with the specified output format.

Amazon Bedrock Knowledge Automation makes use of zero-shot prompting with generative AI to carry out IDP. You should use Bedrock Knowledge Automation to automate the transformation of multi-modal knowledge—together with paperwork containing textual content and complicated constructions, resembling tables, charts and pictures—into structured codecs. You may profit from customization capabilities by the creation of blueprints that specify output necessities utilizing pure language or a schema editor. Bedrock Knowledge Automation can even extract bounding containers for the recognized entities and route paperwork appropriately to the right blueprint. These options might be configured and used by a single API, making it considerably extra highly effective than a primary zero-shot prompting method.

Whereas out-of-the-box VLMs can deal with common OCR duties successfully, they typically wrestle with the distinctive construction and nuances of customized paperwork—resembling invoices from numerous distributors. Though crafting a immediate for a single doc is perhaps easy, the variability throughout a whole bunch of vendor codecs makes immediate iteration a labor-intensive and time-consuming course of.

Few-shot prompting

Transferring to a extra advanced method, you’ve gotten few-shot prompting, a way used with LLMs the place a small variety of examples are supplied inside the immediate to information the mannequin in finishing a selected process. In contrast to zero-shot prompting, which depends solely on pure language directions, few-shot prompting improves accuracy and consistency by demonstrating the specified input-output conduct by examples.

One different is to make use of the Amazon Bedrock Converse API to carry out few shot prompting. Converse API gives a constant strategy to entry LLMs utilizing Amazon Bedrock. It helps turn-based messages between the consumer and the generative AI mannequin, and permits together with paperwork as a part of the content material. Another choice is utilizing Amazon SageMaker Jumpstart, which you need to use to deploy fashions from suppliers like HuggingFace.

Nonetheless, almost certainly what you are promoting must course of several types of paperwork (for instance, invoices, contracts and hand written notes) and even inside one doc sort there are a lot of variations, for instance, there’s not one standardized bill structure and as a substitute every vendor has their very own structure that you simply can not management. Discovering a single or just a few examples that cowl all of the completely different paperwork you wish to course of is difficult.

Retrieval-augmented few-shot prompting

One strategy to handle the problem of discovering the appropriate examples is to dynamically retrieve beforehand processed paperwork as examples and add them to the immediate at runtime (RAG).

You may retailer just a few annotated samples in a vector retailer and retrieve them based mostly on the doc that must be processed. Amazon Bedrock Information Bases helps you implement the complete RAG workflow from ingestion to retrieval and immediate augmentation with out having to construct customized integrations to knowledge sources and handle knowledge flows.

This turns the clever doc processing downside right into a search downside, which comes with its personal challenges on how you can enhance the accuracy of the search. Along with how you can scale for a number of varieties of paperwork, the few-shot method is dear as a result of each doc processed requires an extended immediate with examples. This leads to an elevated variety of enter tokens.

As proven within the previous determine, the immediate context will range based mostly on the technique chosen (zero-shot, few-shot or few-shot with RAG), which can general change the outcomes obtained.

Nice-tuning VLMs

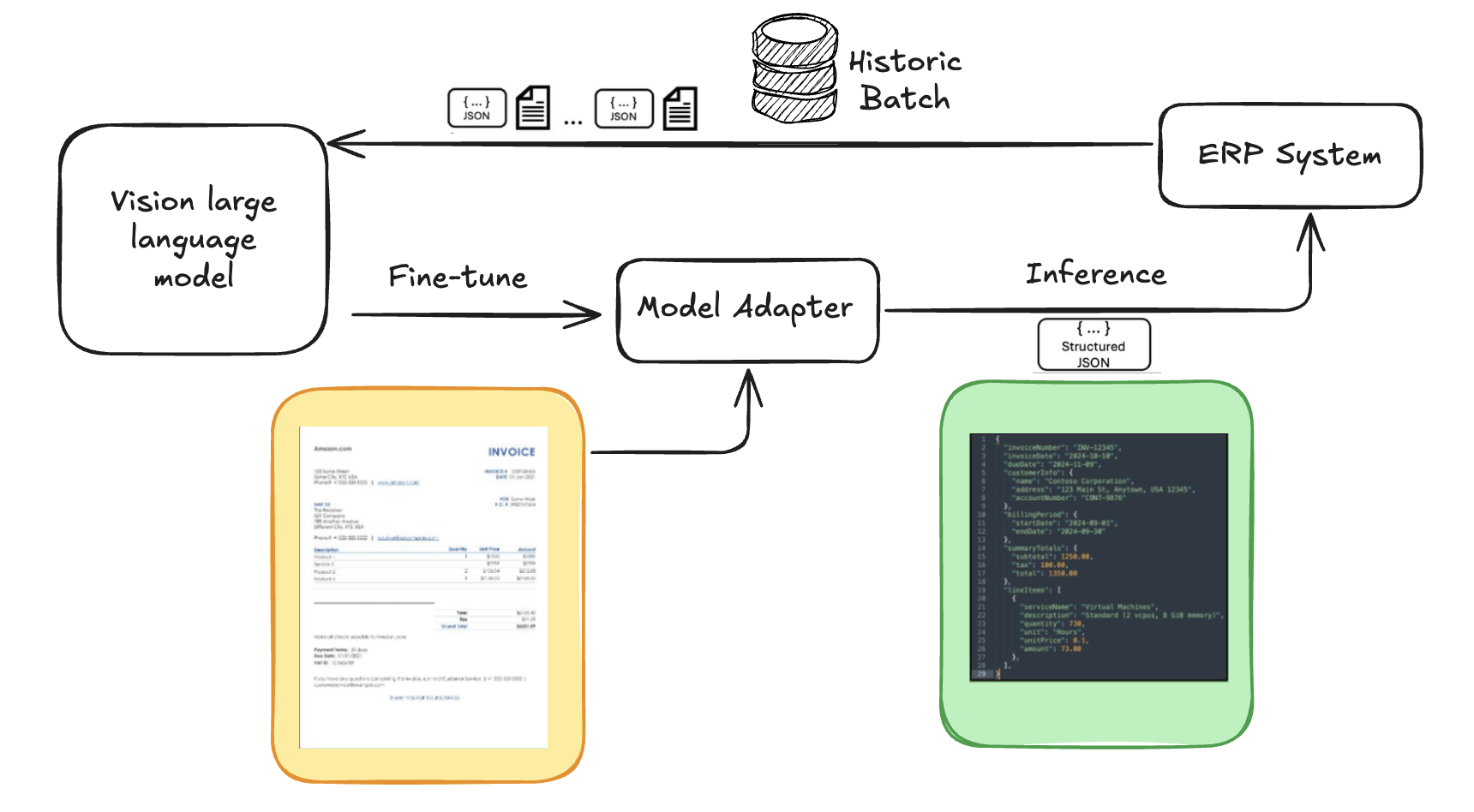

On the finish of the spectrum, you’ve gotten the choice to fine-tune a customized mannequin to carry out doc processing. That is our advisable method and what we deal with on this publish. Nice-tuning is a technique the place a pre-trained LLM is additional educated on a selected dataset to specialize it for a specific process or area. Within the context of doc processing, fine-tuning includes utilizing labeled examples—resembling annotated invoices, contracts, or insurance coverage kinds—to show the mannequin precisely how you can extract or interpret related info. Often, the labor-intensive a part of fine-tuning is buying an acceptable, high-quality dataset. Within the case of doc processing, your organization in all probability already has a historic dataset in its present doc processing system. You may export this knowledge out of your doc processing system (for instance out of your enterprise useful resource planning (ERP) system) and use it because the dataset for fine-tuning. This fine-tuning method is what we deal with on this publish as a scalable, excessive accuracy, and cost-effective method for clever doc processing.

The previous approaches signify a spectrum of methods to enhance LLM efficiency alongside two axes: LLM optimization (shaping mannequin conduct by immediate engineering or fine-tuning) and context optimization (enhancing what the mannequin is aware of at inference by strategies resembling few-shot studying or RAG). These strategies might be mixed—for instance, utilizing RAG with few-shot prompts or incorporating retrieved knowledge into fine-tuning—to maximise accuracy.

Nice-tuning VLMs for document-to-JSON conversion

Our method—the advisable answer for cost-effective document-to-JSON conversion—makes use of a VLM and fine-tunes it utilizing a dataset of historic paperwork paired with their corresponding ground-truth JSON that we take into account as annotations. This permits the mannequin to be taught the particular patterns, fields, and output construction related to your historic knowledge, successfully educating it to learn your paperwork and extract info in line with your required schema.

The next determine exhibits a high-level structure of the document-to-JSON conversion course of for fine-tuning VLMs by utilizing historic knowledge. This permits the VLM to be taught from excessive knowledge variations and helps be sure that the structured output matches the goal system construction and format.

Nice-tuning presents a number of benefits over relying solely on OCR or common VLMs:

- Schema adherence: The mannequin learns to output JSON matching a selected goal construction, which is significant for integration with downstream techniques like ERPs.

- Implicit discipline location: Nice-tuned VLMs typically be taught to find and extract fields with out specific bounding field annotations within the coaching knowledge, simplifying knowledge preparation considerably.

- Improved textual content extraction high quality: The mannequin turns into extra correct at extracting textual content even from visually advanced or noisy doc layouts.

- Contextual understanding: The mannequin can higher perceive the relationships between completely different items of data on the doc.

- Lowered immediate engineering: Submit fine-tuning, the mannequin requires much less advanced or shorter prompts as a result of the specified extraction conduct is constructed into its weights.

For our fine-tuning course of, we chosen the Swift framework. Swift gives a complete, light-weight toolkit for fine-tuning numerous giant language fashions, together with VLMs like Qwen-VL and Llama-Imaginative and prescient.

Knowledge preparation

To fine-tune the VLMs, you’ll use the Fatura2 dataset, a multi-layout bill picture dataset comprising 10,000 invoices with 50 distinct layouts.

The Swift framework expects coaching knowledge in a selected JSONL (JSON Strains) format. Every line within the file is a JSON object representing a single coaching instance. For multimodal duties, this JSON object sometimes contains:

messages: An inventory of conversational turns (for instance, system, consumer, assistant). The consumer flip incorporates placeholders for photographs (for instance,) and the textual content immediate that guides the mannequin. The assistant flip incorporates the goal output, which on this case is the ground-truth JSON string. photographs: An inventory of relative paths—inside the dataset listing construction—to the doc web page photographs (JPG information) related to this coaching instance.

As with commonplace ML apply, the dataset is break up into coaching, improvement (validation), and take a look at units to successfully prepare the mannequin, tune hyperparameters, and consider its last efficiency on unseen knowledge. Every doc (which could possibly be single-page or multi-page) paired with its corresponding ground-truth JSON annotation constitutes a single row or instance in our dataset. In our use case, one coaching pattern is the bill picture (or a number of photographs of doc pages) and the corresponding detailed JSON extraction. This one-to-one mapping is crucial for supervised fine-tuning.

The conversion course of, detailed within the dataset creation pocket book from the related GitHub repo, includes a number of key steps:

- Picture dealing with: If the supply doc is a PDF, every web page is rendered right into a high-quality PNG picture.

- Annotation processing (fill lacking values): We apply mild pre-processing to the uncooked JSON annotation. Nice-tuning a number of fashions on an open supply dataset, we noticed that the efficiency will increase when all keys are current in each JSON pattern. To keep up this consistency, the goal JSONs within the dataset are made to incorporate the identical set of top-level keys (derived from the complete dataset). If a key’s lacking for a specific doc, it’s added with a null worth.

- Key ordering: The keys inside the processed JSON annotation are sorted alphabetically. This constant ordering helps the mannequin be taught a secure output construction.

- Immediate development: A consumer immediate is constructed. This immediate contains

tags (one for every web page of the doc) and explicitly lists the JSON keys the mannequin is anticipated to extract. Together with the JSON keys within the prompts improves the fine-tuned mannequin’s efficiency. - Swift formatting: These elements (immediate, picture paths, goal JSON) are assembled into the Swift JSONL format. Swift datasets help multimodal inputs, together with photographs, movies and audios.

The next is an instance construction of a single coaching occasion in Swift’s JSONL format, demonstrating how multimodal inputs are organized. This contains conversational messages, paths to pictures, and objects containing bounding field (bbox) coordinates for visible references inside the textual content. For extra details about how you can create a customized dataset for Swift, see the Swift documentation.

Nice-tuning frameworks and sources

In our analysis of fine-tuning frameworks to be used with SageMaker AI, we thought-about a number of outstanding choices highlighted locally and related to our wants. These included Hugging Face Transformers, Hugging Face Autotrain, Llama Manufacturing unit, Unsloth, Torchtune, and ModelScope SWIFT (referred to easily as SWIFT on this publish, aligning with the SWIFT 2024 paper by Zhao and others.).

After experimenting with these, we determined to make use of SWIFT due to its light-weight nature, complete help for numerous Parameter-Environment friendly Nice-Tuning (PEFT) strategies like LoRA and DoRA, and its design tailor-made for environment friendly coaching of a wide selection of fashions, together with the VLMS used on this publish (for instance, Qwen-VL 2.5). Its scripting method integrates seamlessly with SageMaker AI coaching jobs, permitting for scalable and reproducible fine-tuning runs within the cloud.

There are a number of methods for adapting pre-trained fashions: full fine-tuning, the place all mannequin parameters are up to date, PEFT, which presents a extra environment friendly different by updating solely a small new variety of parameters (adapters), and quantization, a way that reduces mannequin dimension and hurries up inference utilizing lower-precision codecs (see Sebastian Rashka’s publish on fine-tuning to be taught extra about every approach).

Our venture makes use of LoRA and DoRA, as configured within the fine-tuning pocket book.

The next is an instance of configuring and operating a fine-tuning job (LoRA) as a SageMaker AI coaching job utilizing SWIFT and distant operate. When executing this operate, the fine-tuning shall be executed remotely as a SageMaker AI coaching job.

Nice-tuning VLMs sometimes requires GPU situations due to their computational calls for. For fashions like Qwen2.5-VL 3B, an occasion resembling an Amazon SageMaker AI ml.g5.2xlarge or ml.g6.8xlarge might be appropriate. Coaching time is a operate of dataset dimension, mannequin dimension, batch dimension, variety of epochs, and different hyperparameters. As an illustration, as famous in our venture readme.md, fine-tuning Qwen2.5 VL 3B on 300 Fatura2 samples took roughly 2,829 seconds (roughly 47 minutes) on an ml.g6.8xlarge occasion utilizing Spot pricing. This demonstrates how smaller fashions, when fine-tuned successfully, can ship distinctive efficiency cost-efficiently. Bigger fashions like Llama-3.2-11B-Imaginative and prescient would usually require extra substantial GPU sources (for instance, ml.g5.12xlarge or bigger) and longer coaching occasions.

Analysis and visualization of structured outputs (JSON)

A key facet of any automation or machine studying venture is analysis. With out evaluating your answer, you don’t understand how nicely it performs at fixing what you are promoting downside. We wrote an analysis pocket book that you need to use as a framework. Evaluating the efficiency of document-to-JSON fashions includes evaluating the model-generated JSON outputs for unseen enter paperwork (take a look at dataset) in opposition to the ground-truth JSON annotations.

Key metrics employed in our venture embody:

- Precise match (EM) – accuracy: This metric measures whether or not the extracted worth for a selected discipline is an actual character-by-character match to the ground-truth worth. It’s a strict metric, typically reported as a share.

- Character error fee (CER) – edit distance: calculates the minimal variety of single-character edits (insertions, deletions, or substitutions) required to vary the mannequin’s predicted string into the ground-truth string, sometimes normalized by the size of the ground-truth string. A decrease CER signifies higher efficiency.

- Recall-Oriented Understudy for Gisting Analysis (ROGUE): It is a suite of metrics that examine n-grams (sequences of phrases) and the longest frequent subsequence between the anticipated output and the reference. Whereas historically used for textual content summarization, ROUGE scores can even present insights into the general textual similarity of the generated JSON string in comparison with the bottom fact.

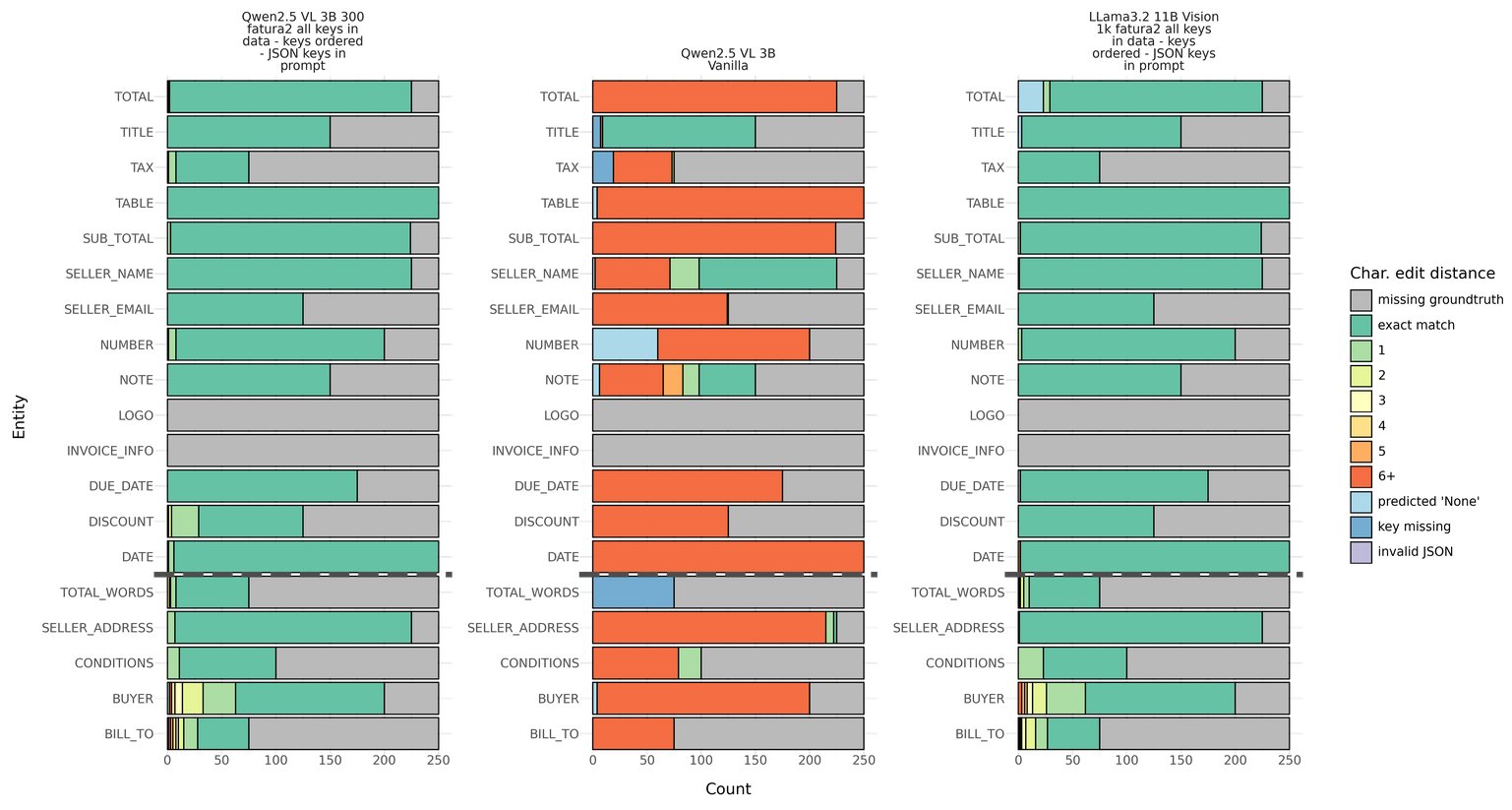

Visualizations are useful for understanding mannequin efficiency nuances. The next edit distance heatmap picture gives a granular view, displaying how intently the predictions match the bottom fact (inexperienced means the mannequin’s output precisely matches the bottom fact, and shades of yellow, orange, and purple depict growing deviations). Every mannequin has its personal bar chart, permitting fast comparability throughout fashions. The X-axis is the variety of pattern paperwork. On this case, we ran inference on 250 unseen samples from the Fatura2 dataset. The Y-axis exhibits the JSON keys that we requested the mannequin to extract; which shall be completely different for you relying on what construction your downstream system requires.

Within the picture, you’ll be able to see the efficiency of three completely different fashions on the Fatura2 dataset. From left to proper: Qwen2.5 VL 3B fine-tuned on 300 samples from the Fatura2 dataset, within the center Qwen2.5 VL 3B with out fine-tuning (labeled vanilla), and Llama 3.2 11B imaginative and prescient fine-tuned on 1,000 samples.

The gray shade exhibits the samples for which the Fatura2 dataset doesn’t include any floor fact, which is why these are the identical throughout the three fashions.

For an in depth, step-by-step walk-through of how the analysis metrics are calculated, the particular Python code used, and the way the visualizations are generated, see the excellent analysis pocket book in our venture.

The picture exhibits that Qwen2.5 vanilla is simply respectable at extracting the Title and Vendor Identify from the doc. For the opposite keys it makes greater than six character edit errors. Nonetheless, out of the field Qwen2.5 is sweet at adhering to the JSON schema with just a few predictions the place the bottom line is lacking (darkish blue shade) and no predictions of JSON that couldn’t be parsed (for instance, lacking citation marks, lacking parentheses, or a lacking comma). Analyzing the 2 fine-tuned fashions, you’ll be able to see enchancment in efficiency with most samples, precisely matching the bottom fact on all keys. There are solely slight variations between fine-tuned Qwen2.5 and fine-tuned Llama 3.2, for instance fine-tuned Qwen2.5 barely outperforms fine-tuned Llama 3.2 on Complete, Title, Circumstances, and Purchaser; whereas fine-tuned Llama 3.2 barely outperforms fine-tuned Qwen2.5 on Vendor Tackle, Low cost, Tax, and Low cost.

The objective is to enter a doc into your fine-tuned mannequin and obtain a clear, structured JSON object that precisely maps the extracted info to predefined fields. JSON-constrained decoding enforces adherence to a specified JSON schema throughout inference and is helpful to verify the output is legitimate JSON. For the Fatura2 dataset, this method was not needed—our fine-tuned Qwen 2.5 mannequin persistently produced legitimate JSON outputs with out extra constraints. Nonetheless, incorporating constrained decoding stays a worthwhile safeguard, notably for manufacturing environments the place output reliability is crucial.

Pocket book 07 visualizes the enter doc and the extracted JSON knowledge side-by-side.

Deploying the fine-tuned mannequin

After you fine-tune a mannequin and consider it in your dataset, you’ll want to deploy it to run inference to course of your paperwork. Relying in your use case, a special deployment choice is perhaps extra appropriate.

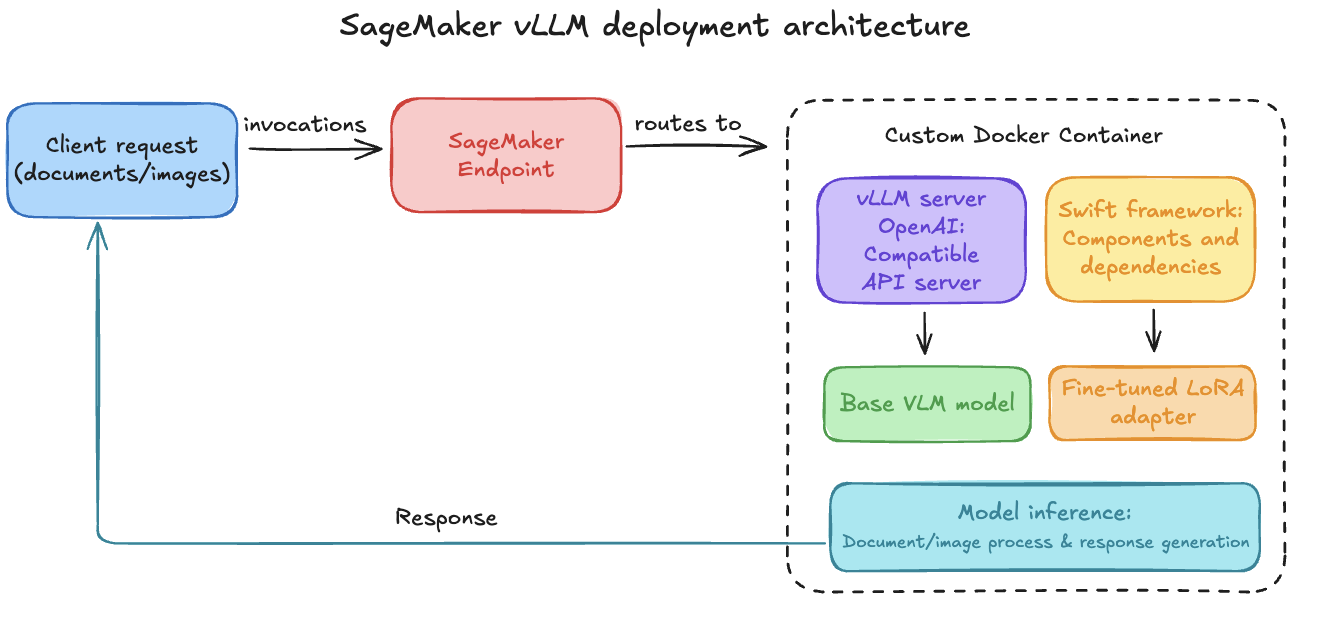

Choice a: vLLM container prolonged for SageMaker

To deploy our fine-tuned mannequin for real-time inference, we use SageMaker endpoints. SageMaker endpoints present absolutely managed internet hosting for real-time inference for FMs, deep studying, and different ML fashions and permits managed autoscaling and value optimum deployment strategies. The method, detailed in our deploy mannequin pocket book, includes constructing a customized Docker container. This container packages the vLLM serving engine, extremely optimized for LLM and VLM inference, together with the Swift framework elements wanted to load our particular mannequin and adapter. vLLM gives an OpenAI-compatible API server by default, appropriate for dealing with doc and picture inputs with VLMs. Our customized docker-artifacts and Dockerfile adapts this vLLM base for SageMaker deployment. Key steps embody:

- Organising the required surroundings and dependencies.

- Configuring an entry level that initializes the vLLM server.

- Ensuring the server can load the bottom VLM and dynamically apply our fine-tuned LoRA adapter. The Amazon S3 path to the adapter (

mannequin.tar.gz) is handed utilizing theADAPTER_URIsurroundings variable when creating the SageMaker mannequin. - The container, after being constructed and pushed to Amazon ECR, is then deployed to a SageMaker endpoint, which listens for invocation requests and routes them to the vLLM engine contained in the container.

The next picture exhibits a SageMaker vLLM deployment structure, the place a customized Docker container from Amazon ECR is deployed to a SageMaker endpoint. The container makes use of vLLM’s OpenAI-compatible API and Swift to serve a base VLM with a fine-tuned LoRA adapter dynamically loaded from Amazon S3.

Choice b (optionally available): Inference elements on SageMaker

For extra advanced inference workflows which may contain refined pre-processing of enter paperwork, post-processing of the extracted JSON, and even chaining a number of fashions (for instance, a classification mannequin adopted by an extraction mannequin), Amazon SageMaker inference elements supply enhanced flexibility. You should use them to construct a pipeline of a number of containers or fashions inside a single endpoint, every dealing with a selected a part of the inference logic.

Choice c: Customized mannequin inference in Amazon Bedrock

Now you can import your customized fashions in Amazon Bedrock after which use Amazon Bedrock options to make inference calls to the mannequin. Qwen 2.5 structure is supported (see Supported Architectures). For extra info, see Amazon Bedrock Customized Mannequin Import now usually out there.

Clear up

To keep away from ongoing costs, it’s essential to take away the AWS sources created for this venture once you’re completed.

- SageMaker endpoints and fashions:

- Within the AWS Administration Console for SageMaker AI, go to Inference after which Endpoints. Choose and delete endpoints created for this venture.

- Then, go to Inference after which Fashions and delete the related fashions.

- Amazon S3 knowledge:

- Navigate to the Amazon S3 console.

- Delete the S3 buckets or particular folders or prefixes used for datasets, mannequin artifacts (for instance, mannequin.tar.gz from coaching jobs), and inference outcomes. Observe: Ensure you don’t delete knowledge wanted by different initiatives.

- Amazon ECR photographs and repositories:

- Within the Amazon ECR console, delete Docker photographs and the repository created for the customized vLLM container if you happen to deployed one.

- CloudWatch logs (optionally available):

- Logs from SageMaker actions are saved in Amazon CloudWatch. You may delete related log teams (for instance,

/aws/sagemaker/TrainingJobsand/aws/sagemaker/Endpoints) if desired, although many have automated retention insurance policies.

- Logs from SageMaker actions are saved in Amazon CloudWatch. You may delete related log teams (for instance,

Vital: At all times confirm sources earlier than deletion. In case you experimented with Amazon Bedrock customized mannequin imports, ensure these are additionally cleaned up. Use AWS Value Explorer to watch for surprising costs.

Conclusion and future outlook

On this publish, we demonstrated that fine-tuning VLMs gives a strong and versatile method to automate and considerably improve doc understanding capabilities. We now have additionally demonstrated that utilizing targeted fine-tuning permits smaller, multi-modal fashions to compete successfully with a lot bigger counterparts (98% accuracy with Qwen2.5 VL 3B). The venture additionally highlights that fine-tuning VLMs for document-to-JSON processing might be accomplished cost-effectively by utilizing Spot situations and PEFT strategies (roughly $1 USD to fine-tune a 3 billion parameter mannequin on round 200 paperwork).

The fine-tuning process was carried out utilizing Amazon SageMaker coaching jobs and the Swift framework, which proved to be a flexible and efficient toolkit for orchestrating this fine-tuning course of.

The potential for enhancing and increasing this work is huge. Some thrilling future instructions embody deploying structured doc fashions on CPU-based, serverless compute like AWS Lambda or Amazon SageMaker Serverless Inference utilizing instruments like llama.cpp or vLLM. Utilizing quantized fashions can allow low-latency, cost-efficient inference for sporadic workloads. One other future route contains bettering analysis of structured outputs by going past field-level metrics. This contains validating advanced nested constructions and tables utilizing strategies like tree edit distance for tables (TEDS).

The whole code repository, together with the notebooks, utility scripts, and Docker artifacts, is out there on GitHub that will help you get began unlocking insights out of your paperwork. For the same method, utilizing Amazon Nova, please consult with this AWS weblog for optimizing doc AI and structured outputs by fine-tuning Amazon Nova Fashions and on-demand inference.

Concerning the Authors

Arlind Nocaj is a GTM Specialist Options Architect for AI/ML and Generative AI for Europe central based mostly in AWS Zurich Workplace, who guides enterprise prospects by their digital transformation journeys. With a PhD in community analytics and visualization (Graph Drawing) and over a decade of expertise as a analysis scientist and software program engineer, he brings a novel mix of educational rigor and sensible experience to his position. His main focus lies in utilizing the total potential of information, algorithms, and cloud applied sciences to drive innovation and effectivity. His areas of experience embody Machine Studying, Generative AI and particularly Agentic techniques with Multi-modal LLMs for doc processing and structured insights.

Arlind Nocaj is a GTM Specialist Options Architect for AI/ML and Generative AI for Europe central based mostly in AWS Zurich Workplace, who guides enterprise prospects by their digital transformation journeys. With a PhD in community analytics and visualization (Graph Drawing) and over a decade of expertise as a analysis scientist and software program engineer, he brings a novel mix of educational rigor and sensible experience to his position. His main focus lies in utilizing the total potential of information, algorithms, and cloud applied sciences to drive innovation and effectivity. His areas of experience embody Machine Studying, Generative AI and particularly Agentic techniques with Multi-modal LLMs for doc processing and structured insights.

Malte Reimann is a Options Architect based mostly in Zurich, working with prospects throughout Switzerland and Austria on their cloud initiatives. His focus lies in sensible machine studying purposes—from immediate optimization to fine-tuning imaginative and prescient language fashions for doc processing. The latest instance, working in a small crew to supply deployment choices for Apertus on AWS. An energetic member of the ML neighborhood, Malte balances his technical work with a disciplined method to health, preferring early morning health club classes when it’s empty. Throughout summer time weekends, he explores the Swiss Alps on foot and having fun with time in nature. His method to each expertise and life is easy: constant enchancment by deliberate apply, whether or not that’s optimizing a buyer’s cloud deployment or getting ready for the following hike within the clouds.

Malte Reimann is a Options Architect based mostly in Zurich, working with prospects throughout Switzerland and Austria on their cloud initiatives. His focus lies in sensible machine studying purposes—from immediate optimization to fine-tuning imaginative and prescient language fashions for doc processing. The latest instance, working in a small crew to supply deployment choices for Apertus on AWS. An energetic member of the ML neighborhood, Malte balances his technical work with a disciplined method to health, preferring early morning health club classes when it’s empty. Throughout summer time weekends, he explores the Swiss Alps on foot and having fun with time in nature. His method to each expertise and life is easy: constant enchancment by deliberate apply, whether or not that’s optimizing a buyer’s cloud deployment or getting ready for the following hike within the clouds.

Nick McCarthy is a Senior Generative AI Specialist Options Architect on the Amazon Bedrock crew, targeted on mannequin customization. He has labored with AWS shoppers throughout a variety of industries — together with healthcare, finance, sports activities, telecommunications, and power — serving to them speed up enterprise outcomes by the usage of AI and machine studying. Exterior of labor, Nick loves touring, exploring new cuisines, and studying about science and expertise. He holds a Bachelor’s diploma in Physics and a Grasp’s diploma in Machine Studying.

Nick McCarthy is a Senior Generative AI Specialist Options Architect on the Amazon Bedrock crew, targeted on mannequin customization. He has labored with AWS shoppers throughout a variety of industries — together with healthcare, finance, sports activities, telecommunications, and power — serving to them speed up enterprise outcomes by the usage of AI and machine studying. Exterior of labor, Nick loves touring, exploring new cuisines, and studying about science and expertise. He holds a Bachelor’s diploma in Physics and a Grasp’s diploma in Machine Studying.

Irene Marban Alvarez is a Generative AI Specialist Options Architect at Amazon Net Providers (AWS), working with prospects in the UK and Eire. With a background in Biomedical Engineering and Masters in Synthetic Intelligence, her work focuses on serving to organizations leverage the newest AI applied sciences to speed up their enterprise. In her spare time, she loves studying and cooking for her associates.

Irene Marban Alvarez is a Generative AI Specialist Options Architect at Amazon Net Providers (AWS), working with prospects in the UK and Eire. With a background in Biomedical Engineering and Masters in Synthetic Intelligence, her work focuses on serving to organizations leverage the newest AI applied sciences to speed up their enterprise. In her spare time, she loves studying and cooking for her associates.