AI clusters have entirely transformed the way traffic flows inside data centers. Most traffic now moves east–west between GPUs during model training and checkpointing, rather than north–south between applications and the internet. This shifts where bottlenecks occur. CPUs, which have traditionally handled encapsulation, flow control, and security, are now on the critical path, adding latency and variability that make it harder to keep GPUs utilized.

As a result of this performance limit, the DPU/SmartNIC has evolved from an optional accelerator into critical infrastructure. “Data center is the new unit of computing,” NVIDIA CEO Jensen Huang said during GTC 2021. “There’s no way you’re going to do that on the CPU. So you have to move the networking stack off. You want to move the security stack off, and you want to move the data processing and data movement stack off” (Jensen Huang, interview with The Next Platform). NVIDIA claims its Spectrum-X Ethernet fabric (encompassing congestion control, adaptive routing, and telemetry) can deliver up to 48% higher storage read bandwidth for AI workloads.

The network interface is now a processing layer. The question of maturity is no longer whether offloading is necessary, but which offloads currently deliver a measurable operational ROI.

Where AI Fabric Traffic and Reliability Become Critical

AI workloads operate synchronously: when one node experiences congestion, all GPUs in the cluster wait. Meta reports that routing-induced flow collisions and uneven traffic distribution in early RoCE deployments “degraded the training performance up to more than 30%,” prompting changes in routing and collective tuning. These issues are not purely architectural; they emerge directly from how east–west flows behave at scale.
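The synchronous behavior described above can be illustrated with a minimal sketch. It assumes, for simplicity, that compute and communication do not overlap and that a training step ends only when the slowest node finishes its exchange. The function names and all timing values are hypothetical and chosen only for illustration.

```python
def step_time_ms(compute_ms, comm_ms_per_node):
    """A synchronous step ends only when the slowest node finishes its exchange."""
    return compute_ms + max(comm_ms_per_node)


def gpu_utilization(compute_ms, comm_ms_per_node):
    """Fraction of the step that GPUs spend computing rather than waiting."""
    return compute_ms / step_time_ms(compute_ms, comm_ms_per_node)


# Hypothetical numbers: 100 ms of compute, 8 nodes, 20 ms of communication each.
healthy = [20.0] * 8
# Same cluster, but congestion delays one node's traffic to 60 ms.
one_congested = [20.0] * 7 + [60.0]

if __name__ == "__main__":
    for name, comm in (("healthy", healthy), ("one congested", one_congested)):
        print(name, step_time_ms(100.0, comm), gpu_utilization(100.0, comm))
```

In this toy case a single congested node out of eight stretches the step from 120 ms to 160 ms, and utilization drops from about 83% to 62.5%, even though seven nodes saw no congestion at all.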

InfiniBand has long provided credit-based link-level flow control (per virtual lane, VL) to guarantee lossless delivery and prevent buffer overruns; it is a hardware mechanism built into the link layer. Ethernet is evolving along similar lines through the Ultra Ethernet Consortium (UEC): its Ultra Ethernet Transport (UET) work introduces endpoint/host-aware transport, congestion management guided by real-time feedback, and coordination between endpoints and switches, explicitly moving more congestion handling and telemetry into the NIC/endpoint.
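To make the idea of credit-based flow control concrete, here is a toy model of a single virtual lane. It is a sketch of the general technique, not of the InfiniBand specification: the class name `CreditLink`, its methods, and the buffer counts are all assumptions made for illustration. The key property is that the sender stalls when it has no credits, so the receiver's buffer can never overflow and nothing is dropped.

```python
from collections import deque


class CreditLink:
    """Toy model of one virtual lane with credit-based flow control."""

    def __init__(self, receiver_buffers):
        self.capacity = receiver_buffers
        self.credits = receiver_buffers  # sender's view of free receiver buffers
        self.rx_queue = deque()          # packets waiting in the receiver

    def try_send(self, packet):
        """Send only if a credit is available; otherwise stall (never drop)."""
        if self.credits == 0:
            return False
        self.credits -= 1
        self.rx_queue.append(packet)
        return True

    def receiver_drain(self, n=1):
        """Receiver consumes up to n packets and returns one credit for each."""
        drained = 0
        while self.rx_queue and drained < n:
            self.rx_queue.popleft()
            self.credits += 1
            drained += 1
        return drained


if __name__ == "__main__":
    link = CreditLink(receiver_buffers=2)
    print([link.try_send(p) for p in ("a", "b", "c")])  # third send must wait
    link.receiver_drain(1)                               # one credit comes back
    print(link.try_send("c"))
```

The trade-off is that backpressure replaces loss: a slow receiver stalls its sender instead of forcing retransmissions, which is why the mechanism is associated with deterministic fabric behavior.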

InfiniBand remains the benchmark for deterministic fabric behavior. Ethernet-based AI fabrics are rapidly evolving through innovations in UET and SmartNICs.

Network professionals must evaluate silicon capabilities, not just link speeds. Reliability is now determined by telemetry, congestion control, and offload support at the NIC/DPU level.

Offload Pattern: Encapsulation and Stateless Pipeline Processing

AI clusters at cloud and enterprise scale rely on overlays such as VXLAN and GENEVE to segment traffic across tenants and domains. Traditionally, these encapsulation tasks run on the CPU.

DPUs and SmartNICs offload encapsulation, hashing, and flow matching directly into hardware pipelines, reducing jitter and freeing CPU cycles. NVIDIA documents VXLAN hardware offloads on its NICs/DPUs and claims Spectrum-X delivers material AI-fabric gains, including up to 48% higher storage read bandwidth in partner tests and more than 4x lower latency versus traditional Ethernet in Supermicro benchmarking.
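For readers unfamiliar with what is being offloaded, the sketch below builds and parses the 8-byte VXLAN header defined in RFC 7348 (a flags byte with the VNI-valid bit, reserved bits, and a 24-bit VXLAN Network Identifier). This is the per-packet work that a software data path performs on the CPU and that a NIC pipeline performs in hardware. The function names are hypothetical, and the outer Ethernet/IP/UDP headers are omitted for brevity.

```python
import struct

VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag from RFC 7348


def vxlan_header(vni):
    """Build the 8-byte VXLAN header for a 24-bit VNI."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags (8 bits) + reserved (24 bits); word 2: VNI (24 bits) + reserved (8 bits).
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header):
    """Extract the VNI from a VXLAN header, checking the VNI-valid flag."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag not set")
    return vni_word >> 8


if __name__ == "__main__":
    hdr = vxlan_header(5001)  # 5001 is an arbitrary example tenant ID
    print(hdr.hex(), parse_vni(hdr))
```

The operation itself is trivial; the cost on a host CPU comes from repeating it, together with hashing and flow lookup, for every packet at line rate.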

Offload for VXLAN and stateless flow processing is supported across NVIDIA BlueField, AMD Pensando Elba, and Marvell OCTEON 10 platforms.

From a competitive perspective:

- NVIDIA focuses on tight integration with its DOCA (Data Center Infrastructure-on-a-Chip Architecture) SDK for GPU-accelerated AI workloads.

- AMD Pensando offers P4 programmability and integration with Cisco Smart Switches.

- Intel IPU brings Arm-heavy designs for transport programmability.

Encapsulation offload is no longer just a performance enhancer; it is foundational for predictable AI fabric behavior.

Offload Pattern: Inline Encryption and East–West Security

As AI models cross sovereign boundaries and multi-tenant clusters become common, encryption of east–west traffic has become mandatory. However, encrypting this traffic on the host CPU introduces measurable performance penalties. In a joint VMware–6WIND–NVIDIA validation, BlueField-2 DPUs offloaded IPsec for a 25 Gbps testbed (2×25 GbE BlueField-2), demonstrating higher throughput and lower host-CPU use for the 6WIND vSecGW on vSphere 8.

Figure: Courtesy of NVIDIA

Marvell positions its OCTEON 10 DPUs for inline security offload in AI data centers, citing integrated crypto accelerators capable of 400+ Gbps IPsec/TLS (Marvell OCTEON 10 DPU Family media deck); the company also highlights growing AI-infrastructure demand in its investor communications. Encryption offload is shifting from optional to required as AI becomes regulated infrastructure.

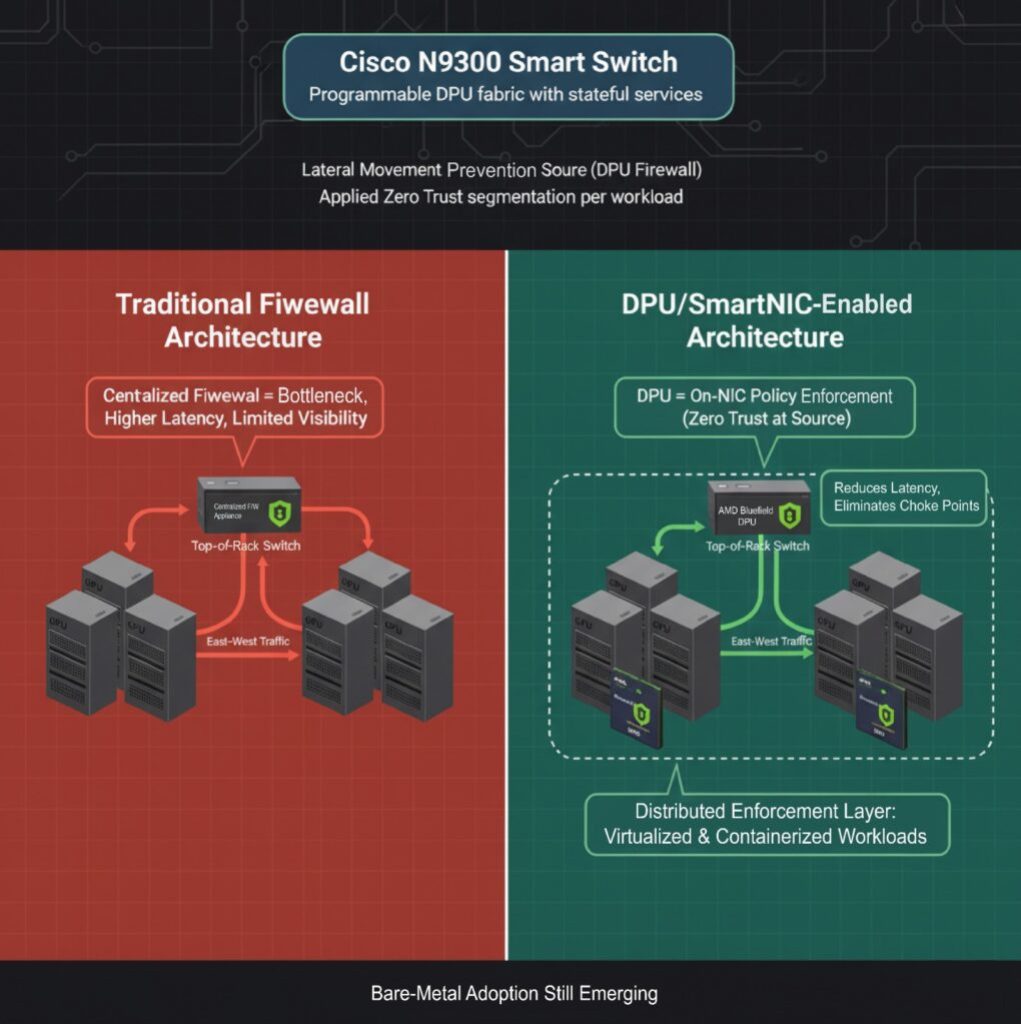

Offload Pattern: Microsegmentation and Distributed Firewalling

GPU servers are often deployed in high-trust zones, but the risk of lateral movement remains, especially in multi-tenant environments or when inference runs on shared infrastructure. Traditional firewalls sit outside the GPU servers and force east–west traffic through centralized choke points. This bottleneck increases latency and creates operational blind spots.

DPUs and SmartNICs now let operators deploy L4 firewalls directly on the NIC, enforcing policy at the source. Cisco launched the N9300 Series “Smart Switches,” which embed programmable DPUs that add stateful services directly to the data center fabric to accelerate operations. NVIDIA’s BlueField DPU similarly supports microsegmentation, allowing operators to apply Zero Trust principles to GPU workloads without involving the host CPU.
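As a rough illustration of what enforcing policy at the source means, the sketch below evaluates an ordered, first-match L4 rule list with a default-deny fallback. It is deliberately stateless and is not any vendor's API: the `Rule` type, the `evaluate` function, and the subnets are hypothetical. The one real-world detail is UDP port 4791, the destination port used by RoCEv2.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional


@dataclass(frozen=True)
class Rule:
    src: str              # source subnet in CIDR form
    dst: str              # destination subnet in CIDR form
    proto: str            # "tcp" or "udp"
    dport: Optional[int]  # None matches any destination port
    action: str           # "allow" or "deny"


def evaluate(rules, src_ip, dst_ip, proto, dport, default="deny"):
    """Return the action of the first matching rule, else the default."""
    for r in rules:
        if (ip_address(src_ip) in ip_network(r.src)
                and ip_address(dst_ip) in ip_network(r.dst)
                and r.proto == proto
                and r.dport in (None, dport)):
            return r.action
    return default


# Hypothetical policy: tenant A's GPU subnet may exchange RoCEv2 traffic
# internally; any other UDP traffic into that subnet is denied.
POLICY = [
    Rule("10.0.1.0/24", "10.0.1.0/24", "udp", 4791, "allow"),
    Rule("10.0.0.0/8", "10.0.1.0/24", "udp", None, "deny"),
]

if __name__ == "__main__":
    print(evaluate(POLICY, "10.0.1.5", "10.0.1.9", "udp", 4791))
    print(evaluate(POLICY, "10.0.2.5", "10.0.1.9", "udp", 4791))
```

Running this check on each server's NIC rather than at a central firewall is what removes the choke point: traffic between two tenant A nodes never leaves its direct path, while cross-tenant traffic is dropped before it reaches the fabric.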

While firewall offload is production-ready for virtualized and containerized environments, its application in bare-metal AI fabric deployments is still developing.

Network engineers gain a new enforcement point inside the server itself. This offload pattern is gaining traction in regulated and sovereign AI deployments where east–west isolation is required.

Case Snapshot: Ethernet AI Fabric Operations in Production

To overcome fabric instability, Meta co-designed the transport layer and collective library, implementing Enhanced ECMP traffic engineering, queue-pair scaling, and a receiver-driven admission model. These changes yielded up to 40% improvement in AllReduce completion latency, demonstrating that fabric performance is now determined as much by transport logic in the NIC as by switch architecture.
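The intuition behind flow collisions and queue-pair scaling can be sketched with a simplified model. It assumes each flow is hashed onto one of several equal-cost links, and that splitting a flow across more queue pairs (QPs) gives the hash more distinct inputs to spread. This is not Meta's implementation: the hash function, the function names, and the traffic pattern are all assumptions made for illustration.

```python
import hashlib
from collections import Counter


def ecmp_link(flow_key, n_links):
    """Pick a link by hashing the flow key (stand-in for a switch's ECMP hash)."""
    digest = hashlib.sha256(repr(flow_key).encode()).digest()
    return int.from_bytes(digest[:4], "big") % n_links


def link_loads(flows, n_links, qps_per_flow=1):
    """Per-link load in Gbps when each flow is split evenly across its QPs."""
    loads = Counter()
    for src, dst, gbps in flows:
        for qp in range(qps_per_flow):
            # Each QP hashes independently, so it may land on a different link.
            loads[ecmp_link((src, dst, qp), n_links)] += gbps / qps_per_flow
    return [loads[i] for i in range(n_links)]


# Hypothetical pattern: 8 large flows of 100 Gbps over 8 equal-cost uplinks.
FLOWS = [(f"gpu{i}", f"gpu{i + 8}", 100.0) for i in range(8)]

if __name__ == "__main__":
    for qps in (1, 16):
        loads = link_loads(FLOWS, n_links=8, qps_per_flow=qps)
        print(f"QPs per flow: {qps:2d}  busiest link: {max(loads):.1f} Gbps")
```

With one QP per flow, any two flows that hash to the same link double its load while another link sits idle. More QPs per flow means more, smaller hash inputs, which tends to even out the distribution; it does not guarantee a perfect balance, which is one reason the source also describes traffic engineering and receiver-driven admission.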

In another example, a joint VMware–6WIND–NVIDIA validation used BlueField-2 DPUs to offload IPsec for a 6WIND vSecGW on vSphere 8. The lab setup (limited by BlueField-2’s dual 25 GbE ports) targeted and demonstrated at least 25 Gbps aggregated IPsec throughput and showed that offloading increased throughput and improved application response, while freeing host-CPU cores.

Real deployments validate performance gains. However, independent benchmarks comparing vendors remain limited. Network architects should evaluate vendor claims through the lens of published deployment evidence, rather than relying on marketing figures.

Buyer’s Landscape: Silicon and SDK Maturity

The competitive landscape is being reshaped by DPU and SmartNIC strategies. The following table highlights key considerations and differences among vendors.

| Vendor | Differentiator | Maturity | Key Considerations |
| --- | --- | --- | --- |
| NVIDIA | Tight integration with GPUs, DOCA SDK, and advanced telemetry | High | Highest performance; ecosystem lock-in is a concern |
| AMD Pensando | P4-based pipeline, Cisco integration | High | Strong in enterprise and hybrid deployments |
| Intel IPU | Programmable transport, crypto acceleration | Emerging | Expected 2025 rollout; backed by Google deployment history |
| Marvell OCTEON | Power-efficient, storage-centric offload | Medium | Strength in edge and disaggregated storage AI |

Buyers are prioritizing more than raw speeds and feeds. Omdia emphasizes that effective operations now hinge on AI-driven automation and actionable telemetry, not just higher link rates.

Procurement decisions must be aligned not only with performance targets but also with SDK roadmap maturity and long-term platform lock-in risks.

Competitive and Architectural Choices: What Operators Must Decide

As AI fabrics move from early deployment to scaled production, infrastructure leaders face several strategic choices that will shape cost, performance, and operational risk for years to come.

DPU vs. SuperNIC vs. High-End NIC

DPUs provide Arm cores, crypto blocks, and storage/network offload capabilities. They work best in AI environments that are multi-tenant, regulated, or security-sensitive. SuperNICs, like NVIDIA’s Spectrum-X adapters, are designed to work with switches at very low latency and with deep telemetry integration, but they lack general-purpose processors.

High-end NICs (without offload capabilities) may still serve single-tenant or small-scale AI clusters, but lack long-term viability for multi-pod AI fabrics.

Ethernet vs. InfiniBand for AI Fabrics

InfiniBand is still the strongest option for native congestion control and predictable latency, and it remains a natural choice for hyperscale deployments where vendor lock-in is acceptable. However, Ethernet is quickly gaining ground as vendors standardize Ultra Ethernet Transport and add SmartNIC/DPU offload.

“When we first initiated our coverage of AI Back-end Networks in late 2023, the market was dominated by InfiniBand, holding over 80 percent share… As the industry moves to 800 Gbps and beyond, we believe Ethernet is now firmly positioned to overtake InfiniBand in these high-performance deployments.” Sameh Boujelbene, Vice President, Dell’Oro Group.

SDK and Ecosystem Control

Vendor control over software ecosystems is becoming a key differentiator. NVIDIA DOCA, AMD’s P4-based framework, and Intel’s IPU SDK each represent divergent development paths. Choosing a vendor today effectively means choosing a programming model and long-term integration strategy.

When It Pencils Out and What to Watch Next

DPUs and SmartNICs are no longer positioned as future enablers. They are becoming required infrastructure for AI-scale networking. The business case is clearest in clusters where:

- East–west traffic dominates

- GPU utilization is affected by microburst congestion

- Regulatory or multi-tenant requirements mandate encryption or isolation

- Storage traffic interferes with compute performance

Early adopters report measurable ROI. NVIDIA disclosed improved GPU utilization and up to 48% higher storage read bandwidth in Spectrum-X deployments that combine telemetry and congestion offload. Meanwhile, Marvell and AMD report rising attach rates for DPUs in AI design wins where operators require data path autonomy from the host CPU.

Over the next 12 months, network professionals should closely monitor:

- NVIDIA’s roadmap for BlueField-4 and SuperNIC enhancements

- AMD Pensando’s Salina DPUs integrated into Cisco Smart Switches

- UEC 1.0 specification and vendor adoption timelines

- Intel’s first production deployments of the E2200 IPU

- Independent benchmarks comparing Ultra Ethernet fabrics vs. InfiniBand performance under AI collective loads

The economics of AI networking now hinge on where processing happens. The strategic shift is underway from CPU-centric architectures to fabrics where DPUs and SmartNICs define performance, reliability, and security at scale.