The rise of highly effective giant language fashions (LLMs) that may be consumed through API calls has made it remarkably easy to combine synthetic intelligence (AI) capabilities into purposes. But regardless of this comfort, a big variety of enterprises are selecting to self-host their very own fashions—accepting the complexity of infrastructure administration, the price of GPUs within the serving stack, and the problem of conserving fashions up to date. The choice to self-host usually comes down to 2 important components that APIs can not tackle. First, there may be knowledge sovereignty: the necessity to be sure that delicate info doesn’t go away the infrastructure, whether or not because of regulatory necessities, aggressive considerations, or contractual obligations with prospects. Second, there may be mannequin customization: the power to tremendous tune fashions on proprietary knowledge units for industry-specific terminology and workflows or create specialised capabilities that general-purpose APIs can not supply.

Amazon SageMaker AI addresses the infrastructure complexity of self-hosting by abstracting away the operational burden. By way of managed endpoints, SageMaker AI handles the provisioning, scaling, and monitoring of GPU assets, permitting groups to deal with mannequin efficiency reasonably than infrastructure administration. The system gives inference-optimized containers with well-liked frameworks like vLLM pre-configured for max throughput and minimal latency. As an illustration, the Giant Mannequin Inference (LMI) v16 container picture makes use of vLLM v0.10.2, which makes use of the V1 engine and comes with help for brand new mannequin architectures and new {hardware}, such because the Blackwell/SM100 era. This managed method transforms what sometimes requires devoted machine studying operations (MLOps) experience right into a deployment course of that takes only a few strains of code.

Reaching optimum efficiency with these managed containers nonetheless requires cautious configuration. Parameters like tensor parallelism diploma, batch dimension, most sequence size, and concurrency limits can dramatically affect each latency and throughput—and discovering the suitable steadiness in your particular workload and price constraints is an iterative course of that may be time-consuming.

BentoML’s LLM-Optimizer addresses this problem by enabling systematic benchmarking throughout completely different parameter configurations, changing handbook trial-and-error with an automatic search course of. The software lets you outline constraints similar to particular latency targets or throughput necessities, making it easy to establish configurations that meet your service degree targets. You need to use LLM-Optimizer to search out optimum serving parameters for vLLM regionally or in your improvement setting, apply those self same configurations on to the SageMaker AI endpoint for a seamless transition to manufacturing. This submit illustrates this course of by discovering an optimum deployment for a Qwen-3-4B mannequin on an Amazon SageMaker AI endpoint.

This submit is written for training ML engineers, options architects, and system builders who already deploy fashions on Amazon SageMaker or comparable infrastructure. We assume familiarity with GPU situations, endpoints, and mannequin serving, and deal with sensible efficiency optimization. The reasons of inference metrics are included not as a newbie tutorial, however to construct shared instinct. For particular parameters like batch dimension & tensor parallelism, and the way they instantly affect price and latency in manufacturing.

Answer overview

The step-by-step breakdown is as follows:

- Outline constraints in Jupyter Pocket book: The method begins inside SageMaker AI Studio, the place customers open a Jupyter Pocket book to outline the deployment targets and constraints of the use case. These constraints can embrace goal latency, desired throughput, and output tokens.

- Run theoretical and empirical benchmarks with the BentoML LLM-Optimizer: The LLM-Optimizer first runs a theoretical GPU efficiency estimate to establish possible configurations for the chosen {hardware} (on this instance, an

ml.g6.12xlarge). It executes benchmark checks utilizing the vLLM serving engine throughout a number of parameter combos similar to tensor parallelism, batch dimension, and sequence size to empirically measure latency and throughput. Primarily based on these benchmarks, the optimizer robotically determines probably the most environment friendly serving configuration that satisfies the offered constraints. - Generate and deploy optimized configuration in a SageMaker endpoint: As soon as the benchmarking is full, the optimizer returns a JSON configuration file containing the optimum parameter values. This JSON is handed from the Jupyter Pocket book to the SageMaker Endpoint configuration, which deploys the LLM (on this instance, the

Qwen/Qwen3-4Bmannequin utilizing the vLLM-based LMI container) in a managed HTTP endpoint utilizing the optimum runtime parameters.

The next determine is an outline of the workflow performed all through the submit.

Earlier than leaping into the theoretical underpinnings of inference optimization, it’s price grounding why these ideas matter within the context of real-world deployments. When groups transfer from API-based fashions to self-hosted endpoints, they inherit the accountability for tuning efficiency parameters that instantly have an effect on price and consumer expertise. Understanding how latency and throughput work together by the lens of GPU structure and arithmetic depth permits engineers to make these trade-offs intentionally reasonably than by trial and error.

Transient overview of LLM efficiency

Earlier than diving into the sensible software of this workflow, we cowl key ideas that construct instinct for why inference optimization is important for LLM-powered purposes. The next primer isn’t educational; it’s to offer the psychological mannequin wanted to interpret LLM-Optimizer’s outputs and perceive why sure configurations yield higher outcomes.

Key efficiency metrics

Throughput (requests/second): What number of requests your system completes per second. Larger throughput means serving extra customers concurrently.

Latency (seconds): The entire time from when a request arrives till the entire response is returned. Decrease latency means sooner consumer expertise.

Arithmetic depth: The ratio of computation carried out to knowledge moved. This determines whether or not your workload is:

Reminiscence-bound: Restricted by how briskly you possibly can transfer knowledge (low arithmetic depth)

Compute-bound: Restricted by uncooked GPU processing energy (excessive arithmetic depth)

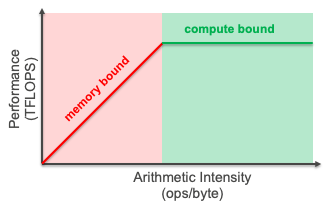

The roofline mannequin

The roofline mannequin visualizes efficiency by plotting throughput in opposition to arithmetic depth. For deeper content material on the roofline mannequin, go to the AWS Neuron Batching documentation. The mannequin reveals whether or not your software is bottlenecked by reminiscence bandwidth or computational capability. For LLM inference, this mannequin helps establish for those who’re restricted by:

- Reminiscence bandwidth: Knowledge switch between GPU reminiscence and compute models (typical for small batch sizes)

- Compute capability: Uncooked floating-point operations (FLOPS) out there on the GPU (typical for giant batch sizes)

The throughput-latency trade-off

In follow, optimizing LLM inference follows a basic trade-off: as you improve throughput, latency rises. This occurs as a result of:

- Bigger batch sizes → Extra requests processed collectively → Larger throughput

- Extra concurrent requests → Longer queue wait instances → Larger latency

- Tensor parallelism → Distributes mannequin throughout GPUs → Impacts each metrics in another way

The problem lies find the optimum configuration throughout a number of interdependent parameters:

- Tensor parallelism diploma (what number of GPUs to make use of)

- Batch dimension (most variety of tokens processed collectively)

- Concurrency limits (most variety of simultaneous requests)

- KV cache allocation (reminiscence for consideration states)

Every parameter impacts throughput and latency in another way whereas respecting {hardware} constraints like GPU reminiscence and compute bandwidth. This multi-dimensional optimization drawback is exactly why LLM-Optimizer is effective—it systematically explores the configuration area reasonably than counting on handbook trial-and-error.

For an outline on LLM Inference as an entire, BentoML has offered invaluable assets of their LLM Inference Handbook.

Sensible software: Discovering an optimum deployment of Qwen3-4B on Amazon SageMaker AI

Within the following sections, we stroll by a hands-on instance of figuring out and making use of optimum serving configurations for LLM deployment. Particularly, we:

- Deploy the

Qwen/Qwen3-4Bmannequin utilizing vLLM on anml.g6.12xlargeoccasion (4x NVIDIA L4 GPUs, 24GB VRAM every). - Outline life like workload constraints:

- Goal: 10 requests per second (RPS)

- Enter size: 1,024 tokens

- Output size: 512 tokens

- Discover a number of serving parameter combos:

- Tensor parallelism diploma (1, 2, or 4 GPUs)

- Max batched tokens (4K, 8K, 16K)

- Concurrency ranges (32, 64, 128)

- Analyze outcomes utilizing:

- Theoretical GPU reminiscence calculations

- Benchmarking knowledge

- Throughput vs. latency trade-offs

By the top, you’ll see how theoretical evaluation, empirical benchmarking, and managed endpoint deployment come collectively to ship a production-ready LLM setup that balances latency, throughput, and price.

Stipulations

The next are the conditions wanted to run by this instance:

- Entry to SageMaker Studio. This makes deployment & inference easy, or an interactive improvement setting (IDE) similar to PyCharm or Visible Studio Code.

- To benchmark and deploy the mannequin, test that the really helpful occasion varieties are accessible, primarily based on the mannequin dimension. To confirm the mandatory service quotas, full the next steps:

- On the Service Quotas console, beneath AWS Providers, choose Amazon SageMaker.

- Confirm enough quota for the required occasion kind for “endpoint deployment” (within the right area).

- If wanted, request a quota improve/contact AWS for help.

The next code particulars methods to set up the mandatory packages:

Run the LLM-Optimizer

To get began, instance constraints should be outlined primarily based on the focused workflow.

Instance constraints:

- Enter tokens: 1024

- Output tokens: 512

- E2E Latency: <= 60 seconds

- Throughput: >= 5 RPS

Run the estimate

Step one with llm-optimizer is to run an estimation. Working an estimate analyzes the Qwen/Qwen3-4b mannequin on 4x L4 GPUs and estimate the efficiency for an enter size of 1024 tokens, and an output of 512 tokens. As soon as run, the theoretical bests for latency and throughput are calculated mathematically and returned. The roofline evaluation returned identifies the workloads bottlenecks, and quite a few server and consumer arguments are returned, to be used within the following step, operating the precise benchmark.

Beneath the hood, LLM-Optimizer performs roofline evaluation to estimate LLM serving efficiency. It begins by fetching the mannequin structure from HuggingFace to extract parameters like hidden dimensions, variety of layers, consideration heads, and whole parameters. Utilizing these architectural particulars, it calculates the theoretical FLOPs required for each prefill (processing enter tokens) and decode (producing output tokens) phases, accounting for consideration operations, MLP layers, and KV cache entry patterns. It compares the arithmetic depth (FLOPs per byte moved) of every section in opposition to the GPU’s {hardware} traits—particularly the ratio of compute capability (TFLOPs) to reminiscence bandwidth (TB/s)—to find out whether or not prefill and decode are memory-bound or compute-bound. From this evaluation, the software estimates TTFT (time-to-first-token), ITL (inter-token latency), and end-to-end latency at numerous concurrency ranges. It additionally calculates three theoretical concurrency limits: KV cache reminiscence capability, prefill compute capability, and decode throughput capability. Lastly, it generates tuning instructions that sweep throughout completely different tensor parallelism configurations, batch sizes, and concurrency ranges for empirical benchmarking to validate the theoretical predictions.

The next code particulars methods to run an preliminary estimation primarily based on the chosen constraints:

Anticipated output:

Run the benchmark

With the estimation outputs in hand, an knowledgeable determination could be made on what parameters to make use of for benchmarking primarily based on the beforehand outlined constraints. Beneath the hood, LLM-Optimizer transitions from theoretical estimation to empirical validation by launching a distributed benchmarking loop that evaluates real-world serving efficiency on the goal {hardware}. For every permutation of server and consumer arguments, the software robotically spins up a vLLM occasion with the desired tensor parallelism, batch dimension, and token limits, then drives load utilizing an artificial or dataset-based request generator (e.g., ShareGPT). Every run captures low-level metrics—time-to-first-token (TTFT), inter-token latency (ITL), end-to-end latency, tokens per second, and GPU reminiscence utilization—throughout concurrent request patterns. These measurements are aggregated right into a Pareto frontier, permitting LLM-Optimizer to establish configurations that finest steadiness latency and throughput inside the consumer’s constraints. In essence, this step grounds the sooner theoretical roofline evaluation in actual efficiency knowledge, producing reproducible metrics that instantly inform deployment tuning.

The next code runs the benchmark, utilizing info from the estimate:

This outputs the next permutations to the vLLM engine for testing. The next are easy calculations on the completely different combos of consumer & server arguments that the benchmark runs:

- 3

tensor_parallel_sizex 3max_num_batched_tokenssettings = 9 - 3

max_concurrencyx 1num prompts= 3 - 9 * 3 = 27 completely different checks

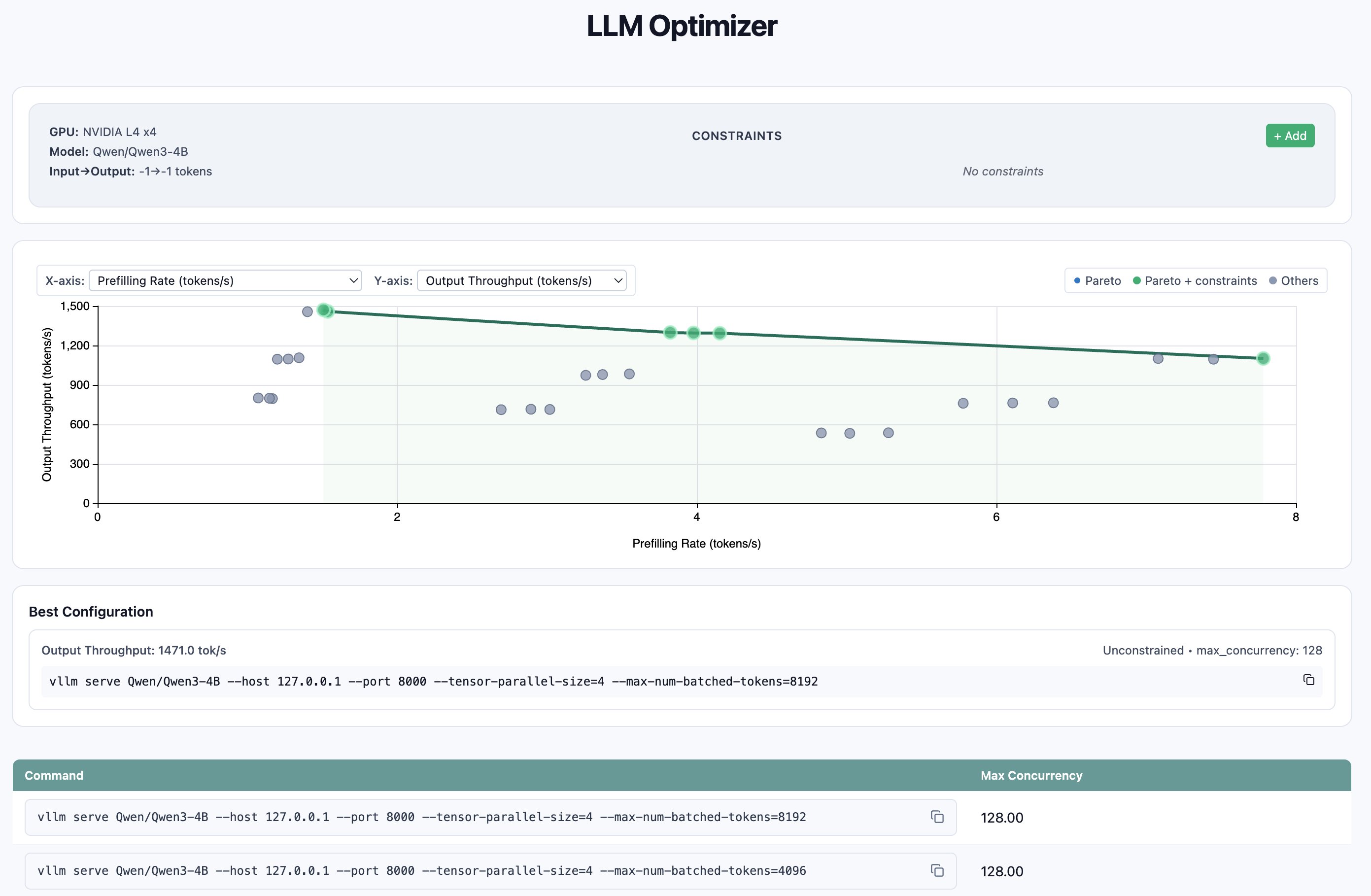

As soon as accomplished, three artifacts are generated:

- An HTML file containing a Pareto dashboard of the outcomes: An interactive visualization that highlights the trade-offs between latency and throughput throughout the examined configurations.

- A JSON file summarizing the benchmark outcomes: This compact output aggregates the important thing efficiency metrics (e.g., latency, throughput, GPU utilization) for every take a look at permutation and is used for programmatic evaluation or downstream automation.

- A JSONL file containing the total report of particular person benchmark runs: Every line represents a single take a look at configuration with detailed metadata, enabling fine-grained inspection, filtering, or customized plotting.

Instance benchmark report output:

Unpacking the benchmark outcomes, we will use the metrics p99 e2e latency and request throughput at numerous ranges of concurrency to make an knowledgeable determination. The benchmark outcomes revealed that tensor parallelism of 4 throughout the out there GPUs persistently outperformed decrease parallelism settings, with the optimum configuration being tensor_parallel_size=4, max_num_batched_tokens=8192, and max_concurrency=128, attaining 7.51 requests/second and a pair of,270 enter tokens/second—a 2.7x throughput enchancment over the naive single-GPU baseline (2.74 req/s).Whereas this configuration delivered peak throughput, it got here with elevated p99 end-to-end latency of 61.4 seconds beneath heavy load; for latency-sensitive workloads, the candy spot was tensor_parallel_size=4 with max_num_batched_tokens=4096 at reasonable concurrency (32), which maintained sub-24-second p99 latency whereas nonetheless delivering 5.63 req/s—greater than double the baseline throughput. The information demonstrates that transferring from a naive single-GPU setup to optimized 4-way tensor parallelism with tuned batch sizes can unlock substantial efficiency features, with the precise configuration selection relying on whether or not the deployment prioritizes most throughput or latency assurances.

To visualise the outcomes, LLM-Optimizer gives a handy perform to view the outputs plotted in a Pareto dashboard. The Pareto dashboard could be displayed with the next line of code:

With the proper artifacts now in hand, the mannequin with the proper configurations could be deployed.

Deploying to Amazon SageMaker AI

With the optimum serving parameters recognized by LLM-Optimizer, the ultimate step is to deploy the tuned mannequin into manufacturing. Amazon SageMaker AI gives a really perfect setting for this transition, abstracting away the infrastructure complexity of distributed GPU internet hosting whereas preserving fine-grained management over inference parameters. Through the use of LMI containers, builders can deploy open-source frameworks like vLLM at scale, with out managing CUDA dependencies, GPU scheduling, or load balancing manually.

SageMaker AI LMI containers are high-performance Docker pictures particularly designed for LLM inference. These containers combine natively with frameworks similar to vLLM and TensorRT, and supply built-in help for multi-GPU tensor parallelism, steady batching, streaming token era, and different optimizations important to low-latency serving. The LMI v16 container used on this instance consists of vLLM v0.10.2 and the V1 engine, supporting new mannequin architectures and bettering each latency and throughput in comparison with earlier variations.

Now that the perfect quantitative values for inference serving have been decided, these configurations could be handed on to the container as setting variables. (please refer right here for in-depth steerage):

When these setting variables are utilized, SageMaker robotically injects them into the container’s runtime configuration layer, which initializes the vLLM engine with the specified arguments. Throughout startup, the container downloads the mannequin weights from Hugging Face, configures the GPU topology for tensor parallel execution throughout the out there gadgets (on this case, on the ml.g6.12xlarge occasion), and registers the mannequin with the SageMaker Endpoint Runtime. This makes certain that the mannequin runs with the identical optimized settings validated by LLM-Optimizer, bridging the hole between experimentation and manufacturing deployment.

The next code demonstrates methods to bundle and deploy the mannequin for real-time inference on SageMaker AI:

As soon as the mannequin assemble is created, you possibly can create and activate the endpoint:

After deployment, the endpoint is able to deal with dwell visitors and could be invoked instantly for inference:

These code snippets show the deployment movement conceptually. For a whole end-to-end pattern on deploying an LMI container for actual time inference on SageMaker AI, check with this instance.

Conclusion

The journey from mannequin choice to manufacturing deployment now not must depend on trial and error. By combining BentoML’s LLM-Optimizer with Amazon SageMaker AI, organizations can now transfer from speculation to deployment by a data-driven, automated optimization loop. This workflow replaces handbook parameter tuning with a repeatable course of that quantifies efficiency trade-offs, aligns with business-level latency and throughput targets, and deploys the perfect configuration instantly right into a managed inference setting. This workflow addresses a important problem in manufacturing LLM deployment: with out systematic optimization, groups face an costly guessing sport between over-provisioning GPU assets and risking degraded consumer expertise. As demonstrated on this walkthrough, the efficiency variations are substantial—misconfigured setups can require 2-4x extra GPUs whereas delivering 2-3x greater latency. What might historically take an engineer days or perhaps weeks of handbook trial-and-error testing turns into a couple of hours of automated benchmarking. By combining LLM-Optimizer’s clever configuration search with SageMaker AI’s managed infrastructure, groups could make data-driven deployment selections that instantly affect each cloud prices and consumer satisfaction, focusing their efforts on constructing differentiated AI experiences reasonably than tuning inference parameters.

The mix of automated benchmarking and managed large-model deployment represents a big step ahead in making enterprise AI each accessible and economically environment friendly. By leveraging LLM-Optimizer for clever configuration search and SageMaker AI for scalable, fault-tolerant internet hosting, groups can deal with constructing differentiated AI experiences reasonably than managing infrastructure or tuning inference stacks manually. Finally, the perfect LLM configuration isn’t simply the one which runs quickest—it’s the one which meets particular latency, throughput, and price targets in manufacturing. With BentoML’s LLM-Optimizer and Amazon SageMaker AI, that steadiness could be found systematically, reproduced persistently, and deployed confidently.

Further assets

Concerning the authors

Josh Longenecker is a Generative AI/ML Specialist Options Architect at AWS, partnering with prospects to architect and deploy cutting-edge AI/ML options. He’s a part of the Neuron Knowledge Science Knowledgeable TFC and keen about pushing boundaries within the quickly evolving AI panorama. Outdoors of labor, you’ll discover him on the fitness center, outside, or having fun with time along with his household.

Josh Longenecker is a Generative AI/ML Specialist Options Architect at AWS, partnering with prospects to architect and deploy cutting-edge AI/ML options. He’s a part of the Neuron Knowledge Science Knowledgeable TFC and keen about pushing boundaries within the quickly evolving AI panorama. Outdoors of labor, you’ll discover him on the fitness center, outside, or having fun with time along with his household.

Mohammad Tahsin is a Generative AI/ML Specialist Options Architect at AWS, the place he works with prospects to design, optimize, and deploy fashionable AI/ML options. He’s keen about steady studying and staying on the frontier of latest capabilities within the discipline. In his free time, he enjoys gaming, digital artwork, and cooking.

Mohammad Tahsin is a Generative AI/ML Specialist Options Architect at AWS, the place he works with prospects to design, optimize, and deploy fashionable AI/ML options. He’s keen about steady studying and staying on the frontier of latest capabilities within the discipline. In his free time, he enjoys gaming, digital artwork, and cooking.