Deploying AI agents safely in regulated industries is difficult. Without proper boundaries, agents that access sensitive data or execute transactions can pose significant security risks. Unlike traditional software, an AI agent chooses actions to achieve a goal by invoking tools, accessing data, and adapting its reasoning using data from its environment and users. This autonomy is precisely what makes agents so powerful and what makes security a non-negotiable concern.

A useful mental model for agent safety is to isolate the agent from the outside world. To do this, think of walls around an agent that define what the agent can access, what it can interact with, and what effects it can have on the outside world. Without a well-defined wall, an agent that can send emails, query databases, execute code, or trigger financial transactions is risky. These capabilities can expose you to data exfiltration, unintended access to code or infrastructure, or prompt injection attacks. How can you enforce AI safety reliably, at scale, without slowing down innovation? Policy in Amazon Bedrock AgentCore gives you a principled way to enforce these boundaries at runtime, at scale.

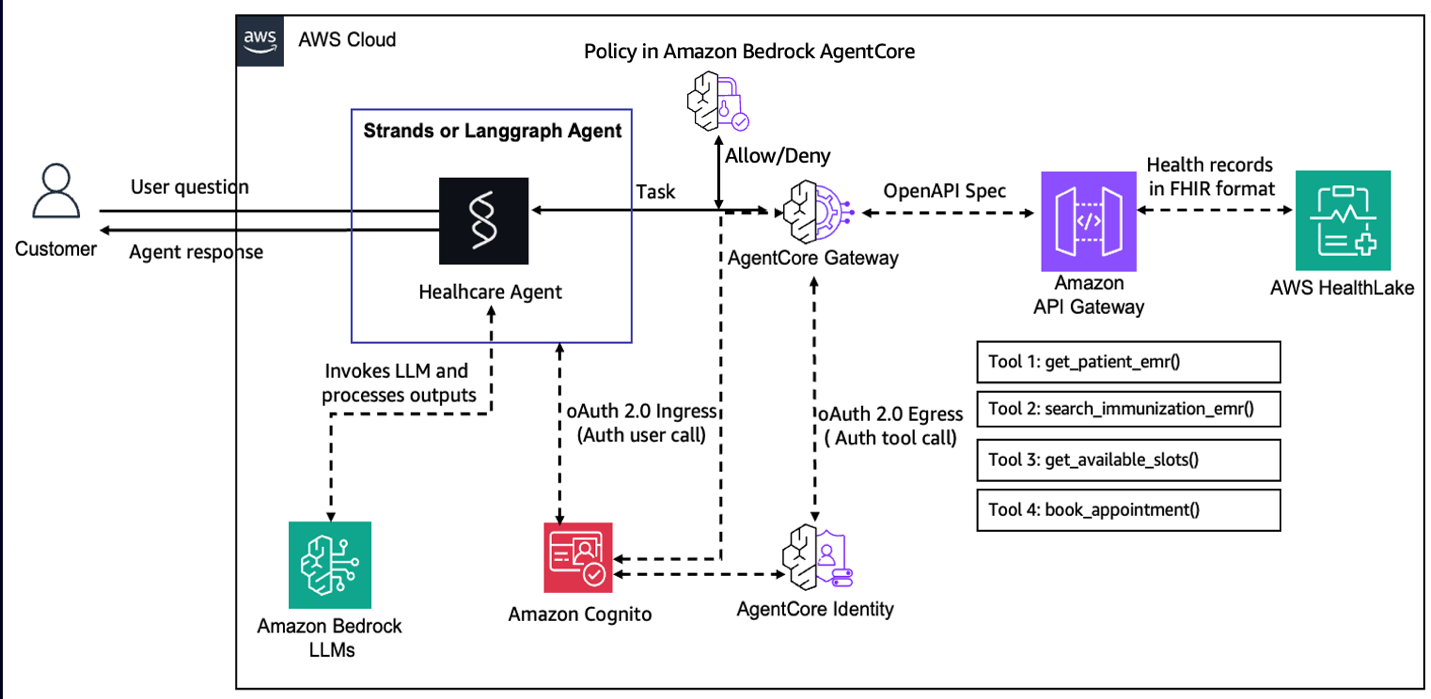

In this post, we use a healthcare appointment scheduling agent to show how Policy in Amazon Bedrock AgentCore creates a deterministic enforcement layer that operates independently of the agent’s own reasoning. Healthcare is a natural fit for this exploration. Agents operating in this domain must handle sensitive patient data, respect strict access boundaries, and enforce business rules consistently. This requires deterministic policy enforcement to maintain the safety of patient data and secure runtime operations. The full sample is available on GitHub at amazon-bedrock-agentcore-samples.

You’ll learn how to turn natural language descriptions of your business rules into Cedar policies, then use those policies to enforce fine-grained, identity-aware controls so that agents only access the tools and data that their users are authorized to use. You will also see how to apply Policy through AgentCore Gateway, intercepting and evaluating every agent-to-tool request at runtime.

The missing layer: Why agents need external policy enforcement

Securing AI agents is harder than securing traditional software. The things that make agents powerful, such as open-ended reasoning, flexible tool use, and adaptability to new situations, also create unpredictable behaviors that are much harder to safely control. Agents rely on large language model (LLM) inference, which can hallucinate and has no built-in hard separation between trusted instructions and incidental text. This makes agents vulnerable to prompt injection and related adversarial attacks that exploit these LLM weaknesses to override safeguards and subvert intended behavior.

These risks are often managed by constraining the agent in code. This works, but it carries subtle costs. The agent’s behavior is now only as safe as the correctness of that wrapper code, which has become an implicit security boundary. Reasoning about whether the policy is complete requires careful code analysis across a potentially large code base. When a security team needs to audit whether the right rules are in place, they are reading application code rather than a clear, auditable policy definition.

Policy in Amazon Bedrock AgentCore takes a different approach: move the policy completely outside the agent. The policy is enforced at AgentCore Gateway, before the agent’s tool invocation is executed. As a result, policy is enforced regardless of what the agent does, regardless of how the agent is prompted or manipulated, and regardless of what bugs exist in the agent code itself. With this separation, you can focus on capability without treating every line of tool-calling code as a potential security boundary.

Cedar: Language for deterministic policy enforcement

Unlike embedding rules in agent code, external policy enforcement provides auditable security boundaries and separates capability development from security concerns. To make this kind of deterministic external enforcement practical, you need a policy language that is both machine-efficient and human-auditable. Cedar, used by Policy in Amazon Bedrock AgentCore, provides this missing layer by turning security intent into precise, analyzable policies.

Cedar is the authorization language used in AgentCore Policy because it was designed to be both a practical authorization language and a target for automated mathematical analysis. Each policy specifies a principal (who), action (what), and resource (where), with conditions in the when clause. The following example shows how to permit only Alice to view the vacation photo:

    permit(
      principal == User::"alice",
      action == Action::"view",
      resource == Photo::"VacationPhoto94.jpg"
    );

In addition to attributes on principals, resources, and actions, you can use Cedar to pass a context object whose attributes (for example, readOnly) can be referenced in policies to condition decisions on runtime information. The following example shows how you might create a policy that allows the user alice to perform any action on any resource, as long as the request context marks it as readOnly:

    permit(
      principal == PhotoFlash::User::"alice",
      action,
      resource
    ) when {
      context has readOnly && context.readOnly == true
    };

Cedar’s semantics, including default deny, forbid wins over permit, and order-independent evaluation, make it straightforward to reason about policies compositionally. Evaluation latency is also minimal because of the restricted, loop-free structure. Cedar policies do not have side effects such as file system access, system calls, or networking, so they can be safely evaluated without sandboxing, even when written by untrusted authors.
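To make the three semantic rules concrete, here is a minimal Python sketch of how matching policies combine into a single decision. It is an illustrative toy model, not the Cedar engine: the `Policy` class, the `decide` function, and the dictionary request shape are all assumptions introduced for this example.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Policy:
    """Hypothetical stand-in for a Cedar policy (not the Cedar data model)."""
    effect: str                      # "permit" or "forbid"
    matches: Callable[[dict], bool]  # True when scope and conditions match


def decide(policies: Iterable[Policy], request: dict) -> str:
    """Combine matching policies: forbid wins, otherwise permit, else deny."""
    # Collecting effects into a set makes the result independent of order.
    effects = {p.effect for p in policies if p.matches(request)}
    if "forbid" in effects:
        return "DENY"   # any matching forbid overrides every permit
    if "permit" in effects:
        return "ALLOW"  # at least one permit and no forbid
    return "DENY"       # default deny: nothing matched


# Illustrative policies (hypothetical, loosely modeled on the examples above).
alice_any = Policy("permit", lambda r: r["principal"] == "alice")
no_delete = Policy("forbid", lambda r: r["action"] == "delete")
```

With these two policies, a `view` request from alice is allowed, a `delete` request from alice is denied regardless of the order in which the policies are listed, and any request from another principal is denied because no permit matches.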

Policy in Amazon Bedrock AgentCore

Building on the need for deterministic, external enforcement, Policy in Amazon Bedrock AgentCore provides the concrete mechanism that evaluates every agent-to-tool request against explicitly defined Cedar policies. A policy engine is a collection of policies expressed in Cedar. To make policy authoring accessible, policies can be authored directly as Cedar for fine-grained programmatic control or generated from plain English statements that are automatically formalized into Cedar. Starting from natural language, the service generates syntactically correct Cedar code, validates it against the gateway schema, and analyzes it to surface overly permissive, overly restrictive, or otherwise problematic policies before they are enforced. Once policies are defined, Policy in AgentCore intercepts agent traffic through Amazon Bedrock AgentCore Gateways, evaluating every agent-to-tool request against the policies in the policy engine before granting or denying tool access.

Applying policy to a healthcare appointment scheduling agent

To make these concepts concrete, let’s walk through how Policy in Amazon Bedrock AgentCore can be applied to a healthcare appointment scheduling agent. This is an AI system that helps users inquire about current immunization status and schedule, checks appointment slots, and books appointments. We want to secure the AI system using Policy to help prevent unauthorized access to patient records, inadvertent exposure of protected health information (PHI), or a patient canceling another patient’s appointment.

Policy in Amazon Bedrock AgentCore has a default-deny posture, and forbid automatically wins over permit. In addition to these principles, we show how policies can be composed from a small set of readable, auditable Cedar rules to improve the safety of the AI agent in production.

Getting started

To try this example yourself, start by cloning the Amazon Bedrock AgentCore samples repository and navigating to the healthcare appointment agent folder.

From there, follow the setup and deployment instructions in the README for this directory to configure your AWS environment, deploy the sample stack, and invoke the agent end-to-end.

Prerequisites

To use Policy in Amazon Bedrock AgentCore with your agentic application, verify that you have met the following prerequisites:

- An active AWS account

- Confirmation of the AWS Regions where Policy in AgentCore is available

- Appropriate IAM permissions to create, test, and attach a policy engine to your AgentCore Gateway. (Note: For production usage, the IAM policy should be fine-grained and restricted to the necessary resources using proper ARN patterns):

    [
      {
        "Sid": "PolicyEngineManagement",
        "Effect": "Allow",
        "Action": [
          "bedrock-agentcore:CreatePolicyEngine",
          "bedrock-agentcore:UpdatePolicyEngine",
          "bedrock-agentcore:GetPolicyEngine",
          "bedrock-agentcore:DeletePolicyEngine",
          "bedrock-agentcore:ListPolicyEngines"
        ],
        "Resource": "arn:aws:bedrock-agentcore:${aws:region}:${aws:accountId}:policy-engine/*"
      },
      {
        "Sid": "CedarPolicyManagement",
        "Effect": "Allow",
        "Action": [
          "bedrock-agentcore:CreatePolicy",
          "bedrock-agentcore:GetPolicy",
          "bedrock-agentcore:ListPolicies",
          "bedrock-agentcore:UpdatePolicy",
          "bedrock-agentcore:DeletePolicy"
        ],
        "Resource": "arn:aws:bedrock-agentcore:${aws:region}:${aws:accountId}:policy-engine/*/policy/*"
      },
      {
        "Sid": "NaturalLanguagePolicyGeneration",
        "Effect": "Allow",
        "Action": [
          "bedrock-agentcore:StartPolicyGeneration",
          "bedrock-agentcore:GetPolicyGeneration",
          "bedrock-agentcore:ListPolicyGenerations",
          "bedrock-agentcore:ListPolicyGenerationAssets"
        ],
        "Resource": "arn:aws:bedrock-agentcore:${aws:region}:${aws:accountId}:policy-engine/*/policy-generation/*"
      },
      {
        "Sid": "AttachPolicyEngineToGateway",
        "Effect": "Allow",
        "Action": [
          "bedrock-agentcore:UpdateGateway",
          "bedrock-agentcore:GetGateway",
          "bedrock-agentcore:ManageResourceScopedPolicy",
          "bedrock-agentcore:ManageAdminPolicy"
        ],
        "Resource": "arn:aws:bedrock-agentcore:${aws:region}:${aws:accountId}:gateway/*"
      }
    ]

Replace ${aws:region} and ${aws:accountId} with your AWS Region and account ID.

Policy implementation steps

- To get started, first create a policy engine that will hold the policies that we will create for the healthcare appointment scheduling agent.

- There are three different options to author policies. You can generate Cedar statements from rules expressed in natural language, use a form-based approach to Cedar policy creation, or directly provide the Cedar statement. In the next section, we will look at some examples that cover these authoring options.

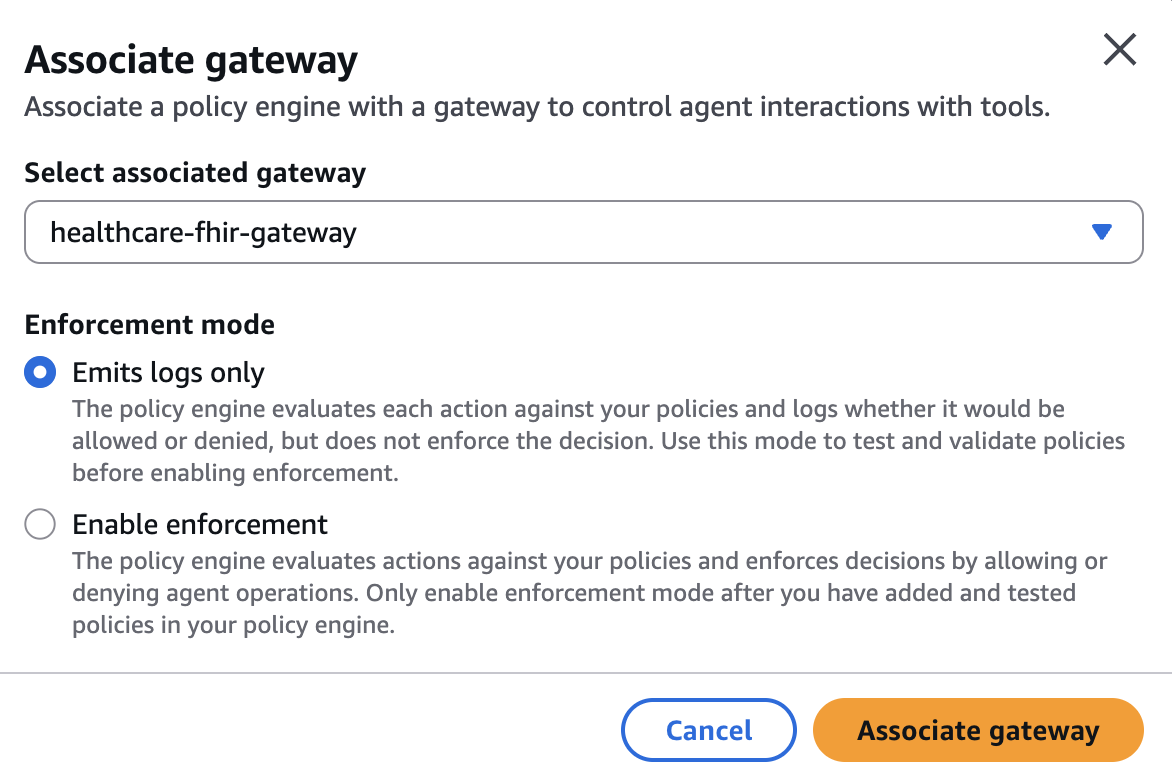

- Finally, the policy engine can be associated with a gateway. This can be done in LOG_ONLY mode to validate how policies work for your agent without disrupting production traffic, by observing the behavior recorded in the observability logs. When you are confident that the agent has the expected behavior, you can associate the policy engine with the gateway in enforce mode.
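The difference between the two association modes can be pictured with a small Python sketch. This is a conceptual model only and does not call any AWS API: the `gate` function and the `"ENFORCE"` mode string are hypothetical names chosen for illustration, and only `LOG_ONLY` appears in the text above.

```python
import logging

logger = logging.getLogger("policy-mode-sketch")

# Mode names for this sketch; "ENFORCE" is an assumed label for enforce mode.
MODES = ("LOG_ONLY", "ENFORCE")


def gate(decision: str, mode: str) -> bool:
    """Return True if the tool call should be forwarded to the tool."""
    if mode not in MODES:
        raise ValueError(f"unknown mode: {mode!r}")
    # In both modes the decision is recorded so it can be reviewed later.
    logger.info("policy decision=%s mode=%s", decision, mode)
    if mode == "LOG_ONLY":
        return True  # observe only: traffic is never blocked
    return decision == "ALLOW"  # enforce: only allowed calls go through
```

In this model, a DENY decision in `LOG_ONLY` mode is logged but the call still proceeds, which is what lets you compare recorded decisions against expected behavior before switching to enforcement.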

The healthcare appointment agent has the following tools:

- getPatient (GET /get_patient_emr): Get a patient record by the required query parameter patient_id (string).

- searchImmunization (POST /search_immunization_emr): Search immunization records with the required parameter search_value (string; patient ID).

- bookAppointment (POST /book_appointment): Book an appointment by providing date_string (string; “YYYY-MM-DD HH:MM”).

- getSlots (GET /get_available_slots): Get available slots by the required query parameter date_string (string; “YYYY-MM-DD”).

Now let’s author some policies for this agent to secure how the agent accesses these tools!

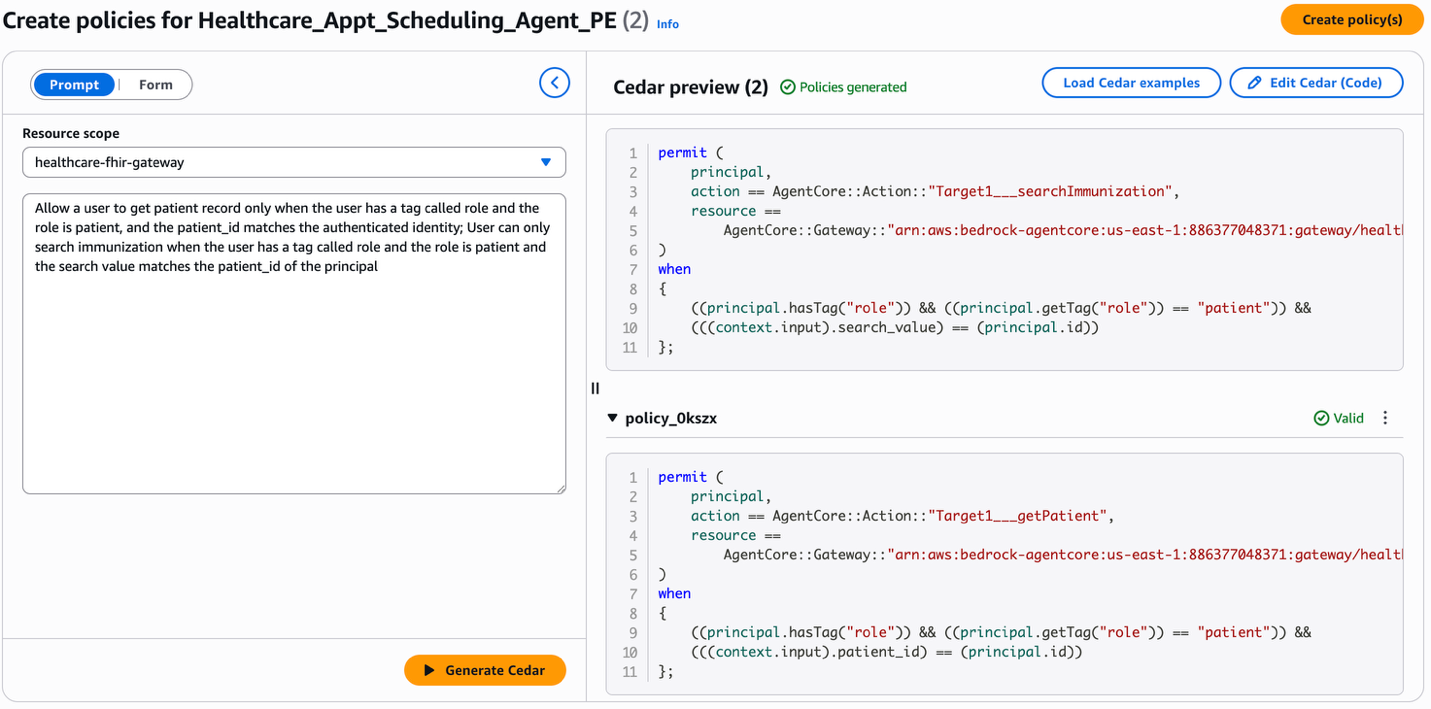

Identity-based policies

A fundamental rule in a healthcare agent is that patients should only be able to act on their own records. We want to create a policy that permits a patient to read their own patient and immunization records. For each tool, you can state that the tool parameter (patient_id in the case of the getPatient tool, search_value in the case of the searchImmunization tool) must match the authenticated ID of the user. In the following image, we show how you can author these policies by using statements in natural language and generating the corresponding Cedar policies.

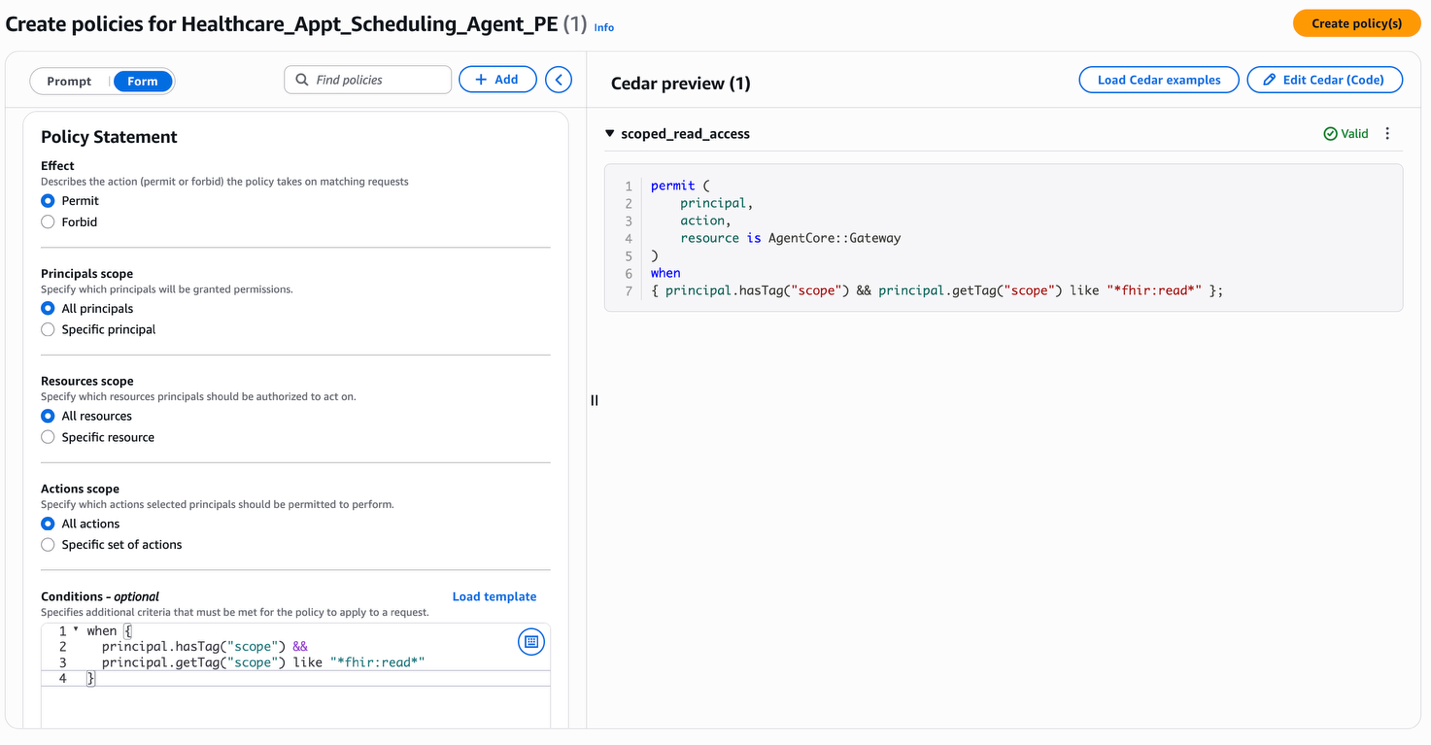

Read vs. write separation

A common pattern in healthcare systems is to allow broad read access while tightly restricting write operations. An example policy for this agent would be to make reads possible only for an authenticated user with a suitable scope (for example, fhir:read) and keep the writes separately controlled. In the following image, you can see how the form-based policy creation works. You can choose the effect, principal, resource, and action, and provide the conditions to create a policy. The image shows the creation of a policy that allows users to access tools in the healthcare application’s gateway only when the principal’s scope contains fhir:read.

As with this policy for scoped read access, you can use an appointment:write scope on the principal to control write access.

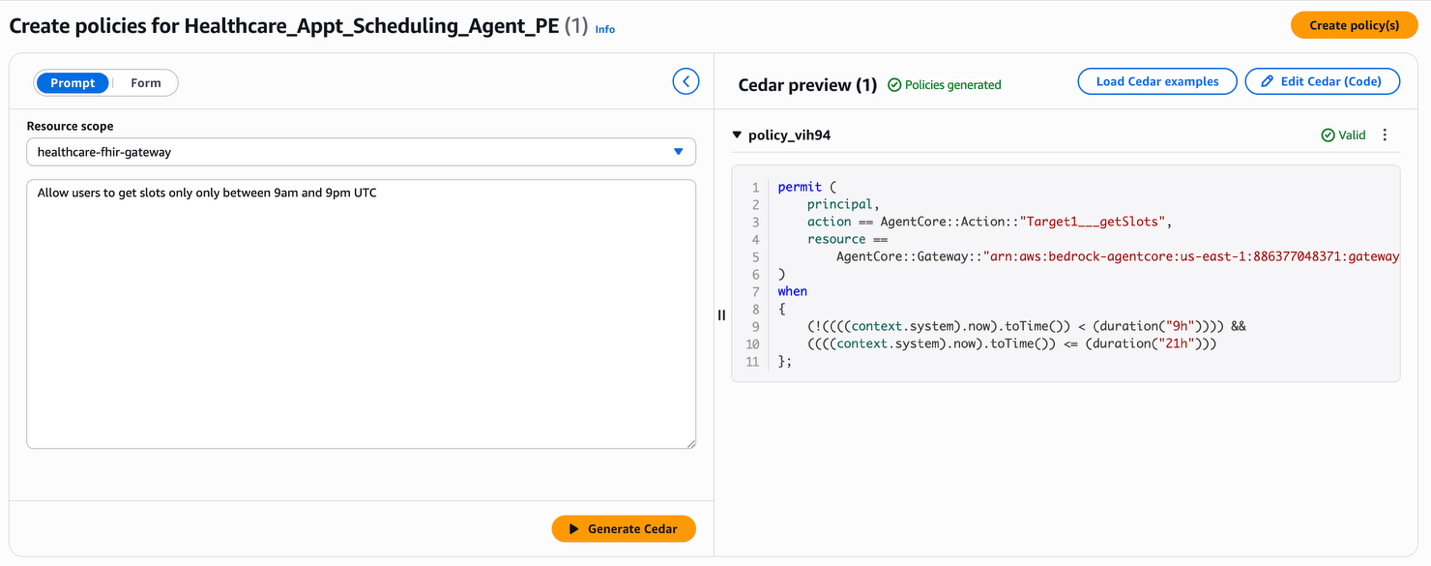
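The scope conditions described for read and write access can be sketched in Python as a simple membership test. This is an illustration under stated assumptions, not the generated Cedar policy: it assumes the principal's scope is a space-delimited string (the usual OAuth 2.0 convention) and matches whole scope tokens, and the `has_scope` helper is a hypothetical name.

```python
def has_scope(scope_claim: str, required: str) -> bool:
    """Return True when the required scope is one of the granted scopes.

    Assumes a space-delimited scope string; matching is on whole tokens,
    so "fhir:readonly" does not satisfy a check for "fhir:read".
    """
    return required in scope_claim.split()


def may_read(scope_claim: str) -> bool:
    # Read tools require the fhir:read scope named in the text.
    return has_scope(scope_claim, "fhir:read")


def may_write(scope_claim: str) -> bool:
    # Write tools are controlled separately through appointment:write.
    return has_scope(scope_claim, "appointment:write")
```

Keeping the two checks separate mirrors the read versus write split: a principal holding only `fhir:read` can look up records but cannot book appointments.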

Risk controls on scheduling

Beyond access control, Policy can enforce business rules and block dangerous patterns to help prevent abuse. By using explicit forbids to hard-stop dangerous input patterns to tools, you can secure your application even when the agent is compromised. For example, let us create a policy to block showing appointment slots outside of permitted hours. In the following image, you can see that the natural language statement “Allow users to get slots only between 9 am and 9 pm UTC” is converted to the Cedar policy.

You can now build more policies for your use case and create a coherent policy set to establish a complete security posture. The policy engine evaluation model is default-deny: if no permit policy matches a request, it is blocked. Use broad permit rules for common, low-risk operations. Add targeted forbid rules for high-risk tools, sensitive data boundaries, and known abuse vectors. This layered approach helps you create a policy set that is both readable by a security auditor and enforceable at runtime, independent of what the agent’s LLM reasoning produces. Here, the agent does not need to “know” these rules. It does not need to be prompted to respect them, fine-tuned to follow them, or trusted to apply them correctly under adversarial conditions. Policy in Amazon Bedrock AgentCore enforces them at the gateway, before tool call execution, regardless of how the agent arrived at its decision.

Testing policy enforcement

Let us now examine the behavior of the policies we have created and associated with the gateway for the healthcare appointment scheduling agent. The following two test cases demonstrate the identity-scoped access policy in action when the agent invokes the getPatient tool on behalf of a user. In both cases, the authenticated user is patient adult-patient-001. The Cedar policy being tested is the patient read-only access rule. This rule permits the getPatient tool call only when the `patient_id` in the request matches the authenticated user’s identity:

    permit(
      principal,
      action == AgentCore::Action::"Target1___getPatient",
      resource == AgentCore::Gateway::"{gateway_arn}"
    ) when {
      principal.hasTag("role") &&
      principal.getTag("role") == "patient" &&
      context.input has patient_id &&
      principal.hasTag("patient_id") &&
      context.input.patient_id == principal.getTag("patient_id")
    };

Test 1a (ALLOW): Patient accessing their own record

The agent sends a tools/call request to the gateway to invoke getPatient with the tool parameter patient_id set to adult-patient-001. Because the patient_id in the tool input matches the authenticated user’s patient_id claim, the Cedar policy permits the request:

Prompt: “Get my patient information for patient ID adult-patient-001”

Policy decision: ALLOW

Result: Patient record returned successfully

Test 1b (DENY): Patient accessing another patient’s record

Now the agent sends the same tools/call request for getPatient, with patient_id set to pediatric-patient-001. The Cedar policy compares the tool input against the authenticated user’s patient_id claim, finds a mismatch, and the request is denied because there is no matching permit policy.

Prompt: “Get patient information for patient ID pediatric-patient-001”

Policy decision: DENY

Result: Tool execution denied by policy enforcement
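The two identity test cases can be reproduced with a short Python sketch that mirrors the conditions of the Cedar policy under test. It is a plain-Python approximation for reasoning about the outcomes, not how the gateway evaluates Cedar: the `permits_get_patient` function and the dictionary shapes for principal tags and tool input are assumptions made for this example.

```python
def permits_get_patient(principal_tags: dict, tool_input: dict) -> bool:
    """Approximate the when-clause of the getPatient permit policy."""
    return (
        principal_tags.get("role") == "patient"   # caller has the patient role
        and "patient_id" in tool_input             # request carries a patient_id
        and "patient_id" in principal_tags         # identity carries a patient_id
        and tool_input["patient_id"] == principal_tags["patient_id"]
    )


def decision(principal_tags: dict, tool_input: dict) -> str:
    # Default deny: without a matching permit, the call is blocked.
    return "ALLOW" if permits_get_patient(principal_tags, tool_input) else "DENY"


# Authenticated user from the test cases.
user = {"role": "patient", "patient_id": "adult-patient-001"}
```

Calling `decision(user, {"patient_id": "adult-patient-001"})` yields ALLOW, while the same call with `pediatric-patient-001` yields DENY, matching Test 1a and Test 1b.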

The same agent code, the same model, and the same tool are used in both cases. The only difference is the policy evaluation at the gateway boundary. The tool is protected from the denied request because the gateway intercepts it before execution.

The following example demonstrates a different enforcement pattern: a forbid rule that blocks scheduling operations outside of permitted hours. The earlier Risk controls on scheduling section used natural language to generate a permit rule that allows slot access within the 9 AM to 9 PM window. The same constraint can be expressed as a forbid that explicitly blocks requests outside that window. Both approaches produce the same enforcement outcome; we use the forbid form to illustrate how Cedar’s “forbid always wins” semantics can hard-stop dangerous patterns:

    forbid(
      principal,
      action == AgentCore::Action::"Target1___getSlots",
      resource == AgentCore::Gateway::"{gateway_arn}"
    ) when {
      context.time.hour < 9 || context.time.hour > 21
    };

Test 2a (ALLOW): Requesting slots during permitted hours

The agent requests available slots for a date during clinic hours (for example, 2 PM UTC). This policy’s when clause does not match because the request hour falls within the 9 to 21 range, so the request proceeds to the corresponding permit policy and succeeds.

Prompt: “Show me available appointment slots for 2025-08-15” (requested at 14:00 UTC)

Policy decision: ALLOW

Result: Available slots returned for the requested date

Test 2b (DENY): Requesting slots outside permitted hours

The agent makes the same request, but at 3 AM UTC. The forbid rule matches because the request hour is less than 9, and because forbid wins over permit in Cedar, the request is blocked regardless of the other permit policies.

Prompt: “Show me available appointment slots for 2025-08-15” (requested at 03:00 UTC)

Policy decision: DENY

Result: Tool execution denied by policy enforcement
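The time-based test cases can be checked the same way. The sketch below assumes the forbid condition covers request hours before 9 and after 21 UTC, as the two test cases describe; the function names are hypothetical, and the `permit_matches` flag stands in for whatever permit policy would otherwise allow the getSlots call.

```python
def forbid_outside_hours(hour_utc: int) -> bool:
    """True when the request hour falls outside the 9 to 21 range."""
    return hour_utc < 9 or hour_utc > 21


def get_slots_decision(hour_utc: int, permit_matches: bool = True) -> str:
    """Combine the forbid rule with a permit, with forbid taking priority."""
    if forbid_outside_hours(hour_utc):
        return "DENY"  # forbid wins even if a permit also matches
    return "ALLOW" if permit_matches else "DENY"  # default deny otherwise
```

Here `get_slots_decision(14)` corresponds to Test 2a and `get_slots_decision(3)` to Test 2b. Note that under this condition a request at hour 21 is still allowed, because only hours strictly greater than 21 are forbidden.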

Together, these test cases illustrate both enforcement patterns: permit with identity-scoped conditions and forbid with time-based restrictions. This is what makes external policy enforcement deterministic: the outcome depends on the policy definition and the request context, not on the agent’s reasoning.

Clean up resources

To avoid ongoing charges, delete the Amazon Bedrock AgentCore policies, remove test agents, and clean up any associated resources using the provided CDK destroy commands and the policy cleanup script. To run the cleanup script:

    python policy/setup_policy.py --cleanup

Conclusion

AI agents are only as trustworthy as the boundaries that contain them. Those boundaries must be enforced deterministically. Policy in Amazon Bedrock AgentCore gives you a principled way to define those boundaries and enforce them at the gateway layer on every agent-to-tool request. These policies are enforced independently of the agent’s reasoning and are auditable by anyone on your security team. For enterprises deploying agents in regulated industries, this separation between capability and enforcement is the foundation that makes production-grade agentic systems possible. In the next post in this series, we’ll dive into the key differences between Lambda interceptors and Policy in AgentCore Gateway, and walk through architectural patterns for using each individually and together to build robust, governed agentic applications.

Next steps

Ready to add deterministic policy enforcement to your own agents? These resources will get you up and running quickly:

- The Policy Developer Guide covers Cedar policy authoring, natural language to Cedar formalization, AWS KMS encryption, and CDK constructs for infrastructure as code (IaC) deployments.

- The AgentCore Getting Started workshop walks you through building and securing an agent end-to-end, including integrating Policy with AgentCore Gateway.

Have questions about implementing these policies in your specific use case? Share your thoughts in the comments on this post or connect with the community in the AWS forums.

Acknowledgement

Special thanks to everyone who contributed to the launch: teams led by Pushpinder Dua, Raja Kapur, Sean Eichenberger, Jean-Baptiste Tristan, Sandesh Swamy, Maira Ladeira Tanke, Tanvi Girinath, and Amanda Lester.

About the authors