This blog post is based on work co-developed with Flo Health.

Healthcare science is rapidly advancing. Maintaining accurate and up-to-date medical content directly impacts people's lives, health decisions, and well-being. When someone searches for health information, they are often at their most vulnerable, making accuracy not just important, but potentially life-saving.

Flo Health creates thousands of medical articles every year, providing millions of users worldwide with medically credible information on women's health. Verifying the accuracy and relevance of this vast content library is a significant challenge. Medical knowledge evolves continuously, and manual review of each article is not only time-consuming but also prone to human error. This is why the team at Flo Health, the company behind the leading women's health app Flo, is using generative AI to facilitate medical content accuracy at scale. Through a partnership with the AWS Generative AI Innovation Center, Flo Health is developing an innovative approach, referred to as the "Medical Automated Content Review and Revision Optimization Solution" (MACROS), to verify and maintain the accuracy of its extensive health information library. This AI-powered solution is capable of:

- Efficiently processing large volumes of medical content based on credible scientific sources.

- Identifying potential inaccuracies or outdated information based on credible scientific sources.

- Proposing updates based on the latest medical research and guidelines, as well as incorporating user feedback.

The system, powered by Amazon Bedrock, enables Flo Health to conduct medical content reviews and revision assessments at scale, ensuring up-to-date accuracy and supporting more informed healthcare decision-making. It performs detailed content analysis, providing comprehensive insights on adherence to medical standards and guidelines for Flo's medical experts to review. It is also designed for seamless integration with Flo's existing tech infrastructure, facilitating automatic updates where appropriate.

This two-part series explores Flo Health's journey with generative AI for medical content verification. Part 1 examines our proof of concept (PoC), including the initial solution, capabilities, and early results. Part 2 covers scaling challenges and real-world implementation. Each article stands alone while collectively showing how AI transforms medical content management at scale.

Proof of Concept goals and success criteria

Before diving into the technical solution, we established clear objectives for our PoC medical content review system:

Key Objectives:

- Validate the feasibility of using generative AI for medical content verification

- Determine accuracy levels compared to manual review

- Assess processing time and cost improvements

Success Metrics:

- Accuracy: Content piece recall of 90%

- Efficiency: Reduce detection time from hours to minutes per guideline

- Cost Reduction: Reduce expert review workload

- Quality: Maintain Flo's editorial standards and medical accuracy

- Speed: 10x faster than the manual review process

To verify that the solution meets Flo Health's high standards for medical content, Flo Health's medical experts and content teams worked closely with AWS technical specialists through regular review sessions, providing critical feedback and medical expertise to continuously enhance the AI model's performance and accuracy. The result is MACROS, our custom-built solution for AI-assisted medical content verification.

Solution overview

In this section, we outline how the MACROS solution uses Amazon Bedrock and other AWS services to automate medical content review and revisions.

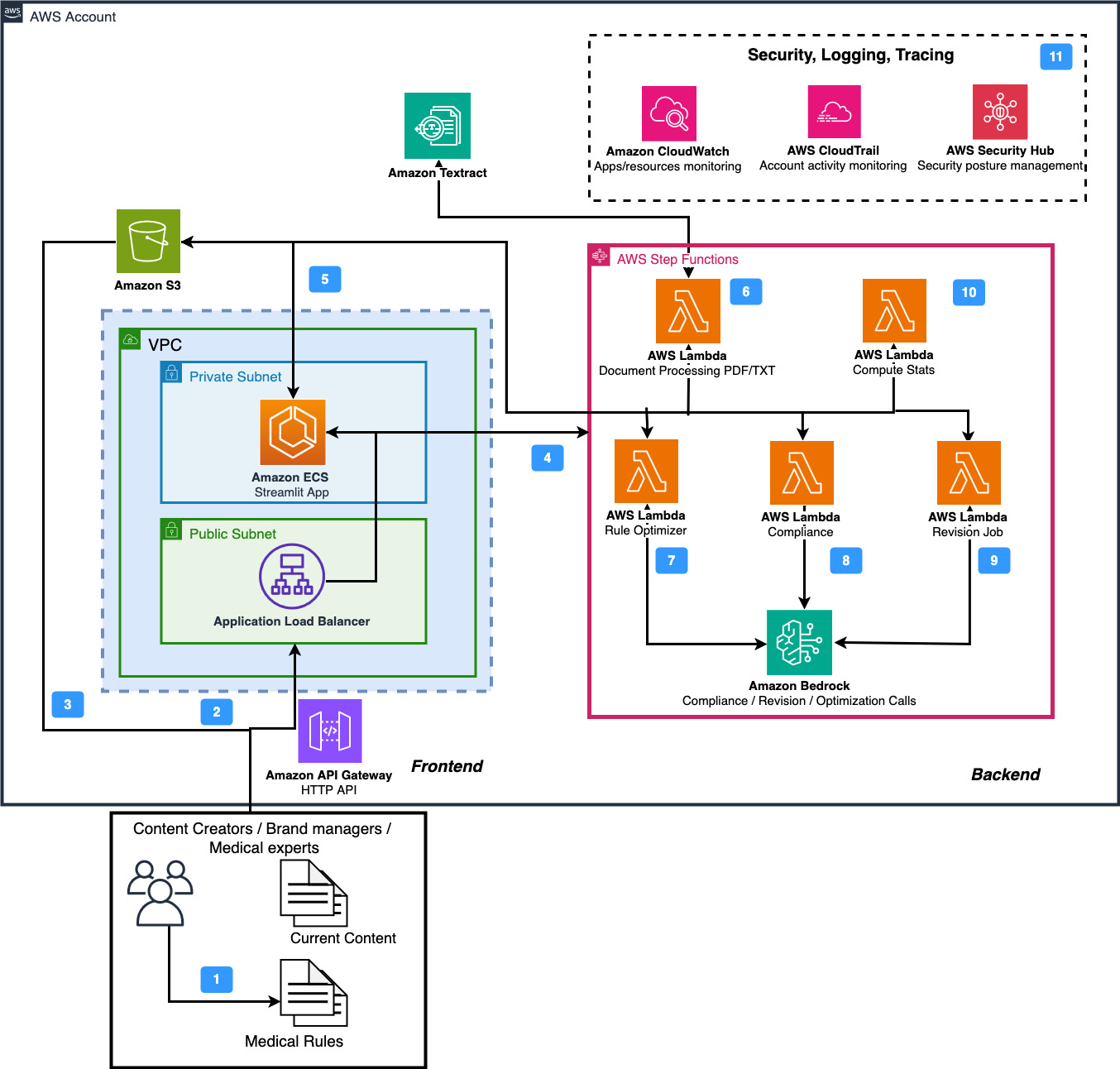

Figure 1. Medical Automated Content Review and Revision Optimization Solution Overview

As shown in Figure 1, the developed solution supports two major processes:

- Content Review and Revision: Enables checking the medical standards and style adherence of existing medical articles at scale against pre-specified custom rules and guidelines, and proposes a revision that conforms to the new medical standards as well as Flo's style and tone guidelines.

- Rule Optimization: MACROS accelerates the process of extracting new (medical) guidelines from (medical) research, pre-processing them into the format needed for content review, and optimizing their quality.

Both processes can be run through the user interface (UI) as well as through a direct API call. The UI enables medical experts to directly see the content review statistics, interact with changes, and make manual adjustments. The API is intended for integration into a pipeline for periodic assessment.

Architecture

Figure 2 depicts the architecture of MACROS. It consists of two major parts: backend and frontend.

Figure 2. MACROS architecture

The flow through the major app components is as follows:

1. Users begin by gathering and preparing content that must meet medical standards and rules.

2. In the second step, the data is provided as PDF or TXT files, or as text, through the Streamlit UI that is hosted in Amazon Elastic Container Service (ECS). The authentication for file upload happens through Amazon API Gateway.

3. Alternatively, custom Flo Health JSON files can be uploaded directly to the Amazon Simple Storage Service (S3) bucket of the solution stack.

4. The ECS-hosted frontend has AWS IAM permissions to orchestrate tasks using AWS Step Functions.

5. Further, the ECS container has access to S3 for listing, downloading, and uploading files either via pre-signed URL or boto3.

6. Optionally, if the input file is uploaded via the UI, the solution invokes AWS Step Functions, which starts the pre-processing functionality hosted by an AWS Lambda function. This Lambda function has access to Amazon Textract for extracting text from PDF files. The files are stored in S3 and also returned to the UI.

7-9. Hosted on AWS Lambda, the Rule Optimizer, Content Review, and Revision functions are orchestrated via AWS Step Functions. They have access to Amazon Bedrock for generative AI capabilities to perform rule extraction from unstructured data, content review, and revision, respectively. Furthermore, they have access to S3 via the boto3 SDK to store the results.

10. The Compute Stats AWS Lambda function has access to S3 and can read and combine the results of individual revision and review runs.

11. The solution uses Amazon CloudWatch for system monitoring and log management. For production deployments dealing with critical medical content, the monitoring capabilities could be extended with custom metrics and alarms to provide more granular insights into system performance and content processing patterns.
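To make the orchestration step more concrete, here is a minimal sketch of how a frontend could start a review workflow through AWS Step Functions. It assumes a boto3 Step Functions client is passed in; `start_execution` is the real client method, but the function name `start_review`, the payload keys, and the ARN shown are hypothetical and not taken from the MACROS code base. A small fake client stands in for AWS so the example runs locally.

```python
import json
import uuid
from typing import Any, Dict, List


def start_review(sfn_client: Any, state_machine_arn: str,
                 bucket: str, key: str, rule_set: str) -> str:
    """Start one workflow execution and return its execution ARN."""
    # Hypothetical input payload: where the article lives and which rules to apply.
    payload = {"bucket": bucket, "key": key, "rule_set": rule_set}
    response = sfn_client.start_execution(
        stateMachineArn=state_machine_arn,
        name=f"review-{uuid.uuid4()}",  # execution names must be unique
        input=json.dumps(payload),      # Step Functions expects a JSON string
    )
    return response["executionArn"]


class FakeStepFunctions:
    """Local stand-in that records calls instead of contacting AWS."""

    def __init__(self) -> None:
        self.calls: List[Dict[str, str]] = []

    def start_execution(self, **kwargs: str) -> Dict[str, str]:
        self.calls.append(kwargs)
        return {"executionArn": "arn:fake:execution"}


fake_client = FakeStepFunctions()
execution_arn = start_review(
    fake_client,
    "arn:aws:states:eu-central-1:000000000000:stateMachine:demo",  # placeholder
    bucket="demo-bucket",
    key="articles/article-1.json",
    rule_set="vitamin_d",
)
print(execution_arn)
```

In a real deployment the fake client would be replaced by `boto3.client("stepfunctions")`, with the IAM permissions described in step 4.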

Future enhancements

While our current architecture uses AWS Step Functions for workflow orchestration, we're exploring the potential of Amazon Bedrock Flows for future iterations. Bedrock Flows offers promising capabilities for streamlining AI-driven workflows, potentially simplifying our architecture and enhancing integration with other Bedrock services. This alternative could provide more seamless management of our AI processes, especially as we scale and evolve our solution.

Content review and revision

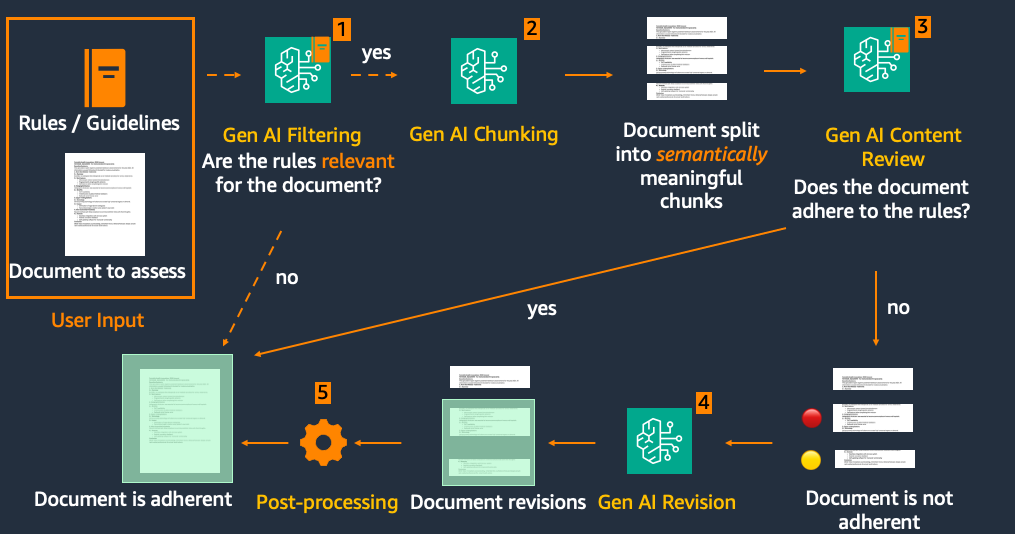

At the core of MACROS lies its Content Review and Revision functionality, built on Amazon Bedrock foundation models. The Content Review and Revision block consists of five major components: 1) the optional Filtering stage, 2) Chunking, 3) Review, 4) Revision, and 5) Post-processing, depicted in Figure 3.

Figure 3. Content review and revision pipeline

Here's how MACROS processes the uploaded medical content:

- Filtering (Optional): The journey begins with an optional filtering step. This smart feature checks whether the set of rules is relevant for the article, potentially saving time and resources on unnecessary processing.

- Chunking: The source text is then split into paragraphs. This crucial step facilitates good quality assessment and helps prevent unintended revisions to unrelated text. Chunking can be done using heuristics, such as punctuation or regular expression-based splits, or using large language models (LLMs) to identify semantically complete chunks of text.

- Review: Each paragraph or section undergoes a thorough review against the relevant rules and guidelines.

- Revision: Only the paragraphs flagged as non-adherent move forward to the revision stage, streamlining the process and maintaining the integrity of adherent content. The AI suggests updates to bring non-adherent paragraphs in line with the latest guidelines and Flo's style requirements.

- Post-processing: Finally, the revised paragraphs are seamlessly integrated back into the original text, resulting in an updated, adherent document.
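The stages above can be sketched as a small pipeline. This is an illustrative outline only, not the MACROS implementation: chunking uses a simple blank-line heuristic, and the review and revision steps are injected as plain callables (`demo_review` and `demo_revise` are keyword-based stand-ins for what would be LLM calls).

```python
import re
from typing import Callable, List, Tuple

# A review function returns (is_adherent, reason) for one paragraph.
ReviewFn = Callable[[str], Tuple[bool, str]]
# A revise function rewrites a paragraph given the reason for non-adherence.
ReviseFn = Callable[[str, str], str]


def chunk_paragraphs(text: str) -> List[str]:
    """Heuristic chunking: split on blank lines and drop empty chunks."""
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]


def review_and_revise(text: str, review: ReviewFn, revise: ReviseFn) -> str:
    """Review every paragraph and revise only the non-adherent ones."""
    output = []
    for paragraph in chunk_paragraphs(text):
        adherent, reason = review(paragraph)
        # Adherent paragraphs pass through unchanged.
        output.append(paragraph if adherent else revise(paragraph, reason))
    # Post-processing: reassemble the paragraphs in their original order.
    return "\n\n".join(output)


# Stand-in callables for illustration only (no LLM involved).
def demo_review(paragraph: str) -> Tuple[bool, str]:
    if "always" in paragraph:
        return False, "avoid absolute claims"
    return True, ""


def demo_revise(paragraph: str, reason: str) -> str:
    return paragraph.replace("always", "often")


article = "Vitamin D supports bone health.\n\nSupplements always help."
print(review_and_revise(article, demo_review, demo_revise))
```

Keeping the review and revision steps behind callables makes it straightforward to swap a heuristic for a model call, or to test the surrounding logic without invoking a model.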

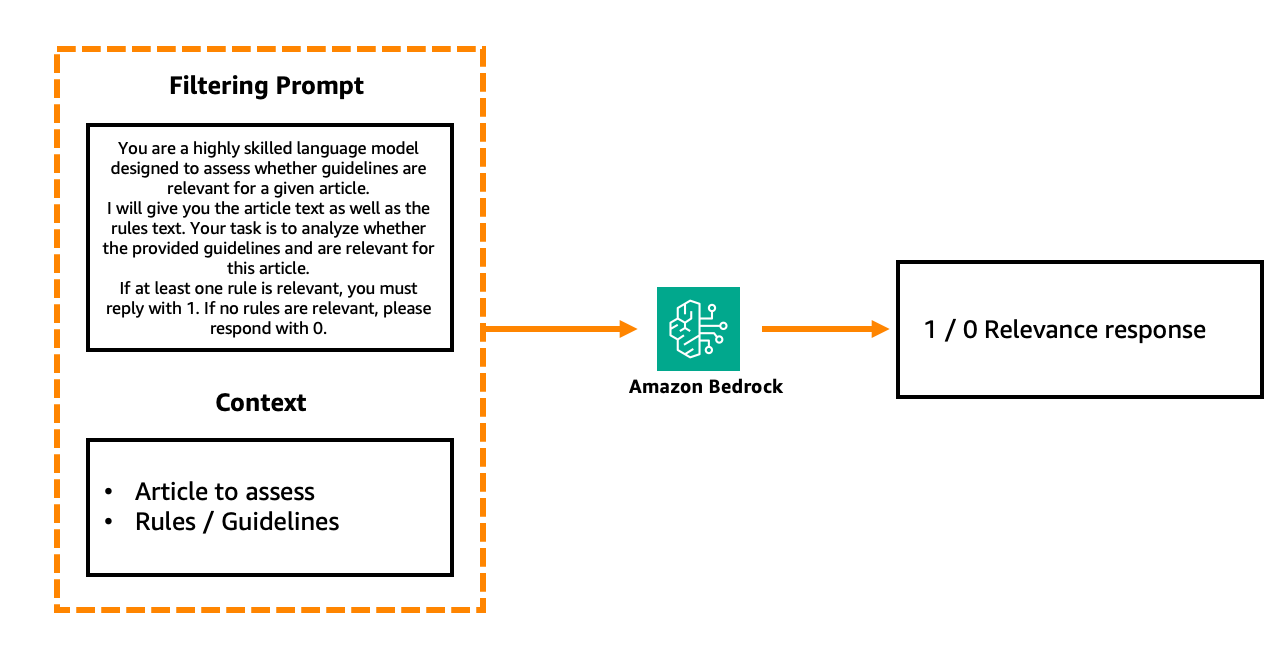

The Filtering step can be performed with an additional LLM call via Amazon Bedrock that assesses each section separately with the following prompt structure:

Figure 4. Simplified LLM-based filtering step

Non-LLM approaches can also support the Filtering step:

- Encoding the rules and the articles into dense embedding vectors and calculating the similarity between them. By setting a similarity threshold, we can identify which rule sets are considered relevant for the input document.

- Similarly, the direct keyword-level overlap between the document and the rule can be measured using BLEU or ROUGE metrics.
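As a rough illustration of the embedding-based option, the sketch below compares an article vector against one vector per rule set using cosine similarity. The vectors, the rule set names, and the 0.5 threshold are made-up values for demonstration; in practice the vectors would come from an embedding model and the threshold would be tuned on labeled examples.

```python
import math
from typing import Dict, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def relevant_rule_sets(article_vec: Sequence[float],
                       rule_set_vecs: Dict[str, Sequence[float]],
                       threshold: float = 0.5) -> List[str]:
    """Return the names of rule sets whose similarity meets the threshold."""
    return [name for name, vec in rule_set_vecs.items()
            if cosine_similarity(article_vec, vec) >= threshold]


# Toy three-dimensional vectors; real embeddings have many more dimensions.
article_vector = [1.0, 0.0, 1.0]
rule_vectors = {
    "vitamin_d": [1.0, 0.0, 0.8],
    "breast_health": [0.0, 1.0, 0.0],
}
print(relevant_rule_sets(article_vector, rule_vectors))
```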

Content review, as already mentioned, is conducted section by section against a group of rules, and the model returns its response in XML format.

In that response, 1 indicates adherence and 0 indicates non-adherence of the text to the specified rules. Using XML format helps to achieve reliable parsing of the output.
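The exact response schema is not reproduced in this post, so the following sketch uses a hypothetical layout (`<review>`, `<section id="...">`, `<adherent>`, `<reason>`) purely to show why a tagged format is easy to parse and validate with Python's standard library.

```python
import xml.etree.ElementTree as ET
from typing import Dict

# Hypothetical response; the tag and attribute names are assumptions.
SAMPLE_RESPONSE = """
<review>
  <section id="1"><adherent>1</adherent></section>
  <section id="2">
    <adherent>0</adherent>
    <reason>Dosage statement conflicts with the guideline.</reason>
  </section>
</review>
"""


def parse_review(xml_text: str) -> Dict[str, bool]:
    """Map each section id to True (adherent) or False (non-adherent)."""
    root = ET.fromstring(xml_text.strip())
    results: Dict[str, bool] = {}
    for section in root.findall("section"):
        flag = (section.findtext("adherent") or "").strip()
        if flag not in {"0", "1"}:
            # Reject anything other than the two expected labels.
            raise ValueError(f"unexpected adherence flag: {flag!r}")
        results[section.get("id", "")] = flag == "1"
    return results


print(parse_review(SAMPLE_RESPONSE))
```

Rejecting unexpected flags explicitly means malformed model output fails loudly instead of being silently counted as adherent.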

The Review step iterates over the sections in the text to make sure that the LLM pays attention to each section separately, which led to more robust results in our experimentation. To improve the detection accuracy for non-adherent sections, the user can also use the Multi-call mode, where instead of one Amazon Bedrock call assessing adherence of the article against all rules, we make one independent call per rule.
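A minimal sketch of the Multi-call idea follows: every section is checked against every rule in its own call, and the violated rules are collected per section. The `check_rule` callable stands in for a single model call; `demo_check` and the two rule strings are invented for illustration.

```python
from typing import Callable, Dict, List

# check_rule(section, rule) returns True if the section adheres to the rule.
CheckRule = Callable[[str, str], bool]


def review_multi_call(sections: List[str], rules: List[str],
                      check_rule: CheckRule) -> Dict[int, List[str]]:
    """Return, for each non-adherent section index, the rules it violates."""
    violations: Dict[int, List[str]] = {}
    for index, section in enumerate(sections):
        # One independent check per (section, rule) pair.
        failed = [rule for rule in rules if not check_rule(section, rule)]
        if failed:
            violations[index] = failed
    return violations


# Keyword-based stand-in for an LLM call, for illustration only.
def demo_check(section: str, rule: str) -> bool:
    text = section.lower()
    if rule == "no absolute claims":
        return "always" not in text
    if rule == "cite a source":
        return "according to" in text
    return True


demo_sections = [
    "According to the guideline, intake varies by age.",
    "This always works.",
]
demo_rules = ["no absolute claims", "cite a source"]
print(review_multi_call(demo_sections, demo_rules, demo_check))
```

The trade-off is cost: the number of calls grows with sections times rules, so this mode suits cases where detection accuracy matters more than latency or spend.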

The Revision step receives the output of the Review (non-adherent sections and the reasons for non-adherence), as well as the instruction to create the revision in a similar tone. It then suggests revisions of the non-adherent sentences in a style similar to the original text. Finally, the Post-processing step combines the original text with the new revisions, making sure that no other sections are changed.

Different steps of the flow require different levels of model complexity. While simpler tasks like chunking can be done efficiently with a relatively small model, such as those in the Claude Haiku model family, more complex reasoning tasks like content review and revision require larger models, such as those in the Claude Sonnet or Opus model families, to facilitate accurate analysis and high-quality content generation. This tiered approach to model selection optimizes both the performance and the cost-efficiency of the solution.

Operating modes

The Content Review and Revision feature operates in two UI modes: Detailed Document Processing and Multi Document Processing, each catering to different scales of content management. The Detailed Document Processing mode offers a granular approach to content assessment and is depicted in Figure 5. Users can upload documents in various formats (PDF, TXT, JSON, or pasted text) and specify the guidelines against which the content should be evaluated.

Figure 5. Detailed Document Processing example

Users can choose from predefined rule sets (here, Vitamin D, Breast Health, and Premenstrual Syndrome and Premenstrual Dysphoric Disorder (PMS and PMDD)) or enter custom guidelines. These custom guidelines can include rules such as "The title of the article must be medically accurate" as well as examples of content that is adherent and non-adherent to the rule.

The rule sets make sure that the assessment aligns with specific medical standards and Flo's unique style guide. The interface allows for on-the-fly adjustments, making it ideal for thorough, individual document reviews. For larger-scale operations, the Multi Document Processing mode should be used. This mode is designed to handle numerous custom JSON files simultaneously, mimicking how Flo would integrate MACROS into their content management system.

Extracting rules and guidelines from unstructured data

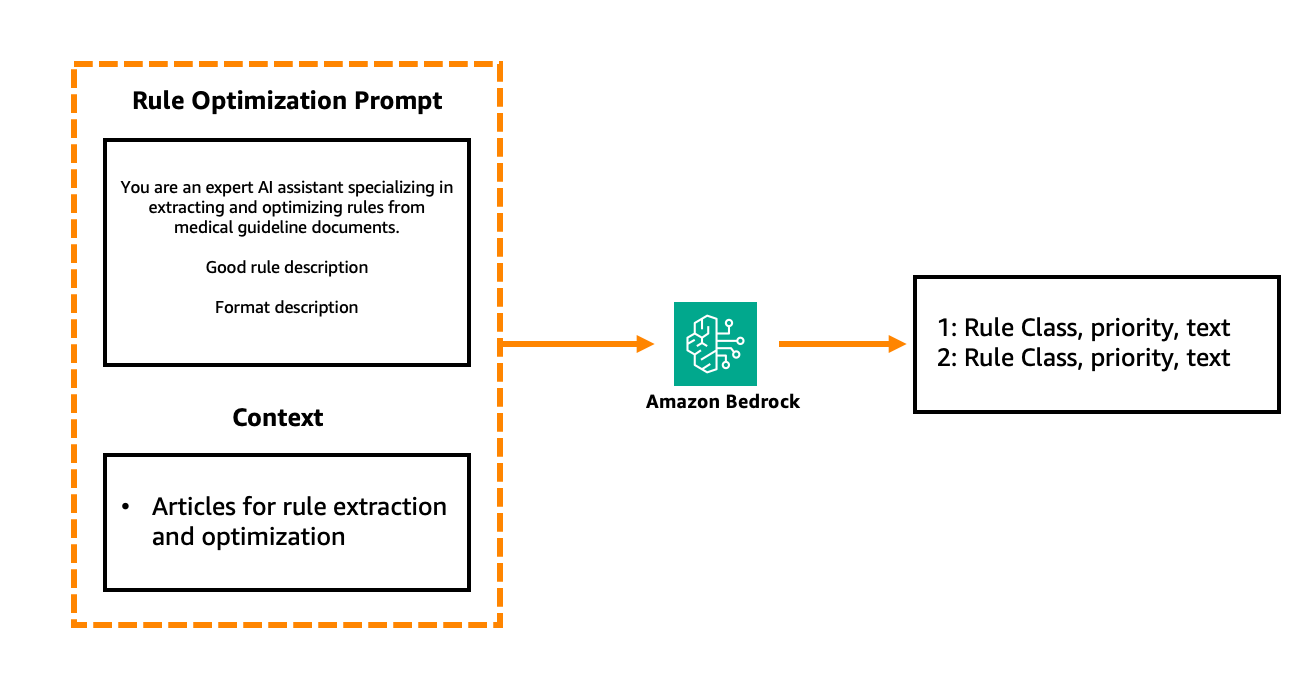

Actionable and well-prepared guidelines aren't always immediately available. Sometimes they are given in unstructured files or need to be found. Using the Rule Optimizer feature, we can extract and refine actionable guidelines from multiple complex documents.

Rule Optimizer processes raw PDF documents to extract text, which is then chunked into meaningful sections based on document headers. This segmented content is processed through Amazon Bedrock using specialized system prompts, with two distinct modes: Style/tonality and Medical mode.

Style/tonality mode focuses on extracting guidelines on how the text should be written: its style, and which formats and words can or cannot be used.

Rule Optimizer assigns a priority to each rule: high, medium, or low. The priority level indicates the rule's importance, guiding the order of content review and focusing attention on critical areas first. Rule Optimizer includes a manual editing interface where users can refine rule text, adjust classifications, and manage priorities. When users update a rule, the changes are saved in Amazon S3 for future use.
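One simple way to let priorities guide the review order is to sort the extracted rules before reviewing. The sketch below assumes a hypothetical `Rule` record with a `priority` field; only the first rule text is quoted from this post, the others are invented examples.

```python
from dataclasses import dataclass
from typing import List

# Lower number means the rule is reviewed earlier.
PRIORITY_ORDER = {"high": 0, "medium": 1, "low": 2}


@dataclass
class Rule:
    text: str
    priority: str  # expected to be "high", "medium", or "low"


def order_for_review(rules: List[Rule]) -> List[Rule]:
    """Sort rules by priority; sorted() is stable, so ties keep their input order."""
    return sorted(rules, key=lambda rule: PRIORITY_ORDER[rule.priority])


demo_rule_list = [
    Rule("Prefer short sentences.", "low"),
    Rule("The title of the article must be medically accurate", "high"),
    Rule("Define abbreviations on first use.", "medium"),
    Rule("State dosage units explicitly.", "high"),
]
for rule in order_for_review(demo_rule_list):
    print(rule.priority, "-", rule.text)
```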

The Medical mode is designed to process medical documents and is adapted to more scientific language. It allows grouping of extracted rules into three classes:

- Medical condition guidelines

- Treatment-specific guidelines

- Changes to advice and trends in health

Figure 6. Simplified medical rule optimization prompt

Figure 6 provides an example of a medical rule optimization prompt, consisting of three main components: the role setting (a medical AI expert), a description of what makes a good rule, and the expected output. We consider a rule to be of sufficiently good quality if it is:

- Clear, unambiguous, and actionable

- Relevant, consistent, and concise (max two sentences)

- Written in active voice

- Free of unnecessary jargon

Implementation considerations and challenges

During our PoC development, we identified several important considerations that would benefit others implementing similar solutions:

- Data preparation: This emerged as a fundamental challenge. We learned the importance of standardizing input formats for both medical content and guidelines while maintaining consistent document structures. Creating diverse test sets across different medical topics proved essential for comprehensive validation.

- Cost management: Monitoring and optimizing cost quickly became a key priority. We implemented token usage tracking and optimized prompt design and batch processing to balance performance and efficiency.

- Regulatory and ethical compliance: Given the sensitive nature of medical content, strict regulatory and ethical safeguards were critical. We established robust documentation practices for AI decisions, implemented strict version control for medical guidelines, and maintained continuous oversight by human medical experts of the AI-generated suggestions. Regional healthcare regulations were carefully considered throughout implementation.

- Integration and scaling: We recommend starting with a standalone testing environment while planning for future content management system (CMS) integration through well-designed API endpoints. Building with modularity in mind proved valuable for future enhancements. Throughout the process, we faced common challenges such as maintaining context in long medical articles, balancing processing speed with accuracy, and facilitating a consistent tone across AI-suggested revisions.

- Model optimization: The diverse model selection capability of Amazon Bedrock proved particularly valuable. Through its platform, we can choose optimal models for specific tasks, achieve cost efficiency without sacrificing accuracy, and smoothly upgrade to newer models, all while maintaining our existing architecture.
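To illustrate the token usage tracking mentioned under cost management, here is a small sketch that accumulates token counts per pipeline step and turns them into a rough cost estimate. The class, the step names, and the prices are all hypothetical; the counts themselves would typically come from the usage metadata that the model API returns with each response.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class TokenUsageTracker:
    """Accumulates input and output token counts per pipeline step."""

    usage: Dict[str, Dict[str, int]] = field(default_factory=dict)

    def record(self, step: str, input_tokens: int, output_tokens: int) -> None:
        totals = self.usage.setdefault(step, {"input": 0, "output": 0})
        totals["input"] += input_tokens
        totals["output"] += output_tokens

    def total(self, kind: str) -> int:
        """Sum tokens of one kind ("input" or "output") across all steps."""
        return sum(step[kind] for step in self.usage.values())

    def estimated_cost(self, input_price_per_1k: float,
                       output_price_per_1k: float) -> float:
        """Rough cost estimate from per-1,000-token prices."""
        return (self.total("input") / 1000 * input_price_per_1k
                + self.total("output") / 1000 * output_price_per_1k)


tracker = TokenUsageTracker()
tracker.record("review", 1200, 300)
tracker.record("revision", 800, 400)
tracker.record("review", 1000, 200)
print(tracker.usage)
# Placeholder prices, not actual Amazon Bedrock pricing.
print(round(tracker.estimated_cost(0.003, 0.015), 4))
```

Tracking usage per step rather than per run shows which stage dominates spend, which is useful when deciding where a smaller model or a shorter prompt would pay off.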

Preliminary Results

Our Proof of Concept delivered strong results across the critical success metrics, demonstrating the potential of AI-assisted medical content review. The solution exceeded target processing speed improvements while maintaining 80% accuracy and over 90% recall in identifying content requiring updates. Most notably, the AI-powered system applied medical guidelines more consistently than manual reviews and significantly reduced the time burden on medical experts.
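For readers less familiar with these metrics, the sketch below shows how recall and accuracy are computed from review outcomes. The counts are invented to produce figures in the same range as those reported above; they are not the PoC's evaluation data.

```python
def recall(true_pos: int, false_neg: int) -> float:
    """Share of content pieces that truly need updates and were flagged."""
    flagged_or_missed = true_pos + false_neg
    return true_pos / flagged_or_missed if flagged_or_missed else 0.0


def accuracy(true_pos: int, true_neg: int, false_pos: int, false_neg: int) -> float:
    """Share of all content pieces that were classified correctly."""
    total = true_pos + true_neg + false_pos + false_neg
    return (true_pos + true_neg) / total if total else 0.0


# Hypothetical evaluation of 100 content pieces, 50 of which need updates.
tp, fn, fp, tn = 46, 4, 16, 34
print(f"recall   = {recall(tp, fn):.2f}")
print(f"accuracy = {accuracy(tp, tn, fp, fn):.2f}")
```

High recall with lower accuracy means the system rarely misses content that needs updating but sometimes flags content that is already fine, which is a reasonable bias when experts review every flagged item.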

Key Takeaways

During implementation, we uncovered several insights critical for optimizing AI performance in medical content analysis. Content chunking was essential for accurate assessment across long documents, and expert validation of parsing rules helped medical experts maintain clinical precision. Most importantly, the project confirmed that human-AI collaboration, not full automation, is key to successful implementation. Regular expert feedback and clear performance metrics guided system refinements and incremental improvements. While the system significantly streamlines the review process, it works best as an augmentation tool, with medical experts remaining essential for final validation, creating a more efficient hybrid approach to medical content management.

Conclusion and next steps

This first part of our series demonstrates how generative AI can make the medical content review process faster, more efficient, and scalable while maintaining high accuracy. Stay tuned for Part 2 of this series, where we cover the production journey, deep diving into challenges and scaling strategies. Are you ready to move your AI initiatives into production?

About the authors

Liza (Elizaveta) Zinovyeva, Ph.D., is an Applied Scientist at the AWS Generative AI Innovation Center and is based in Berlin. She helps customers across different industries integrate generative AI into their existing applications and workflows. She is passionate about AI/ML, finance, and software security topics. In her spare time, she enjoys spending time with her family, sports, learning new technologies, and table quizzes.

Callum Macpherson is a Data Scientist at the AWS Generative AI Innovation Center, where cutting-edge AI meets real-world business transformation. Callum partners directly with AWS customers to design, build, and scale generative AI solutions that unlock new opportunities, accelerate innovation, and deliver measurable impact across industries.

Arefeh Ghahvechi is a Senior AI Strategist at the AWS GenAI Innovation Center, specializing in helping customers realize rapid value from generative AI technologies by bridging innovation and implementation. She identifies high-impact AI opportunities while building the organizational capabilities needed for scaled adoption across enterprises and national initiatives.

Nuno Castro is a Sr. Applied Science Manager. He has 19 years of experience in the field in industries such as finance, manufacturing, and travel, leading ML teams for 11 years.

Dmitrii Ryzhov is a Senior Account Manager at Amazon Web Services (AWS), helping digital-native companies unlock business potential through AI, generative AI, and cloud technologies. He works closely with customers to identify high-impact business initiatives and accelerate execution by orchestrating strategic AWS support, including access to the right expertise, resources, and innovation programs.

Nikita Kozodoi, PhD, is a Senior Applied Scientist at the AWS Generative AI Innovation Center working on the frontier of AI research and business. Nikita builds and deploys generative AI and ML solutions that solve real-world problems and drive business impact for AWS customers across industries.

Aiham Taleb, PhD, is a Senior Applied Scientist at the Generative AI Innovation Center, working directly with AWS enterprise customers to leverage Gen AI across several high-impact use cases. Aiham has a PhD in unsupervised representation learning and industry experience that spans various machine learning applications, including computer vision, natural language processing, and medical imaging.
