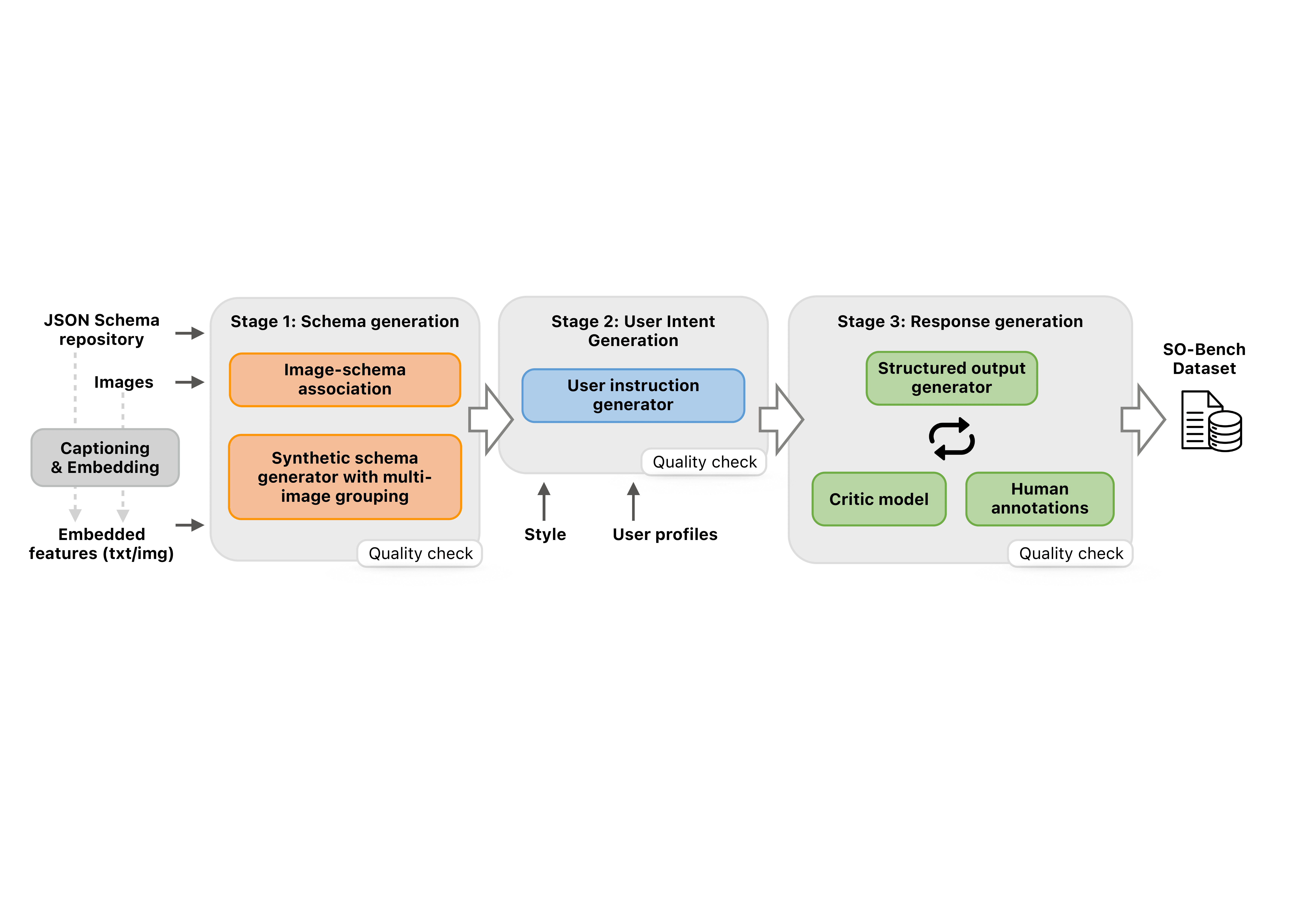

Multimodal large language models (MLLMs) are increasingly deployed in real-world, agentic settings where outputs must not only be correct but also conform to predefined data schemas. Despite recent progress in structured generation in the textual domain, there is still no benchmark that systematically evaluates schema-grounded information extraction and reasoning over visual inputs. In this work, we conduct a comprehensive study of the visual structured output capabilities of MLLMs with our carefully designed SO-Bench benchmark. Covering four visual domains (UI screens, natural images, documents, and charts), SO-Bench is built from over 6.5K diverse JSON schemas and 1.8K curated image-schema pairs with human-verified quality. Benchmarking experiments on open-source and frontier proprietary models reveal persistent gaps in predicting accurate, schema-compliant outputs, highlighting the need for better multimodal structured reasoning. Beyond benchmarking, we further conduct training experiments that substantially improve the model's structured output capability. We plan to make the benchmark available to the community.