This post is co-written by Thomas Capelle and Ray Strickland from Weights & Biases (W&B).

Generative artificial intelligence (AI) adoption is accelerating across enterprises, evolving from simple foundation model interactions to sophisticated agentic workflows. As organizations transition from proofs of concept to production deployments, they require robust tools for development, evaluation, and monitoring of AI applications at scale.

In this post, we demonstrate how to use foundation models (FMs) from Amazon Bedrock and the newly launched Amazon Bedrock AgentCore alongside W&B Weave to help build, evaluate, and monitor enterprise AI solutions. We cover the complete development lifecycle, from tracking individual FM calls to monitoring complex agent workflows in production.

Overview of W&B Weave

Weights & Biases (W&B) is an AI developer platform that provides comprehensive tools for training models, fine-tuning, and leveraging foundation models for enterprises of all sizes across various industries.

W&B Weave offers a unified suite of developer tools to support every stage of your agentic AI workflows. It enables:

- Tracing & monitoring: Track large language model (LLM) calls and application logic to debug and analyze production systems.

- Systematic iteration: Refine and iterate on prompts, datasets, and models.

- Experimentation: Experiment with different models and prompts in the LLM Playground.

- Evaluation: Use custom or pre-built scorers alongside our comparison tools to systematically assess and enhance application performance. Collect user and expert feedback for real-life testing and evaluation.

- Guardrails: Help protect your application with safeguards for content moderation, prompt safety, and more. Use custom or third-party guardrails (including Amazon Bedrock Guardrails) or W&B Weave’s native guardrails.

W&B Weave can be fully managed by Weights & Biases in a multi-tenant or single-tenant environment, or it can be deployed directly in a customer’s Amazon Virtual Private Cloud (VPC). In addition, W&B Weave’s integration into the W&B Development Platform gives organizations a seamlessly integrated experience between the model training/fine-tuning workflow and the agentic AI workflow.

To get started, subscribe to the Weights & Biases AI Development Platform through AWS Marketplace. Individuals and academic teams can subscribe to W&B at no additional cost.

Monitoring Amazon Bedrock FMs with W&B Weave SDK

W&B Weave integrates seamlessly with Amazon Bedrock through Python and TypeScript SDKs. After you install the library and patch your Bedrock client, W&B Weave automatically tracks the LLM calls.
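A minimal sketch of that setup in Python follows. It assumes the `weave` and `boto3` packages are installed, that AWS credentials with Bedrock model access are configured, and that a W&B API key is available. The project name `my_bedrock_app`, the helper names `build_request_body` and `ask_bedrock`, and the Anthropic Messages request format are illustrative choices, not taken from the original post. The `patch_client` import path is the one shown in the Weave documentation for the Bedrock integration; confirm it against your installed version.

```python
import json


def build_request_body(prompt: str, max_tokens: int = 100) -> str:
    """Build an Anthropic Messages request body for Bedrock's invoke_model."""
    return json.dumps({
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    })


def ask_bedrock(prompt: str, model_id: str) -> str:
    """Call a Bedrock model through a Weave-patched client and return the reply text."""
    # Imported lazily so build_request_body stays usable without AWS or W&B installed.
    import boto3
    import weave
    from weave.integrations.bedrock.bedrock_sdk import patch_client

    weave.init("my_bedrock_app")  # hypothetical project name
    client = boto3.client("bedrock-runtime")
    patch_client(client)  # calls made with this client are now traced by Weave

    response = client.invoke_model(
        modelId=model_id,
        body=build_request_body(prompt),
        contentType="application/json",
        accept="application/json",
    )
    payload = json.loads(response["body"].read())
    return payload["content"][0]["text"]
```

Calling `ask_bedrock("What is the capital of France?", model_id=...)` with a model ID you have access to should produce one trace per invocation in the named Weave project. The application code itself only gains the `weave.init` and `patch_client` lines.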

This integration automatically versions experiments and tracks configurations, providing full visibility into your Amazon Bedrock applications without modifying core logic.

Experimenting with Amazon Bedrock FMs in W&B Weave Playground

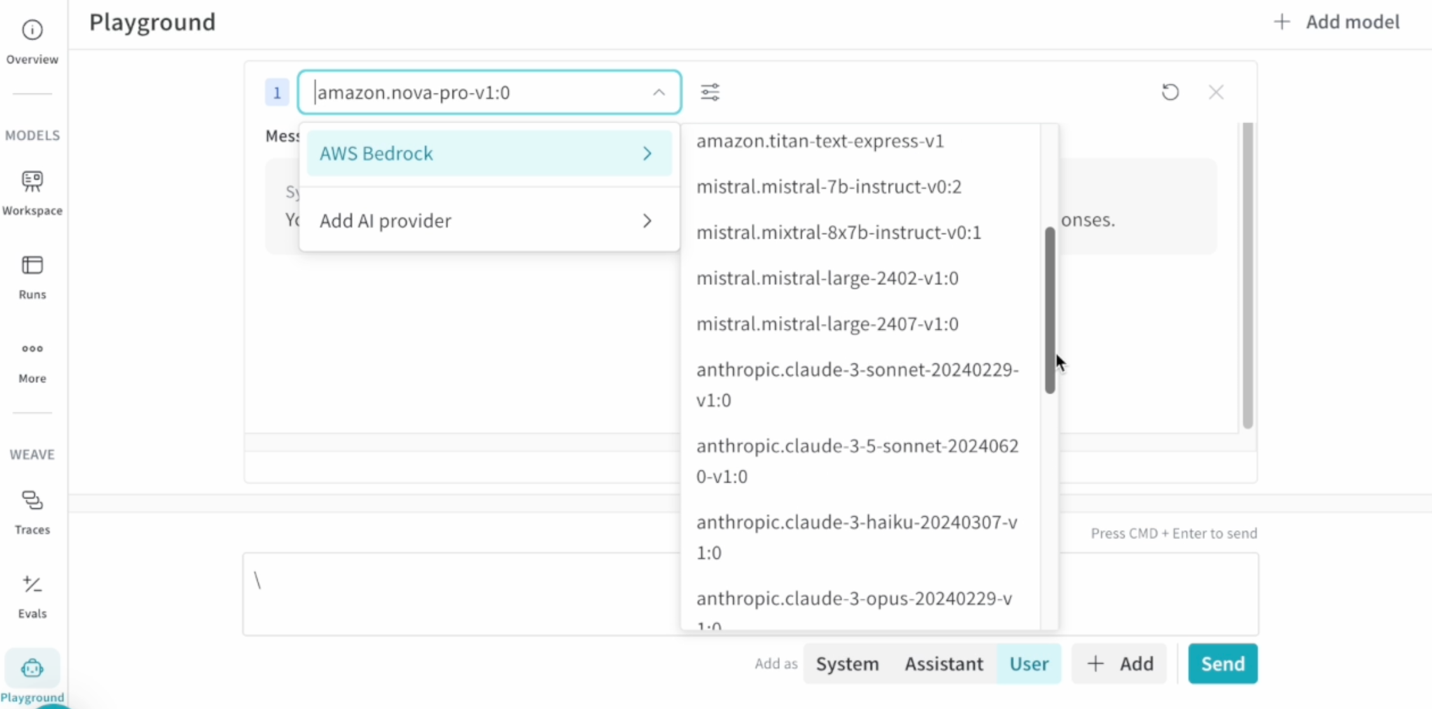

The W&B Weave Playground accelerates prompt engineering with an intuitive interface for testing and comparing Bedrock models. Key features include:

- Direct prompt editing and message retrying

- Side-by-side model comparison

- Access from trace views for rapid iteration

To begin, add your AWS credentials in the Playground settings, select your preferred Amazon Bedrock FMs, and start experimenting. The interface enables rapid iteration on prompts while maintaining full traceability of experiments.

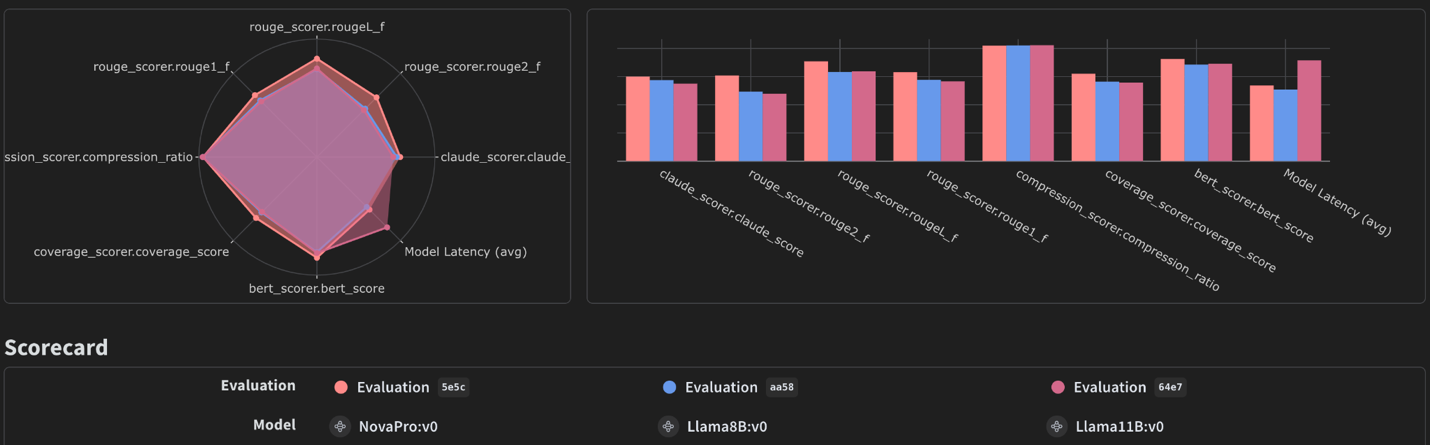

Evaluating Amazon Bedrock FMs with W&B Weave Evaluations

W&B Weave Evaluations provides dedicated tools for evaluating generative AI models effectively. By using W&B Weave Evaluations alongside Amazon Bedrock, users can efficiently evaluate these models, analyze outputs, and visualize performance across key metrics. Users can apply built-in scorers from W&B Weave, third-party or custom scorers, and human or expert feedback. This combination allows for a deeper understanding of the tradeoffs between models, such as differences in cost, accuracy, speed, and output quality.

W&B Weave has a first-class way to track evaluations with its Model and Evaluation classes. To set up an evaluation job, customers can:

- Define a dataset or list of dictionaries with a collection of examples to be evaluated

- Create a list of scoring functions. Each function should take a model_output and, optionally, other inputs from your examples, and return a dictionary with the scores

- Define an Amazon Bedrock model by using the Model class

- Evaluate this model by calling Evaluation

Here’s an example of setting up an evaluation job:
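The following is a sketch of those four steps rather than the authors’ original listing. It assumes `weave` and `boto3` are installed and that AWS and W&B credentials are configured. The dataset, the scorer `contains_expected`, the class `BedrockModel`, and the project name are hypothetical. Recent Weave releases name the scorer argument that receives the prediction `output`, while older releases used `model_output` as described above, so adjust the parameter name to your installed version.

```python
import asyncio

# Step 1: a dataset expressed as a list of dictionaries (hypothetical examples).
EXAMPLES = [
    {"question": "What is the capital of France?", "expected": "Paris"},
    {"question": "What is the capital of Japan?", "expected": "Tokyo"},
]


# Step 2: a scoring function that returns a dictionary of scores.
def contains_expected(expected: str, output: str) -> dict:
    """Check whether the expected answer appears in the model output."""
    return {"correct": expected.lower() in output.lower()}


def run_evaluation(model_id: str, project: str = "bedrock-eval-sketch") -> dict:
    """Evaluate one Bedrock model on EXAMPLES and return the summary scores."""
    # Imported lazily so the dataset and scorer can be tested offline.
    import boto3
    import weave

    weave.init(project)
    client = boto3.client("bedrock-runtime")

    # Step 3: wrap the Bedrock call in a weave.Model subclass.
    class BedrockModel(weave.Model):
        bedrock_model_id: str
        max_tokens: int = 100

        @weave.op()
        def predict(self, question: str) -> str:
            response = client.converse(
                modelId=self.bedrock_model_id,
                messages=[{"role": "user", "content": [{"text": question}]}],
                inferenceConfig={"maxTokens": self.max_tokens},
            )
            return response["output"]["message"]["content"][0]["text"]

    # Step 4: run the evaluation; evaluate() is a coroutine.
    evaluation = weave.Evaluation(
        dataset=EXAMPLES,
        scorers=[weave.op()(contains_expected)],
    )
    model = BedrockModel(bedrock_model_id=model_id)
    return asyncio.run(evaluation.evaluate(model))
```

Weave matches dataset keys to parameter names, so `question` is passed to `predict` and `expected` to the scorer. Running `run_evaluation` once per model ID gives comparable runs in the evaluation dashboard.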

The evaluation dashboard visualizes performance metrics, enabling informed decisions about model selection and configuration. For detailed guidance, see our previous post on evaluating LLM summarization with Amazon Bedrock and Weave.

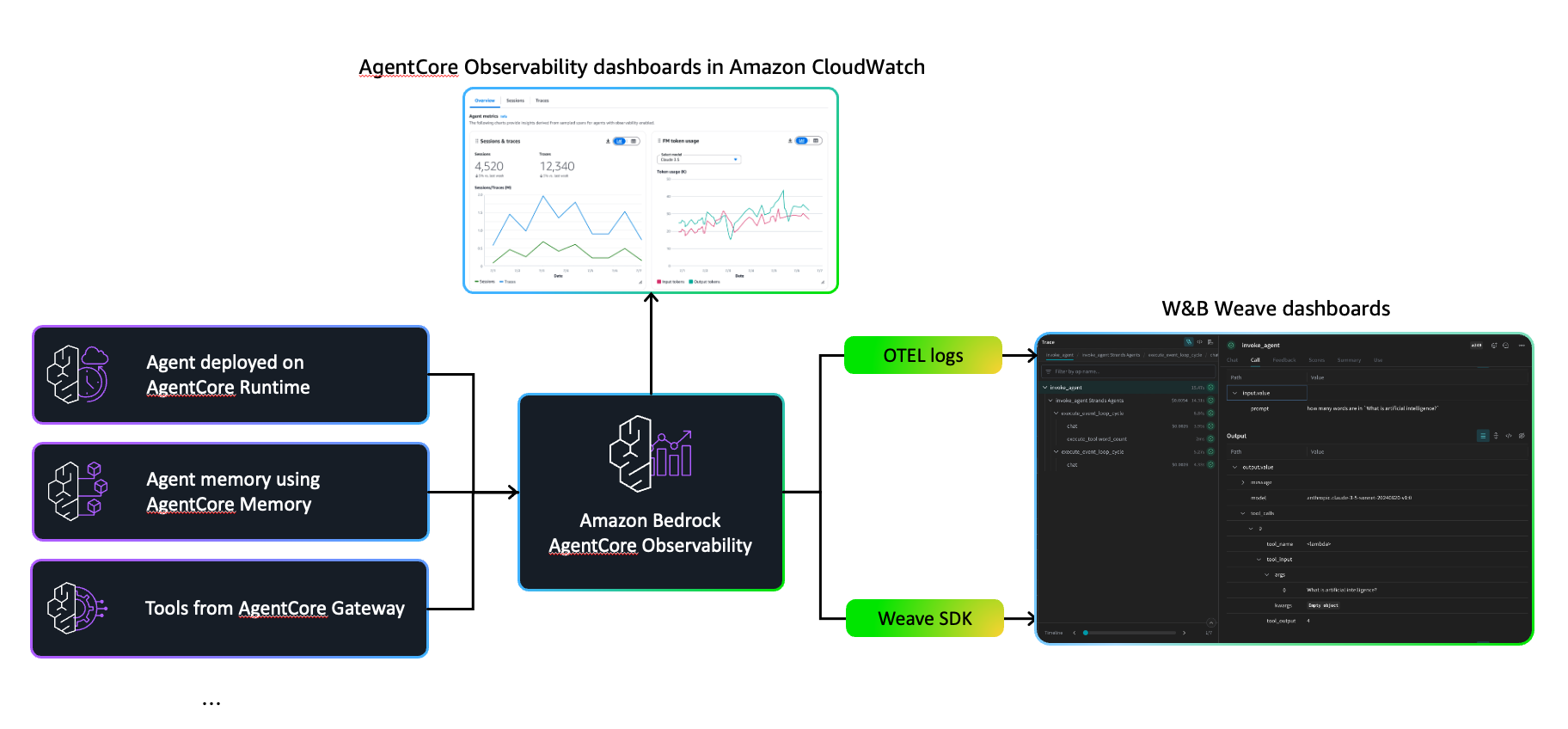

Enhancing Amazon Bedrock AgentCore Observability with W&B Weave

Amazon Bedrock AgentCore is a complete set of services for deploying and operating highly capable agents more securely at enterprise scale. It provides more secure runtime environments, workflow execution tools, and operational controls that work with popular frameworks like Strands Agents, CrewAI, LangGraph, and LlamaIndex, as well as many LLMs, whether from Amazon Bedrock or external sources.

AgentCore includes built-in observability through Amazon CloudWatch dashboards that track key metrics like token usage, latency, session duration, and error rates. It also traces workflow steps, showing which tools were invoked and how the model responded, providing essential visibility for debugging and quality assurance in production.

When working with AgentCore and W&B Weave together, teams can use AgentCore’s built-in operational monitoring and security foundations while also using W&B Weave if it aligns with their existing development workflows. Organizations already invested in the W&B environment may choose to incorporate W&B Weave’s visualization tools alongside AgentCore’s native capabilities. This approach gives teams the flexibility to use the observability solution that best fits their established processes and preferences when developing complex agents that chain multiple tools and reasoning steps.

There are two main approaches to add W&B Weave observability to your AgentCore agents: using the native W&B Weave SDK or integrating through OpenTelemetry.

Native W&B Weave SDK

The simplest approach is to use W&B Weave’s @weave.op decorator to automatically track function calls. Initialize W&B Weave with your project name and wrap the functions you want to track.
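As a rough illustration of the decorator approach, the sketch below traces a hypothetical tool and a hypothetical agent step. The function names, the project name `agentcore-demo`, and the `traced` alias are assumptions made for this example, and the AgentCore entrypoint wiring is intentionally left out. The `ImportError` fallback is a convenience so that the same module can run in unit tests where the SDK is not installed; it is not something Weave requires.

```python
try:
    import weave

    # `traced` is simply weave.op() when the SDK is available.
    traced = weave.op()
except ImportError:
    def traced(fn):
        """No-op stand-in so the module still imports without the SDK."""
        return fn


@traced
def word_count(text: str) -> int:
    """Hypothetical tool: count whitespace-separated words."""
    return len(text.split())


@traced
def run_agent(prompt: str) -> dict:
    """Hypothetical agent step; the nested word_count call is traced as a child call."""
    return {"prompt": prompt, "words": word_count(prompt)}


def init_tracing(project: str = "agentcore-demo") -> None:
    """Call once at container start-up; expects WANDB_API_KEY in the environment."""
    import weave

    weave.init(project)
```

Until `init_tracing()` has been called, the decorated functions behave like ordinary Python functions. After it, each call to `run_agent` is recorded with its inputs, output, and the nested `word_count` call.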

Because AgentCore runs as a Docker container, add W&B Weave to your dependencies (for example, uv add weave) to include it in your container image.

OpenTelemetry Integration

For teams already using OpenTelemetry or wanting vendor-neutral instrumentation, W&B Weave supports OTLP (OpenTelemetry Protocol) directly.
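A sketch of an OTLP exporter pointed at Weave is shown below, using the standard OpenTelemetry Python SDK over HTTP. The endpoint URL and the header scheme (HTTP Basic auth built from `api:<WANDB_API_KEY>`, plus a `project_id` header of the form `entity/project`) follow the W&B documentation as best understood at the time of writing and should be treated as assumptions to verify. The function names and the tracer name are hypothetical. How this coexists with the tracer provider that AgentCore configures depends on your deployment and is not covered here.

```python
import base64
import os

# Assumption: Weave's OTLP/HTTP traces endpoint; verify against current W&B docs.
WEAVE_OTLP_ENDPOINT = "https://trace.wandb.ai/otel/v1/traces"


def weave_otlp_headers(api_key: str, project_id: str) -> dict:
    """Build the auth and routing headers; project_id looks like 'entity/project'."""
    token = base64.b64encode(f"api:{api_key}".encode()).decode()
    return {"Authorization": f"Basic {token}", "project_id": project_id}


def configure_tracing(project_id: str):
    """Install a tracer provider that exports spans to Weave and return a tracer."""
    # Imported lazily so the header helper can be tested without OpenTelemetry.
    from opentelemetry import trace
    from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter
    from opentelemetry.sdk.trace import TracerProvider
    from opentelemetry.sdk.trace.export import BatchSpanProcessor

    exporter = OTLPSpanExporter(
        endpoint=WEAVE_OTLP_ENDPOINT,
        headers=weave_otlp_headers(os.environ["WANDB_API_KEY"], project_id),
    )
    provider = TracerProvider()
    provider.add_span_processor(BatchSpanProcessor(exporter))
    trace.set_tracer_provider(provider)
    return trace.get_tracer("agentcore-agent")  # hypothetical tracer name
```

With the tracer returned by `configure_tracing`, agent steps can be wrapped in `with tracer.start_as_current_span("step-name"):` blocks, and the resulting spans are batched and sent to the configured project.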

This approach maintains compatibility with AgentCore’s existing OpenTelemetry infrastructure while routing traces to W&B Weave for visualization.

When using both AgentCore and W&B Weave together, teams have multiple options for observability. AgentCore’s CloudWatch integration monitors system health, resource utilization, and error rates while providing tracing for agent reasoning and tool selection. W&B Weave offers visualization capabilities that present execution data in formats familiar to teams already using the W&B environment. Both solutions provide visibility into how agents process information and make decisions, allowing organizations to choose the observability approach that best aligns with their existing workflows and preferences.

This dual-layer approach means users can:

- Monitor production service level agreements (SLAs) through CloudWatch alerts

- Debug complex agent behaviors in W&B Weave’s trace explorer

- Optimize token usage and latency with detailed execution breakdowns

- Compare agent performance across different prompts and configurations

The integration requires minimal code changes, preserves your existing AgentCore deployment, and scales with your agent complexity. Whether you’re building simple tool-calling agents or orchestrating multi-step workflows, this observability stack provides the insights needed to iterate quickly and deploy confidently.

For implementation details and complete code examples, refer to our previous post.

Conclusion

In this post, we demonstrated how to build and optimize enterprise-grade agentic AI solutions by combining Amazon Bedrock’s FMs and AgentCore with W&B Weave’s comprehensive observability toolkit. We explored how W&B Weave can enhance every stage of the LLM development lifecycle, from initial experimentation in the Playground to systematic evaluation of model performance, and finally to production monitoring of complex agent workflows.

The integration between Amazon Bedrock and W&B Weave provides several key capabilities:

- Automatic tracking of Amazon Bedrock FM calls with minimal code changes using the W&B Weave SDK

- Rapid experimentation through the W&B Weave Playground’s intuitive interface for testing prompts and comparing models

- Systematic evaluation with custom scoring functions to compare different Amazon Bedrock models

- Comprehensive observability for AgentCore deployments, with CloudWatch metrics providing robust operational monitoring supplemented by detailed execution traces

To get began:

- Request a free trial or subscribe to the Weights & Biases AI Development Platform through AWS Marketplace

- Install the W&B Weave SDK and follow our code examples to begin tracking your Bedrock FM calls

- Experiment with different models in the W&B Weave Playground by adding your AWS credentials and testing various Amazon Bedrock FMs

- Set up evaluations using the W&B Weave Evaluation framework to systematically compare model performance for your use cases

- Enhance your AgentCore agents by adding W&B Weave observability using either the native SDK or the OpenTelemetry integration

Start with a simple integration to track your Amazon Bedrock calls, then progressively adopt more advanced features as your AI applications grow in complexity. The combination of Amazon Bedrock and W&B Weave’s comprehensive development tools provides the foundation needed to build, evaluate, and maintain production-ready AI solutions at scale.

About the authors

James Yi is a Senior AI/ML Partner Solutions Architect at AWS. He spearheads AWS’s strategic partnerships in Emerging Technologies, guiding engineering teams to design and develop cutting-edge joint solutions in generative AI. He enables field and technical teams to seamlessly deploy, operate, secure, and integrate partner solutions on AWS. James collaborates closely with business leaders to define and execute joint Go-To-Market strategies, driving cloud-based business growth. Outside of work, he enjoys playing soccer, traveling, and spending time with his family.

Ray Strickland is a Senior Partner Solutions Architect at AWS specializing in AI/ML, Agentic AI, and Intelligent Document Processing. He enables partners to deploy scalable generative AI solutions using AWS best practices and drives innovation through strategic partner enablement programs. Ray collaborates across multiple AWS teams to accelerate AI adoption and has extensive experience in partner evaluation and enablement.

Thomas Capelle is a Machine Learning Engineer at Weights & Biases. He’s responsible for keeping the www.github.com/wandb/examples repository live and up to date. He also builds content on MLOps, applications of W&B to industries, and fun deep learning in general. Previously, he used deep learning to solve short-term forecasting for solar energy. He has a background in Urban Planning, Combinatorial Optimization, Transportation Economics, and Applied Math.

Scott Juang is the Director of Alliances at Weights & Biases. Prior to W&B, he led a number of strategic alliances at AWS and Cloudera. Scott studied Materials Engineering and has a passion for renewable energy.
