This post was co-authored with Jingwei Zuo from TII.

We’re excited to announce the availability of the Technology Innovation Institute (TII)’s Falcon-H1 models on Amazon Bedrock Marketplace and Amazon SageMaker JumpStart. With this launch, developers and data scientists can now use six instruction-tuned Falcon-H1 models (0.5B, 1.5B, 1.5B-Deep, 3B, 7B, and 34B) on AWS. They also gain access to a comprehensive suite of hybrid architecture models that combine traditional attention mechanisms with State Space Models (SSMs) to deliver exceptional performance with unprecedented efficiency.

In this post, we present an overview of Falcon-H1 capabilities and show how to get started with TII’s Falcon-H1 models on both Amazon Bedrock Marketplace and SageMaker JumpStart.

Overview of TII and AWS collaboration

TII is a leading research institute based in Abu Dhabi. As part of the UAE’s Advanced Technology Research Council (ATRC), TII focuses on advanced technology research and development across AI, quantum computing, autonomous robotics, cryptography, and more. TII employs international teams of scientists, researchers, and engineers in an open and agile environment, aiming to drive technological innovation and position Abu Dhabi and the UAE as a global research and development hub in alignment with the UAE National Strategy for Artificial Intelligence 2031.

TII and Amazon Web Services (AWS) are collaborating to expand access to made-in-the-UAE AI models across the globe. By combining TII’s technical expertise in building large language models (LLMs) with AWS Cloud-based AI and machine learning (ML) services, professionals worldwide can now build and scale generative AI applications using the Falcon-H1 series of models.

About Falcon-H1 models

The Falcon-H1 architecture implements a parallel hybrid design that uses elements from both the Mamba and Transformer architectures. It combines the faster inference and lower memory footprint of SSMs like Mamba with the effectiveness of the Transformer attention mechanism in understanding context, along with enhanced generalization capabilities. The Falcon-H1 architecture scales across multiple configurations ranging from 0.5 to 34 billion parameters and provides native support for 18 languages. According to TII, the Falcon-H1 family demonstrates notable efficiency, with published metrics indicating that smaller model variants achieve performance parity with larger models. Some of the benefits of the Falcon-H1 series include:

- Performance – The hybrid attention-SSM model has optimized parameters with adjustable ratios between attention and SSM heads, leading to faster inference, lower memory usage, and strong generalization capabilities. According to TII benchmarks published in Falcon-H1’s technical blog post and technical report, Falcon-H1 models demonstrate superior performance across multiple scales against other leading Transformer models of similar or larger size. For example, Falcon-H1-0.5B delivers performance similar to typical 7B models from 2024, and Falcon-H1-1.5B-Deep rivals many of the current leading 7B–10B models.

- Wide range of model sizes – The Falcon-H1 series includes six sizes: 0.5B, 1.5B, 1.5B-Deep, 3B, 7B, and 34B, with both base and instruction-tuned variants. The Instruct models are now available in Amazon Bedrock Marketplace and SageMaker JumpStart.

- Multilingual by design – The models natively support 18 languages (Arabic, Czech, German, English, Spanish, French, Hindi, Italian, Japanese, Korean, Dutch, Polish, Portuguese, Romanian, Russian, Swedish, Urdu, and Chinese) and, according to TII, can scale to over 100 languages thanks to a multilingual tokenizer trained on diverse language datasets.

- Up to 256,000-token context length – The Falcon-H1 series enables applications in long-document processing, multi-turn dialogue, and long-range reasoning, and according to TII shows a distinct advantage over competitors in practical long-context applications like Retrieval Augmented Generation (RAG).

- Robust data and training strategy – Training of Falcon-H1 models employs an innovative approach that introduces complex data early on, contrary to traditional curriculum learning. It also implements strategic data reuse based on careful memorization window analysis. Additionally, the training process scales smoothly across model sizes through a customized Maximal Update Parametrization (µP) recipe, specifically adapted for this novel architecture.

- Balanced performance in science and knowledge-intensive domains – Through a carefully designed data mixture and regular evaluations during training, the model achieves strong general capabilities and broad world knowledge while minimizing unintended specialization or domain-specific biases.
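To make the parallel hybrid idea more concrete, the following NumPy sketch shows a toy layer in which a causal attention branch and a simple linear SSM branch read the same input side by side, and their outputs are concatenated and projected back to the model width. This is an illustrative assumption, not TII’s implementation: real Mamba-style SSMs use input-dependent (selective) parameters and many heads, and every name here (`parallel_hybrid_block`, `init_params`, and so on) is hypothetical. The `d_attn` and `d_ssm` widths stand in for the adjustable attention-to-SSM ratio, and the SSM branch carries only a fixed-size state per channel, which is where the lower memory footprint comes from.

```python
import numpy as np


def causal_attention(x, w_q, w_k, w_v):
    """Single-head causal self-attention over an input of shape (seq_len, d_model)."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    # Hide future positions so token t only attends to tokens 0..t.
    future = np.triu(np.ones(scores.shape, dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)
    weights = np.exp(scores - scores.max(axis=-1, keepdims=True))
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v


def diagonal_ssm(u, a, b, c):
    """Per-channel linear recurrence: h_t = a*h_{t-1} + b*u_t, y_t = c*h_t."""
    h = np.zeros(u.shape[-1])  # fixed-size state, independent of sequence length
    outputs = []
    for u_t in u:
        h = a * h + b * u_t
        outputs.append(c * h)
    return np.stack(outputs)


def parallel_hybrid_block(x, p):
    """Feed the same input to both branches in parallel, then mix their outputs."""
    attn_out = causal_attention(x, p["w_q"], p["w_k"], p["w_v"])
    ssm_out = diagonal_ssm(x @ p["w_in"], p["a"], p["b"], p["c"])
    return np.concatenate([attn_out, ssm_out], axis=-1) @ p["w_out"]


def init_params(d_model, d_attn, d_ssm, seed=0):
    """Random weights; d_attn versus d_ssm sets the attention-to-SSM channel ratio."""
    rng = np.random.default_rng(seed)
    scale = 1.0 / np.sqrt(d_model)
    return {
        "w_q": rng.normal(size=(d_model, d_attn)) * scale,
        "w_k": rng.normal(size=(d_model, d_attn)) * scale,
        "w_v": rng.normal(size=(d_model, d_attn)) * scale,
        "w_in": rng.normal(size=(d_model, d_ssm)) * scale,
        "a": rng.uniform(0.5, 0.95, size=d_ssm),  # decay in (0, 1) keeps h_t bounded
        "b": rng.normal(size=d_ssm),
        "c": rng.normal(size=d_ssm),
        "w_out": rng.normal(size=(d_attn + d_ssm, d_model)) / np.sqrt(d_attn + d_ssm),
    }


params = init_params(d_model=8, d_attn=2, d_ssm=6)  # an SSM-heavy split
x = np.random.default_rng(1).normal(size=(5, 8))
print(parallel_hybrid_block(x, params).shape)  # (5, 8)
```

Shifting width from `d_attn` to `d_ssm` moves more of the layer into the constant-memory recurrence; both branches remain causal, so editing the last token leaves earlier outputs unchanged.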

In line with its mission to foster AI accessibility and collaboration, TII has released the Falcon-H1 models under the Falcon LLM license. The license offers the following benefits:

- Open source nature and accessibility

- Multi-language capabilities

- Cost-effectiveness compared to proprietary models

- Energy efficiency

About Amazon Bedrock Marketplace and SageMaker JumpStart

Amazon Bedrock Marketplace offers access to over 100 popular, emerging, specialized, and domain-specific models, so you can find the best proprietary and publicly available models for your use case based on factors such as accuracy, flexibility, and cost. On Amazon Bedrock Marketplace, you can discover models in a single place and access them through unified and secure Amazon Bedrock APIs. You can also select your desired number of instances and the instance type to meet the demands of your workload and optimize your costs.

SageMaker JumpStart helps you quickly get started with machine learning. It provides access to state-of-the-art model architectures, such as language models, computer vision models, and more, without having to build them from scratch. With SageMaker JumpStart, you can deploy models in a secure environment by provisioning them on SageMaker inference instances and isolating them within your virtual private cloud (VPC). You can also use Amazon SageMaker AI to further customize and fine-tune the models and streamline the entire model deployment process.

Solution overview

This post demonstrates how to deploy a Falcon-H1 model using both Amazon Bedrock Marketplace and SageMaker JumpStart. Although we use Falcon-H1-0.5B as an example, you can apply these steps to other models in the Falcon-H1 series. For help determining which deployment option (Amazon Bedrock Marketplace or SageMaker JumpStart) best suits your specific requirements, see Amazon Bedrock or Amazon SageMaker AI?

Deploy Falcon-H1-0.5B-Instruct with Amazon Bedrock Marketplace

In this section, we show how to deploy the Falcon-H1-0.5B-Instruct model in Amazon Bedrock Marketplace.

Prerequisites

To try the Falcon-H1-0.5B-Instruct model in Amazon Bedrock Marketplace, you must have access to an AWS account that will contain your AWS resources.

Before deploying Falcon-H1-0.5B-Instruct, verify that your AWS account has sufficient quota for ml.g6.xlarge instances. For several instance types and sizes, the default endpoint quota is 0, so attempting to deploy the model without a higher quota will cause the deployment to fail.

To request a quota increase, open the AWS Service Quotas console and search for Amazon SageMaker. Locate ml.g6.xlarge for endpoint usage, choose Request quota increase, and specify your desired limit value. After the request is approved, you can proceed with the deployment.
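If you prefer to script the quota request, the following sketch uses the AWS SDK for Python (Boto3) Service Quotas client to look up the SageMaker quota named "ml.g6.xlarge for endpoint usage" and request an increase. The helper names and the default desired value of 1 are our own assumptions, and quota names can change, so check the console if the lookup finds nothing. The client is passed in as a parameter so the functions can be exercised without calling AWS.

```python
def find_quota_code(quotas_client, quota_name, service_code="sagemaker"):
    """Return the QuotaCode whose QuotaName matches quota_name exactly, or None."""
    paginator = quotas_client.get_paginator("list_service_quotas")
    for page in paginator.paginate(ServiceCode=service_code):
        for quota in page["Quotas"]:
            if quota["QuotaName"] == quota_name:
                return quota["QuotaCode"]
    return None


def request_endpoint_quota(quotas_client, instance_type="ml.g6.xlarge", desired_value=1.0):
    """Request a higher SageMaker endpoint quota for one instance type; return its status."""
    quota_name = f"{instance_type} for endpoint usage"
    quota_code = find_quota_code(quotas_client, quota_name)
    if quota_code is None:
        raise ValueError(f"No SageMaker quota named {quota_name!r} found in this Region")
    response = quotas_client.request_service_quota_increase(
        ServiceCode="sagemaker",
        QuotaCode=quota_code,
        DesiredValue=desired_value,
    )
    return response["RequestedQuota"]["Status"]
```

For example, `request_endpoint_quota(boto3.client("service-quotas", region_name="us-east-1"))` submits the request in us-east-1 (substitute your own Region) and returns its initial status, such as `PENDING`.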

Deploy the model using the Amazon Bedrock Marketplace UI

To deploy the model using Amazon Bedrock Marketplace, complete the following steps:

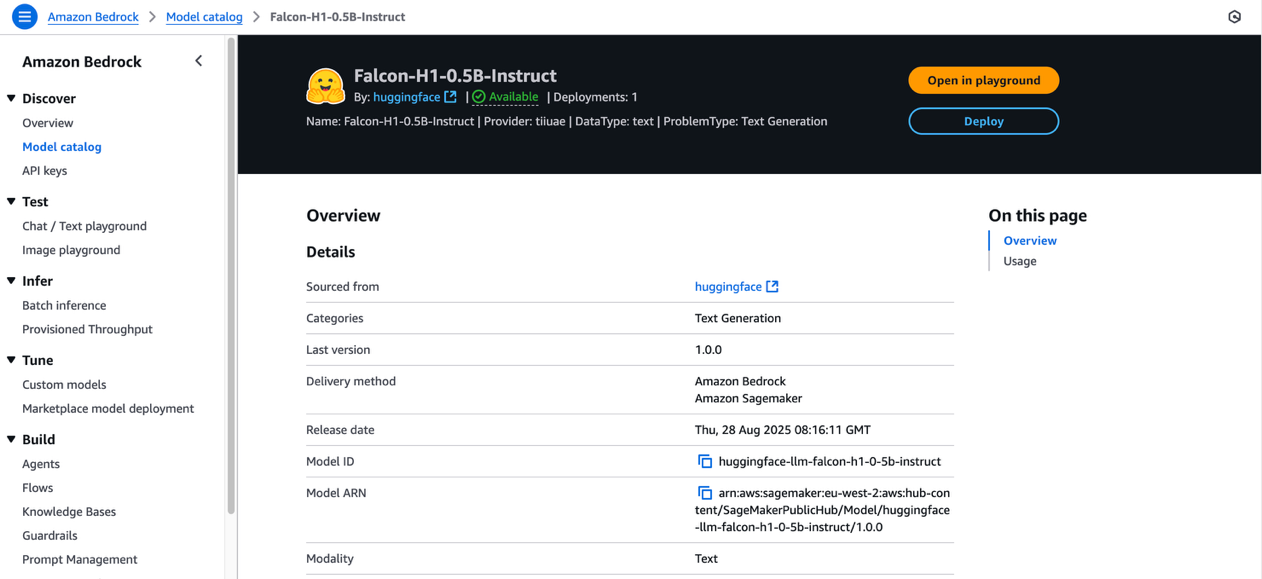

- On the Amazon Bedrock console, under Discover in the navigation pane, choose Model catalog.

- Filter for Falcon-H1 as the model name and choose Falcon-H1-0.5B-Instruct.

The model overview page includes information about the model’s license terms, features, setup instructions, and links to further resources.

- Review the model license terms, and if you agree with the terms, choose Deploy.

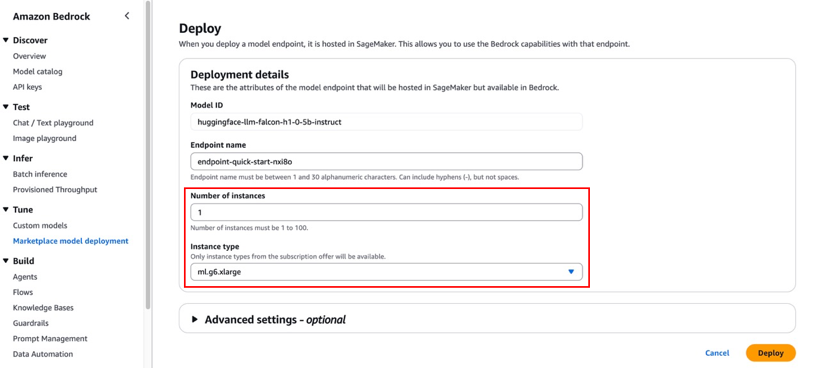

- For Endpoint name, enter an endpoint name or leave the default pre-populated name.

- To minimize costs while experimenting, set Number of instances to 1.

- For Instance type, choose from the list of compatible instance types. Falcon-H1-0.5B-Instruct is an efficient model, so ml.g6.xlarge is sufficient for this exercise.

Although the default configurations are typically sufficient for basic needs, you can customize advanced settings like VPC, service access permissions, encryption keys, and resource tags. These advanced settings might require adjustment in production environments to maintain compliance with your organization’s security protocols.

- Choose Deploy.

- A prompt asks you to stay on the page while the AWS Identity and Access Management (IAM) role is being created. If your AWS account lacks sufficient quota for the selected instance type, you’ll receive an error message. In this case, refer to the preceding prerequisites section to increase your quota, then try the deployment again.

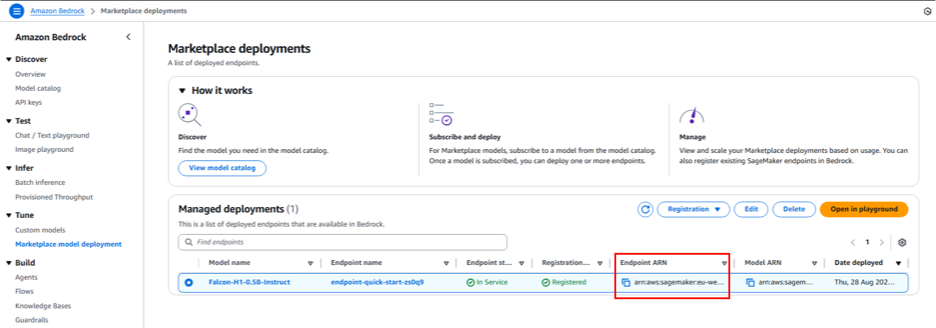

While deployment is in progress, you can choose Marketplace model deployments in the navigation pane to monitor progress in the Managed deployments section. When the deployment is complete, the endpoint status changes from Creating to In Service.
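Because Bedrock Marketplace hosts the model on a SageMaker endpoint in your account (its ARN begins with arn:aws:sagemaker), you can likely also track progress programmatically with the SageMaker DescribeEndpoint API. The following Boto3 sketch polls until the status becomes `InService`. The function name, poll interval, and timeout are illustrative assumptions; `endpoint_name` is the endpoint name shown on the endpoint details page, and `sleep` is a parameter only so the loop can be tested without waiting.

```python
import time


def wait_for_endpoint(sm_client, endpoint_name, poll_seconds=30, timeout_seconds=1800, sleep=time.sleep):
    """Poll DescribeEndpoint until the endpoint is InService, fails, or times out."""
    deadline = time.monotonic() + timeout_seconds
    while True:
        status = sm_client.describe_endpoint(EndpointName=endpoint_name)["EndpointStatus"]
        if status == "InService":
            return status
        if status in ("Failed", "OutOfService"):
            raise RuntimeError(f"Endpoint {endpoint_name!r} ended in status {status}")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"Endpoint {endpoint_name!r} still {status} after {timeout_seconds}s")
        sleep(poll_seconds)  # still Creating or Updating: wait, then check again
```

Boto3 also ships a built-in waiter that performs similar polling with default settings: `boto3.client("sagemaker").get_waiter("endpoint_in_service").wait(EndpointName=endpoint_name)`.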

Interact with the model in the Amazon Bedrock Marketplace playground

You can now test Falcon-H1-0.5B-Instruct directly in the Amazon Bedrock Marketplace playground by selecting the managed deployment and choosing Open in playground.

Invoke the model using code

In this section, we demonstrate how to invoke the model using the Amazon Bedrock Converse API.

Replace the placeholder in the code with the endpoint’s Amazon Resource Name (ARN), which begins with arn:aws:sagemaker. You can find this ARN on the endpoint details page in the Managed deployments section.
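The following sketch shows one way to call the Converse API with Boto3. It assumes a `bedrock-runtime` client, passes the endpoint ARN as `modelId`, and reads the reply from `output.message.content`. The helper names and sampling values (`maxTokens=512`, `temperature=0.7`, `topP=0.9`) are illustrative choices, not recommendations from TII.

```python
def build_converse_request(endpoint_arn, prompt, max_tokens=512, temperature=0.7, top_p=0.9):
    """Assemble keyword arguments for the bedrock-runtime converse() call."""
    return {
        "modelId": endpoint_arn,  # the Marketplace endpoint ARN (starts with arn:aws:sagemaker)
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature, "topP": top_p},
    }


def converse(runtime_client, endpoint_arn, prompt, **inference_kwargs):
    """Send a single-turn prompt and return the concatenated text of the reply."""
    response = runtime_client.converse(**build_converse_request(endpoint_arn, prompt, **inference_kwargs))
    content = response["output"]["message"]["content"]
    return "".join(block.get("text", "") for block in content)
```

To run it against your deployment, create the client with `boto3.client("bedrock-runtime", region_name="us-east-1")` (replace with the Region where you deployed) and call `converse(client, "<your-endpoint-arn>", "Explain state space models in two sentences.")`.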

To learn more about the detailed steps and example code for invoking the model using Amazon Bedrock APIs, refer to Submit prompts and generate responses using the API.

Deploy Falcon-H1-0.5B-Instruct with SageMaker JumpStart

You can access foundation models (FMs) in SageMaker JumpStart through Amazon SageMaker Studio, the SageMaker SDK, and the AWS Management Console. In this walkthrough, we demonstrate how to deploy Falcon-H1-0.5B-Instruct using the SageMaker Python SDK. Refer to Deploy a model in Studio to learn how to deploy the model through SageMaker Studio.

Prerequisites

To deploy Falcon-H1-0.5B-Instruct with SageMaker JumpStart, you must have the following prerequisites:

- An AWS account that will contain your AWS resources.

- An IAM role to access SageMaker AI. To learn more about how IAM works with SageMaker AI, see Identity and Access Management for Amazon SageMaker AI.

- Access to SageMaker Studio with a JupyterLab space, or an integrated development environment (IDE) such as Visual Studio Code or PyCharm.

Deploy the model programmatically using the SageMaker Python SDK

Before deploying Falcon-H1-0.5B-Instruct using the SageMaker Python SDK, make sure you have installed the SDK and configured your AWS credentials and permissions.
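A quick way to confirm both prerequisites is sketched below: it checks that the `boto3` and `sagemaker` packages are importable and, given an AWS Security Token Service (STS) client, returns the identity your credentials resolve to. The helper names are hypothetical; if any packages are reported missing, install them with `pip install --upgrade sagemaker boto3`.

```python
import importlib.util


def missing_packages(packages=("boto3", "sagemaker")):
    """Return the names in `packages` that cannot be imported in this environment."""
    return [name for name in packages if importlib.util.find_spec(name) is None]


def caller_identity_arn(sts_client):
    """Return the IAM ARN that the configured credentials resolve to."""
    return sts_client.get_caller_identity()["Arn"]
```

For example, `caller_identity_arn(boto3.client("sts"))` returns your user or role ARN; an error at this step usually means credentials are not configured.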

The following code example demonstrates how to deploy the model:
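The following is a minimal sketch using the SageMaker Python SDK’s `JumpStartModel` class. The model ID is a placeholder, not the real catalog ID: look up the Falcon-H1-0.5B-Instruct ID in the SageMaker JumpStart model catalog or on the model card in SageMaker Studio. Requiring an explicit `accept_eula=True` after you review the Falcon LLM license is our own convention, as is the `model_cls` parameter, which exists only so the function can be tested without the SDK installed.

```python
def deploy_falcon_h1(model_id, accept_eula, instance_type="ml.g6.xlarge", endpoint_name=None, model_cls=None):
    """Deploy a JumpStart model to a single-instance real-time endpoint; return the predictor."""
    if not accept_eula:
        raise ValueError("Review the Falcon LLM license, then pass accept_eula=True to deploy.")
    if model_cls is None:
        # Imported lazily so this function can be defined where the SageMaker SDK is absent.
        from sagemaker.jumpstart.model import JumpStartModel
        model_cls = JumpStartModel
    model = model_cls(model_id=model_id, instance_type=instance_type)
    return model.deploy(
        initial_instance_count=1,  # one instance keeps experimentation costs low
        instance_type=instance_type,
        accept_eula=accept_eula,
        endpoint_name=endpoint_name,  # None lets the SDK generate a name
    )
```

For example, `predictor = deploy_falcon_h1("<falcon-h1-0.5b-instruct-model-id>", accept_eula=True)` followed by `print(predictor.endpoint_name)` prints the endpoint name to use when invoking the model.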

When the previous code segment completes successfully, the Falcon-H1-0.5B-Instruct model is deployed and available on a SageMaker endpoint. Note the endpoint name shown in the output; you’ll replace the placeholder in the following code segment with this value.

The following code demonstrates how to prepare the input data, make the inference API call, and process the model’s response:
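The request and response schema depends on the serving container behind the JumpStart model, so the following Boto3 sketch makes an assumption: it sends an OpenAI-style `messages` payload and accepts either a `choices` response or a Text Generation Inference style `generated_text` response. Confirm the exact schema on the model card before relying on it. `invoke_falcon_h1` and the other helper names are hypothetical.

```python
import json


def build_payload(prompt, max_tokens=256, temperature=0.7):
    """Assumed OpenAI-style chat schema; confirm against the model card."""
    return {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": temperature,
    }


def extract_text(result):
    """Pull generated text out of either of two common response shapes."""
    if isinstance(result, dict) and "choices" in result:  # OpenAI-style chat completion
        return result["choices"][0]["message"]["content"]
    if isinstance(result, list) and result and isinstance(result[0], dict) and "generated_text" in result[0]:
        return result[0]["generated_text"]  # Text Generation Inference style
    raise ValueError(f"Unrecognized response shape: {result!r}")


def invoke_falcon_h1(runtime_client, endpoint_name, prompt, **payload_kwargs):
    """Call a SageMaker endpoint through a boto3 'sagemaker-runtime' client."""
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=json.dumps(build_payload(prompt, **payload_kwargs)),
    )
    # response["Body"] is a streaming body; read() returns the raw JSON bytes.
    return extract_text(json.loads(response["Body"].read()))
```

For example, `invoke_falcon_h1(boto3.client("sagemaker-runtime"), "<your-endpoint-name>", "Summarize the benefits of hybrid attention-SSM models.")` returns the generated text as a string.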

Clean up

To avoid ongoing charges for AWS resources used while experimenting with Falcon-H1 models, make sure to delete all deployed endpoints and their associated resources when you’re finished. To do so, complete the following steps:

- Delete Amazon Bedrock Marketplace resources:

  - On the Amazon Bedrock console, choose Marketplace model deployments in the navigation pane.

  - Under Managed deployments, choose the Falcon-H1 model endpoint you deployed earlier.

  - Choose Delete and confirm the deletion if you no longer need this endpoint in Amazon Bedrock Marketplace.

- Delete SageMaker endpoints:

  - On the SageMaker AI console, in the navigation pane, choose Endpoints under Inference.

  - Select the endpoint associated with the Falcon-H1 models.

  - Choose Delete and confirm the deletion. This stops the endpoint and avoids further compute charges.

- Delete SageMaker models:

  - On the SageMaker AI console, choose Models under Inference.

  - Select the model associated with your endpoint and choose Delete.
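For the SageMaker JumpStart endpoint, the console steps can also be scripted. The following Boto3 sketch, built around a hypothetical helper name, looks up the endpoint configuration and models behind an endpoint, then deletes the endpoint, its configuration, and the models. It targets endpoints you created with SageMaker JumpStart; for Bedrock Marketplace deployments, use the Amazon Bedrock console steps instead. Production variants without a `ModelName` (for example, deployments that use inference components) are skipped.

```python
def delete_jumpstart_endpoint(sm_client, endpoint_name):
    """Delete a SageMaker endpoint, its endpoint config, and the models it serves."""
    endpoint = sm_client.describe_endpoint(EndpointName=endpoint_name)
    config_name = endpoint["EndpointConfigName"]
    config = sm_client.describe_endpoint_config(EndpointConfigName=config_name)
    # Collect model names first, while the endpoint config still exists.
    model_names = [v["ModelName"] for v in config["ProductionVariants"] if "ModelName" in v]

    sm_client.delete_endpoint(EndpointName=endpoint_name)  # stops instance charges
    sm_client.delete_endpoint_config(EndpointConfigName=config_name)
    for model_name in model_names:
        sm_client.delete_model(ModelName=model_name)
    return {"endpoint": endpoint_name, "endpoint_config": config_name, "models": model_names}
```

If you deployed with the SageMaker Python SDK and still have the returned predictor object, calling `predictor.delete_model()` and then `predictor.delete_endpoint()` achieves the same result.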

Always verify that all endpoints are deleted after experimentation to optimize costs. Refer to the Amazon SageMaker documentation for additional guidance on managing resources.

Conclusion

The availability of Falcon-H1 models in Amazon Bedrock Marketplace and SageMaker JumpStart helps developers, researchers, and businesses build cutting-edge generative AI applications with ease. Thanks to their efficient hybrid attention-SSM architecture, Falcon-H1 models offer multilingual support (18 languages) across a range of model sizes (from 0.5B to 34B parameters) and support context lengths of up to 256K tokens.

By using the seamless discovery and deployment capabilities of Amazon Bedrock Marketplace and SageMaker JumpStart, you can accelerate your AI innovation while benefiting from secure, scalable, and cost-effective AWS Cloud infrastructure.

We encourage you to explore the Falcon-H1 models in Amazon Bedrock Marketplace or SageMaker JumpStart. You can use these models in AWS Regions where Amazon Bedrock or SageMaker JumpStart and the required instance types are available.

For further learning, explore the AWS Machine Learning Blog, the SageMaker JumpStart GitHub repository, and the Amazon Bedrock User Guide. Start building your next generative AI application with Falcon-H1 models and unlock new possibilities with AWS!

Special thanks to everyone who contributed to the launch: Evan Kravitz, Varun Morishetty, and Yotam Moss.

About the authors

Mehran Nikoo leads the Go-to-Market strategy for Amazon Bedrock and agentic AI in EMEA at AWS, where he has been driving the development of AI systems and cloud-native solutions over the last four years. Prior to joining AWS, Mehran held leadership and technical roles at Trainline, McLaren, and Microsoft. He holds an MBA from Warwick Business School and an MRes in Computer Science from Birkbeck, University of London.

Mustapha Tawbi is a Senior Partner Solutions Architect at AWS, specializing in generative AI and ML, with 25 years of enterprise technology experience across AWS, IBM, Sopra Group, and Capgemini. He has a PhD in Computer Science from Sorbonne and a Master’s degree in Data Science from Heriot-Watt University Dubai. Mustapha leads generative AI technical collaborations with AWS partners throughout the MENAT region.

Jingwei Zuo is a Lead Researcher at the Technology Innovation Institute (TII) in the UAE, where he leads the Falcon Foundational Models team. He received his PhD in 2022 from the University of Paris-Saclay, where he was awarded the Plateau de Saclay Doctoral Prize. He holds an MSc (2018) from the University of Paris-Saclay, an Engineer degree (2017) from Sorbonne Université, and a BSc from Huazhong University of Science & Technology.

John Liu is a Principal Product Manager for Amazon Bedrock at AWS. Previously, he served as the Head of Product for AWS Web3/Blockchain. Prior to joining AWS, John held various product leadership roles at public blockchain protocols and financial technology (fintech) companies for 14 years. He also has nine years of portfolio management experience at several hedge funds.

Hamza MIMI is a Solutions Architect for partners and strategic deals in the MENAT region at AWS, where he bridges cutting-edge technology with impactful business outcomes. With expertise in AI and a passion for sustainability, he helps organizations architect innovative solutions that drive both digital transformation and environmental responsibility, transforming complex challenges into opportunities for growth and positive change.
